Image fusion is a technique that combines complementary information from different images of the same scene so that the fused image is more suitable for segmentation, feature extraction, object recognition, and the human visual system. In this paper, a simple yet efficient contrast-based algorithm using wavelet packet decomposition is presented. First, all the source images are decomposed into low- and high-frequency sub-bands, and the high-frequency sub-bands are then fused by means of directive contrast. The inverse wavelet packet transform is then performed to reconstruct the fused image. The performance of the algorithm is evaluated by comparing the proposed algorithm with an existing one.

Deep Dive into A new Contrast Based Image Fusion using Wavelet Packets.

Information science research on sensory systems focuses mainly on how information about the world can be extracted from sensory data. In general, a single sensor is not sufficient to provide an accurate view of the real world. The concept of multiple sensors was introduced to improve the capabilities of intelligent machines and systems, and as a result multi-sensor fusion has become an important area of research and development in the past few years. Multi-sensor fusion refers to combining different sources of sensory information into a single representation. It can occur at the signal, image, or feature level. Almost all advanced sensors today produce images: optical cameras, millimeter wave (MMW) cameras, infrared cameras, x-ray imagers, radar imagers, and so on. The information obtained from these advanced sensors is therefore in the form of images, and image fusion has emerged as a promising research area in image-based application fields. Image fusion is the process of combining two or more images into a single image that retains the important features of each. The fused image contains a more accurate description of the scene than any of the individual source images.

The simplest approach to image fusion is to take the pixel-by-pixel gray-level average of the source images [1]. However, this leads to undesirable side effects such as reduced contrast. In recent years, many image fusion methods have been proposed, such as statistical and numerical methods, the hue-saturation-intensity (HSI) method, the principal component analysis (PCA) method, the image gradient pyramid [2,3], and multiresolution methods [4,5,6,8]. Statistical and numerical methods involve heavy floating-point computation, so they are costly in both time and memory. The HSI method represents the low-spatial-resolution images in the HSI system and then substitutes the intensity component with a high-resolution image. In the PCA method, the original images are transformed into uncorrelated images and then fused by choosing the maximum value among them. PCA is frequently used for fusion because of its ability to compact redundant data into fewer bands.
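The contrast loss caused by averaging can be illustrated with a small sketch. The toy images, the function names, and the use of the gray-level standard deviation as a contrast measure are assumptions made for illustration only; they are not taken from the paper.

```python
from statistics import pstdev

def average_fusion(img_a, img_b):
    """Pixel-by-pixel gray-level average of two equally sized images."""
    return [[(a + b) / 2.0 for a, b in zip(row_a, row_b)]
            for row_a, row_b in zip(img_a, img_b)]

def contrast(img):
    """Global contrast, measured here (by assumption) as the standard
    deviation of all gray levels in the image."""
    return pstdev([p for row in img for p in row])

# Toy sources: each image has a sharp edge in one half and flat gray in the other.
img_a = [[0, 255, 128, 128],
         [0, 255, 128, 128]]
img_b = [[128, 128, 0, 255],
         [128, 128, 0, 255]]

fused = average_fusion(img_a, img_b)

# The edge step in img_a is 255 gray levels; after averaging it is halved.
step_source = img_a[0][1] - img_a[0][0]
step_fused = fused[0][1] - fused[0][0]
print(step_source, step_fused)
print(contrast(img_a), contrast(fused))
```

Both edges survive in the fused image, but each is only half as strong as in its source, which is the kind of side effect that motivates selecting coefficients rather than averaging them.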

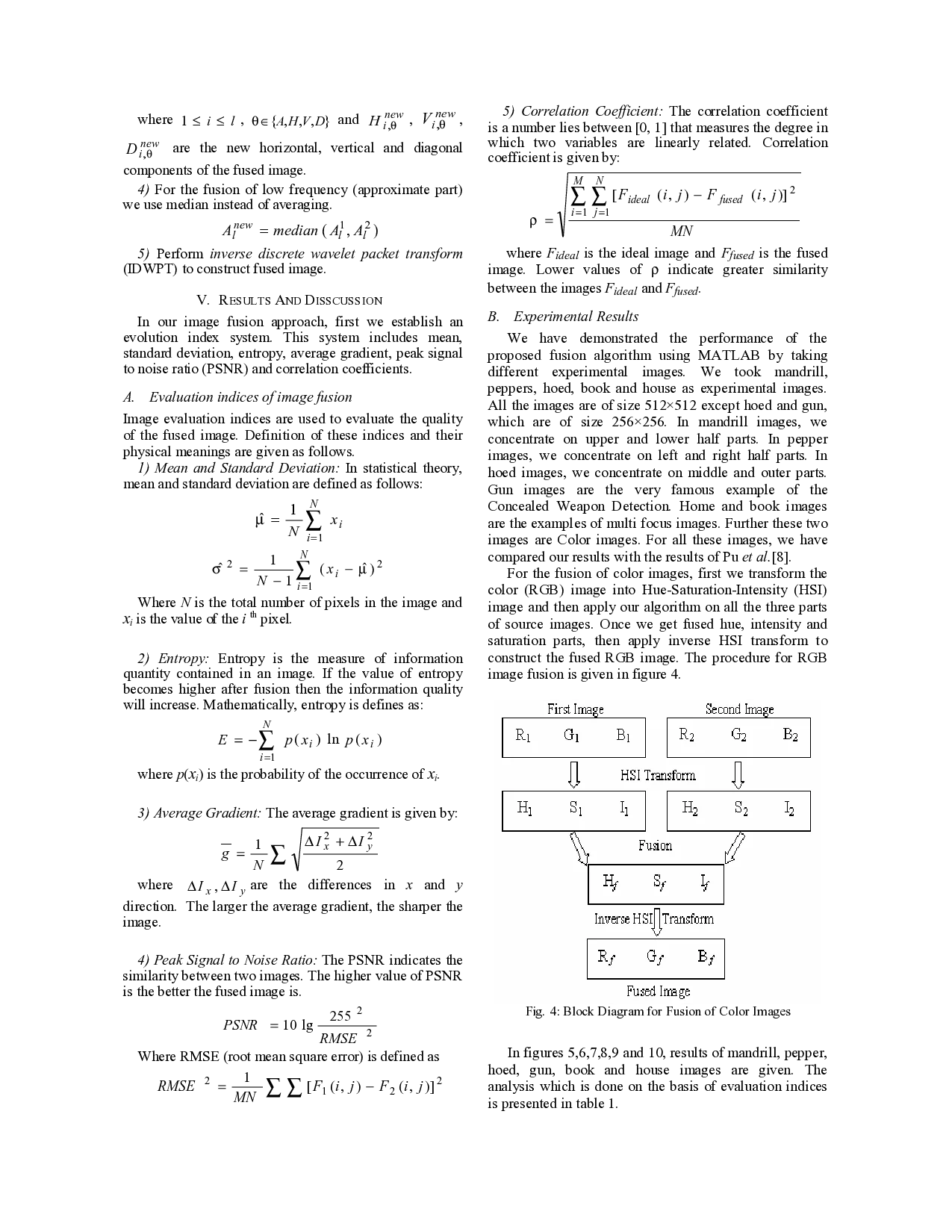

In recent years, fusion methods based on the image gradient pyramid and multiresolution analysis have become very popular. The basic idea behind these methods is that the source images are decomposed by applying a pyramid or wavelet transform, and the fusion operation is then performed on the transformed images. These methods produce very good results with less computation time and less memory. Burt et al. [2] suggested a method in which the images are decomposed into a gradient pyramid. An activity measure is computed for each pixel from the variance in a 3×3 or 5×5 window, and the value with the larger measure is chosen. Li et al. [6] used a similar method, except that the discrete wavelet transform (DWT) is used for decomposition and consistency verification is performed along with the area-based activity measure and maximum selection. Pu et al. [8] suggested a contrast-based image fusion method in the wavelet domain: after decomposition, directive contrast is computed for all decomposed images in order to fuse them.
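A window-based activity measure with maximum selection, as described above, can be sketched as follows. This is a minimal illustration, not the implementation from [2] or [6]: the function names are hypothetical, the bands are plain lists of coefficients rather than actual pyramid or wavelet outputs, and clipping the window at the image border is an assumed convention.

```python
def local_variance(coeffs, r, c, radius=1):
    """Variance of the coefficients in a (2*radius+1) x (2*radius+1) window
    centred on (r, c). The window is clipped at the borders (an assumption)."""
    rows, cols = len(coeffs), len(coeffs[0])
    window = [coeffs[i][j]
              for i in range(max(0, r - radius), min(rows, r + radius + 1))
              for j in range(max(0, c - radius), min(cols, c + radius + 1))]
    mean = sum(window) / len(window)
    return sum((v - mean) ** 2 for v in window) / len(window)

def fuse_choose_max(band_a, band_b, radius=1):
    """At each position, keep the coefficient whose neighbourhood has the
    larger activity (local variance). Ties go to band_a."""
    rows, cols = len(band_a), len(band_a[0])
    fused = [[0.0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            act_a = local_variance(band_a, r, c, radius)
            act_b = local_variance(band_b, r, c, radius)
            fused[r][c] = band_a[r][c] if act_a >= act_b else band_b[r][c]
    return fused

# Toy sub-bands: band_a carries detail on the left, band_b on the right.
band_a = [[5, -5, 0, 0],
          [-5, 5, 0, 0],
          [5, -5, 0, 0]]
band_b = [[0, 0, 3, -3],
          [0, 0, -3, 3],
          [0, 0, 3, -3]]

fused = fuse_choose_max(band_a, band_b, radius=1)  # radius=1 gives a 3x3 window
```

With `radius=2` the same code uses a 5×5 window. Near the boundary between the two detailed regions the stronger neighbourhood wins, which is one reason methods such as [6] add a consistency verification step afterwards.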

In this paper, we present a fusion scheme based on directive contrast in the wavelet packet transform (WPT) [7,9] domain. The scheme improves on the method proposed by Pu et al. [8]. First, we extend the concept of directive contrast to the WPT domain (given in Section 3). The benefit of the WPT over the DWT is that the WPT allows better frequency localization of signals. When we want as many small coefficient values as possible, the standard wavelet transform may not produce the best result because it is limited to wavelet bases (the plural of basis). In the WPT, the number of sub-bands grows by a power of two with each decomposition step. Our comparison shows that the proposed algorithm performs better than that of Pu et al. [8].

The rest of the paper is organized as follows. The wavelet packet transform and directive contrast are explained in Sections 2 and 3. In Section 4, the proposed fusion algorithm is introduced. The experimental results are presented in Section 5. Finally, concluding remarks are given in Section 6.

The wavelet packet transform (WPT) generalizes the discrete wavelet transform and provides a more flexible tool for the time-scale analysis of data. All advantages of the wavelet transform are retained because the wavelet basis is in the repertoire of bases available with the wavelet packet transform. Given this, the WPT may eventually become a standard tool in signal and image processing.
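The relationship between the two transforms can be made concrete with a one-dimensional sketch. The Haar filter pair, the 1D signal, and all function names are assumptions chosen for brevity; the paper works with 2D images, and this excerpt does not state which filters it uses.

```python
import math

def haar_split(signal):
    """One Haar analysis step on an even-length signal.
    Returns (low-pass half, high-pass half)."""
    s = math.sqrt(2.0)
    low = [(signal[i] + signal[i + 1]) / s for i in range(0, len(signal), 2)]
    high = [(signal[i] - signal[i + 1]) / s for i in range(0, len(signal), 2)]
    return low, high

def dwt_leaves(signal, levels):
    """Standard wavelet decomposition: only the low-frequency band is
    split again. Returns [A_n, D_n, ..., D_1]."""
    details = []
    approx = signal
    for _ in range(levels):
        approx, detail = haar_split(approx)
        details.insert(0, detail)
    return [approx] + details

def wpt_leaves(signal, levels):
    """Wavelet packet decomposition: every band, low and high, is split
    at each level, giving 2**levels sub-bands in 1D."""
    bands = [signal]
    for _ in range(levels):
        bands = [half for band in bands for half in haar_split(band)]
    return bands

def energy(bands):
    """Sum of squared coefficients over all sub-bands."""
    return sum(v * v for band in bands for v in band)

x = [4.0, 6.0, 10.0, 12.0, 8.0, 6.0, 5.0, 5.0]
dwt = dwt_leaves(x, 2)  # 3 sub-bands with lengths 2, 2, 4
wpt = wpt_leaves(x, 2)  # 4 sub-bands, each of length 2
print([len(b) for b in dwt], [len(b) for b in wpt])
```

The first two packet sub-bands coincide with the DWT approximation and coarsest detail, since both come from splitting the same low-frequency band. The packet tree additionally splits the level-1 detail band, which the DWT leaves untouched. Because the Haar step is orthonormal, both decompositions preserve the signal energy.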

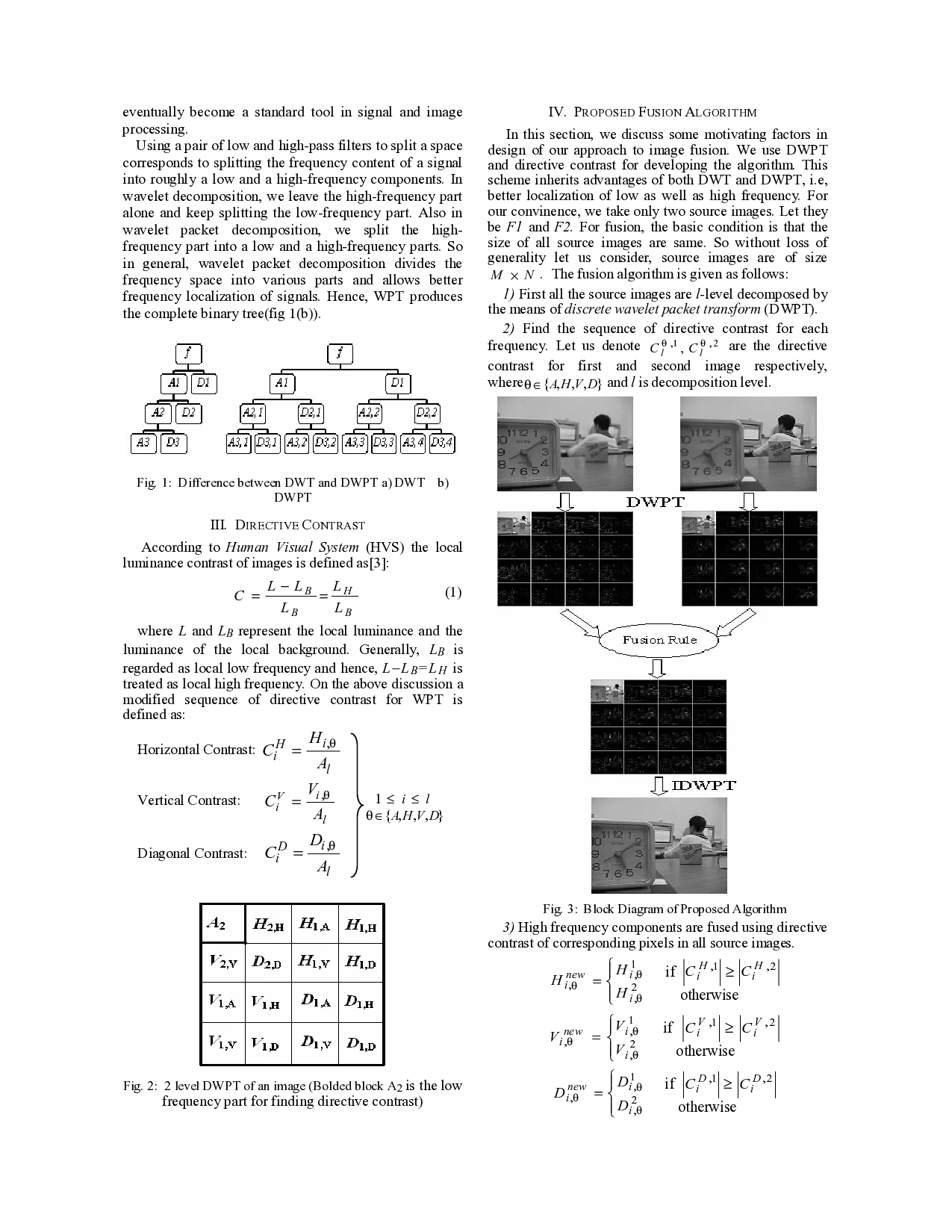

Using a pair of low-pass and high-pass filters to split a space corresponds to splitting the frequency content of a signal into roughly a low-frequency and a high-frequency component. In wavelet decomposition, we leave the high-frequency part alone and keep splitting the low-frequency part. In wavelet packet decomposition, we also split the high-frequency part

…(Full text truncated)…

This content is AI-processed based on ArXiv data.