Probabilistic reasoning with answer sets

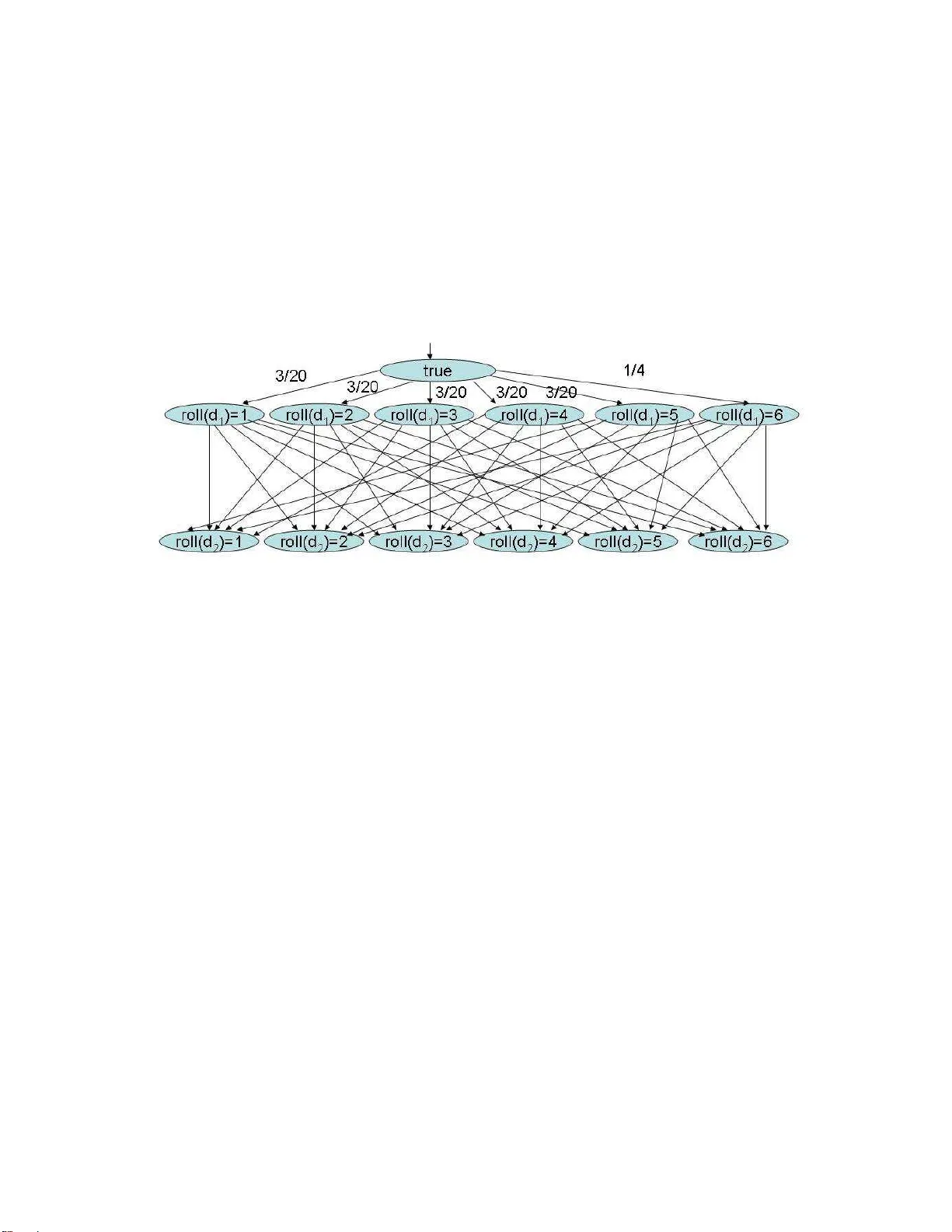

This paper develops a declarative language, P-log, that combines logical and probabilistic arguments in its reasoning. Answer Set Prolog is used as the logical foundation, while causal Bayes nets serve as a probabilistic foundation. We give several n…

**Authors:**

- **Chitta Baral** (University of Texas at Dallas)
- **Michael Gelfond** (University of Texas at Austin)
- **Co-authors:** depending on the paper, Balduccini, Lee, et al.