Self-similarity of complex networks and hidden metric spaces

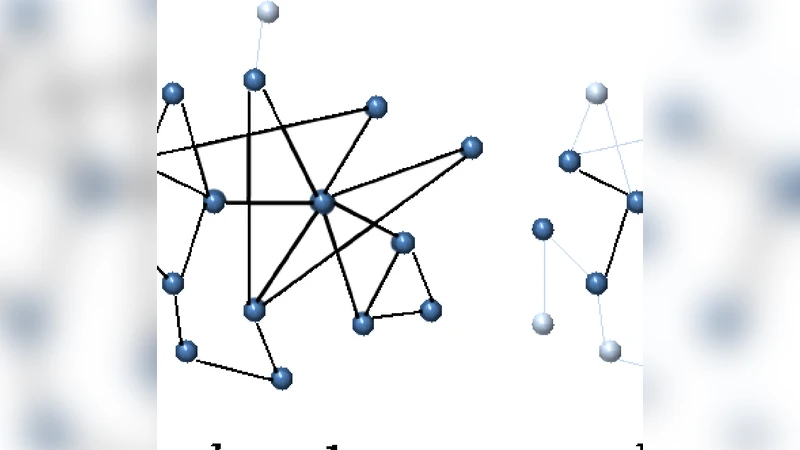

We demonstrate that the self-similarity of some scale-free networks with respect to a simple degree-thresholding renormalization scheme finds a natural interpretation in the assumption that network nodes exist in hidden metric spaces. Clustering, i.e., cycles of length three, plays a crucial role in this framework as a topological reflection of the triangle inequality in the hidden geometry. We prove that a class of hidden variable models with underlying metric spaces are able to accurately reproduce the self-similarity properties that we measured in the real networks. Our findings indicate that hidden geometries underlying these real networks are a plausible explanation for their observed topologies and, in particular, for their self-similarity with respect to the degree-based renormalization.

💡 Research Summary

The paper addresses a striking empirical observation: many real‑world scale‑free networks appear self‑similar when subjected to a simple degree‑thresholding renormalization. In this procedure one removes all vertices whose degree falls below a chosen threshold k_T, recomputes the degrees of the remaining vertices within the surviving subgraph, and repeats the operation with successively larger thresholds, which yields a nested hierarchy of subgraphs. Despite the drastic reduction in size, key statistical descriptors (degree distribution, clustering coefficient, average shortest‑path length) retain the same functional forms across successive renormalization steps. Traditional random‑graph models, such as Erdős‑Rényi or the configuration model, cannot reproduce this invariance, prompting the authors to search for a deeper structural explanation.
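The thresholding step described above can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' code: the adjacency representation (a dict mapping each vertex to a set of neighbours), the function names, and the toy graph are all assumptions made for the example, and the threshold is applied to degrees in whatever graph is passed in.

```python
from collections import Counter


def threshold_subgraph(adj, k_t):
    """Return the subgraph induced by vertices whose degree in `adj` is >= k_t.

    `adj` maps each vertex to the set of its neighbours (undirected graph).
    Degrees in the returned graph are implicitly recomputed, because each
    neighbour set is restricted to the surviving vertices.
    """
    keep = {v for v, nbrs in adj.items() if len(nbrs) >= k_t}
    return {v: adj[v] & keep for v in keep}


def mean_degree(adj):
    """Average degree of the graph (0.0 for an empty graph)."""
    if not adj:
        return 0.0
    return sum(len(nbrs) for nbrs in adj.values()) / len(adj)


def degree_distribution(adj):
    """Fraction of vertices having each degree, as a dict {degree: fraction}."""
    counts = Counter(len(nbrs) for nbrs in adj.values())
    return {k: c / len(adj) for k, c in sorted(counts.items())}


# Hypothetical toy graph, built from an edge list.
edges = [(0, 1), (0, 2), (0, 3), (0, 4), (1, 2), (2, 3), (4, 5)]
adj = {v: set() for e in edges for v in e}
for u, v in edges:
    adj[u].add(v)
    adj[v].add(u)

# Nested subgraphs for increasing thresholds.
hierarchy = {k_t: threshold_subgraph(adj, k_t) for k_t in (1, 2, 3)}
```

Comparing `degree_distribution(hierarchy[k_t])` across thresholds, for instance after dividing each degree by `mean_degree(hierarchy[k_t])`, is one way to check whether the functional form is preserved. A toy graph this small is only meant to show the mechanics.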

The authors propose that the observed self‑similarity is a natural consequence of an underlying hidden metric space in which nodes are embedded. Each vertex i is assigned a hidden variable κ_i that sets its expected degree and an angular coordinate θ_i on a circle, which serves as a one‑dimensional metric space. The probability that two vertices i and j are linked is given by

p_{ij}= \frac{1}{1+\bigl
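The expression above is cut off in this summary, so the following Python sketch has to assume a concrete form. It uses p = 1 / (1 + (d / (μ κ_i κ_j))^β), a form that appears in the hidden metric space literature, where d is the arc distance between the two nodes on the circle. The parameters `mu` and `beta`, the circle radius N / (2π), and all function names are assumptions for illustration and are not taken from the text; the formula intended by the summary may differ.

```python
import math
import random


def circle_distance(theta_i, theta_j, radius):
    """Arc length between two angles (in [0, 2*pi)) on a circle of given radius."""
    dtheta = math.pi - abs(math.pi - abs(theta_i - theta_j))
    return radius * dtheta


def connection_probability(d, kappa_i, kappa_j, mu, beta):
    """Assumed form: 1 / (1 + (d / (mu * kappa_i * kappa_j)) ** beta)."""
    return 1.0 / (1.0 + (d / (mu * kappa_i * kappa_j)) ** beta)


def sample_network(kappas, mu, beta, seed=0):
    """Draw one network: uniform angles, then independent edge trials.

    Returns (thetas, adj), where adj maps each vertex to its neighbour set.
    The radius N / (2*pi) is an assumption that keeps node density at 1.
    """
    rng = random.Random(seed)
    n = len(kappas)
    radius = n / (2 * math.pi)
    thetas = [rng.uniform(0.0, 2 * math.pi) for _ in range(n)]
    adj = {i: set() for i in range(n)}
    for i in range(n):
        for j in range(i + 1, n):
            d = circle_distance(thetas[i], thetas[j], radius)
            p = connection_probability(d, kappas[i], kappas[j], mu, beta)
            if rng.random() < p:
                adj[i].add(j)
                adj[j].add(i)
    return thetas, adj


# Hypothetical usage: 200 nodes with identical hidden degree.
thetas, adj = sample_network([4.0] * 200, mu=0.1, beta=2.0, seed=1)
```

Under this assumed form, nodes that are close on the circle or that have large κ connect with high probability, so three mutually close nodes tend to form a triangle. That is the mechanism by which the triangle inequality in the hidden space shows up as clustering.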
