Partial Correlation Estimation by Joint Sparse Regression Models

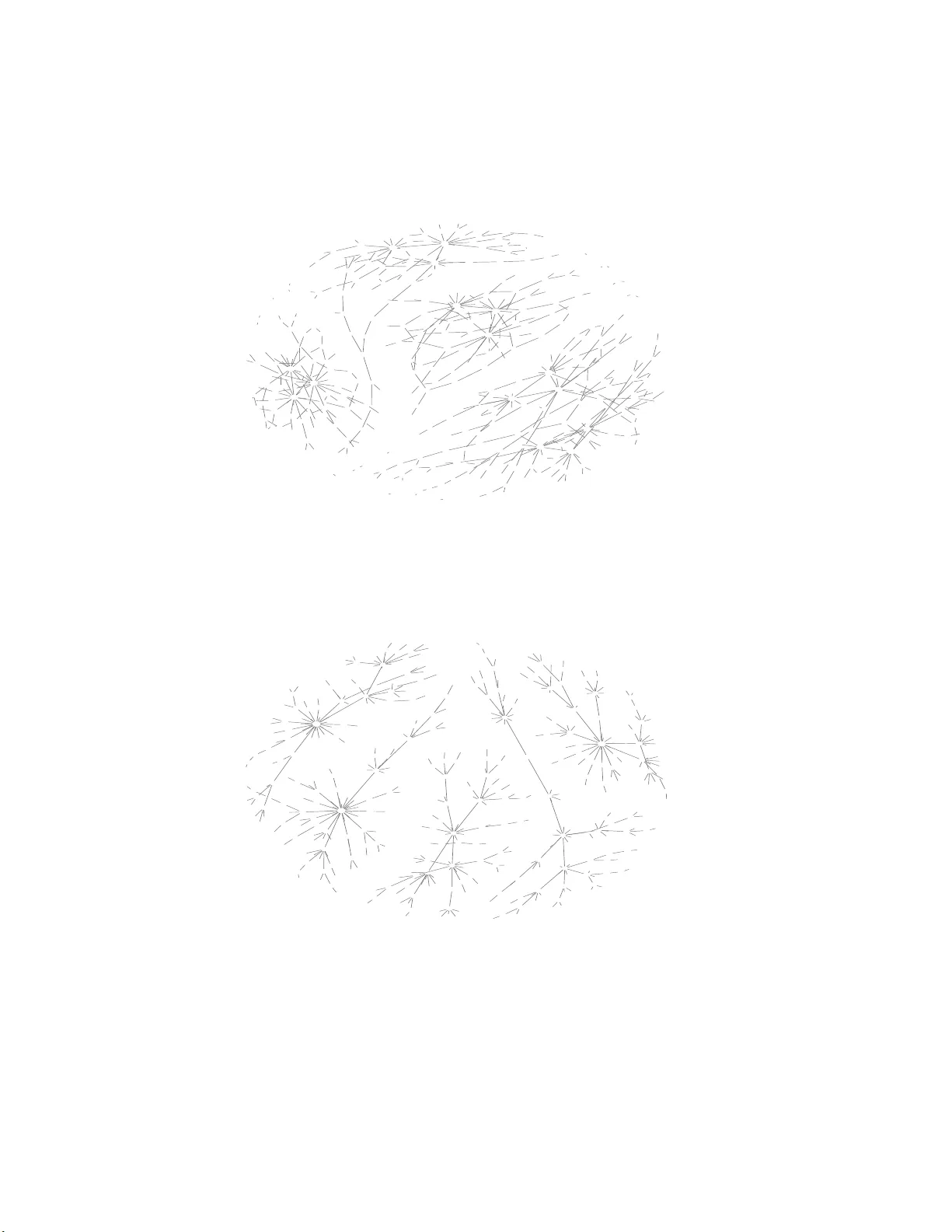

In this paper, we propose a computationally efficient approach, space (Sparse PArtial Correlation Estimation), for selecting non-zero partial correlations under the high-dimension-low-sample-size setting. This method assumes the overall sparsity of…

Authors: Jie Peng, Pei Wang, Nengfeng Zhou