Approximate Sparse Decomposition Based on Smoothed L0-Norm

In this paper, we propose a method to address the problem of source estimation for Sparse Component Analysis (SCA) in the presence of additive noise. Our method is a generalization of a recently proposed method (SL0), which has the advantage of direc…

Authors: Hamed Firouzi, Masoud Farivar, Massoud Babaie-Zadeh

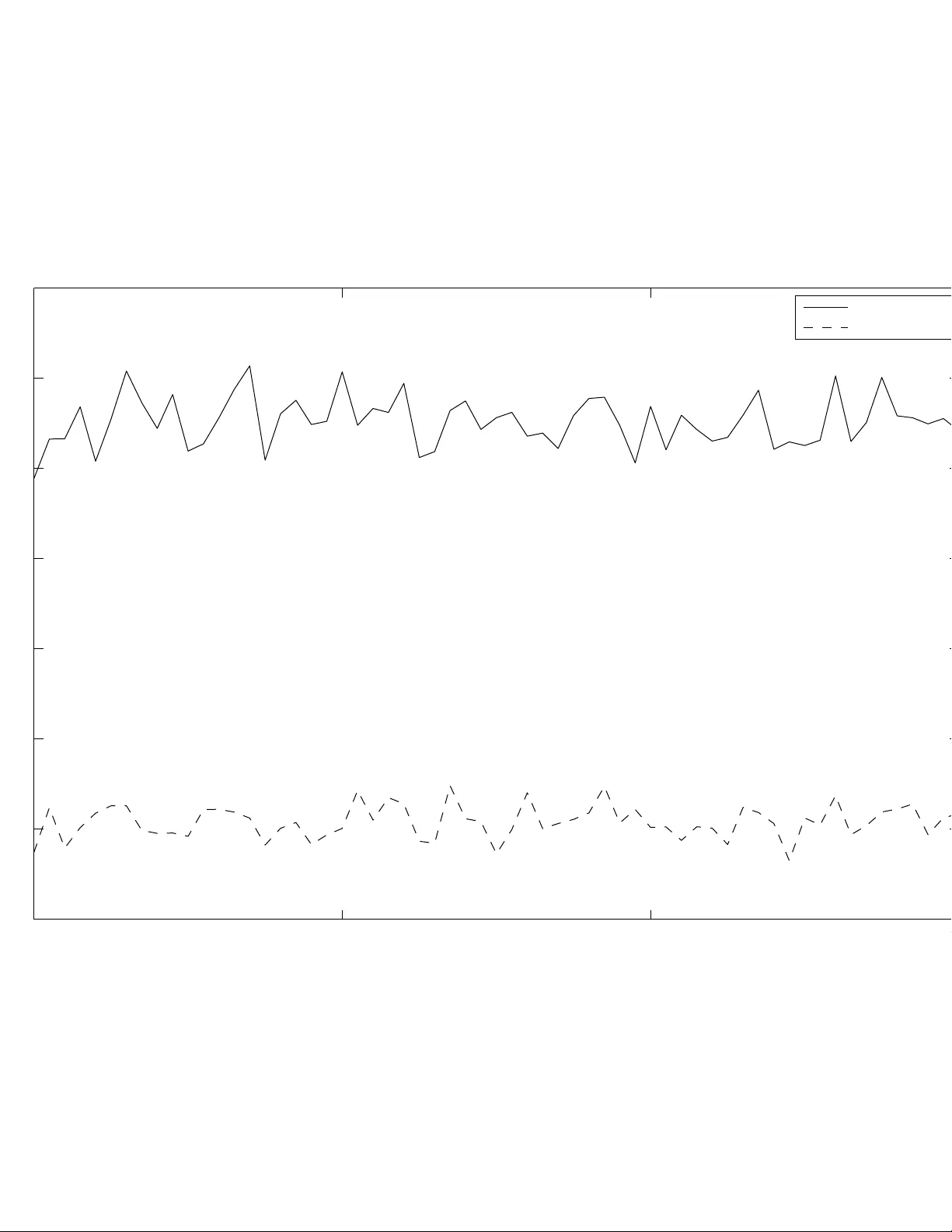

APPR O XIMA TE S P ARSE DECOMPOSITION B ASED ON SMOO THED ℓ 0 -NORM H. F ir o uzi, M. F arivar , M. Babaie-Zadeh ∗ Sharif Univ ersity of T echnolog y Department Of Electrical Engineering T ehran, Iran C. J utten GIPSA-LAB, Grenoble, France ABSTRA CT In this pa per , we propose a method to address the problem of sou rce estimation fo r Sparse Component Analysis (SCA) in the presence of additi ve no ise. Our method is a g eneralization of a recently proposed method (SL0), which has the adv antage of directly minimizing the ℓ 0 -norm instead of ℓ 1 -norm, while being very fast. SL0 is based on minimization of the smoothed ℓ 0 -norm su bject to A s = x . In order to better estimate the source vector for noisy mixtures, we suggest then to remove the constraint As = x , by relaxing exact equality to an approximation (we call our method Smoothed ℓ 0 -norm Denois- ing or SL0DN). The fi nal r esult can then be obtained by minimiza- tion of a proper linear combination of the smoothed ℓ 0 -norm and a cost function for the approximation. Experimental results empha- size on the significant enha ncement of the modified method in noisy cases. Index T erms — atomic decomposition, sparse decomposition, sparse representation, o ver -complete signal r epresentation, sparse source separation 1. INTRODUCTION Blind source separation (BSS ) consists of detecting the underlying source si gnals within some observ ed mixtures of them without any prior information about the sources or the mixing system. Let x ∈ R n be the vector of observed mixtures and s ∈ R m denote the vec- tor of unkno wn source signals. T he mixing equation f or the linear instantaneous noisy model will be: x = As + n (1) where A is the n × m unkno wn mixing matrix and n denotes the additi ve noise vector . The aim of BSS is then to estimate s from observ ed data x wit hout any knowledge of the mixing matri x, A , or the source signals. In the determined case, when n ≥ m , the problem can be suc- cessfully solved using Independen t Component Analysis (ICA) [1]. Ho wev er , in the underdetermined (or o ver -complete) cases where fewe r observations than sources are prov ided, ev en if A is kno wn, there are infinitely many solutions to the problem since the number of unkno wns exce eds the number of equations. This ill -posedness could be resolved by the assumption of ‘S parsity’, i. e. resulting in non totally bli nd source separation problem. A si gnal is considered to be sparse when only a few of its samples t ake significant value s. Thus, among all possible solutions of (1) we seek the sparsest one, ∗ This work has been partiall y supported by Iran National Science Foun- dation (INSF) under contract number 86/994, and al so by ISMO and Frenc h embassy in Ira n i n the frame work of a Gundi-Shapo ur coll aboratio n program. which has then minimum number of nonzero components, i.e. min- imum ℓ 0 -norm. SCA can also be viewed as the problem of representing a signal x ∈ R n as a linear combination of m vectors, called atoms [2]. The atoms { ϕ i } m i =1 collectiv ely f orm a dictionary , n × m matrix, over which the signal is to be decomposed.There are special interests in the cases where m > n (refer for example to [3] and the references in it). Again we hav e the problem of fi nding the sparsest solution of the set of underde termined linear equations x = P m i =1 s i ϕ i where Φ , [ ϕ 1 , . . . , ϕ m ] is the dictionary of m atoms. This problem is also called ‘atomic decomposition’ and has many potential applica- tions in div erse fields of science [3]. The general Sparse Component Analysis (SC A) problem con- sists of two st eps: fir st estimating t he mixing matrix, and then finding the sparsest source ve ctor , assuming the mixing matrix to be kno wn. The first step can be acco mplished by means of clustering meth- ods [4]. In this paper , we focus our attention on the second step; that is for a give n mixing matrix, w e wish to find t he solution to the follo wing minimization problem: ˆ s = argmin k s k 0 subject to x = As (2) where k s k 0 denotes the number of non-zero elements of s (and is usually called the ℓ 0 -norm of s ). So far , severa l al gorithms such as Basis P ursuit (BP) [5, 6] and Matching P ursuit (MP) [2, 4] hav e been proposed to approximate the solution of (2). The former is based on the observ ation that f or most large underdetermined systems of linear equations the mini- mal ℓ 1 -norm ( P i | s i | ) solution i s also the sparsest solution [6 ]. The minimization of ℓ 1 -norm can be efficiently solved using Li near Programming (LP) techniques [7]. Despite all recent dev elopments, computational ef ficiency has still remained as a main concern . Recently in [8], the idea of using smoothed ℓ 0 -norm (SL0) was introduced. More precisely this algorithm minimizes a smooth approximation of the ℓ 0 -norm denoted by m − F σ ( s ) , and the ap- proximation tends to equality when σ → 0 . The algorithm then sequentially solves the prob lem: maximize F σ ( s ) s . t . As = x (3) for a decreasing sequence of σ . This approximation accommoda tes for both continuous optimiza- tion techniques to esti mate the sparsest solution of (2) and a noise- tolerant algorithm. The idea turned out to be both ef ficient and ac- curate, i. e. providing a better accuracy than ℓ 1 -norm minimization algorithms while be ing ab out two o rders o f magnitude faster [8] than LP . Ho wev er , the propose d algorithm has not been designed f or t he noisy case (1), where a noise vector , n , has been added to the ob- served mixture x . In this paper , we wi ll tr y to generalize the proposed method to this noisy case by removing the As = x constraint and relaxing the e xact equ ality to an approximation. In sparse de compo- sition vie wpoint, this means an approximate sparse decomposition of a si gnal on an ov er-comp lete dictionary . The fi nal algorithm wi ll then be an iterativ e minimization of a proper linear combination of smoothed ℓ 0 -norm and k As − x k 2 2 . This paper is orga nized as fo llows. S ection 2 discusses the ma in idea of the proposed method. Section 3 giv es a formal statement of the fi nal algorithm. Finally , experimental results are presented in Section 4. 2. MAIN IDEA As stated in the previous section, when the dimensions increase, finding the mi nimum ℓ 0 -norm solution of (2) is impractical for two reasons. Firstly because ℓ 0 -norm of a vecto r is a discontinuou s func- tion of its elements and leads to an intractable combinatorial opti- mization, and secondly becau se of the solution being highly sen- sitiv e to noise. The idea of [8] is then to replace the ℓ 0 -norm by continuous function, which approximates Kroneck er delta function, and use optimization techniques to minimize it subject to As = x , as a constraint. For e xample, consider the Gaussian like function: F σ ( s ) = m X i =1 exp ( − s 2 i / 2 σ 2 ) (4) where s i denotes the i -t h element of vector s . For sufficiently small v alues o f σ , F σ ( s ) tends to count the number of zero elements o f the vector s. T hus we hav e: k s k 0 = m − lim σ → 0 F σ ( s ) (5) where m is the dimension of the vector s . The sparsest solution of (2) can then be appro ximated by the solution of the f ollo wing minimization problem: ˆ s = argmin ( m − F σ ( s )) subject to x = As (6) The abov e minimization task can be accomplished using common gradient type (e.g. steepest descent) algorithms. Note that the value of σ determines ho w smooth the function F σ is; the smaller the valu e of σ , the better the estimation of k s k 0 but t he larger the probability o f being t rapped in local minima of the cost function. The idea of [8] for escaping from local minima is then to use a decreasing set of v alues for σ i n each it eration. More precisely for ea ch v alue of σ t he minimization algorithm is initi ated with the minimizer of the F σ ( s ) for the pre vious (larger) v alue of σ . No w consider a more realistic case where a noise vector , n , has been added to the observed mixture, as in (1). Here we no- tice that we have an uncertainty on exact value of the observed vec- tor and it seems reasonable to remov e the x = As constraint and reduce it to x ≈ As . This idea is based on the observ ation that in presence of considerable noise, this constraint may lead to a to- tally dif ferent sparse deco mposition. T hus we wish to minimize two terms; k As − x k 2 as cost of approximation, and the smoothed ℓ 0 - norm ( m − F σ ( s ) ), as the measure of sparsity . For the sake of simplicity , we choose k As − x k 2 2 as the cost of approximation. Therefore, the idea will naturally leads us to the follo wing minimization problem: ˆ s = argmin J σ ( s ) = ( m − F σ ( s )) + λ k As − x k 2 2 (7) where λ > 0 , represents a compromise between the two terms of our cost function; sparsity and equality condition. Intuitiv ely , we may exp ect that for less noisy mixtures, the v alue of λ should be greater t han that of observ ations with high noise quantity . Further discussion on the choice of λ is left to Section 4. Another adva ntage of remo ving x = As constraint appears when the dicti onary matrix, A , i s not full rank. In this case satis- fying the exa ct equality constraint for observed vectors, which are not in column space of A is impossible and as a result the previous algorithm fails to find an y answer . 3. FINAL ALGORITHM The final algorithm is shown in Fig 1. W e call our algorithm SL0 DeNoising (SL0DN). As seen in the algo rithm, the final v alues of t he pre vious esti mation are u sed fo r th e initialization of the next steepest descent st ep. The decreasing sequence of σ is used to escape from getting trapped into local minima. Direct calculations sho w that: ∆ s = ∂ J σ ( s ) ∂ s = λ (2 A T ( As − x )) + 1 σ 2 [ s 1 e ( − s 2 1 / 2 σ 2 ) , . . . , s m e ( − s 2 m / 2 σ 2 ) ] T (8) In the minimization part, the stee pest de scent with variable step- size ( µ ) has been applied: If µ is such that J σ ( s − µ ∆ s ) < J σ ( s ) we multiply it b y 1.2 for the ne xt iteration, otherwise it is mu ltiplied by 0.5. 4. EXPERIMENT AL RESUL TS In this section we in vestigate the performance of the proposed method and present our simulation results. Since our framewo rk is a gener- alization of the idea presented in [8], the practical considerations in that paper can be directly imported into our frame work. In [8], it has been experimen tally shown that SL0 is about two orders of magnitude faster than the state-of-the-art interior-point LP solvers [7], while being more accurate. W e provide the comparison results of ou r method with the SL0 method. Moreover a comp arison with Basis Pursuit Denoising will be presented. In all exp eriments, sparse sources have been artificially gener- ated using a Bernoulli-Gaussian model: each source is ‘ activ e’ with probability p , and i s ‘inactiv e’ with probability 1 − p . If it is active, its value is modeled by a zero-mean Gaussian r andom variable with v ariance σ 2 on ; if i t is not activ e, its value is modeled by a zero-mean Gaussian random v ariable wit h va riance σ 2 off , where σ 2 off ≪ σ 2 on . Consequently , each s i is distributed as : s i ∼ p · N (0 , σ on ) + (1 − p ) · N (0 , σ off ) , (9) Sparsity implies that p ≪ 1 . W e considered p = 0 . 1 , σ off = 0 . 01 and σ on = 1 . El ements of the mixing matrix, A , and noise vector , n , were also considered to hav e normal distributions with standard de viation of 1 and σ n , respecti vely . As in [8], the set of decreasing v alues for σ was fixe d to [1 , 0 . 5 , 0 . 2 , 0 . 1 , 0 . 05 , 0 . 02 , 0 . 01] . Experiment 1. Optimal value of λ In this experiment, we i n v estigate the effect of λ on the perfor- mance of our method. W e set the dimensions to m = 1000 , n = 400 , and for each value of σ n = 0 , 0 . 01 , . . . , 0 . 15 we plotted the a v- erage Signal to Noise Ratio (SNR), defined by 10 log 10 k s k 2 k ˆ s − s k 2 , as a function of λ (in this section, all the results are averag ed over 100 experimen ts). Figure 2 sho ws a sample of our experiments. Dash line represents the results obtained from (6), which is indepen dent • Initialization: 1. Let ˆ s 0 = A T ( AA T ) − 1 x . 2. Choose a suitable v alue for λ as a function of σ n . The value of σ n for a set of observed mixtures may be estimated either directly from the observed mixtures (see for examp le [9 ] and references therein) or using a bootstrap method (discussed in experiment 1 of Sec- tion 4). 3. Choose a suitable decreasing sequence for σ , [ σ 1 . . . σ K ] . and a sufficiently small value for the step-size parameter , µ . • For k = 1 , . . . , K : 1. Let σ = σ k . 2. Minimize (approximately) the function J σ ( s ) using L i terations o f the steepes t descent algo - rithm: – Initiali zation: s ← ˆ s k − 1 . – for j = 1 . . . L (loop L t imes): (a) Let: ∆ s = λ (2 A T ( As − x )) + 1 σ 2 [ s 1 e ( − s 2 1 / 2 σ 2 ) , . . . , s m e ( − s 2 m / 2 σ 2 ) ] T (b) If J σ ( s − µ ∆ s ) < J σ ( s ) let ρ = 1 . 2 else ρ = 0 . 5 . (c) Let s ← s − µ ∆ s (d) Let µ ← µ × ρ . (v ariable step-size) (e) Set ˆ s k ← s . • Final answer is ˆ s = ˆ s K . Fig. 1 . T he final algorithm of SL0DN. of λ . Note that, there exists an interv al in which the c hoice of λ will result in a better estimation compared to SL 0. The S NR takes its maximum in this region for som e value of λ , which we call λ opt . As mentioned i n the previous section, we expect an appropriate choice of λ to be a decreasing fun ction of σ n since with the increase of noise powe r , the cost of approximation ( Ax ≈ s ) decreases. T o verify this, for ea ch v alue of σ n , we obtained the v alue of λ opt using the curv es similar to F ig. 2 . Figure 3 shows the values of λ opt as a function of σ n in [0,0.15]. W e fit these results with a curv e of type 1 α + β x 2 to fi nd t he follo wing rule of thumb for the choice of parameter λ : λ ≈ 1 0 . 007 + 3 . 5 σ 2 n . (10) This formula giv es a rough approximation for the choice of appro- priate λ i n the initialization step of the algorithm. Notice that we ha ve tw o choices for the initialization o f the pro- posed m ethod: either to estimate σ n directly from the observed mix- tures [9] and then use (10) to find an approximation of λ opt , or to follo w this iterativ e approach to solve the pro blem: 1. choose an arbitrary reasonable value of σ n . 2. take λ opt from the curve. 20 40 60 80 100 120 140 11 12 13 14 15 16 17 18 19 20 21 λ SNR (dB) Fig. 2 . A v erage Output SNR for differen t choices of λ for σ n =0.05. 0 0.05 0.1 0.15 0 50 100 150 λ opt σ n Fig. 3 . λ opt as a function o f noise powe r ( σ n ). The continuous curv e sho ws our approximation of λ opt . 3. run the algorithm and aft er con ver gence, compute an estima- tion of σ n from the obtained so urce vector and th en goto step 2. Experiment 2. Speed and perfor mance In order to measure the speed of our algorithm, we run the al- gorithm 100 t imes for m = 1000 , n = 400 and σ n = 0 . 05 . The simulation is performed in MA TLAB7 environ ment using an Intel 2.8Ghz processor and 512MB of memory . T he average run time of SL0DN was 2.062 seconds while the average time for SL0 was 0.242 seconds. Al though SL0DN is someho w slo wer than SL0, but regard- ing to T able I in [8], the algorithm is sti ll much faster than ℓ 1 -magic and FOCUSS. W e proceed with the performance analysis of the proposed algo- rithm. In this exp eriment, we fix the parameters m, n, p with those of e xperiment 1 and for each valu e of σ n , choose the value of λ wit h (10). In F ig. 4 the average output SNR is compared to the results of SL0. It can be seen that e xcept for low-n oise mixtures ( σ n < 0 . 02) , SL0DN achie v es a b etter SNR. Thus for noisy mixtures, t he case for most real data, the act of approximately satisfying As = x con- straint is justified exp erimentally . 0 0.02 0.04 0.06 0.08 0.1 0.12 0.14 0.16 0 5 10 15 20 25 30 35 SL0 SL0DN GPSR Fig. 4 . Comparison between SL0DN, SL0 and BPDN . 500 1000 1500 2000 13 14 15 16 17 18 19 20 m SNR (dB) SL0DN SL0 Fig. 5 . A verage Output SNR v ersus m . A verages are tak en o ver 1 00 experimen ts. W e also compared the results SL 0DN with Basis P ursuit De- Noising (BPDN) which is much faster than BP . W e used Gradi- ent Projection for Sparse Reconstruction (GPSR ) [10] algorithm for BPDN. The r esults of GPSR are sho wn in Fig. 4 with dotted line. As we see, the av erage SNR curv e of GPSR lies un der the two other curves except for lo w noise mixtures. It worths mentioning that the av erage run time of GPSR was 3.15 6 seconds. Experiment 3. Dimension Dependency In this experiment we study the performance of the prop osed method for different dimensions of sources and mixtures. In this experimen t, the values of m and n change wit hin a constant ratio ( n = 0 . 4 m ). The av erage output SNR for both methods are shown in Fig.5. The results suggest t hat the quality of estimation is almost independe nt of the dimensions. 5. CONCLUSION W e presented a fast method for Sparse Component Analysis (S CA) or atomic decomposition on over -complete dictionaries, i n presence of additiv e noise. The method was a generalization of SL0 method. The proposed method was based on smoo thed ℓ 0 -norm minimization and satisfying the equ ality constraint approximately instead of exa ct equality constraint. The proposed method is fast while being more robust against noisy mixtures than the original SL0. Experimental results approv ed t he performance and the noise- tolerance of our method for noisy mixtures. 6. REFERENCES [1] A. Hyv ¨ arinen, J. Karhunen, and E. Oja, Independent Compo- nent Analysis , John Wiley & S ons, 2001 . [2] S . Mallat and Z . Zhang, “Matching pursuits with time- frequenc y dictionaries, ” IEEE T ran s. on Signal Pro c. , vol. 41, no. 12, pp. 3397–3 415, 1993. [3] D. L. Donoho, M. El ad, and V . T emlyak o v , “Stable rec ov ery o f sparse overcomp lete representations i n t he presence of noise, ” IEEE T r ans. Info. Theory , vol. 52 , no. 1, pp. 6–18, Jan 2006. [4] R. Gribon v al and S. Lesage, “ A survey of sparse compon ent analysis for blind source separation: principles, perspectiv es, and new challenges, ” in Pro ceedings of ESANN’06 , April 2006, pp. 323–330 . [5] S . S. Chen, D. L. Donoho, and M. A. S aunders, “ Atomic decomposition by basis pursuit, ” SIAM Journa l on Scientific Computing , vo l. 20, no. 1, pp. 33–61, 1999. [6] D. L . Donoho, “For most large underdetermine d systems of linear equations t he minimal ℓ 1 -norm solution is also the spars- est solution, ” T ech . Rep., 2004. [7] E . Candes and J. Romberg, “ ℓ 1 -magic: Recov ery of sparse signals via con v ex programming” 2005, URL: www .acm.caltech.edu/l1magic/do wnloads/l1magic.pdf. [8] G. H. Mohimani, M. Babaie-Zadeh, an d C. Jutten, “Fast s parse representation based o n smoothed l 0 -norm, ” accepted for pub- lication in IEEE T rans. on Signal Pr oc. (available as eprint arXiv 0 809.2508). [9] H. Z ayyani, M. Babaie-Zadeh and C. Jutten “S ource estima- tion in noisy Sparse Component Analysis, ” 15’ th In tl. Conf. on Digital Signal Processing (DSP2007), pp. 219-222 July 2007. [10] Mario A.T . Figueiredo, Robert D. No wak, and Stephen J. W right, “Gradient projection for sparse recon- struction: Application to compressed sensing and other in verse problems”, IEE E Journal of Selected T opics in Signal Processing: Special Issue on Con vex Optimization Method s for Signal Processing, 1(4), pp. 586-59 8, 2007 0 0.02 0.04 0.06 0.08 0.1 0.12 0.14 0.1 6 5 10 15 20 25 30 σ n SNR (dB) SL0 Noisy−SL0 500 1000 1500 2 000 13 14 15 16 17 18 19 20 m SNR (dB) Our Method Method of [1]

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment