Artificial Intelligence Techniques for Steam Generator Modelling

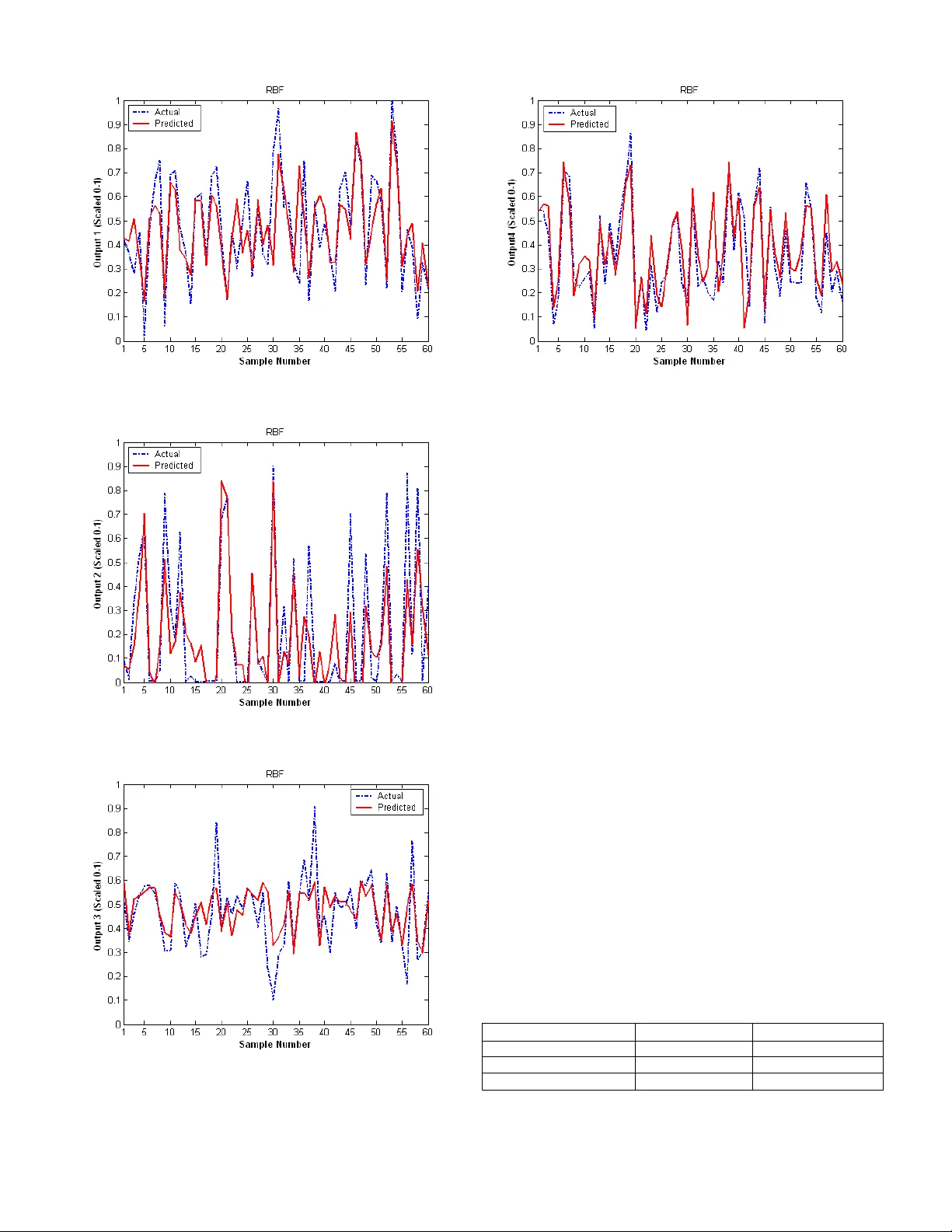

This paper investigates the use of different artificial intelligence methods to predict the values of several continuous variables from a steam generator. The objective was to determine how these methods performed in m…

Authors: Sarah Wright, Tshilidzi Marwala