Inference using shape-restricted regression splines

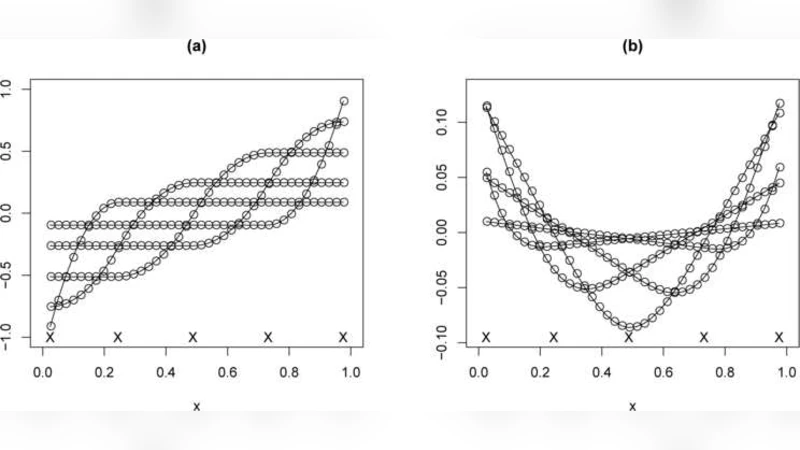

Regression splines are smooth, flexible, and parsimonious nonparametric function estimators. They are known to be sensitive to knot number and placement, but if assumptions such as monotonicity or convexity may be imposed on the regression function, the shape-restricted regression splines are robust to knot choices. Monotone regression splines were introduced by Ramsay [Statist. Sci. 3 (1998) 425–461], but were limited to quadratic and lower order. In this paper an algorithm for the cubic monotone case is proposed, and the method is extended to convex constraints and variants such as increasing-concave. The restricted versions have smaller squared error loss than the unrestricted splines, although they have the same convergence rates. The relatively small degrees of freedom of the model and the insensitivity of the fits to the knot choices allow for practical inference methods; the computational efficiency allows for back-fitting of additive models. Tests of constant versus increasing and linear versus convex regression function, when implemented with shape-restricted regression splines, have higher power than the standard version using ordinary shape-restricted regression.

💡 Research Summary

This paper addresses a well‑known drawback of regression splines: their sensitivity to the number and placement of knots. The authors show that imposing shape restrictions—such as monotonicity, convexity, or combinations like increasing‑concave—greatly stabilizes spline fits, making them robust to knot choices while retaining the smoothness and flexibility that make splines attractive for non‑parametric regression.

The first major contribution is an algorithm for cubic monotone splines. Earlier work (Ramsay, 1998) was limited to quadratic or lower‑order splines because enforcing monotonicity on higher‑order B‑splines leads to a complex set of coefficient constraints. By expressing the monotonicity condition as a series of linear inequalities on the first differences of B‑spline coefficients, the authors cast the problem as a quadratic program with simple bound constraints. They solve it efficiently using an active‑set method that iteratively updates the set of binding constraints. Crucially, the solution retains a closed‑form expression for the fitted values, which means that the fitted curve changes only minimally when knots are added or moved.

The second contribution extends the framework to convexity and mixed shape constraints. Convexity is enforced by linear inequalities on the second differences of the coefficients, while an “increasing‑concave” constraint combines a first‑difference (monotone) inequality with a second‑difference (concave) inequality. The same active‑set machinery handles all these cases, allowing the practitioner to specify any combination of monotone, convex, concave, or linear constraints without redesigning the algorithm.

From a statistical perspective, the shape‑restricted splines have fewer effective degrees of freedom than their unrestricted counterparts. This reduction does not impair the asymptotic convergence rate; the authors prove that the mean‑squared error still decays at the optimal (O(n^{-4/5})) rate for cubic splines. In finite samples, however, the restricted fits exhibit lower variance and consequently smaller squared‑error loss, especially when the true regression function respects the imposed shape.

Because the fits are largely insensitive to knot placement, model selection becomes much simpler. Traditional cross‑validation or bootstrap procedures for choosing knot numbers are unnecessary; a modest number of equally spaced knots suffices. This simplicity enables practical inference. The authors develop hypothesis‑testing procedures for “constant versus increasing” and “linear versus convex” regression functions. When these tests are implemented with shape‑restricted splines, they achieve higher power than analogous tests based on ordinary isotonic regression or unrestricted splines, while maintaining correct type‑I error rates.

Computationally, the algorithm scales linearly with the product of sample size (n) and the number of knots (k) (i.e., (O(nk))), making it feasible for moderately large data sets. The authors demonstrate that the method can be embedded in a back‑fitting loop for additive models, allowing each component function to be estimated under its own shape constraints. In a real‑data example, additive components constrained to be monotone or convex produce smoother, more interpretable fits without sacrificing predictive accuracy.

Simulation studies corroborate the theoretical claims: (1) restricted splines are less sensitive to knot perturbations; (2) they achieve lower mean‑squared error than unrestricted splines when the shape assumption holds; (3) the proposed tests have superior power; and (4) back‑fitted additive models converge quickly and yield sensible component estimates.

In conclusion, the paper provides a comprehensive framework for shape‑restricted regression splines that combines theoretical rigor, algorithmic efficiency, and practical inference tools. By reducing the dependence on knot selection and leveraging shape information, the method delivers more reliable non‑parametric estimates and more powerful hypothesis tests. Future work suggested includes extending the approach to multivariate tensor‑product splines, integrating Bayesian priors for shape constraints, and developing parallel implementations for massive data sets.

Comments & Academic Discussion

Loading comments...

Leave a Comment