Adversarial Scheduling Analysis of Game Theoretic Models of Norm Diffusion

In (Istrate, Marathe, Ravi SODA 2001) we advocated the investigation of robustness of results in the theory of learning in games under adversarial scheduling models. We provide evidence that such an analysis is feasible and can lead to nontrivial res…

Authors: Gabriel Istrate, Madhav V. Marathe, S.S.Ravi

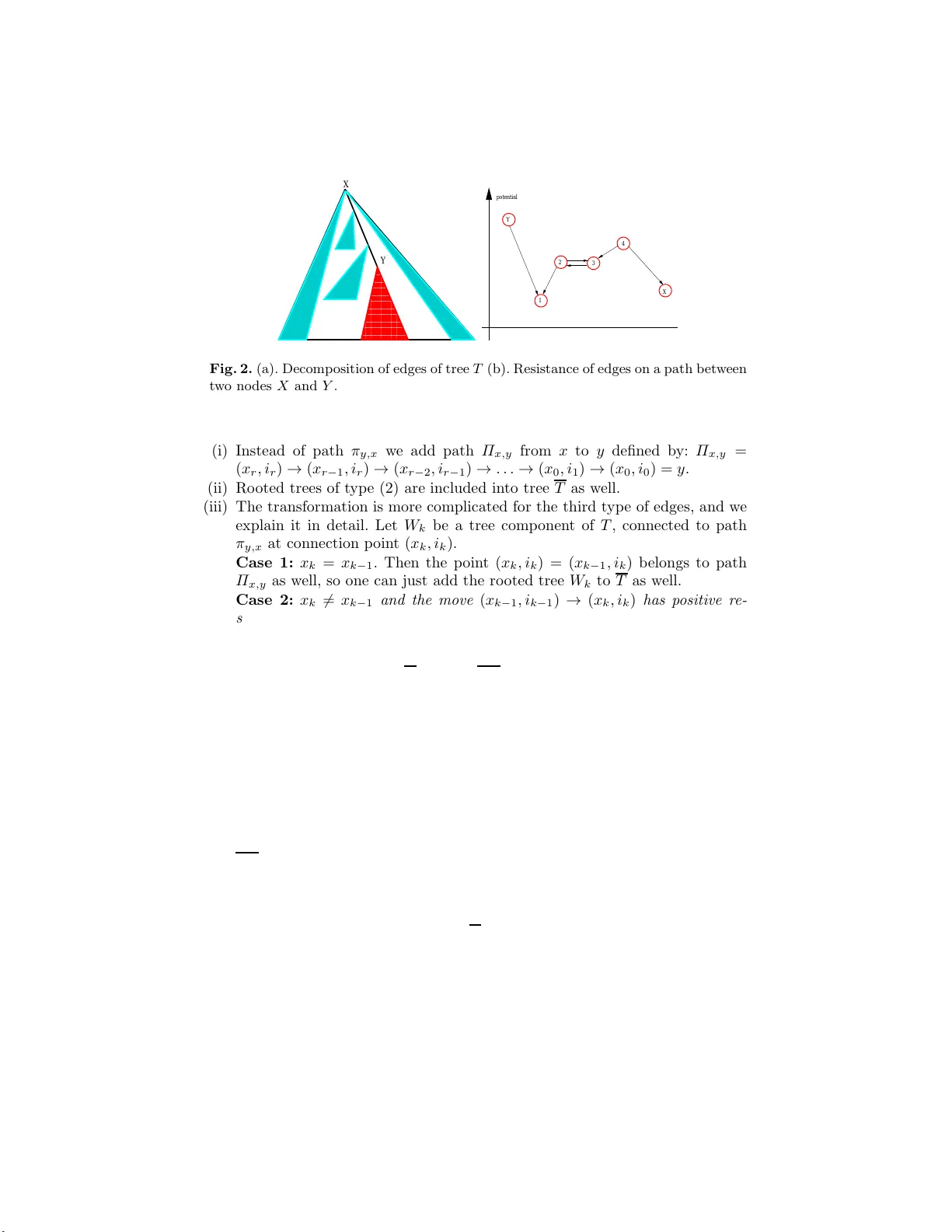

Gabriel Istrate¹⋆, Madhav V. Marathe², and S.S. Ravi³

¹ e-Austria Institute, V. Pârvan 4, cam. 045B, Timişoara RO-300223, Romania

² Network Dynamics and Simulation Science Laboratory, Virginia Tech, 1880 Pratt Drive Building XV, Blacksburg, VA 24061. Email: mmarathe@vbi.vt.edu

³ Computer Science Dept., S.U.N.Y. at Albany, Albany, NY 12222, U.S.A. Email: ravi@cs.albany.edu

Abstract. In [IMR01] we advocated the investigation of robustness of results in the theory of learning in games under adversarial scheduling models. We provide evidence that such an analysis is feasible and can lead to nontrivial results by investigating, in an adversarial scheduling setting, Peyton Young's model of diffusion of norms [You98]. In particular, our main result incorporates contagion into Peyton Young's model.

Keywords: evolutionary games, adversarial scheduling, discrete Markov chains.

1 Introduction

Game-theoretic equilibria are steady-state properties; that is, given that all the players' actions correspond to an equilibrium point, it would be irrational for any of them to deviate from this behavior when the others stick to their strategy. The fundamental problem facing this type of concept is that it does not predict how players arrive at this equilibrium in the first place, or how they "choose" one such equilibrium if several such points exist. The theory of equilibrium selection of Harsányi and Selten [HS88] assumes some form of prior coordination between players, in the form of a tracing procedure. This strong prerequisite is often unrealistic. The theory of learning in games [FL99] attempts to explain the emergence of equilibria as the result of an evolutionary "learning" process.
Models of this type assume one (or several) populations of agents that interact by playing a certain game and update their behavior based on the outcome of this interaction.

Results in evolutionary game theory are important not necessarily as realistic models of strategic behavior. Rather, they provide possible explanations for experimentally observed features of real-world social dynamics. For instance, the fundamental insight behind the concept of stochastically stable strategies is that continuous "noise" (or small deviations from rationality) can provide a solution to the equilibrium selection problem in game theory (for a discussion on the role of strategic learning in equilibrium selection see [PY05]).

⋆ Corresponding author. Email: gabrielistrate@acm.org

Similar issues apply when mathematical modeling is replaced with computer experiments, in the area of agent-based social simulation [GT05]. Epstein [Eps07] (see also [AE96]) has advocated a generative approach to social science: in order to better understand a given phenomenon one should be able to generate it via simulations. Given that such mathematical models or simulations are emerging as tools for policy-making (see e.g. [NBB99, ECC+04]), how can we be sure that the conclusions that we derive from the output of the simulation do not crucially depend on the particular assumptions and features we embed in it? Part of the answer is that these results have to display "robustness" with respect to the various idealizations inherent in the model, be it mathematical or computational.
Various issues that might impact the robustness of the conclusions have been previously considered in the game-theoretic literature; for instance, the celebrated result of Foster and Young [FY90] can be viewed as investigating the robustness of Nash equilibria with respect to the introduction of small amounts of random noise (or player mistakes). In this paper we are only concerned with one such issue: scheduling, i.e., the order in which agents get to update their strategies. Two alternatives are most popular, both in the mathematical and the computer simulation literature. In the synchronous mode, every player updates at every step. A popular alternative is uniform matching. Models of the latter type assume an underlying (hyper)graph topology (describing the sets of players allowed to simultaneously update in one step as a result of game playing) and choose a (hyper)edge uniformly at random from the available ones.

Employing a uniform matching model in multiagent models of social systems is unrealistic, for it assumes perfect and global randomness; it is not clear whether this assumption is warranted in the "real life" situations that the theory is supposed to model. Indeed, notwithstanding the question regarding the existence of computational randomness in nature, the structure of social interactions is neither random nor uniform, and comprises many regular, "day by day" interactions, as well as a smaller number of "occasional" ones. A random matching model does not take into account locality and cannot, therefore, adequately model "contagion" effects (i.e., players becoming activated as a result of some of their neighbors doing so). On the other hand, social systems are inherently distributed, and it is not clear whether the assumption of global randomness is reasonable in a simulation setting.
We investigate in an adversarial setting Peyton Young's model of evolution of norms [You98] (see also [You03]). The dynamics models an important aspect of social networks, the emergence of conventions, and has been proposed as an evolutionary justification for the emergence of certain rules in the pragmatics of natural language [Roo04]. Our results can be summarized as follows: results on selection of strict-dominant equilibria under random noise extend (Theorem 9) to a class of nonadaptive schedulers. However, such an extension fails for adaptive schedulers, even those with fairness properties similar to those of a random scheduler. Our main result (Theorem 10) extends the convergence to the strictly-dominant equilibrium to a class of "nonmalicious" adaptive schedulers that models contagion and has a certain reversibility property (the class of such schedulers includes the random scheduler as a special case). However, for this class of schedulers the convergence time is not necessarily the one from the case of random scheduling.

Besides the relevance of our results to evolutionary game theory, we hope that the concepts and techniques relevant to this paper can be fruitfully exploited in the theory of rapidly mixing Markov chains, of great interest in Theoretical Computer Science.

Not surprisingly, our framework is related to the theory of self-stabilization of distributed systems [Dol00]. Our proofs highlight some principles and techniques of this theory (the existence of a winning strategy for scheduler-luck games [Dol00], monotonicity and composition of winning strategies) that can be applied to the particular problem we study, and conceivably in more general settings as well.

2 Preliminaries

A general class of models for which adversarial analysis can be naturally considered is that of population games [Blu01].
Systems of interest in this class consist of a number of agents, defined as the vertices of a hypergraph $H = (V, E)$. One edge of this hypergraph represents a particular choice of all players who can play (one or more simultaneous instances of) a game $G$ that defines the dynamics. Each player has a state (generally a mixed strategy of $G$) chosen from a certain set $S$. The global state of the system is an element of $\mathcal{S} = S^V$. The dynamics proceeds by choosing one edge $e$ of $H$ (according to a scheduling mechanism that is specified by the scheduler), letting the agents in $e$ play the game, and updating their states as a result of game playing.

2.1 Schedulers

Denote by $X^*$ the set of finite words over alphabet $X$.

Definition 1. A deterministic scheduler is specified by a mapping $f : E^* \times \mathcal{S} \to E$, where $E$ is the set of edges of $H$ and $\mathcal{S}$ is the set of possible states of the system. Mapping $f$ specifies the next scheduled element, given the current history. Let $b \ge 1$. A scheduler that can choose one item among a set of $m$ elements is (worst-case) $b$-fair if every agent is guaranteed to be scheduled at least once in any sequence of $b(m-1)+1$ consecutive steps.

One particularly restricted class of schedulers is that of non-adaptive schedulers, corresponding to updates of the nodes/edges according to a fixed permutation, independent of the initial state of the system.

The above definitions are well-suited for deterministic schedulers. They are not well-suited for probabilistic schedulers (such as random matching), since for any fixed number of steps $B$, with positive probability it will take more than $B$ steps to schedule each element at least once. Also, the definitions do not allow for multiple agents to be scheduled simultaneously. Therefore in this case we need to employ slightly different definitions.

Definition 2.
A (probabilistic) scheduler assigns a probability distribution $p_{w,s,s_0}$ on $E$ to each triple $(w, s, s_0)$ consisting of initial prefixes $w \in E^*$, $s \in \mathcal{S}^*$ with $|w| = |s|$ and starting state $s_0 \in \mathcal{S}$. The next element $e \in E$ to be scheduled, given prefixes $w, s$ and initial state $s_0$, is sampled from $E$ according to $p_{w,s,s_0}$.

A non-adaptive probabilistic scheduler is specified by (a) a collection (multiset) $\Sigma = \{D_1, \ldots, D_m\}$ of probability distributions on the set $E$ such that every $x \in E$ belongs to the support of some distribution $D_i$ and (b) a fixed permutation $\pi$ of $\Sigma$. The scheduler proceeds by (possibly concurrently) scheduling elements of $E$ sampled from a distribution from $\Sigma$ chosen according to (consecutive repetitions of) permutation $\pi$.

For $C > 0$, a non-adaptive probabilistic scheduler is $C$-individually fair if for every $x \in E$, the probability that $x$ is scheduled during one round of $\pi$ is at least $C/|E|$.

One can define, for any given triple $(w, s, s_0)$, where $w \in E^*$, $s \in \mathcal{S}^*$ and $s_0 \in \mathcal{S}$, a probability $\pi_{w,s,s_0}$, the probability that, starting from state $s_0$, the scheduler uses $w$ as the initial prefix of its schedule and evolves its global state according to string $s$. Let $\Omega$ denote the resulting probability space. We divide each trajectory of a probabilistic scheduler into rounds: the first round is the smallest initial segment that schedules each element of $E$ at least once, the second round is the smallest segment starting at the end of the first round that schedules each element at least once, and so on. Given this convention, it is easy to see that for any $k > 0$ and $s \in \mathcal{S}$ the family $W_k$ of strings $w$ consisting of exactly $k$ rounds realizes a complete partition of the probability space $\Omega$, i.e., $\sum_{w \in W_k} \pi_{w,s} = 1$.

Definition 3.
If $f(\cdot)$ is a function on integers, we say that a family of probabilistic schedulers, indexed by $n$, the cardinality of the set $E$, is $O(f(n))$-fair w.h.p. if there exists a monotonically decreasing function $g : (0, \infty) \to (0, 1)$, with $\lim_{\epsilon \to \infty} g(\epsilon) = 0$, such that for every state $s \in \mathcal{S}$, denoting by $l_i$ the random variable measuring the length of the $i$'th round, we have $\lim_{n \to \infty} \mathrm{Prob}[l_i > \epsilon \cdot f(n)] < g(\epsilon)$.

Random scheduling can be specified by a non-adaptive probabilistic scheduler whose set $\Sigma$ consists of just one distribution, namely the uniform distribution on $E$. This scheduler is 1-individually fair and, by the well-known Coupon Collector Lemma, it is also $O(n \log(n))$-fair w.h.p.

2.2 Peyton Young's model of norm diffusion

The setup of this model is the following: agents located at the vertices of a graph $G$ interact by playing a two-person symmetric game with payoff matrix $M = (m_{i,j})_{i,j \in \{A,B\}}$ displayed in Figure 1. It is assumed that strategy $A$ is a so-called strict risk-dominant equilibrium. That is, we have $a - d > b - c > 0$. Each undirected edge $\{i, j\}$ has a positive weight $w_{ij} = w_{ji}$ that measures its "importance". When scheduled, agents play (using the same strategy, identified as the agent's state) against each of their neighbors. If agent $i$ is the one to update, $x$ is the joint profile of agents' strategies, and $z \in \{A, B\}$ is the candidate new state, then

$$p^{\beta}(x_i \to z \mid x) \propto e^{\beta \cdot \nu_i(z, x_{-i})},$$

where $\nu_i(z, x_{-i})$, the payoff of the $i$'th agent should he play strategy $z$ while the others' profile remains the same, is given by

$$\nu_i(z, x_{-i}) = \sum_{(i,j) \in E} w_{ij}\, m_{z, x_j}.$$

Under random scheduling, the process we defined is a variant of the best-response dynamics. This latter process (viewed as a Markov chain) is not ergodic.
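
The update rule above can be illustrated with a minimal Python sketch. The function names (`payoff`, `prob_A`, `update`), the dictionary-based graph encoding, and the numeric payoffs $a = 3$, $b = 2$, $c = d = 0$ are assumptions made only for this illustration; they are chosen so that $a - d > b - c > 0$ holds and do not come from the paper.

```python
import math
import random

def payoff(i, z, state, weights, M):
    """nu_i(z, x_{-i}): weighted payoff of agent i playing z against its neighbours."""
    return sum(w * M[z][state[j]] for j, w in weights[i].items())

def prob_A(i, state, weights, M, beta):
    """Probability that agent i adopts strategy 'A' under the log-linear rule."""
    nu_a = payoff(i, "A", state, weights, M)
    nu_b = payoff(i, "B", state, weights, M)
    top = max(nu_a, nu_b)  # shift exponents for numerical stability
    e_a = math.exp(beta * (nu_a - top))
    e_b = math.exp(beta * (nu_b - top))
    return e_a / (e_a + e_b)

def update(i, state, weights, M, beta, rng):
    """Resample the state of agent i in place; the scheduler decides which i."""
    state[i] = "A" if rng.random() < prob_A(i, state, weights, M, beta) else "B"

# Hypothetical example: M[z][x_j] is the row player's payoff from Figure 1.
M = {"A": {"A": 3.0, "B": 0.0}, "B": {"A": 0.0, "B": 2.0}}
# Path graph 0 - 1 - 2 with unit edge weights (illustrative only).
weights = {0: {1: 1.0}, 1: {0: 1.0, 2: 1.0}, 2: {1: 1.0}}
state = {0: "A", 1: "B", 2: "B"}
update(1, state, weights, M, beta=1.0, rng=random.Random(0))
```

In this toy instance agent 1 faces payoffs 3 (for A) and 2 (for B), so the rule favours A more strongly as $\beta$ grows; in the limit $\beta \to \infty$ it reduces to the best-response dynamics whose lack of ergodicity is discussed in the surrounding text.
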
Indeed, since in game $G$ it is always better to play the same strategy as your partner, the dynamics has at least two fixed points, states "all A" and "all B".

| strategies | A | B |
|---|---|---|
| A | a, a | c, d |
| B | d, c | b, b |

Fig. 1. Payoff matrix

An important property of Peyton Young's dynamics is that it corresponds to a potential game: there exists a real-valued function $\rho$ on joint action profiles such that, for any player $i$, any possible actions $a_1, a_2$ of player $i$, and any action profile $a$ of the other players, $u_i(a_1, a) - u_i(a_2, a) = \rho(a_1, a) - \rho(a_2, a)$ (where $u_i$ is the utility function of player $i$). In other words, changes in utility as a result of strategy update correspond to changes in a global potential function. An explicit potential is given by $\rho^*(x) = \sum_{(h,k) \in E} w_{h,k}\, m_{x_h, x_k}$.

2.3 Stochastic stability

A fundamental concept we are dealing with is that of a stochastically stable state for dynamics described by a Markov chain.

Definition 4. Consider a Markov process $P^0$ defined on a finite state space $\Omega$. For each $\epsilon > 0$, define a Markov process $P^{\epsilon}$ on $\Omega$. $P^{\epsilon}$ is a regular perturbed Markov process if all of the following conditions hold.

– $P^{\epsilon}$ is irreducible for every $\epsilon > 0$.

– For every $x, y \in \Omega$, $\lim_{\epsilon \to 0} P^{\epsilon}_{xy} = P^{0}_{xy}$.

– If $P^{\epsilon}_{xy} > 0$ then there exists $r(m) > 0$, the resistance of transition $m = (x \to y)$, such that as $\epsilon \to 0$, $P^{\epsilon}_{xy} = \Theta(\epsilon^{r(m)})$.

Let $\mu^{\epsilon}$ be the (unique) stationary distribution of $P^{\epsilon}$. A state $s$ is stochastically stable if $\lim_{\epsilon \to 0} \mu^{\epsilon}(s) > 0$.

Peyton Young's model fits this framework, as the following observation makes precise.

Observation 1. Peyton Young's model of diffusion of norms can be recast into the framework of Definition 4. Let $\epsilon = \exp(-\beta)$. As $\beta \to \infty$, $\epsilon \to 0$. Consider now the Markov chain $\Gamma_{\epsilon}$ corresponding to the original dynamics.
It has transition matrix $D_{\epsilon} = D_{1,\epsilon} \cdots D_{m,\epsilon}$, where $D_{i,\epsilon} = (d^{\epsilon}_{i,k,l})$ is the transition matrix corresponding to scheduling (and updating) a node according to $D_i$. It is easy to see that $\lim_{\epsilon \to 0} d^{\epsilon}_{k,l} = d_{k,l}$. Moreover, by the nature of the dynamics, as $\epsilon \to 0$ each element of $D_{i,\epsilon}$ is either zero (in case the state transition $k \to l$ cannot be realized by updating any single node member of $\mathrm{supp}(D_i)$), tends to a positive constant which is the probability that the node corresponding to the transition $k \to l$ is chosen (in case the transition $k \to l$ corresponds to a "best reply" move), or tends to zero, asymptotically like $\Theta(\epsilon^{r_{i,k,l}})$, for some $r_{i,k,l} > 0$ (otherwise).

Definition 5. A tree rooted at node $j$ is a set $T$ of edges such that for any state $w \neq j$ there exists a unique (directed) path from $w$ to $j$. The resistance of a rooted tree $T$ is the sum of resistances of all edges in $T$.

The following characterization of stochastically stable states is presented as Lemma 3.2 in the Appendix of [You98]:

Proposition 6. Let $P^{\epsilon}$ be a regular perturbed Markov process, and for each $\epsilon > 0$ let $\mu^{\epsilon}$ be the unique stationary distribution of $P^{\epsilon}$. Then $\lim_{\epsilon \to 0} \mu^{\epsilon} = \mu^{0}$ exists, and $\mu^{0}$ is a stationary distribution of $P^{0}$. The stochastically stable states are precisely those states $z$ such that there exists a tree rooted at $z$ of minimal resistance (among all rooted trees).

Definition 7. Given a graph $G$, a nonempty subset $S$ of vertices and a real number $0 \le r \le 1/2$, we say that $S$ is $r$-close-knit if

$$\forall S' \subseteq S,\ S' \neq \emptyset: \quad \frac{e(S', S)}{\sum_{i \in S'} \deg(i)} \ge r,$$

where $e(S', S)$ is the number of edges with one endpoint in $S'$ and the other in $S$, and $\deg(i)$ is the degree of vertex $i$. A graph $G$ is $(r, k)$-close-knit if every vertex is part of an $r$-close-knit set $S$, with $|S| = k$.

Definition 8.
Given $p \in [0, 1]$, the $p$-inertia of the process is the maximum, over all states $x_0 \in \mathcal{S}$, of $W(\beta, p, x_0)$, the expected waiting time until at least $1 - p$ of the population is playing action $A$, conditional on starting in state $x_0$.

The model in [You03, You98] assumes independent individual updates, arriving at random times governed (for each agent) by a Poisson arrival process with rate one. Since we are, however, interested in adversarial models that do not have an easy description in continuous time, we will assume that the process proceeds in discrete steps. At each such step a random node is scheduled. It is a simple exercise to translate the result in [You03, You98] to an equivalent one for global, discrete-time scheduling. The conclusions of this translation are:

– The stationary distribution of the process is the Gibbs distribution, $\mu_{\beta}(x) = \frac{e^{\beta \rho(x)}}{\sum_{z} e^{\beta \rho(z)}}$, where $\rho$ is the potential function of the dynamics.

– "All A" is the unique stochastically-stable state of the dynamics.

– Let $r^* = \frac{b - c}{a - d + b - c}$, and let $r > r^*$, $k > 0$. On a family of $(r, k)$-close-knit graphs the convergence time is $O(n)$.

3 Results

First we note that Peyton Young's results easily extend to non-adaptive schedulers. Adaptive schedulers, on the other hand, even those of fairness no higher than that of the random scheduler, can preclude the system from ever entering a state where a proportion higher than $r$ of agents plays the risk-dominant strategy:

Theorem 9. The following hold:

(i) For all non-adaptive schedulers, the state "all A" is the unique stochastically stable state of the system.

(ii) Let $\mathcal{G}$ be a class of graphs that are $(r, k)$-close-knit for some fixed $r > r^*$. Let $f = f(n)$ be a class of non-adaptive $\Theta(1)$-individually fair schedulers.
Given any $p \in (0, 1)$ there exists a $\beta_p$ such that for all $\beta > \beta_p$ there exists a constant $C$ such that the $p$-inertia of the process (under scheduling given by $f$) is at most $C \cdot m \cdot n$, where $m = m(n)$ is the number of rounds of $f$ and $n$ is the number of vertices of the underlying graph.

(iii) For every $0 < r < 1$ there exists an adaptive scheduler which is $O(n \log(n))$-fair w.h.p. (where the constant hidden in the "O" notation depends on $r$) that can forever prevent the system, started on the "all B's" configuration, from ever having more than a fraction of $r$ of the agents playing $A$.

Proof. (i) Let $i \in V$ and let $M_i$ be the restriction of the given dynamics corresponding to the case when only one node, node $i$, is scheduled in all moves (otherwise the dynamics is similar to the original one). It is easy to see that $M_i$ is a non-ergodic Markov chain and that $\mu_{\beta}$ is a stationary distribution for the Markov chain $M_i$. This is so because for two configurations $x, y$ that only differ in position $i$, the ratio of transition probabilities $p_{x,y}/p_{y,x}$ is equal to $\exp[\beta \cdot (\rho^*(y) - \rho^*(x))]$, which is precisely $\mu_{\beta}(y)/\mu_{\beta}(x)$; that is, the detailed balance condition $\mu_{\beta}(x)\, p_{x,y} = \mu_{\beta}(y)\, p_{y,x}$ holds.

Now consider the matrix $D_k$ corresponding to the distribution with the same notation as in the periodic schedule. It is a convex combination of the matrices $M_i$, hence it will also have $\mu_{\beta}$ as a stationary distribution. We infer that the product of matrices $D_k$ corresponding to the cyclic schedule also has $\mu_{\beta}$ as a stationary distribution. But it is easy to see that the Markov chain corresponding to one round of the cyclic schedule is irreducible (since one can navigate between any two states in at most $|V|$ rounds, by flipping the differing bits and keeping the bits that coincide fixed) and aperiodic (since the probability of remaining in a given state is positive).
Therefore, it must have a unique stationary distribution, which is necessarily $\mu_{\beta}$.

(ii) Consider a vertex $v \in V$ and an $r$-close-knit set of size $k$ containing $v$, denoted $S_v$. Consider $\Gamma_{v,\beta}$, the version of the process where each vertex in $S_v$ updates just as before, but each vertex in $V \setminus S_v$ always chooses state $B$ when scheduled. This restricted dynamics on $V$ still corresponds to a potential game, specified by potential function

$$\rho^*(x) = \sum_{(i,j) \in E} \rho(x_i, x_j), \quad \text{for } x \in \{A, B\}^{S_v} B^{V \setminus S_v},$$

and with the Gibbs distribution $\mu_{\beta}(x) = \frac{e^{\beta \cdot \rho^*(x)}}{\sum_{z} e^{\beta \cdot \rho^*(z)}}$ as its stationary distribution. Again, just as in [You93] (since the precise scheduling order does not play a role in this result), the condition that $G$ is $(r, k)$-close-knit implies that the state $A^S$, defined as "all A" on $S_v$ and "all B" on $V \setminus S_v$, is the state with the highest potential among the possible states of the system.

One additional complication of the dynamics $\Gamma_{v,\beta}$ is that it often schedules (unnecessarily) nodes outside $S_v$, which do not change. Consider $\Xi_{v,\beta}$, the version of $\Gamma_{v,\beta}$ that "only schedules nodes in $S_v$" (i.e., it ignores moves of $\Gamma_{v,\beta}$ that schedule nodes outside of $S_v$). To describe this dynamics formally, view each distribution $D_i$ as a set of symbols from the alphabet $V$. Then the set of trajectories of the dynamics $\Gamma_{v,\beta}$ can be specified by the words of the regular language $L_{\Gamma} = (D_1 \cdot D_2 \cdot \ldots \cdot D_m)^*$. Trajectories of $\Xi_{v,\beta}$ correspond to words in another regular language $L_{\Xi}$, more precisely to the ones corresponding to deleting symbols in $V \setminus S_v$ from words in $L_{\Gamma}$. This regular language can be specified by the regular expression $((D_1 \cup \{\lambda\}) \cdot \ldots \cdot (D_m \cup \{\lambda\}) \cap S_v^{+})^*$. This expression yields a matrix of size $2^{|S_v|} \times 2^{|S_v|}$ for $\Xi_{v,\beta}$.

Claim.
For every $\epsilon > 0$ there exists $\eta \in \mathbb{N}$ such that, for every $\eta' > \eta$, $\eta' \in \mathbb{N}$, any initial state of $\Gamma_{v,\beta}$ and every state $T \in \{A, B\}^{S_v}$,

$$\left| \Pr[\Gamma_{v,\beta} \text{ in state } T \mid |w| = \eta' \cdot m \cdot n] - \Pi(T) \right| \le \epsilon.$$

Proof. Let $\epsilon > 0$. As $\Xi_{v,\beta}$ converges to its stationary distribution, there exists $k > 0$ such that for all $k' > k$ and every initial state of $\Xi_{v,\beta}$,

$$\left| \Pr[\Xi_{v,\beta} \text{ in state } T \mid |w| = k'] - \tilde{\Pi}(T) \right| \le \epsilon/2, \qquad (1)$$

where $\tilde{\Pi}$ is the stationary distribution of dynamics $\Xi$. Of course, states with positive support in $\tilde{\Pi}$ have the same probability in $\Pi$, that is, $\forall T \in \{A, B\}^{S_v}: \Pi(T) = \tilde{\Pi}(T)$.

Let $Y$ be a random trajectory of length $\eta' \cdot m \cdot n$ in $L_{\Gamma}$ and let $pr(Y)$ be its projection onto $L_{\Xi}$.

Claim. There exists $\eta > 0$ such that for all $\eta' > \eta$,

$$\mathrm{Prob}_{|Y| = \eta' \cdot m \cdot n}\left[\, |pr(Y)| < k \,\right] \le \frac{\epsilon}{2}.$$

Proof. The probability that any given distribution $D_i$ whose support includes some element in $S_v$ will schedule (in a given round) a node in this set is $\Omega(1/n)$, by the fairness condition. There is at least one such $D_i$ among all the $m$ distributions. Therefore, the expected length of $pr(Y)$ is $\Omega(k/n)$. A simple application of Markov's inequality gives the desired result.

Now write

$$\Pr[\Gamma_{v,\beta} \text{ in state } T \mid |w| = \eta' m n] = \sum_{j} \Pr[\Gamma_{v,\beta} \text{ in state } T \mid |w| = \eta' m n,\ |pr(w)| = j] \cdot \mathrm{Prob}[|pr(w)| = j].$$

Therefore we have

$$\left| \Pr[\Gamma_{v,\beta} \text{ in state } T \mid |w| = \eta' m n] - \Pi(T) \right| \le \sum_{j} \left| \Pr[\Gamma_{v,\beta} \text{ in state } T \mid |w| = \eta' m n,\ |pr(w)| = j] - \Pi(T) \right| \cdot \mathrm{Prob}[|pr(w)| = j].$$

The first term in the product is an absolute difference between two probability values, and thus has absolute value at most one. Therefore, by the preceding claim, neglecting in the sum those terms with $j < k$ changes the sum by at most $\epsilon/2$. On the other hand,

$$\Pr[\Gamma_{v,\beta} \text{ in state } T \mid |w| = \eta' m n,\ |pr(w)| = j] = \Pr[\Xi_{v,\beta} \text{ in state } T \mid |w| = k'] \quad (\text{with } k' = j).$$

Now, using Equation (1), the claim follows.
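
The projection $pr$ used in the argument above (deleting from a schedule every symbol outside $S_v$) can be sketched in a few lines of Python. The names `sample_trajectory` and `project`, the encoding of each $D_i$ as a dictionary from nodes to probabilities, and the example distributions are assumptions made for this illustration only.

```python
import random

def sample_trajectory(distributions, rounds, rng):
    """Sample a word of L_Gamma: `rounds` passes over D_1, ..., D_m in order."""
    word = []
    for _ in range(rounds):
        for dist in distributions:
            nodes = list(dist)
            probs = [dist[u] for u in nodes]
            # Draw one scheduled node from the current distribution D_i.
            word.append(rng.choices(nodes, weights=probs, k=1)[0])
    return word

def project(word, S_v):
    """pr(word): keep only the scheduled nodes that belong to S_v."""
    return [u for u in word if u in S_v]

# Hypothetical schedule with m = 2 distributions over nodes {0, 1, 2, 3}.
distributions = [{0: 0.5, 1: 0.5}, {2: 0.5, 3: 0.5}]
S_v = {0, 1}
word = sample_trajectory(distributions, rounds=10, rng=random.Random(0))
projected = project(word, S_v)
```

In this toy instance only the first distribution ever schedules a node of $S_v$, so each round contributes exactly one symbol to the projected word; in general the projected length is random, which is why the proof needs the lower-tail bound on $|pr(Y)|$.
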
From now on the proof mirrors rather closely the one for the case of random scheduling (presented in the Appendix to [You93]): first, because the stationary distribution of process $\Gamma$ is the Gibbs distribution, there exists a finite value $\beta(\Gamma, S, p)$ such that $\mu_v(A^S) \ge 1 - p^2/2$ for all $\beta > \beta(\Gamma, S, p)$. There are only a finite number of nonisomorphic dynamical systems $\Gamma_{v,\beta}$ (where isomorphism of dynamical systems is meant to be the isomorphism of the underlying graph topologies $S_v$ and of the projection of schedulers onto $S_v$). In particular we can find $\beta(r, k, p)$ and $\eta(r, k, p)$ such that, for all graphs $G \in \mathcal{G}$ and all $r$-close-knit subsets $S$ with $k$ vertices, the following relation holds for all initial states:

$$\forall \beta \ge \beta(r, k, p),\ \forall \eta' \ge \eta: \quad \Pr[y^{\eta' \cdot m \cdot n} = A^S] \ge 1 - p^2, \qquad (2)$$

where $y^t$ is the state of the dynamical system on $S_v$ at time $t$. We can now derive that for every close-knit set $S$

$$\forall \beta \ge \beta(r, k, p),\ \forall \eta' \ge \eta: \quad \Pr[x^{\eta' \cdot m \cdot n} = A^S] \ge 1 - p^2, \qquad (3)$$

where $x^t$ is now the state of the process from the theorem. The argument is obtained via essentially the same coupling as the one from [You98], hence it is omitted from this writeup. Since every vertex $i$ is contained in an $(r, k)$-close-knit set, it follows that

$$\forall \beta \ge \beta(r, k, p),\ \forall \eta' \ge \eta: \quad \Pr[x_i^{\eta' \cdot m \cdot n} = A] \ge 1 - p^2.$$

Therefore the expected number of vertices playing action $A$ at time $\eta' \cdot m \cdot n$ is at least $(1 - p^2) n$. But this implies that

$$\forall t \ge \eta \cdot m \cdot n: \quad \Pr[\text{at least } (1 - p) n \text{ nodes have label } A] \ge 1 - p.$$

Indeed, if this were not the case, then with probability at least $p$ more than $pn$ nodes at time $t$ would have label $B$, a contradiction. Now, by an application of Markov's inequality, the expected time until at least $(1 - p) n$ nodes are labelled $A$ is at most $\eta \cdot m \cdot n/(1 - p)$.
Since this holds for all graphs $G$ in $\mathcal{G}$, the $p$-inertia of the process is bounded as stated in the theorem.

(iii) Consider a scheduler working in rounds. In each round the scheduler schedules nodes according to a fixed permutation $\pi$, the same for all rounds, and schedules each node at least once. For the first $\lceil rn \rceil + 1$ nodes the scheduler continues scheduling each of them (after the initial one) until the node switches to strategy $B$. The scheduler plays each remaining node exactly once. It is easy to see that there exists a constant $\epsilon > 0$ (that may depend on $\beta$) such that, at each stage, each agent switches to strategy $B$ with probability greater than or equal to $\epsilon$. Therefore the probability that any given agent needs to be scheduled for more than $c \log(n)$ rounds before turning to $B$ is $o(1/n)$ for large enough $c$. It follows that the given scheduler is $O(n \log(n))$-fair w.h.p.

3.1 Main result: Diffusion of norms by contagion

Adaptive schedulers can display two very different notions of adaptiveness: (i) the next node depends only on the set of previously scheduled nodes, or (ii) it crucially depends on the states of the system so far. The adaptive scheduler in Theorem 9 (iii) crucially used the second, stronger, kind of adaptiveness. In the sequel we study a model that displays adaptiveness of type (i) but not of type (ii).

The model is specified as follows. To each node $v$ we associate a probability distribution $D_v$ on the vertices of $G$. We then choose the next scheduled node according to the following process. If $t_i$ is the node scheduled at stage $i$, we choose $t_{i+1}$, the next scheduled node, by sampling from $D_{t_i}$. In other words, the scheduled node performs a (non-uniform) random walk on the vertices of graph $G$.
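
The contagion scheduler just described can be sketched as a random walk over per-node distributions. The function name `contagion_schedule`, the encoding of each $D_v$ as a dictionary from nodes to probabilities, and the three-node example are assumptions made for this illustration; the sketch only produces the order in which nodes are scheduled and leaves the strategy update itself aside.

```python
import random

def contagion_schedule(D, start, steps, rng):
    """Return t_0, ..., t_steps, where t_{i+1} is sampled from D[t_i]."""
    t = start
    schedule = [t]
    for _ in range(steps):
        nodes = list(D[t])
        probs = [D[t][u] for u in nodes]
        # The next scheduled node depends only on the node scheduled last.
        t = rng.choices(nodes, weights=probs, k=1)[0]
        schedule.append(t)
    return schedule

# Hypothetical distributions on a 3-node path; values are illustrative only.
D = {
    0: {0: 0.5, 1: 0.5},
    1: {0: 0.25, 1: 0.5, 2: 0.25},
    2: {1: 0.5, 2: 0.5},
}
order = contagion_schedule(D, start=0, steps=20, rng=random.Random(42))
```

Each consecutive pair in `order` is an edge of the support graph of the distributions, so activity spreads only between nodes that are linked by some $D_v$; this locality is what the uniform random scheduler lacks.
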
To exclude technical problems such as the periodicity of this random walk, we assume that it is always the case that $v \in \mathrm{supp}(D_v)$. Also, let $H$ be the directed graph with edges defined as follows: $(x, y) \in E[H] \iff (y \in \mathrm{supp}(D_x))$. This dynamics generalizes both the class of non-adaptive schedulers from the previous result and the random scheduler (for the case when $H$ is the complete graph). In the context of van Rooy's evolutionary analysis of signalling games in natural language [Roo04], it functions as a simplified model for an essential aspect of emergence of linguistic conventions: transmission via contagion.

It is easy to see that the dynamics can be described by an aperiodic Markov chain $M$ on the set $V^{\{A,B\}} \times V$, where a state $(w, x)$ is described as follows:

– $w$ is the set of strategies chosen by the agents.

– $x$ is the label of the last agent that was given the chance to update its state.

If the directed graph $H$ is strongly connected then the Markov chain $M$ is irreducible, hence it has a stationary distribution $\Pi$. We will, therefore, limit ourselves in the sequel to settings with strongly connected $H$. We will, further, assume that the dynamics is weakly reversible, i.e., $(x \in \mathrm{supp}(D_y))$ if and only if $(y \in \mathrm{supp}(D_x))$. This, of course, means that the graph $H$ is undirected. Note that since we do not constrain otherwise the transition probabilities of distributions $D_i$, the stationary distribution $\Pi$ of the Markov chain does not, in general, decompose as a product of component distributions. That is, one cannot generally write $\Pi(w, x)$ as $\Pi(w, x) = \pi(w) \cdot \rho(x)$, for some distributions $\pi, \rho$.

Theorem 10. The set $Q = \{(w, x) \mid w = V^{A}\}$ is the set of stochastically stable states for the diffusion of norms by contagion.

Proof.
The states in $Q$ are obviously reachable from one another by zero-resistance moves, so it is enough to consider one state $y \in Q$ and prove that it is stochastically stable. To do so, by Proposition 6, all we need to do is show that $y$ is the root of a tree of minimal resistance. Indeed, consider another state $x \in Q$ and let $T$ be a minimum potential tree rooted at $x$.

Claim. There exists a tree $\overline{T}$ rooted at $y$ having potential less than or equal to the potential of the tree $T$, strictly smaller in case $x$ is not a state having all its first-component labels equal to $A$.

Let $\pi_{y,x} = (x_0, i_0) \to (x_1, i_1) \to \ldots \to (x_k, i_k) \to (x_{k+1}, i_{k+1}) \to \ldots \to (x_r, i_r)$ be the path from $y$ to $x$ in $T$ (that is, $(x_0, i_0) = y$, $(x_r, i_r) = x$). We will define $\overline{T}$ by viewing the set of edges of $T$ as partitioned into subsets of edges corresponding to paths as follows (see Figure 2 (a)):

(i) The set of edges of path $\pi_{y,x}$.

(ii) The set of edges of the subtree rooted at $y$.

(iii) Edges of tree components (perhaps consisting of a single node) rooted at a node of $\pi_{y,x}$ other than $y$ (but possibly being $x$).

To obtain $\overline{T}$ we will transform each tree (path) in the above decomposition of $T$ into one that will be added to $\overline{T}$.
Fig. 2. (a) Decomposition of edges of tree $T$. (b) Resistance of edges on a path between two nodes $X$ and $Y$.

The transformation goes as follows:
(i) Instead of path $\pi_{y,x}$ we add path $\Pi_{x,y}$ from $x$ to $y$ defined by: $\Pi_{x,y} = (x_r, i_r) \to (x_{r-1}, i_r) \to (x_{r-2}, i_{r-1}) \to \dots \to (x_0, i_1) \to (x_0, i_0) = y$.
(ii) Rooted trees of type (ii) are included into tree $\overline{T}$ as well.
(iii) The transformation is more complicated for the third type of edges, and we explain it in detail. Let $W_k$ be a tree component of $T$, connected to path $\pi_{y,x}$ at connection point $(x_k, i_k)$.

Case 1: $x_k = x_{k-1}$. Then the point $(x_k, i_k) = (x_{k-1}, i_k)$ belongs to path $\Pi_{x,y}$ as well, so one can just add the rooted tree $W_k$ to $\overline{T}$ as well.

Case 2: $x_k \neq x_{k-1}$ and the move $(x_{k-1}, i_{k-1}) \to (x_k, i_k)$ has positive resistance. In this case, since in configuration $x_{k-1}$ with scheduled node $i_k$ we have a choice of either moving to $x_k$ or staying in $x_{k-1}$, it follows that the move $(x_k, i_k) \to (x_{k-1}, i_k)$ has zero resistance. Therefore we can add to $\overline{T}$ the tree $\overline{W}_k = W_k \cup \{(x_k, i_k) \to (x_{k-1}, i_k)\}$. This tree has the same resistance as the tree $W_k$.
Case 3: $x_{k-1} \neq x_k$ and the move $(x_{k-1}, i_{k-1}) \to (x_k, i_k)$ has zero resistance. Let $j$ be the smallest integer such that either $x_{k+j+1} = x_{k+j}$, or $x_{k+j+1} \neq x_{k+j}$ and the move $(x_{k+j}, i_{k+j}) \to (x_{k+j+1}, i_{k+j+1})$ has positive resistance. In this case, one can first replace $W_k$ by $W_k \cup \{(x_k, i_k) \to (x_{k+1}, i_{k+1}), (x_{k+1}, i_{k+1}) \to \dots \to (x_{k+j}, i_{k+j})\}$ without increasing its total resistance. Then we apply one of the techniques from Case 1 or Case 2.

Case 4: $x_{k-1} \neq x_k$, the move $(x_{k-1}, i_{k-1}) \to (x_k, i_k)$ has zero resistance, and all moves on $\pi_{y,x}$ from $x_k$ up to $x$ have zero resistance. Then define $\overline{W}_k = W_k \cup \{(x_k, i_k) \to (x_{k+1}, i_{k+1}) \to \dots \to x\}$.

It is easy to see that no two sets $\overline{W}_k$ intersect on an edge having positive resistance. The union of the paths of all the sets is a directed associated graph $W$ rooted at $y$, which contains a rooted tree $\overline{T}$ of potential no larger than the potential of $W$. Since the transformations in cases (i), (iii) do not increase tree resistance, to compare the potentials of $T$ and $W$ it is enough to compare the resistances of paths $\pi_{y,x}$ and $\Pi_{x,y}$.

We come now to a fundamental property of the game $G$: since it is a potential game, the resistance $r(m)$ of a move $m = (a_1, j_1) \to (a_2, j_2)$ only depends on the values of the potential function at three points: $a_1$, $a_2$ and $a_3$, where $a_3$ is the state obtained by assigning node $j_2$ the value not assigned by the move to $a_2$. Specifically, $r(m) > 0$ if either $\rho^*(a_2) < \rho^*(a_1)$, in which case $r(m) = \rho^*(a_1) - \rho^*(a_2)$, or $a_2 = a_1$ and $\rho^*(a_3) > \rho^*(a_1)$, in which case $r(m) = \rho^*(a_3) - \rho^*(a_1)$. In other words, the resistance of a move is positive in the following two cases:
(1) The move leads to a decrease of the value of the potential function.
In this case the resistance is equal to the difference of potentials.
(2) The move corresponds to keeping the current state (thus not modifying the value of the potential function), but the alternate move would have increased the potential. In this case the resistance is equal to the value of this increase.

Let us now compare the resistances of paths $\pi_{y,x}$ and $\Pi_{x,y}$. First, the two paths contain no edges of infinite resistance, since they correspond to possible moves under the Markov chain dynamics $P^{\epsilon}$. If we discount second components, the two paths correspond to a single sequence of states $Z$ connecting $x_0$ to $x_r$, more precisely to traversing $Z$ in opposite directions. (The last move in $\Pi_{x,y}$ has zero resistance and can thus be discounted.) Resistant moves of type (2) are taken into account by both traversals, and contribute the same resistance value to both paths. So, to compare the resistances of the two paths it is enough to compare the resistance of moves of type (1). Moves of type (1) of positive resistance are those that lead to a decrease in the potential function. Decreasing potential in one direction corresponds to increasing it in the other (therefore such moves have zero resistance in the opposite direction).

An illustration of the two types of moves is given in Figure 2(b), where the path between $X$ and $Y$ goes through four other nodes, labeled 1 to 4. The relative height of each node corresponds to the value of the potential function at that node. Nodes 2 and 3 have equal potential, so the transition between 2 and 3 contributes an equal amount to the resistance of paths in both directions (which may be positive or not). Other than that, only transitions of positive resistance are pictured.
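As a small numerical illustration of this comparison, the following Python sketch computes the type (1) resistance of one sequence of potential values traversed in both directions. The potential values are hypothetical (they are not read off Figure 2(b)), the function name `path_resistance` is ours, and type (2) moves are omitted because they contribute equally to both traversals.

```python
def path_resistance(potentials):
    """Total type (1) resistance along a path of states.

    Each step that lowers the potential costs the size of the drop;
    steps that raise or preserve the potential cost nothing.
    """
    return sum(max(0, a - b) for a, b in zip(potentials, potentials[1:]))


# Hypothetical potential values at the nodes X, 1, 2, 3, 4, Y
# (illustrative only; nodes 2 and 3 are given equal potential).
heights = [5, 3, 4, 4, 2, 1]

forward = path_resistance(heights)         # traversal from X to Y
backward = path_resistance(heights[::-1])  # traversal from Y to X

# The difference telescopes: only the endpoint potentials matter.
assert forward - backward == heights[0] - heights[-1]
print(forward, backward)  # prints "5 1"
```

Under these assumptions the difference between the two traversals equals the potential at the starting state minus the potential at the final state, whatever the intermediate values are.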
The conclusion of this argument is that $r(\pi_{y,x}) - r(\Pi_{x,y}) = \rho^*(y) - \rho^*(x) \geq 0$, and $r(\pi_{y,x}) - r(\Pi_{x,y}) > 0$ unless $x$ is an "all $A$" state.

3.2 The inertia of diffusion of norms with contagion

Theorem 10 shows that random scheduling is not essential in ensuring that stochastically stable states in Peyton Young's model correspond to all players playing $A$: the same result holds in the model with contagion. On the other hand, the result on the $p$-inertia of the process on families of close-knit graphs is not robust to such an extension. Indeed, consider the line graph $L_{2n+1}$ on $2n+1$ nodes labelled $-n, \dots, -1, 0, 1, \dots, n$. Consider a random walk model such that: (a) the origin of the random walk is node 0, and (b) the walk goes left, goes right or stays in place, each with probability $1/3$. It is a well-known property of the random walk that it takes $\Omega(n^2)$ time to reach nodes at distance $\Omega(n)$ from the origin. Therefore, the $p$-inertia of this random walk dynamics is $\Omega(n^2)$ even though for every $r > 0$ there exists a constant $k$ such that the family $\{L_{2n+1}\}$ is $(r, k)$-close-knit for large enough $n$. In the journal version of the paper we will present an upper bound on the $p$-inertia for the diffusion of norms with contagion based on concepts similar to the blanket time of a random walk [WZ96].

4 Conclusions and Acknowledgments

Our results have made the original statement by Peyton Young more robust, and have highlighted the (lack of) importance of various properties of the random scheduler in the results from [You98]: the reversibility of the random scheduler, as well as its inability to use the global system state, are important in an adversarial setting, while its fairness properties are not crucial for convergence, only influencing convergence time.
Also, the fact that the stationary distribution of the perturbed process is the Gibbs distribution (true for the random scheduler) does not necessarily extend to the adversarial setting.

This work has been supported by the Romanian CNCSIS under a PN-II "Idei" Grant, by the U.S. Department of Energy under contract W-705-ENG-36 and by NSF Grant CCR-97-34936.

References

[AE96] R. Axtell and J. Epstein. Growing Artificial Societies: Social Science from the Bottom Up. The MIT Press, 1996.
[Blu01] L. Blume. Population games. In S. Durlauf and H. Peyton Young, editors, Social Dynamics: Economic Learning and Social Evolution. MIT Press, 2001.
[Dol00] S. Dolev. Self-stabilization. M.I.T. Press, 2000.
[ECC+04] J.M. Epstein, D. Cummings, S. Chakravarty, R. Singa, and D. Burke. Toward a Containment Strategy for Smallpox Bioterror. An Individual-Based Computational Approach. Brookings Institution Press, 2004.
[Eps07] J. Epstein. Generative Social Science: Studies in Agent-based Computational Modeling. Princeton University Press, 2007.
[FL99] D. Fudenberg and D. K. Levine. The Theory of Learning in Games. M.I.T. Press, 1999.
[FY90] D. Foster and H.P. Young. Stochastic evolutionary game dynamics. Theoretical Population Biology, 38(2):219–232, 1990.
[GT05] N. Gilbert and K. Troizch. Simulation for social scientists (second edition). Open University Press, 2005.
[HS88] J. Harsányi and R. Selten. A General Theory of Equilibrium Selection in Games. The M.I.T. Press, 1988.
[IMR01] G. Istrate, M.V. Marathe, and S.S. Ravi. Adversarial models in evolutionary game dynamics. In Proceedings of the 13th ACM-SIAM Symposium on Discrete Algorithms (SODA'01), 2001. (journal version in preparation).
[NBB99] K. Nagel, R. Beckmann, and C. Barrett. TRANSIMS for transportation planning. In Y. Bar-Yam and A. Minai, editors, Proceedings of the Second International Conference on Complex Systems. Westview Press, 1999.
[PY05] H. Peyton-Young. Strategic Learning and Its Limits. Oxford University Press, 2005.
[Roo04] R. Van Rooy. Signalling games select Horn strategies. Linguistics and Philosophy, 27:423–497, 2004.
[WZ96] P. Winkler and D. Zuckerman. Multiple cover time. Random Structures and Algorithms, 9(4):403–411, 1996.
[You93] H.P. Young. The evolution of conventions. Econometrica, 61(1):57–84, 1993.
[You98] H.P. Young. Individual Strategy and Social Structure: an Evolutionary Theory of Institutions. Princeton University Press, 1998.
[You03] H.P. Young. The diffusion of innovations in social networks. In L. Blume and S. Durlauf, editors, The Economy as a Complex System III. Oxford University Press, 2003.
