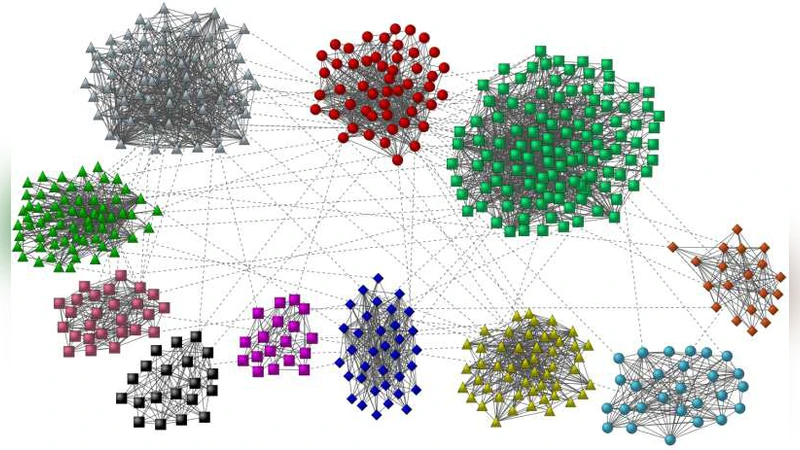

Benchmark graphs for testing community detection algorithms

Community structure is one of the most important features of real networks and reveals the internal organization of the nodes. Many algorithms have been proposed but the crucial issue of testing, i.e. the question of how good an algorithm is, with respect to others, is still open. Standard tests include the analysis of simple artificial graphs with a built-in community structure, that the algorithm has to recover. However, the special graphs adopted in actual tests have a structure that does not reflect the real properties of nodes and communities found in real networks. Here we introduce a new class of benchmark graphs, that account for the heterogeneity in the distributions of node degrees and of community sizes. We use this new benchmark to test two popular methods of community detection, modularity optimization and Potts model clustering. The results show that the new benchmark poses a much more severe test to algorithms than standard benchmarks, revealing limits that may not be apparent at a first analysis.

💡 Research Summary

The paper addresses a fundamental problem in network science: how to reliably evaluate community‑detection algorithms. While the Girvan‑Newman (GN) benchmark has been the de‑facto standard for many years, it suffers from three major unrealistic assumptions: (i) all nodes have essentially the same degree, (ii) all communities have identical size, and (iii) the network is very small (128 nodes). Real‑world networks, however, display heavy‑tailed degree distributions and a broad spectrum of community sizes, often spanning several orders of magnitude. Consequently, an algorithm that performs well on the GN benchmark may fail dramatically on realistic data, and the community‑resolution limit of modularity‑based methods can remain hidden.

To overcome these shortcomings, the authors propose a new class of benchmark graphs that simultaneously incorporates (a) a power‑law degree distribution with exponent γ, (b) a power‑law community‑size distribution with exponent β, (c) a tunable average degree ⟨k⟩, and (d) a mixing parameter µ that controls the fraction of a node’s edges that point outside its own community. The construction proceeds as follows: (1) sample a degree sequence from the prescribed power‑law, then generate a simple graph with that exact degree sequence using the configuration model; (2) assign each node a target internal‑to‑external edge ratio (1‑µ):µ; (3) draw community sizes from a second power‑law, ensuring that the smallest community is larger than the minimum degree and the largest community exceeds the maximum degree; (4) iteratively place “homeless” nodes into randomly chosen communities, evicting nodes when a community becomes full, until all nodes are assigned; (5) perform a series of rewiring steps that preserve each node’s degree while adjusting the internal/external split to match µ as closely as possible. The algorithm scales linearly with the number of edges, allowing the generation of graphs with up to 10⁵–10⁶ nodes in a few seconds. Convergence is practically guaranteed for realistic parameter ranges (2 ≤ γ ≤ 3, 1 ≤ β ≤ 2).
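Steps (1), (3) and (4) of this construction can be sketched in a few lines of Python. This is a minimal illustration rather than the authors' released code: the function names, the rejection loop used to make the community sizes sum to N, the rounding of the internal degree, and the step limit are all assumptions made for the example, and the wiring and rewiring steps (1, 2 and 5) are omitted.

```python
import random

def sample_power_law(n, exponent, lo, hi, rng):
    """Draw n integers in [lo, hi] with P(x) proportional to x**(-exponent)."""
    values = list(range(lo, hi + 1))
    weights = [x ** (-exponent) for x in values]
    return rng.choices(values, weights=weights, k=n)

def sample_community_sizes(n_nodes, beta, s_min, s_max, rng):
    """Draw power-law sizes in [s_min, s_max] that sum to exactly n_nodes.

    Assumption: a draw whose leftover cannot form a valid community is
    simply rejected and repeated.
    """
    while True:
        sizes, remaining = [], n_nodes
        while remaining >= s_min:
            s = sample_power_law(1, beta, s_min, min(s_max, remaining), rng)[0]
            sizes.append(s)
            remaining -= s
        if remaining == 0:
            return sizes

def assign_communities(degrees, sizes, mu, rng, max_steps=1_000_000):
    """Place 'homeless' nodes into random communities, evicting when full.

    Assumption: a node fits a community only if its internal degree,
    round((1 - mu) * k), is smaller than the community size.
    """
    internal = [round((1 - mu) * k) for k in degrees]
    members = [[] for _ in sizes]
    homeless = list(range(len(degrees)))
    rng.shuffle(homeless)
    steps = 0
    while homeless:
        steps += 1
        if steps > max_steps:
            raise RuntimeError("assignment did not converge")
        node = homeless.pop()
        c = rng.randrange(len(sizes))
        if internal[node] >= sizes[c]:
            homeless.append(node)  # community too small; try another one
        elif len(members[c]) == sizes[c]:
            # Community is full: the newcomer replaces a random member,
            # which becomes homeless again.
            i = rng.randrange(sizes[c])
            homeless.append(members[c][i])
            members[c][i] = node
        else:
            members[c].append(node)
    return members

# Illustrative parameters only (not taken from the paper).
rng = random.Random(42)
degrees = sample_power_law(500, 2.0, 5, 30, rng)       # exponent gamma = 2
sizes = sample_community_sizes(500, 1.0, 20, 50, rng)  # exponent beta = 1
communities = assign_communities(degrees, sizes, mu=0.3, rng=rng)
print(len(communities), "communities, largest has", max(sizes), "nodes")
```

The size bounds in the usage lines follow the constraint stated above: the smallest community is larger than the minimum degree and the largest community is larger than the maximum degree.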

Using this benchmark, the authors evaluate two widely used community‑detection methods: (i) modularity maximization via simulated annealing, and (ii) the Potts‑model approach of Reichardt and Bornholdt. Accuracy is measured by the normalized mutual information (NMI) between the planted partition and the algorithm’s output, averaged over many graph realizations.
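For readers who want to reproduce the accuracy measure, the sketch below computes NMI between two label vectors in plain Python. It assumes the common normalization NMI = 2·I(A;B) / (H(A) + H(B)); the function name and the convention of returning 1.0 when both partitions consist of a single group are choices made for this example.

```python
from collections import Counter
from math import log

def normalized_mutual_information(labels_a, labels_b):
    """NMI = 2 * I(A;B) / (H(A) + H(B)) for two label sequences."""
    n = len(labels_a)
    if n == 0 or n != len(labels_b):
        raise ValueError("label sequences must be non-empty and equally long")
    count_a = Counter(labels_a)
    count_b = Counter(labels_b)
    joint = Counter(zip(labels_a, labels_b))
    # Shannon entropies of the two partitions.
    h_a = -sum(c / n * log(c / n) for c in count_a.values())
    h_b = -sum(c / n * log(c / n) for c in count_b.values())
    # Mutual information from the joint label counts.
    mi = sum(
        c / n * log(c * n / (count_a[a] * count_b[b]))
        for (a, b), c in joint.items()
    )
    if h_a + h_b == 0:
        return 1.0  # both partitions put every node in one group
    return 2 * mi / (h_a + h_b)

planted = [0, 0, 0, 1, 1, 1]
found = [1, 1, 1, 0, 0, 0]  # same grouping, different label names
print(normalized_mutual_information(planted, found))
```

The score depends only on how nodes are grouped, not on the label values, so a relabeled copy of the planted partition scores 1, while a partition that carries no information about the planted one scores 0.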

Results on the classic GN benchmark reproduce the well‑known behavior: for external degree k_out < 6 (µ < 0.375, given the benchmark's average degree of 16) modularity optimization recovers the four planted groups almost perfectly, and performance degrades only when communities become very fuzzy. In contrast, on the new benchmark the performance of both algorithms depends strongly on all four parameters. Modularity optimization exhibits a pronounced resolution limit: when β is large (i.e., community sizes are highly heterogeneous) the method tends to merge small communities, causing a steep drop in NMI. Higher average degree ⟨k⟩ mitigates this effect because internal connectivity is stronger, but increasing the total number of nodes (from N = 1 000 to N = 5 000) systematically lowers NMI, indicating that scalability issues are not captured by the GN test. The Potts model shows a similar trend: it is slightly more robust to heterogeneous community sizes but still suffers from performance loss as the network grows.
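The relation between k_out and µ on the GN benchmark can be checked numerically. The sketch below assumes the usual GN setup, which the summary does not spell out in full: 128 nodes in four groups of 32 with an expected degree of 16, so that µ = k_out / 16. The function names and the independent-edge sampling are illustrative choices, not the authors' code.

```python
import random
from itertools import combinations

def gn_benchmark(k_out, rng, n_groups=4, group_size=32, avg_degree=16):
    """GN-style planted partition with expected external degree k_out."""
    n = n_groups * group_size
    group = [v // group_size for v in range(n)]
    # Edge probabilities chosen so the expected internal and external
    # degrees are (avg_degree - k_out) and k_out respectively.
    p_in = (avg_degree - k_out) / (group_size - 1)
    p_out = k_out / (n - group_size)
    edges = [
        (u, v)
        for u, v in combinations(range(n), 2)
        if rng.random() < (p_in if group[u] == group[v] else p_out)
    ]
    return edges, group

def mean_mixing(edges, group):
    """Average over nodes of (external degree) / (total degree)."""
    degree = [0] * len(group)
    external = [0] * len(group)
    for u, v in edges:
        degree[u] += 1
        degree[v] += 1
        if group[u] != group[v]:
            external[u] += 1
            external[v] += 1
    ratios = [e / d for e, d in zip(external, degree) if d > 0]
    return sum(ratios) / len(ratios)

rng = random.Random(7)
edges, group = gn_benchmark(k_out=6, rng=rng)
print("measured mixing:", round(mean_mixing(edges, group), 3), "target:", 6 / 16)
```

Because every node has the same expected degree and every group the same size, this generator cannot probe the heterogeneity effects discussed above; it only reproduces the GN baseline.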

Overall, the study demonstrates that the new benchmark provides a much more stringent and realistic testbed. It reveals algorithmic weaknesses that are invisible on the GN benchmark, such as modularity’s inability to resolve small communities in the presence of large ones and the sensitivity of both methods to network density and size. The authors make their graph‑generation code publicly available, encouraging the community to adopt this benchmark for future algorithmic development and comparative studies.
