Evaluation and selection of models for out-of-sample prediction when the sample size is small relative to the complexity of the data-generating process

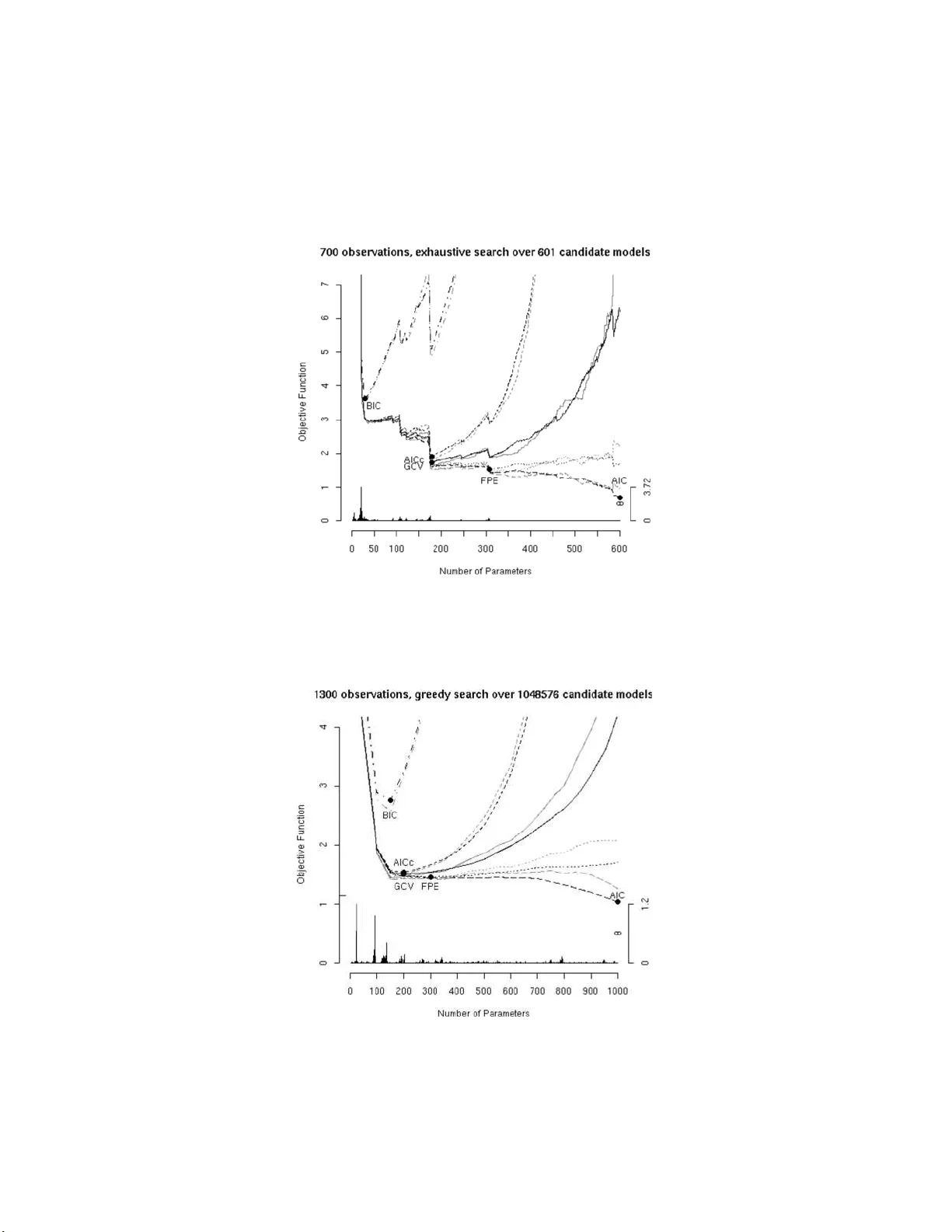

In regression with random design, we study the problem of selecting a model that performs well for out-of-sample prediction. We do not assume that any of the candidate models under consideration are correct. Our analysis is based on explicit finite-s…

Authors: ** Hannes Leeb (Department of Statistics, Yale University) **