Statistics of spikes trains, synaptic plasticity and Gibbs distributions

We introduce a mathematical framework where the statistics of spikes trains, produced by neural networks evolving under synaptic plasticity, can be analysed.

Authors: B. Cessac, H. Rostro, J.C. Vasquez, T. Viéville

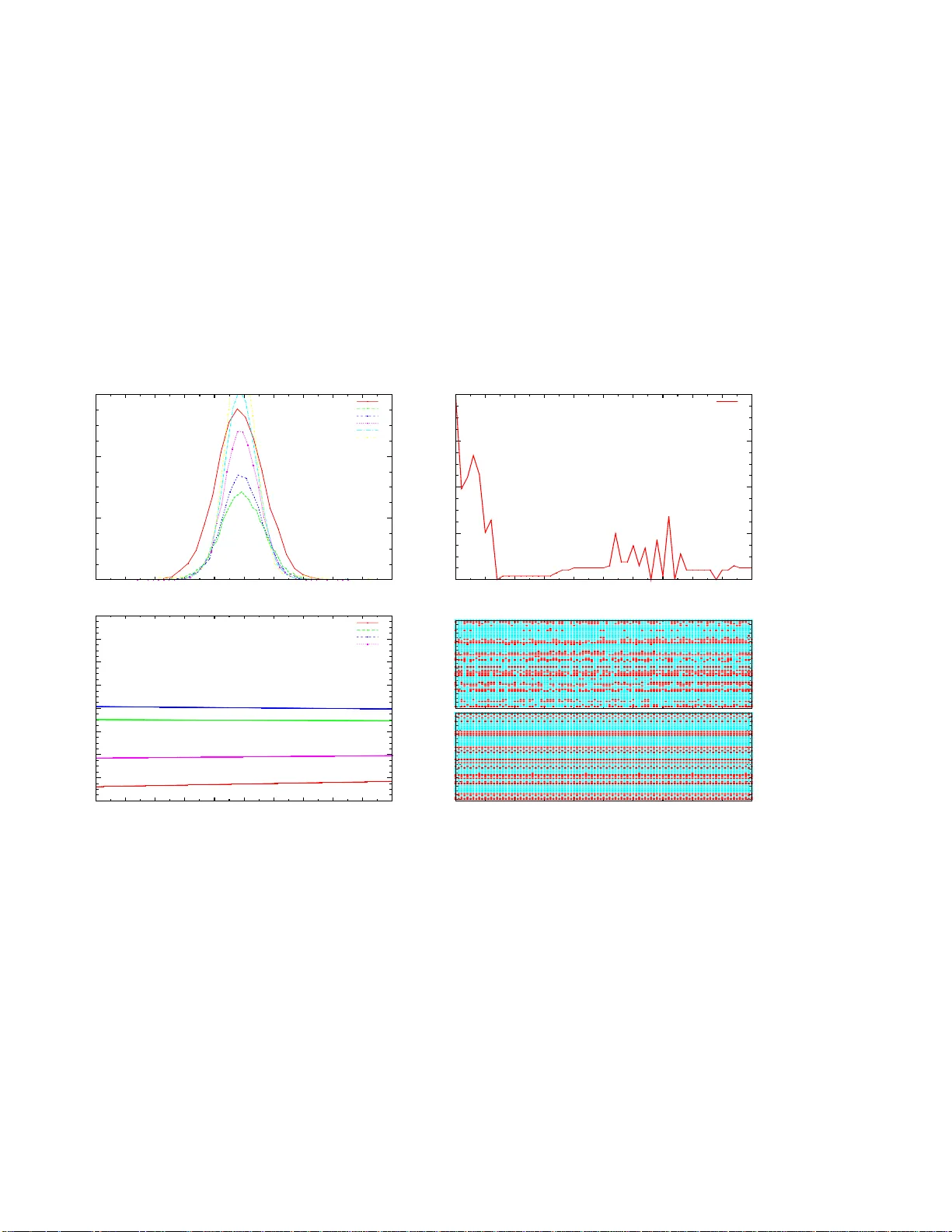

B. Cessac ∗ † ‡, H. Rostro ∗, J.C. Vasquez ∗, T. Viéville ∗

1 Introduction.

Synaptic plasticity occurs at many levels of organization and time scales in the nervous system [1]. It is of course involved in memory and learning mechanisms, but it also alters the excitability of brain areas and regulates behavioural states (e.g. the transition between sleep and wakeful activity). Therefore, understanding the effects of synaptic plasticity on neuron dynamics is a crucial challenge. However, the exact relation between synaptic properties ("microscopic" level) and the effects induced on neuron dynamics (meso- or macroscopic level) is still highly controversial.

On experimental grounds, synaptic changes can be induced by specific stimulation conditions defined through the firing frequency of pre- and postsynaptic neurons [2, 3], the membrane potential of the postsynaptic neuron [4], or spike timing [5, 6, 7] (see [8] for a review). Different mechanisms have been exhibited, from Hebbian ones [9] to Long Term Potentiation (LTP) and Long Term Depression (LTD), and more recently Spike Time Dependent Plasticity (STDP) [6, 7] (see [10, 11, 12] for a review). Most often, these stimulations are performed on isolated neurons in in vitro conditions. Extrapolating the action of these mechanisms to in vivo neural networks requires both a bottom-up and a top-down approach.
This issue is tackled, on theoretical grounds, by inferring "synaptic update rules" or "learning rules" from biological observations [13, 1, 14] (see [10, 11, 12] for a review) and extrapolating, by theoretical or numerical investigation, the effects of a given synaptic rule on a given neural network model. This approach relies on the belief that there are "canonical neural models" and "canonical plasticity rules" capturing the most essential features of biology. Unfortunately, this results in a plethora of canonical "candidates" and a huge number of papers and controversies. In particular, STDP has been the subject of long discussion, both on its biological interpretation and on its practical implementation [15]. Also, what is relevant for neural coding, and what has to be measured to conclude on the effects of synaptic plasticity, remain matters of long debate (rate coding, rank coding, spike coding?) [16, 17].

In an attempt to clarify and unify the overall vision, some researchers have proposed to associate learning rules and their dynamical effects with general principles, especially "variational" or "optimality" principles, where some functional has to be maximised or minimised (known examples of variational principles in physics are least action or entropy maximization). Dayan and Hausser [18] have shown that STDP can be viewed as an optimal noise-removal filter for certain noise distributions. Rao and Sejnowski [19, 20] suggested that STDP may be related to optimal prediction (a neuron attempts to predict its membrane potential at some time, given the past). Bohte and Mozer [21] propose that STDP minimizes response variability. Chechik [22] relates STDP to information theory via maximisation of mutual information between input and output spike trains.
In the same spirit, Toyoizumi et al. [23, 24] have proposed to associate STDP with an optimality principle where transmission of information between an ensemble of presynaptic spike trains and the output spike train of the postsynaptic neuron is optimal, given some constraints imposed by biology (such as homeostasis and minimisation of the number of strong synapses, which are costly in view of continued protein synthesis). "Transmission of information" is measured by the mutual information for the joint probability distribution of the input and output spike trains. Therefore, in these "top-down" approaches, plasticity rules "emerge" from first principles.

Obviously, the validation of these theories requires a model of neuron dynamics and a statistical model for spike trains. Unfortunately, often only isolated neurons are considered, submitted to input spike trains with ad hoc statistics (typically, Poisson distributed with independent spikes [23, 24]). However, addressing the effect of synaptic plasticity in neural networks, where dynamics emerges from collective effects and where spike statistics are constrained by this dynamics, seems to be of central importance.

Affiliations: ∗ INRIA, 2004 Route des Lucioles, 06902 Sophia-Antipolis, France. † Laboratoire Jean-Alexandre Dieudonné, U.M.R. C.N.R.S. N 6621, Nice, France. ‡ Université de Nice, Parc Valrose, 06000 Nice, France.

This issue is subject to two main difficulties. On the one hand, one must identify the generic dynamical regimes displayed by a neural network model for different choices of parameters (including synaptic strengths). On the other hand, one must analyse the effects of varying synaptic weights when applying plasticity rules. This requires handling a complex interwoven evolution where neuron dynamics depends on synapses and synapse evolution depends on neuron dynamics.
The first aspect has been addressed by several authors using mean-field approaches (see [25] and references therein), "Markovian approaches" [26], or dynamical systems theory (see [27] and references therein). The second aspect has, to the best of our knowledge, been investigated theoretically in only a few examples, with Hebbian learning [28, 29, 30] or discrete-time Integrate and Fire models with an STDP-like rule [31, 32].

Following these tracks, we have investigated the dynamical effects of a subclass of synaptic plasticity rules (including some implementations of STDP) in neural network models where one has a full characterization of the generic dynamics [33, 34]. Thus, our aim is not to provide general statements about synaptic plasticity in biological neural networks. We simply want to have good mathematical control of what is going on in specific models, with the hope that this analysis will shed some light on what happens (or does not happen) in "real world" neural systems. Using the framework of ergodic theory and thermodynamic formalism, these plasticity rules can be formulated as a variational principle for a quantity, called the topological pressure, closely related to thermodynamic potentials such as the free energy or the Gibbs potential in statistical physics [35]. As a main consequence of this formalism, the statistics of spikes are more likely described by a Gibbs probability distribution than by the classical Poisson distribution.

In this communication, we give a brief outline of this setting and provide a simple illustration. Further developments will be published in an extended paper.

2 General framework.

Neuron dynamics. In this paper we consider a simple implementation of the leaky Integrate and Fire model, where time has been discretized. This model was introduced and analysed by mean-field techniques in [32].
A full characterisation of its dynamics has been given in [33], and most of the conclusions extend to discrete-time Integrate and Fire models with adaptive conductances [34]. Call $V_i$ the membrane potential of neuron $i$. Fix a firing threshold $\theta > 0$. Then the dynamics is given by:

$$V_i(t+1) = \gamma\, V_i(t)\,\bigl(1 - Z[V_i(t)]\bigr) + \sum_{j=1}^{N} W_{ij}\, Z[V_j(t)] + I^{ext}_i, \qquad i = 1 \dots N, \qquad (1)$$

where the "leak rate" $\gamma \in [0, 1[$ and $Z(x) = \chi(x \geq \theta)$, where $\chi$ is the indicator function; namely, $Z(x) = 1$ whenever $x \geq \theta$ and $Z(x) = 0$ otherwise. $W_{ij}$ models the synaptic weight from $j$ to $i$. Denote by $W$ the matrix of synaptic weights. $I^{ext}_i$ is some (time-independent) external current. Call $I^{(ext)}$ the vector of the $I^{ext}_i$. $W$ and $I^{(ext)}$ are control parameters for the dynamics. To alleviate the notation we write $\Gamma = (W, I^{(ext)})$. Thus $\Gamma$ is a point in an $N^2 + N$ dimensional space of control parameters.

It has been proved in [33] that the dynamics of (1) generically admits finitely many periodic orbits (footnote 1). However, in some regions of the parameter space, the period of these orbits can be much larger than any numerically accessible time, leading to a regime practically indistinguishable from chaos.

Spikes dynamics. Provided that synaptic weights and currents are bounded, it is easy to show that the trajectories of (1) stay in a compact set $\mathcal{M} = [V_{min}, V_{max}]^N$. For each neuron one can decompose the interval $I = [V_{min}, V_{max}]$ into $I_0 \cup I_1$ with $I_0 = [V_{min}, \theta[$ and $I_1 = [\theta, V_{max}]$. If $V \in I_0$ the neuron is quiescent, otherwise it fires. This splitting induces a partition $\mathcal{P}$ of $\mathcal{M}$, that we call the "natural partition". The elements of $\mathcal{P}$ have the following form. Call $\Lambda = \{0, 1\}^N$ and $\omega = [\omega_i]_{i=1}^{N} \in \Lambda$. Then $\mathcal{P} = \{\mathcal{P}_\omega\}_{\omega \in \Lambda}$, where $\mathcal{P}_\omega = I_{\omega_1} \times I_{\omega_2} \times \dots \times I_{\omega_N}$. Equivalently, if $V \in \mathcal{P}_\omega$, then all neurons such that $\omega_i = 1$ are firing while neurons such that $\omega_i = 0$ are quiescent.
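The dynamics (1) and the reading of a spiking pattern from the natural partition can be sketched in a few lines of Python. This is only an illustrative sketch: the function names `step` and `raster_plot`, the use of NumPy, and the default values of `gamma` and `theta` are assumptions made here, not part of the original model description.

```python
import numpy as np

def step(V, W, I_ext, gamma=0.5, theta=1.0):
    """One iteration of equation (1).

    Returns V(t+1) and the spiking pattern omega(t) read from V(t).
    gamma and theta defaults are illustrative values only.
    """
    Z = (V >= theta).astype(float)   # Z[V_j(t)] = 1 if neuron j fires at time t
    V_next = gamma * V * (1.0 - Z) + W @ Z + I_ext
    return V_next, Z.astype(int)

def raster_plot(V0, W, I_ext, T, gamma=0.5, theta=1.0):
    """Spiking patterns omega(0), ..., omega(T-1) for the initial condition V0."""
    V = np.asarray(V0, dtype=float)
    W = np.asarray(W, dtype=float)
    I_ext = np.asarray(I_ext, dtype=float)
    omega = np.zeros((T, V.size), dtype=int)
    for t in range(T):
        V, omega[t] = step(V, W, I_ext, gamma, theta)
    return omega

# Two uncoupled neurons: only neuron 0 receives an external current.
omega = raster_plot([0.0, 0.0], np.zeros((2, 2)), [0.6, 0.0], T=10)
```

Row `t` of `omega` is the spiking pattern at time `t`, so the array is a finite piece of the symbolic coding of the orbit started at `V0`.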
We therefore call $\omega$ a "spiking pattern". To each initial condition $V \in \mathcal{M}$ we associate a "raster plot" $\tilde{\omega} = \{\omega(t)\}_{t=0}^{+\infty}$ such that $V(t) \in \mathcal{P}_{\omega(t)}$, $\forall t \geq 0$. We write $V \to \tilde{\omega}$. Thus, $\tilde{\omega}$ is the sequence of spiking patterns displayed by the neural network when prepared with the initial condition $V$. We denote by $[\omega]_{s,t}$ the sequence $\omega(s), \omega(s+1), \dots, \omega(t)$ of spiking patterns. We say that an infinite sequence $\tilde{\omega} = \{\omega(t)\}_{t=0}^{+\infty}$ is an admissible raster plot if there exists $V \in \mathcal{M}$ such that $V \to \tilde{\omega}$. We call $\Sigma_\Gamma$ the set of admissible raster plots for the parameters $\Gamma$. The dynamics on the set of raster plots, induced by (1), is simply the left shift $\sigma_\Gamma$ (i.e. $\tilde{\omega}' = \sigma_\Gamma \tilde{\omega}$ is such that $\omega'(t) = \omega(t+1)$, $t \geq 0$). Note that there is generically a one-to-one correspondence between the orbits of (1) and the raster plots (i.e. raster plots provide a symbolic coding for the orbits) [33].

Footnote 1. As a side remark, we would like to point out that this model generically admits a finite Markov partition, which allows one to describe the evolution of probability distributions via a Markov chain. This gives some support to the approach developed in [26], though our analysis also shows, in the present example, that, in opposition to what is assumed in [26], (i) the size of the Markov partition (and especially its finiteness) does not depend only on membrane and synaptic time constants, but also on the values of the synaptic weights; (ii) the probability that exactly $j$ neurons in the network fire and exactly $N - j$ neurons do not fire at a given time does not factorize (see eq. (1) in [26]); (iii) the invariant measure is not unique.

Statistical properties of orbits.
We are interested in the statistical properties of raster plots, which are inherited from the statistical properties of orbits of (1) via the correspondence $\nu[A] = \mu[\{V \to \tilde{\omega},\ \tilde{\omega} \in A\}]$, where $\nu$ is a probability measure on $\Sigma_\Gamma$, $A$ a measurable set (typically a cylinder set) and $\mu$ an ergodic measure on $\mathcal{M}$. $\nu(A)$ can be estimated by the empirical average $\pi^{(T)}_{\tilde{\omega}}(A) = \frac{1}{T} \sum_{t=1}^{T} \chi(\sigma^t_\Gamma \tilde{\omega} \in A)$, where $\chi$ is the indicator function. We are seeking asymptotic statistical properties corresponding to taking the limit $T \to \infty$. Let

$$\pi_{\tilde{\omega}}(\cdot) = \lim_{T \to \infty} \pi^{(T)}_{\tilde{\omega}}(\cdot) \qquad (2)$$

be the empirical measure for the raster plot $\tilde{\omega}$; then $\nu = \pi_{\tilde{\omega}}$ for $\nu$-almost every $\tilde{\omega}$.

Gibbs measures. The explicit form of $\nu$ is not known in general. Moreover, experimentally one has access only to finite-time raster plots, and the convergence to $\pi_{\tilde{\omega}}$ in (2) can be quite slow. Thus, it is necessary to provide some a priori form for the probability $\nu$. We call a statistical model an ergodic probability measure $\nu'$ which can serve as a prototype (footnote 2) for $\nu$. Since there are many ergodic measures, there is not a unique choice for the statistical model. Note also that it depends on the parameters $\Gamma$. We want to define a procedure allowing one to select a statistical model.

A Gibbs measure with potential (footnote 3) $\psi$ is an ergodic measure $\nu_{\psi;\Gamma}$ such that

$$\nu_{\psi;\Gamma}\bigl([\omega]_{1,T}\bigr) \sim \frac{\exp\left(\sum_{t=1}^{T} \psi(\sigma^t_\Gamma \tilde{\omega})\right)}{Z^{(T)}_\Gamma(\psi)},$$

where $Z^{(T)}_\Gamma(\psi) = \sum_{\Sigma^{(T)}_\Gamma} \exp\left(\sum_{t=1}^{T} \psi(\sigma^t_\Gamma \tilde{\omega})\right)$, $\Sigma^{(T)}_\Gamma$ being the set of admissible spiking pattern sequences of length $T$. Note that for the forthcoming discussion it is important to make explicit the dependence of these quantities on the parameters $\Gamma$. The topological pressure (footnote 4) is the limit $P_\Gamma(\psi) = \lim_{T \to \infty} \frac{1}{T} \log Z^{(T)}_\Gamma(\psi)$.
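To make the definitions of $Z^{(T)}_\Gamma(\psi)$ and of the pressure concrete, here is a brute-force Python sketch on a toy case. It rests on two simplifying assumptions that the model above does not satisfy in general: every sequence of spiking patterns is treated as admissible, and the potential depends only on the spiking pattern at a single time. The names `partition_function`, `finite_time_pressure`, and the parameter `b` are hypothetical.

```python
import itertools
import math

def partition_function(psi, patterns, T):
    """Z^(T)(psi): sum over all length-T pattern sequences of exp(sum_t psi(omega(t))).

    Assumes every sequence is admissible, which is NOT true in general.
    """
    return sum(
        math.exp(sum(psi(w) for w in seq))
        for seq in itertools.product(patterns, repeat=T)
    )

def finite_time_pressure(psi, patterns, T):
    """Finite-T estimate (1/T) log Z^(T)(psi) of the topological pressure."""
    return math.log(partition_function(psi, patterns, T)) / T

# Toy case: N = 2 neurons, potential rewarding each spike by b (illustrative).
patterns = list(itertools.product((0, 1), repeat=2))
b = 0.3
psi = lambda w: b * sum(w)

# For this one-time-step potential, Z^(T) factorizes, so the pressure is
# log(sum over patterns of exp(psi)) for every T.
closed_form = math.log(sum(math.exp(psi(w)) for w in patterns))
estimate = finite_time_pressure(psi, patterns, T=4)
```

The enumeration grows as $2^{NT}$, so this only serves to check the definitions on tiny examples. With constraints on admissible sequences or longer-range potentials the factorization no longer holds.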
If $\phi$ is another potential, one has:

$$\left. \frac{\partial P_\Gamma(\psi + \alpha \phi)}{\partial \alpha} \right|_{\alpha = 0} = \nu_{\psi;\Gamma}[\phi], \qquad (3)$$

the average value of $\phi$ with respect to $\nu_{\psi;\Gamma}$. The Gibbs measure obeys a variational principle. Let $\nu$ be a $\sigma_\Gamma$-invariant measure. Call $h(\nu)$ the entropy of $\nu$. Let $\mathcal{M}^{inv}_\Gamma$ be the set of invariant measures for $\sigma_\Gamma$; then:

$$P_\Gamma(\psi) = \sup_{\nu \in \mathcal{M}^{inv}_\Gamma} \bigl( h(\nu) + \nu(\psi) \bigr) = h(\nu_{\psi;\Gamma}) + \nu_{\psi;\Gamma}(\psi). \qquad (4)$$

Footnote 2. E.g. by minimising the Kullback-Leibler divergence between the empirical measure and $\nu'$.

Footnote 3. Fix $0 < \Theta < 1$. Define a metric on $\Sigma_\Gamma$ by $d_\Theta(\tilde{\omega}, \tilde{\omega}') = \Theta^p$, where $p$ is the largest integer such that $\omega(t) = \omega'(t)$, $1 \leq t \leq p - 1$. For a continuous function $\psi : \Sigma_\Gamma \to \mathbb{R}$ and $n \geq 1$ define $\mathrm{var}_n \psi = \sup \{ |\psi(\tilde{\omega}) - \psi(\tilde{\omega}')| : \omega(t) = \omega'(t),\ 1 \leq t \leq n \}$. $\psi$ is called a potential if $\mathrm{var}_n(\psi) \leq C \Theta^n$, $n = 1, 2, \dots$, where $C$ is some positive constant [35].

Footnote 4. This quantity is analogous to thermodynamic potentials in statistical mechanics, like the free energy or the grand potential $\Omega = U - TS - \mu N = -PV$, where $P$ is the thermodynamic pressure. Equation (3) expresses that $P_\Gamma(\psi)$ is the generating function for the cumulants of the probability distribution. Equation (4) relates Gibbs measures to equilibrium states (entropy maximisation under constraints).

Adaptation rules. We consider adaptation mechanisms where synaptic weights evolve in time according to the spikes emitted by the pre- and postsynaptic neurons. The variation of $W_{ij}$ at time $t$ is a function of the spiking sequences of neurons $i$ and $j$ from time $t - T$ to time $t$, where $T$ is a time scale characterizing the width of the spike trains influencing the synaptic change. In this paper we investigate the effects of synaptic plasticity rules where the characteristic time scale $T$ is much larger than the time scale of evolution of the neurons.

On practical/numerical grounds we proceed as follows. Let the neurons evolve over a time window of width $T$, called an "adaptation epoch", during which synaptic weights are constant, and record the spike trains. From this record, we update the synaptic weights and a new adaptation epoch begins. We denote by $t$ the update index of neuron states inside an adaptation epoch, while $\tau$ indicates the update index of synaptic weights. Neuronal time is reset at each new adaptation epoch.

As an example we consider here an adaptation rule of the type:

$$\delta W^{(\tau)}_{ij} = W^{(\tau+1)}_{ij} - W^{(\tau)}_{ij} = \epsilon \left[ (\lambda - 1)\, W^{(\tau)}_{ij} + \frac{1}{T - 2C}\, g_{ij}\bigl([\omega_i]_{0,T}, [\omega_j]_{0,T}\bigr) \right]. \qquad (5)$$

The first term, parametrized by $\lambda \in [0, 1]$, mimics passive LTD, while:

$$g_{ij}\bigl([\omega_i]_{0,T}, [\omega_j]_{0,T}\bigr) = \sum_{t=C}^{T-C} \sum_{u=-C}^{C} f(u)\, \omega_i(t+u)\, \omega_j(t),$$

with:

$$f(x) = \begin{cases} A_-\, e^{x/\tau_-}, & x < 0; \\ A_+\, e^{-x/\tau_+}, & x > 0; \\ 0, & x = 0, \end{cases} \qquad (6)$$

and with $A_- < 0$, $A_+ > 0$, provides an example of STDP implementation. Note that since $f(x)$ becomes negligible as soon as $x \gg \tau_+$ or $x \ll -\tau_-$, we may consider that $f(u) = 0$ whenever $|u| > C \stackrel{\mathrm{def}}{=} 2 \max(\tau_+, \tau_-)$. The parameter $\epsilon > 0$ is chosen small enough to ensure adiabatic changes while updating synaptic weights.

Updating the synaptic weights has several consequences. It obviously modifies the structure of the synaptic graph, with an impact on neuron dynamics. But it can also modify the statistics of spike trains and the structure of the set of admissible raster plots. Let us now discuss these effects in more detail.

Dynamical effects of synaptic plasticity. Set $S_i(\tilde{\omega}) = \sum_{u=-C}^{C} f(u)\, \omega_i(u)$ and $H_{ij}(\tilde{\omega}) = \omega_j(0)\, S_i(\tilde{\omega})$. Then the adaptation rule reads, as $T \to \infty$, in terms of the empirical measure $\pi_{\tilde{\omega}^{(\tau)}}$:

$$\delta W^{(\tau)}_{ij} = \epsilon \left[ (\lambda - 1)\, W^{(\tau)}_{ij} + \pi_{\tilde{\omega}^{(\tau)}}[H_{ij}] \right], \qquad (7)$$

where $\tilde{\omega}^{(\tau)}$ is the raster plot produced by neurons in the adaptation epoch $\tau$.
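The finite-$T$ adaptation rule (5)-(6) can be sketched in Python as follows. This is a minimal, unoptimized sketch: the function names `stdp_kernel`, `g` and `adapt`, as well as all default parameter values (`A_minus`, `A_plus`, `tau_minus`, `tau_plus`, `eps`, `lam`), are illustrative assumptions and are not taken from the paper.

```python
import math
import numpy as np

def stdp_kernel(u, A_minus=-0.5, A_plus=1.0, tau_minus=2.0, tau_plus=2.0):
    """Kernel f of equation (6); u is the lag (spike time of i) - (spike time of j)."""
    if u < 0:
        return A_minus * math.exp(u / tau_minus)
    if u > 0:
        return A_plus * math.exp(-u / tau_plus)
    return 0.0

def g(omega_i, omega_j, C, f=stdp_kernel):
    """Double sum g_ij entering equation (5), for spike trains indexed 0..T."""
    T = len(omega_i) - 1
    total = 0.0
    for t in range(C, T - C + 1):
        if omega_j[t]:  # only times where neuron j fires contribute
            total += sum(f(u) * omega_i[t + u] for u in range(-C, C + 1))
    return total

def adapt(W, omega, C, eps=0.01, lam=0.9, f=stdp_kernel):
    """One adaptation epoch of equation (5); omega has shape (T + 1, N)."""
    T, N = omega.shape[0] - 1, omega.shape[1]
    W_new = np.array(W, dtype=float)  # copy, so W itself is left untouched
    for i in range(N):
        for j in range(N):
            drive = g(omega[:, i], omega[:, j], C, f) / (T - 2 * C)
            W_new[i, j] += eps * ((lam - 1.0) * W[i][j] + drive)
    return W_new

# Neuron 1 fires at t = 5, neuron 0 fires one step later at t = 6.
omega = np.zeros((11, 2), dtype=int)
omega[6, 0] = 1
omega[5, 1] = 1
W_next = adapt(np.zeros((2, 2)), omega, C=2, eps=0.1)
```

In this example the weight from neuron 1 to neuron 0 is potentiated (the target fires after the source), while the weight from neuron 0 to neuron 1 is depressed, which is the expected asymmetry of the kernel $f$.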
The term $S_i(\tilde{\omega})$ can be either negative, inducing LTD, or positive, inducing LTP. In particular, its average with respect to the empirical measure $\pi_{\tilde{\omega}^{(\tau)}}$ reads:

$$\pi_{\tilde{\omega}^{(\tau)}}(S_i) = \beta\, r_i(\tau) \qquad (8)$$

where:

$$\beta = A_-\, e^{-\frac{1}{\tau_-}}\, \frac{1 - e^{-\frac{C}{\tau_-}}}{1 - e^{-\frac{1}{\tau_-}}} + A_+\, e^{-\frac{1}{\tau_+}}\, \frac{1 - e^{-\frac{C}{\tau_+}}}{1 - e^{-\frac{1}{\tau_+}}} \qquad (9)$$

and where $r_i(\tau) = \pi_{\tilde{\omega}^{(\tau)}}(\omega_i)$ is the firing rate of neuron $i$ in the $\tau$-th adaptation epoch. The term $\beta$ depends neither on $\tilde{\omega}$ nor on $\tau$, but only on the adaptation rule parameters $A_-, A_+, \tau_-, \tau_+, C$. Equation (8) leads to 3 regimes.

• Cooperative regime. If $\beta > 0$ then $\pi_{\tilde{\omega}^{(\tau)}}(S_i) > 0$. Then synaptic weights have a tendency to become more positive. This corresponds to a cooperative system [36]. In this case, dynamics become trivial, with neurons firing at each time step or remaining quiescent forever.
• Competitive regime. Conversely, if $\beta < 0$, synaptic weights become negative. This corresponds to a competitive system [36]. In this case, neurons remain quiescent forever.
• Intermediate regime. The intermediate regime corresponds to $\beta \sim 0$. Here no clear-cut tendency can be distinguished from the average value of $S_i$, and spike correlations have to be considered as well. In this situation, the effects of synaptic plasticity on neuron dynamics strongly depend on the value of the parameter $\lambda$. A detailed discussion of these effects will be published elsewhere. We simply provide an example in Fig. 1.

Variational form. We now investigate the effects of the adaptation rule (7) on the statistical distribution of the raster plot. To this purpose we use, as a statistical model for the spike train distribution in the adaptation epoch $\tau$, a Gibbs distribution $\nu_{\psi^{(\tau)}}$ with a potential $\psi^{(\tau)}$ and a topological pressure $P(\psi^{(\tau)})$.
Thus, the adaptation dynamics results in a sequence of empirical measures $\{\pi_{\tilde{\omega}^{(\tau)}}\}_{\tau \geq 0}$, and corresponding statistical models $\{\nu_{\psi^{(\tau)}}\}_{\tau \geq 0}$, reflecting changes in the statistical properties of raster plots.

These changes can be smooth, in which case the average value of observables changes smoothly. Then, using (3), we may write the adaptation rule (7) in the form:

$$\delta W^{(\tau)} = \nu_{\psi^{(\tau)}}[\phi] = \nabla_{W = W^{(\tau)}}\, P\left( \psi^{(\tau)} + (W - W^{(\tau)}).\phi(W^{(\tau)}, .) \right), \qquad (10)$$

where $\phi$ is the matrix of components $\phi_{ij}$ with $\phi_{ij} \equiv \phi_{ij}(W_{ij}, \tilde{\omega}) = \epsilon \left( (\lambda - 1)\, W_{ij} + H_{ij} \right)$ and where we use the notation $(W - W^{(\tau)}).\phi = \sum_{i,j=1}^{N} (W_{ij} - W^{(\tau)}_{ij})\, \phi_{ij}$. This amounts to slightly perturbing the potential $\psi^{(\tau)}$ with a perturbation $(W - W^{(\tau)}).\phi$. In this case, for sufficiently small $\epsilon$ in the adaptation rule, the variation of pressure between epochs $\tau$ and $\tau + 1$ is given by:

$$\delta P^{(\tau)} \sim \delta W^{(\tau)}.\nu_{\psi^{(\tau)}}[\phi] = \delta W^{(\tau)}.\delta W^{(\tau)} > 0.$$

Therefore the adaptation rule is a gradient system which tends to maximize the topological pressure.

The effects of modifying synaptic weights can also be sharp (corresponding typically to bifurcations in (1)). We observe in fact phases of regular variations interrupted by singular transitions (see Fig. 1). These transitions have an interesting interpretation. When they happen, the set of admissible raster plots is typically suddenly modified by the adaptation rule. Thus, the set of admissible raster plots obtained after adaptation belongs to $\Sigma_{\Gamma^{(\tau)}} \cap \Sigma_{\Gamma^{(\tau+1)}}$. In this way, adaptation plays the role of a selective mechanism where the set of admissible raster plots, viewed as a neural code, is gradually reduced, producing after $n$ steps of adaptation a set $\cap_{\tau=1}^{n} \Sigma_{\Gamma^{(\tau)}}$ which can be small (but not empty).
If we consider the situation where (1) is a neural network submitted to some stimulus, where a raster plot $\tilde{\omega}$ encodes the spike response to the stimulus when the system was prepared in the initial condition $V \to \tilde{\omega}$, then $\Sigma_\Gamma$ is the set of all possible raster plots encoding this stimulus. This illustrates how adaptation results in a reduction of the possible coding, thus reducing the variability in the possible responses. This property has also been observed in [28, 32, 29, 30].

Equilibrium distribution. An "equilibrium point" is defined by $\delta W = 0$ and it corresponds to a maximum of the pressure with $\nu^*[\phi] = 0$, where $\nu^*$ is a Gibbs measure with potential $\psi^*$. This implies:

$$W^* = \frac{1}{1 - \lambda}\, \nu^*[H] \qquad (11)$$

which gives, component by component, and making $H$ explicit:

$$W^*_{ij} = \frac{1}{1 - \lambda} \sum_{u=-C}^{C} f(u)\, \nu^*[\omega_j(0)\, \omega_i(u)], \qquad (12)$$

where $\nu^*[\omega_j(0)\, \omega_i(u)]$ is the probability, in the asymptotic regime, that neuron $i$ fires at time $u$ and neuron $j$ fires at time $0$. Hence, in this case, the synaptic weights are purely expressed in terms of neuron pair correlations. As a further consequence, the equilibrium is described by a Gibbs distribution with potential $\phi$.

Therefore, if the adaptation rule (5) converges (footnote 5) to an equilibrium point as $\tau \to \infty$, it naturally leads the system to a dynamics where raster plots are distributed according to a Gibbs distribution, with a pair potential having some analogy with an Ising model Hamiltonian. This makes a very interesting link with the work of Schneidman et al. [37], who show, using theoretical arguments as well as empirical evidence on experimental data for the salamander retina, that spike train statistics are likely described by a Gibbs distribution with an "Ising"-like potential (see also [38]).

3 Conclusion.
In this paper, we have introduced a mathematical framework where spike train statistics, produced by neural networks possibly evolving under synaptic plasticity, can be analysed. It is argued that Gibbs distributions, arising naturally in ergodic theory, are good candidates to provide efficient statistical models for raster plot distributions. We have also shown that some plasticity rules can be associated with a variational principle. Though only one example was proposed, it is easy to extend this result to more general examples of adaptation rules. This will be the subject of an extended forthcoming paper.

Concerning neuroscience, there are several expected outcomes. The approach proposed here is similar in spirit to the variational approaches discussed in the introduction (especially [22, 23, 24]). Actually, the quantity $L$ minimized in [23, 24] can be obtained, in the present setting, with a suitable choice of potential. But the present formalism concerns neural networks with intrinsic dynamics (instead of isolated neurons submitted to uncorrelated Poisson spike trains). Also, more general models could be taken into account (for example Integrate and Fire models with adaptive conductances [34]).

A second issue concerns spike train statistics. As claimed by many authors and nicely shown by Schneidman and collaborators [37, 38], more elaborate statistical models than uncorrelated Poisson distributions have to be considered to analyse spike train statistics, especially taking into account correlations between spikes [39], but also higher-order cumulants. The present work shows that Gibbs distributions, obtained from statistical inference on real data by Schneidman and collaborators, may naturally arise from synaptic adaptation mechanisms. We also obtain an explicit form for the probability distribution depending, e.g., on physical parameters such as the time constants $\tau_+, \tau_-$ or the LTP/LTD strengths $A_+, A_-$ appearing in the STDP rule. This can be compared to real data. Also, having a "good" statistical model is a first step towards being able to "read the neural code" in the spirit of [17], namely to infer the conditional probability that a stimulus has been applied given the observed spike train, knowing the conditional probability that one observes a spike train given the stimulus. Finally, as a last outcome, this approach opens up the possibility of obtaining specific spike train statistics from a deterministic neuron evolution with a suitable synaptic plasticity rule (constrained e.g. by the potential $\phi$).

Footnote 5. This convergence is ensured for sufficiently small $\epsilon$.

References

[1] E. L. Bienenstock, L. Cooper, and P. Munroe. Theory for the development of neuron selectivity: orientation specificity and binocular interaction in visual cortex. The Journal of Neuroscience, 1982.
[2] T.V.P. Bliss and A.R. Gardner-Medwin. Long-lasting potentiation of synaptic transmission in the dentate area of the unanaesthetised rabbit following stimulation of the perforant path. J Physiol, 232:357–374, 1973.
[3] S.M. Dudek and M. F. Bear. Bidirectional long-term modification of synaptic effectiveness in the adult and immature hippocampus. J Neurosci., 1993.
[4] A. Artola, S. Bröcher, and W. Singer. Different voltage-dependent thresholds for inducing long-term depression and long-term potentiation in slices of rat visual cortex. Nature, 1990.
[5] W.B. Levy and D. Stewart. Temporal contiguity requirements for long-term associative potentiation/depression in the hippocampus. Neuroscience, 1983.
[6] H. Markram, J. Lübke, M. Frotscher, and B. Sakmann. Regulation of synaptic efficacy by coincidence of postsynaptic AP and EPSP. Science, 275(213), 1997.
[7] G. Bi and M. Poo. Synaptic modification by correlated activity: Hebb's postulate revisited. Annual Review of Neuroscience, 2001.
[8] R. C. Malenka and R. A. Nicoll. Long-term potentiation: a decade of progress? Science, 1999.
[9] D.O. Hebb. The organization of behavior. New York: Wiley, 1949.
[10] P. Dayan and L. F. Abbott. Theoretical Neuroscience: Computational and Mathematical Modeling of Neural Systems. MIT Press, 2001.
[11] W. Gerstner and W. M. Kistler. Mathematical formulations of Hebbian learning. Biol Cybern, 87:404–415, 2002.
[12] L.N. Cooper, N. Intrator, B.S. Blais, and H.Z. Shouval. Theory of cortical plasticity. World Scientific, Singapore, 2004.
[13] C. von der Malsburg. Self-organisation of orientation sensitive cells in the striate cortex. Kybernetik, 1973.
[14] K.D. Miller, J.B. Keller, and M.P. Stryker. Ocular dominance column development: analysis and simulation. Science, 1989.
[15] E.M. Izhikevich and N.S. Desai. Relating STDP to BCM. Neural Computation, 15:1511–1523, 2003.
[16] B. Cessac, H. Rostro-Gonzalez, J.C. Vasquez, and T. Viéville. To which extend is the "neural code" a metric? In Neurocomp 2008, poster, 2008.
[17] F. Rieke, D. Warland, Rob de Ruyter van Steveninck, and William Bialek. Spikes, Exploring the Neural Code. The M.I.T. Press, 1996.
[18] P. Dayan and M. Hausser. Plasticity kernels and temporal statistics. Cambridge MA: MIT Press, 2004.
[19] R. P. N. Rao and T. J. Sejnowski. Predictive sequence learning in recurrent neocortical circuits. Cambridge MA, MIT Press, 1991.
[20] R. P. N. Rao and T. J. Sejnowski. Spike-timing-dependent Hebbian plasticity as temporal difference learning. Neural Computation, 2001.
[21] S. M. Bohte and M. C. Mozer. Reducing the variability of neural responses: A computational theory of spike-timing-dependent plasticity. Neural Computation, 2007.
[22] G. Chechik. Spike-timing-dependent plasticity and relevant mutual information maximization. Neural Computation, 2003.
[23] T. Toyoizumi, J.-P. Pfister, K. Aihara, and W. Gerstner. Generalized Bienenstock-Cooper-Munro rule for spiking neurons that maximizes information transmission. Proc. Natl. Acad. Sci. USA, 2005.
[24] T. Toyoizumi, J.-P. Pfister, K. Aihara, and W. Gerstner. Optimality model of unsupervised spike-timing dependent plasticity: Synaptic memory and weight distribution. Neural Computation, 19:639–671, 2007.
[25] M. Samuelides and B. Cessac. Random recurrent neural networks. EPJ Special Topics "Topics in Dynamical Neural Networks: From Large Scale Neural Networks to Motor Control and Vision", 142(1):89–122, 2007.
[26] H. Soula and C. C. Chow. Stochastic Dynamics of a Finite-Size Spiking Neural Networks. Neural Computation.
[27] B. Cessac and M. Samuelides. From neuron to neural networks dynamics. EPJ Special Topics: Topics in Dynamical Neural Networks, 142(1):7–88, 2007.
[28] E. Daucé, M. Quoy, B. Cessac, B. Doyon, and M. Samuelides. Self-organization and dynamics reduction in recurrent networks: stimulus presentation and learning. Neural Networks, 11:521–533, 1998.
[29] B. Siri, H. Berry, B. Cessac, B. Delord, and M. Quoy. Effects of Hebbian learning on the dynamics and structure of random networks with inhibitory and excitatory neurons. Journal of Physiology (Paris), 101(1-3):138–150, 2007. e-print: arXiv:0706.2602.
[30] B. Siri, H. Berry, B. Cessac, B. Delord, and M. Quoy. A mathematical analysis of the effects of Hebbian learning rules on the dynamics and structure of discrete-time random recurrent neural networks. Neural Computation, 2008. To appear.
[31] H. Soula. Dynamique et plasticité dans les réseaux de neurones à impulsions. PhD thesis, INSA Lyon, 2005.
[32] H. Soula, G. Beslon, and O. Mazet. Spontaneous dynamics of asymmetric random recurrent spiking neural networks. Neural Computation, 18(1), 2006.
[33] B. Cessac. A discrete time neural network model with spiking neurons. Rigorous results on the spontaneous dynamics. Journal of Mathematical Biology, 56(3):311–345, 2008.
[34] B. Cessac and T. Viéville. On dynamics of integrate-and-fire neural networks with adaptive conductances. Frontiers in Neuroscience, 2(2), July 2008.
[35] G. Keller. Equilibrium States in Ergodic Theory. Cambridge University Press, 1998.
[36] M.W. Hirsch. Convergent activation dynamics in continuous time networks. Neur. Networks, 2:331–349, 1989.
[37] E. Schneidman, M.J. Berry, R. Segev, and W. Bialek. Weak pairwise correlations imply strongly correlated network states in a neural population. Nature, page 04701, April 2006.
[38] G. Tkacik, E. Schneidman, M.J. Berry II, and W. Bialek. Ising models for networks of real neurons. qbio.NC/0611072, 2006.
[39] S. Nirenberg and P. Latham. Decoding neuronal spike trains: how important are correlations. Proceedings of the National Academy of Sciences, 2003.

Figure 1. Effect of synaptic plasticity on: (top left) synaptic weight histogram at successive STDP steps; (top right) period of the orbit, shown as log10(period) versus STDP steps; (bottom left) evolution of some synaptic weights versus STDP steps; (bottom right) initial and final raster plots of a few neurons (neuron index versus time).
