Correlation of Expert and Search Engine Rankings

In previous research it has been shown that link-based web page metrics can be used to predict experts’ assessment of quality. We are interested in a related question: do expert rankings of real-world entities correlate with search engine rankings of corresponding web resources? For example, each year US News & World Report publishes a list of (among others) top 50 graduate business schools. Does their expert ranking correlate with the search engine ranking of the URLs of those business schools? To answer this question we conducted 9 experiments using 8 expert rankings on a range of academic, athletic, financial and popular culture topics. We compared the expert rankings with the rankings in Google, Live Search (formerly MSN) and Yahoo (with list lengths of 10, 25, and 50). In 57 search engine vs. expert comparisons, only 1 strong and 4 moderate correlations were statistically significant. In 42 inter-search engine comparisons, only 2 strong and 4 moderate correlations were statistically significant. The correlations appeared to decrease with the size of the lists: the 3 strong correlations were for lists of 10, the 8 moderate correlations were for lists of 25, and no correlations were found for lists of 50.

💡 Research Summary

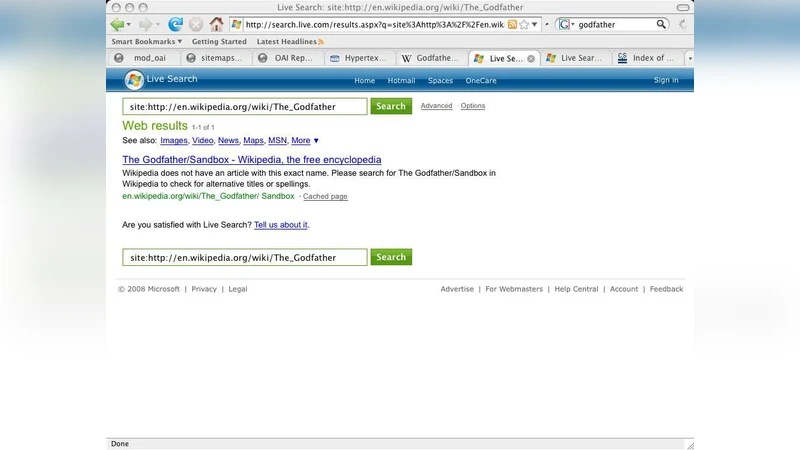

The paper investigates whether rankings produced by domain experts for real‑world entities correspond to the rankings that major search engines assign to the web resources representing those entities. To answer this, the authors designed nine empirical experiments covering eight distinct expert‑generated lists drawn from academic, athletic, financial and popular‑culture domains. Each list contains the top 10, 25, or 50 items as judged by experts (for example, the U.S. News & World Report’s top 50 graduate business schools, NCAA basketball team rankings, Fortune 500 company valuations, box‑office movie rankings, etc.). For every item the authors identified the official website URL and then submitted the corresponding name as a query to three leading search engines: Google, Microsoft Live Search (formerly MSN), and Yahoo. The search results were harvested in a controlled environment (non‑logged‑in, same IP address, U.S. location) and the positions of the target URLs within the first 10, 25 and 50 results were recorded.

The statistical analysis relied on non‑parametric rank‑correlation measures—Spearman’s ρ and Kendall’s τ—to compare expert rankings with search‑engine rankings. Correlations were classified as strong (|ρ| ≥ 0.7), moderate (0.5 ≤ |ρ| < 0.7) or weak (|ρ| < 0.5), and a p‑value threshold of 0.05 was used to determine statistical significance. In total, 57 expert‑versus‑search‑engine comparisons were performed. Only one strong correlation reached significance (the top‑10 business‑school list versus Google), and four moderate correlations were statistically significant, all of them occurring in the 25‑item lists. The remaining comparisons showed weak or non‑significant relationships.
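The two coefficients and the strength bands described above can be illustrated with a minimal sketch. It uses the textbook formulas for rankings without ties (Spearman's ρ from squared rank differences, Kendall's τ‑a from concordant and discordant pairs). The function names and the `engine` ranking are hypothetical and chosen only for illustration; they are not data from the paper, and no significance test is computed here.

```python
from itertools import combinations


def spearman_rho(x, y):
    """Spearman's rho for two rankings of the same items, assuming no ties."""
    n = len(x)
    d_squared = sum((a - b) ** 2 for a, b in zip(x, y))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


def kendall_tau(x, y):
    """Kendall's tau-a: (concordant - discordant) / total number of pairs."""
    n = len(x)
    score = 0
    for i, j in combinations(range(n), 2):
        product = (x[i] - x[j]) * (y[i] - y[j])
        score += (product > 0) - (product < 0)  # +1 concordant, -1 discordant
    return score / (n * (n - 1) / 2)


def strength(coefficient):
    """Label a coefficient with the bands quoted in the summary above."""
    magnitude = abs(coefficient)
    if magnitude >= 0.7:
        return "strong"
    if magnitude >= 0.5:
        return "moderate"
    return "weak"


# Hypothetical example: an expert top-10 list and the order in which
# one search engine ranks the same ten entities.
expert = [1, 2, 3, 4, 5, 6, 7, 8, 9, 10]
engine = [2, 1, 4, 3, 5, 7, 6, 10, 8, 9]

rho = spearman_rho(expert, engine)
tau = kendall_tau(expert, engine)
print(f"rho = {rho:.3f} ({strength(rho)}), tau = {tau:.3f} ({strength(tau)})")
```

In practice a statistics library would normally be used instead, since it also handles ties and reports the p‑value needed for the 0.05 significance threshold.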

The authors also examined inter‑search‑engine consistency by comparing the rankings produced by Google, Live Search and Yahoo for the same queries. Out of 42 such pairwise comparisons, only two strong and four moderate correlations were significant. That is 6 of 42 (about 14%), compared with 5 of 57 (about 9%) for the expert comparisons, so the three engines agree with each other only slightly more often than they agree with expert judgments.
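A pairwise comparison of this kind can be sketched as follows. The rankings in `engine_ranks` are hypothetical, five entities are used only to keep the example short, and Spearman's ρ (without ties) stands in for whichever coefficient is being reported.

```python
from itertools import combinations


def spearman_rho(x, y):
    """Spearman's rho for two rankings of the same items, assuming no ties."""
    n = len(x)
    d_squared = sum((a - b) ** 2 for a, b in zip(x, y))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))


# Hypothetical ranks that each engine assigns to the same five entities.
engine_ranks = {
    "Google": [1, 2, 3, 4, 5],
    "Live Search": [2, 1, 3, 5, 4],
    "Yahoo": [1, 3, 2, 4, 5],
}

# Three engines give three unordered pairs per list.
pairwise = {
    (a, b): spearman_rho(engine_ranks[a], engine_ranks[b])
    for a, b in combinations(engine_ranks, 2)
}

for (a, b), rho in pairwise.items():
    print(f"{a} vs. {b}: rho = {rho:.2f}")
```

Each list therefore contributes three engine‑to‑engine coefficients, which are then screened for strength and significance in the same way as the expert comparisons.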

A clear pattern emerged concerning list size: all 3 strong correlations were observed in the 10‑item lists, all 8 moderate correlations appeared in the 25‑item lists, and no significant correlation was found for the 50‑item lists. This suggests that search engines approximate expert opinion, at best, only for the very top results, where algorithmic emphasis on authority, link structure and relevance is presumably strongest. As the list expands, additional factors such as search‑engine optimization tactics, temporal freshness, regional personalization and the sheer diversity of web content may dilute any alignment with expert assessments.
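The counts behind this pattern can be cross‑checked with a few lines of arithmetic. The numbers below are the ones reported in the abstract; the dictionary layout and the helper name `significant_share` are illustrative assumptions.

```python
# Significant correlations per comparison type, as reported in the abstract.
comparisons = {
    "expert vs. engine": {"total": 57, "strong": 1, "moderate": 4},
    "engine vs. engine": {"total": 42, "strong": 2, "moderate": 4},
}

# The same significant correlations broken down by list length.
by_list_size = {
    10: {"strong": 3, "moderate": 0},
    25: {"strong": 0, "moderate": 8},
    50: {"strong": 0, "moderate": 0},
}


def significant_share(row):
    """Fraction of comparisons with a significant strong or moderate result."""
    return (row["strong"] + row["moderate"]) / row["total"]


shares = {name: significant_share(row) for name, row in comparisons.items()}

# Both breakdowns should account for the same correlations.
total_strong = sum(row["strong"] for row in comparisons.values())
total_moderate = sum(row["moderate"] for row in comparisons.values())

for name, share in shares.items():
    print(f"{name}: {share:.1%} of comparisons significant")
print(f"strong: {total_strong}, moderate: {total_moderate}")
```

Both breakdowns add up to 3 strong and 8 moderate correlations out of 99 comparisons in total.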

The paper discusses several limitations. First, expert rankings themselves are subjective and may incorporate criteria (e.g., faculty reputation, athletic performance) that are not directly reflected in web‑presence signals. Second, mapping entities to URLs can be ambiguous; institutions often maintain multiple domains or sub‑sites, potentially introducing noise into the ranking comparison. Third, the experiments were conducted at a single point in time, while Microsoft's engine was still called Live Search (it was rebranded as Bing in 2009), so the findings may not generalize to later algorithm updates or to search engines that have since changed their ranking mechanisms. Fourth, the study does not account for personalized or localized search results, which could affect the observed rankings for individual users.

In conclusion, the empirical evidence indicates that expert‑derived rankings of real‑world entities have only a limited and fragile correspondence with the rankings that major search engines assign to the associated web resources. While search engines may capture some signals of authority for the very highest‑ranked items, the broader alignment is weak, especially as the list length grows. A high search‑engine position is therefore no guarantee of agreement with expert consensus, and users seeking authoritative information should not rely solely on search‑engine order but should also consult domain‑specific expert evaluations. Future work could extend the analysis across time, incorporate additional search platforms (including social media and vertical search engines), and explore more sophisticated techniques for matching entities to their web resources, to better understand the interplay between expert opinion and algorithmic ranking.