Time-frequency representations of audio signals often resemble texture images. This paper derives a simple audio classification algorithm based on treating sound spectrograms as texture images. The algorithm is inspired by an earlier visual classification scheme particularly efficient at classifying textures. While solely based on time-frequency texture features, the algorithm achieves surprisingly good performance in musical instrument classification experiments.

Deep Dive into Audio Classification from Time-Frequency Texture.


With the increasing use of multimedia data, the need for automatic audio signal classification has become an important issue. Applications such as audio data retrieval and audio file management have grown in importance [1,16].

Finding appropriate features is at the heart of pattern recognition. For audio classification, considerable effort has been dedicated to investigating relevant features of diverse types. Temporal features such as temporal centroid, auto-correlation [11,2] and zero-crossing rate characterize the waveforms in the time domain. Spectral features such as spectral centroid, width, skewness, kurtosis and flatness are statistical moments obtained from the spectrum [11,12]. MFCCs (mel-frequency cepstral coefficients), derived from the cepstrum, represent the shape of the spectrum with a few coefficients [13]. Energy descriptors such as total energy, sub-band energy, harmonic energy and noise energy [11,12] measure various aspects of signal power. Harmonic features, including fundamental frequency, noisiness and inharmonicity [4,11], reveal the harmonic properties of the sounds. Perceptual features such as loudness, sharpness and spread incorporate the human hearing process [20,10] to describe the sounds. Furthermore, feature combination and selection have been shown to improve classification performance [5].
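Several of the temporal and spectral features listed above reduce to a few lines of array code. The sketch below is an illustration only: it treats the normalized magnitude spectrum of a whole signal as a probability distribution over frequency, whereas the cited works may use power spectra, frame-wise analysis or other normalizations. The function names `zero_crossing_rate` and `spectral_moments` are hypothetical.

```python
import numpy as np


def zero_crossing_rate(x):
    """Fraction of consecutive sample pairs whose signs differ."""
    signs = np.signbit(np.asarray(x, dtype=float))
    return float(np.mean(signs[1:] != signs[:-1]))


def spectral_moments(x, sr):
    """Spectral shape statistics of a non-silent signal x sampled at sr Hz.

    Assumption: the normalized magnitude spectrum is used as a
    distribution over frequency (other normalizations are possible).
    """
    mag = np.abs(np.fft.rfft(x))
    freqs = np.fft.rfftfreq(len(x), d=1.0 / sr)
    p = mag / mag.sum()                                   # weights summing to 1
    centroid = np.sum(freqs * p)                          # 1st moment
    width = np.sqrt(np.sum((freqs - centroid) ** 2 * p))  # spread (std. dev.)
    skewness = np.sum((freqs - centroid) ** 3 * p) / width ** 3
    kurtosis = np.sum((freqs - centroid) ** 4 * p) / width ** 4
    # Flatness: geometric mean over arithmetic mean of the power spectrum
    power = mag ** 2 + 1e-12                              # avoid log(0)
    flatness = np.exp(np.mean(np.log(power))) / np.mean(power)
    return {"centroid": centroid, "width": width, "skewness": skewness,
            "kurtosis": kurtosis, "flatness": flatness}
```

For a pure 440 Hz tone the centroid sits at 440 Hz and the width is close to zero, while the zero-crossing rate is about 2 × 440 crossings per second divided by the sampling rate.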

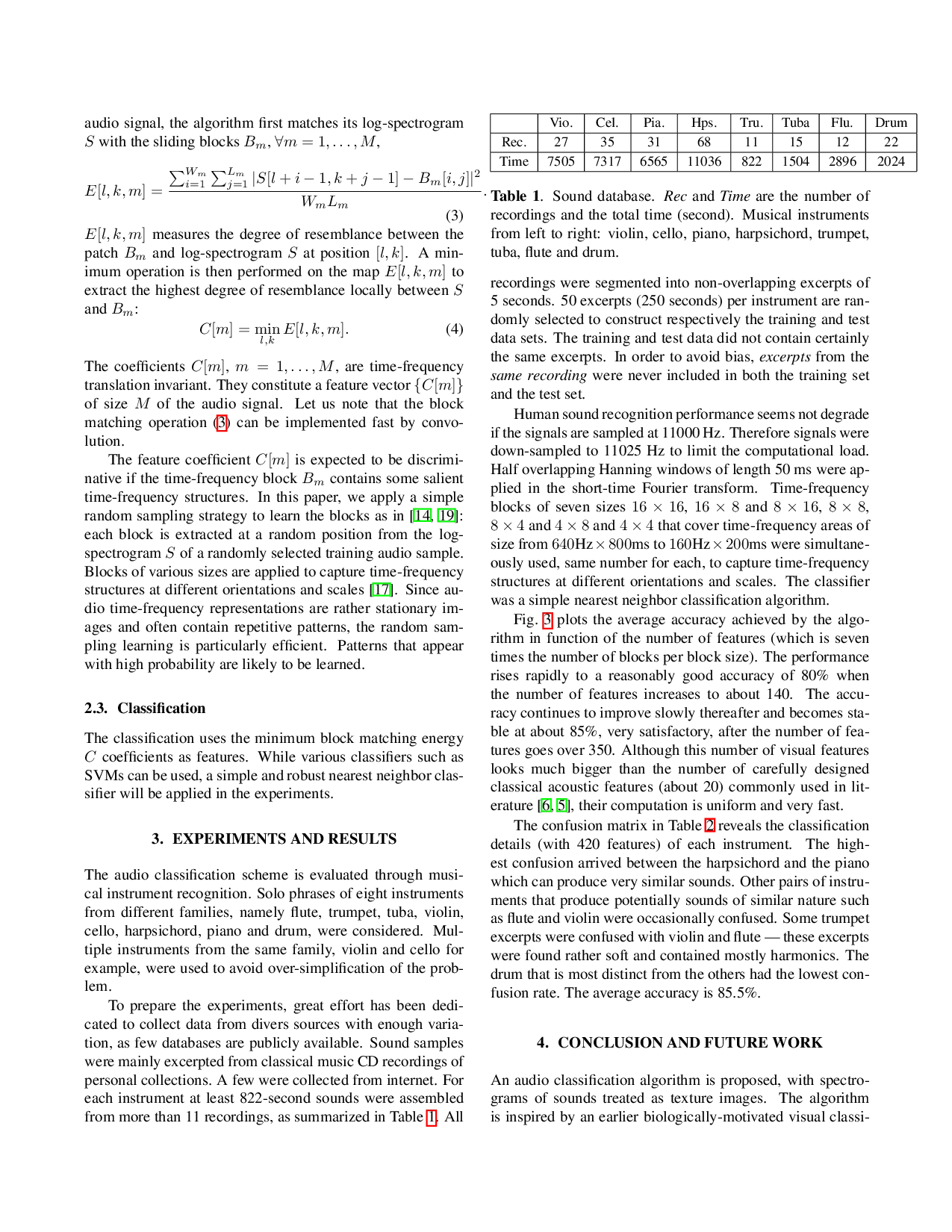

While most previously studied features have an acoustic motivation, audio signals, viewed through their time-frequency representations, often present interesting patterns in the visual domain. Fig. 2 shows the spectrograms (short-time Fourier representations) of solo phrases of eight musical instruments. Specific patterns recur in the spectrogram of a given instrument, reflecting in part the physics of sound generation. By contrast, the spectrograms of different instruments look like different textures and can easily be distinguished from one another. One may thus expect to classify audio signals in the visual domain by treating their time-frequency representations as texture images.
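The idea of looking at a spectrogram as an image can be made concrete with a short sketch: frame the signal, take magnitude spectra, compress the dynamic range with a logarithm, and rescale to a grayscale image in [0, 1]. The window length K = 256, the hop u = 128, the use of `log1p` and the name `spectrogram_image` are illustrative assumptions, not settings taken from the paper.

```python
import numpy as np


def spectrogram_image(x, K=256, u=128):
    """Log-magnitude spectrogram rescaled to a [0, 1] grayscale image.

    Rows are frequency bins (highest frequency in row 0), columns are frames.
    """
    x = np.asarray(x, dtype=float)
    if len(x) < K:
        raise ValueError("signal is shorter than one window")
    w = np.hanning(K)
    n_frames = 1 + (len(x) - K) // u           # only complete frames
    frames = np.stack([x[l * u:l * u + K] * w for l in range(n_frames)])
    mag = np.abs(np.fft.rfft(frames, axis=1)).T  # (K // 2 + 1, n_frames)
    log_mag = np.log1p(mag)                      # compress dynamic range
    lo, hi = log_mag.min(), log_mag.max()
    img = (log_mag - lo) / (hi - lo) if hi > lo else np.zeros_like(log_mag)
    return img[::-1]  # low frequencies at the bottom when displayed
```

The resulting array can be handled with any image-processing tool, which is the premise of the texture-based approach.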

In the literature, little attention seems to have been paid to audio classification in the visual domain. To our knowledge, the only work of this kind is that of Deshpande and his colleagues [3]. To classify music into three categories (rock, classical, jazz), they treat the spectrograms and MFCCs of the sounds as visual patterns. However, the recursive filtering algorithm they apply does not seem to fully capture the texture-like properties of the time-frequency representation of audio signals, which limits performance.

In this paper, we investigate an audio classification algorithm that operates purely in the visual domain, with time-frequency representations of audio signals considered as texture images. Inspired by the recent biologically motivated work on object recognition by Poggio, Serre and their colleagues [14], and more specifically by its variant [19], which has been shown to be particularly efficient for texture classification, we propose a simple feature extraction scheme based on time-frequency block matching (time-frequency blocks have previously proven effective in audio processing [17,18]). Despite its simplicity, the proposed algorithm, relying only on visual texture features, achieves surprisingly good performance in musical instrument classification experiments.

The idea of treating instrument timbres just as one would treat visual textures is consistent with basic results in neuroscience, which emphasize the cortex’s anatomical uniformity [9,7] and its functional plasticity, demonstrated experimentally for the visual and auditory domains in [15]. From that point of view it is not particularly surprising that some common algorithms may be used in both vision and audition, particularly as the cochlea generates a (highly redundant) time-frequency representation of sound.

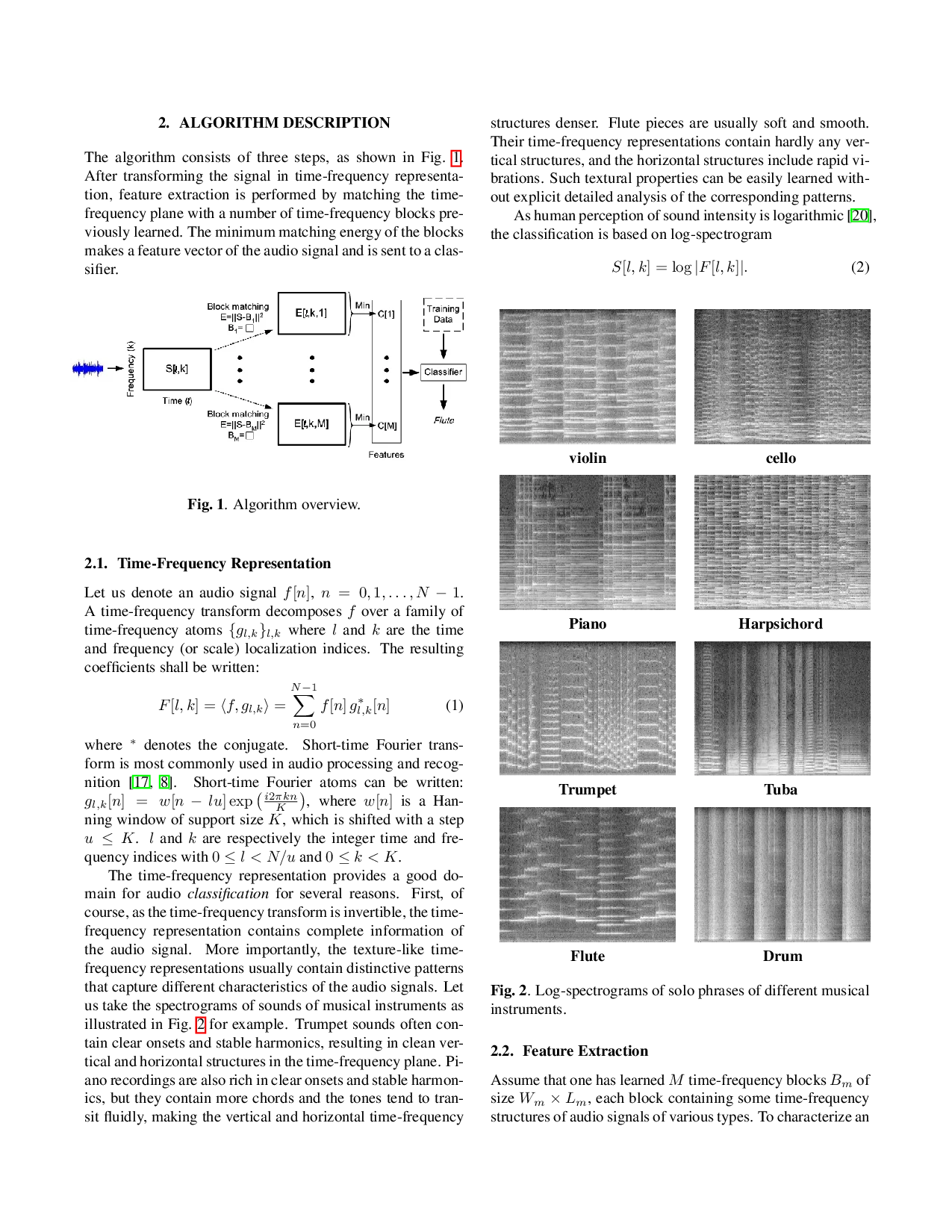

The algorithm consists of three steps, as shown in Fig. 1. After the signal is transformed into a time-frequency representation, feature extraction is performed by matching the time-frequency plane with a number of previously learned time-frequency blocks. The minimum matching energies of the blocks form the feature vector of the audio signal, which is then sent to a classifier.
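The three steps can be sketched as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the time-frequency representation is a 2-D array, block "learning" is replaced by a hypothetical random-crop helper `sample_blocks`, matching energy is assumed to be the squared Euclidean distance between a block and a same-sized patch, and the classifier is a hypothetical 1-nearest-neighbor stand-in `nearest_neighbor_predict`.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view


def sample_blocks(images, n_blocks, block_shape, rng):
    """Hypothetical block 'learning': crop blocks at random positions of
    randomly chosen training spectrograms (an assumption, for illustration)."""
    bh, bw = block_shape
    blocks = []
    for _ in range(n_blocks):
        img = images[rng.integers(len(images))]
        r = rng.integers(img.shape[0] - bh + 1)
        c = rng.integers(img.shape[1] - bw + 1)
        blocks.append(img[r:r + bh, c:c + bw].copy())
    return blocks


def min_matching_energy(image, blocks):
    """Feature j = minimum over all positions of ||patch - block_j||^2."""
    feats = np.empty(len(blocks))
    for j, b in enumerate(blocks):
        # All patches of the block's size: shape (H-bh+1, W-bw+1, bh, bw)
        patches = sliding_window_view(image, b.shape)
        energy = np.sum((patches - b) ** 2, axis=(-2, -1))
        feats[j] = energy.min()
    return feats


def nearest_neighbor_predict(train_feats, train_labels, feats):
    """Stand-in classifier: label of the closest training feature vector."""
    d = np.sum((np.asarray(train_feats) - feats) ** 2, axis=1)
    return train_labels[int(np.argmin(d))]
```

Taking the minimum over all positions makes each feature insensitive to where in the time-frequency plane a pattern occurs; for example, a block cropped from a spectrogram yields a feature of exactly 0 on that spectrogram.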

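A time-frequency transform decomposes an audio signal f of N samples over a family of time-frequency atoms {φ_γ}:

$$Tf(\gamma) = \langle f, \phi_\gamma \rangle = \sum_{n=0}^{N-1} f[n]\, \phi_\gamma^*[n],$$

where * denotes the complex conjugate. The short-time Fourier transform is the time-frequency transform most commonly used in audio processing and recognition [17,8]. Short-time Fourier atoms can be written:

$$\phi_{l,k}[n] = w[n - lu] \exp\left(\frac{i 2\pi k n}{K}\right),$$

where w[n] is a Hanning window of support size K, shifted with a step u ≤ K; l and k are respectively the integer time and frequency indices, with 0 ≤ l < N/u and 0 ≤ k < K.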
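To connect these definitions with computation, here is a minimal sketch that evaluates the short-time Fourier transform literally as inner products with its atoms. It assumes atoms of the form φ_{l,k}[n] = w[n − lu] exp(i2πkn/K), uses NumPy's symmetric `np.hanning` as the Hanning window, and simply truncates atoms at the signal boundary; the function name `stft_atoms` is hypothetical.

```python
import numpy as np


def stft_atoms(f, K, u):
    """Short-time Fourier coefficients computed directly from the atoms
    phi_{l,k}[n] = w[n - l*u] * exp(i*2*pi*k*n/K), i.e.
    Tf(l, k) = sum_n f[n] * conj(phi_{l,k}[n])."""
    if not 0 < u <= K:
        raise ValueError("the step u must satisfy 0 < u <= K")
    f = np.asarray(f, dtype=float)
    N = len(f)
    n = np.arange(N)
    w = np.hanning(K)                  # Hann window of support K
    n_frames = -(-N // u)              # number of l with 0 <= l < N/u
    k = np.arange(K)
    # Conjugated complex exponentials, one row per frequency index k: (K, N)
    E = np.exp(-2j * np.pi * np.outer(k, n) / K)
    T = np.empty((n_frames, K), dtype=complex)
    for l in range(n_frames):
        m = n - l * u                  # position inside the shifted window
        inside = (m >= 0) & (m < K)
        win = np.zeros(N)
        win[inside] = w[m[inside]]     # w[n - l*u], zero outside its support
        T[l] = E @ (f * win)           # window is real: conj only affects exp
    return T
```

Because the exponential is indexed by absolute time n rather than by the position inside the window, each frame equals the FFT of the windowed segment up to a phase factor exp(−i2πklu/K); the magnitudes |Tf(l, k)|, which form the spectrogram, are unaffected. In practice one would compute the same coefficients with an FFT per frame instead of this O(NK)-per-frame matrix product.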

The time-frequency representation provides a good domain for audio classification for several reasons. First, as the time-frequency transform is invertible, the time-frequency representation contains complete information about the audio signal. More importantly, the texture-like time-frequency representations usually contain distinctive patterns that capture different characteristics of t

…(Full text truncated)…

This content is AI-processed based on ArXiv data.