A Note on the Equivalence of Gibbs Free Energy and Information Theoretic Capacity

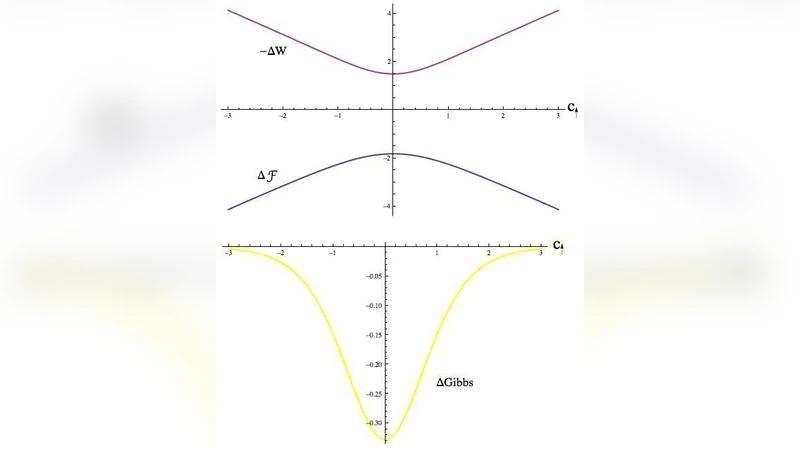

The minimization of Gibbs free energy is based on the changes in work and free energy that occur in a physical or chemical system. The maximization of the mutual information of a noisy channel, that is, its capacity, is determined by the marginal probabilities and conditional entropies associated with a communications system. As different as the two procedures might first appear, an exploration of a simple, “dual use” Ising model shows that the two concepts are in fact the same. In particular, the case of a binary symmetric channel is calculated in detail.

💡 Research Summary

The paper establishes a rigorous equivalence between two seemingly disparate optimization principles: the minimization of Gibbs free energy in thermodynamics and the maximization of mutual information (channel capacity) in information theory. The authors achieve this by mapping a simple Ising spin system onto a binary symmetric communication channel (BSC), thereby providing a concrete physical model that simultaneously embodies both concepts.
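As a point of reference for the channel side of the mapping, the standard result for a BSC with crossover probability \(p\) is \(C = 1 - H_2(p)\) bits, attained by a uniform input. The following Python sketch (function names are hypothetical, not taken from the paper) recovers this by brute-force maximization of \(I(X;Y) = H(Y) - H(Y \mid X)\) over the input prior \(q = P(X=1)\) on a uniform grid.

```python
import math

def h2(p: float) -> float:
    """Binary entropy in bits, with H2(0) = H2(1) = 0 by convention."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -p * math.log2(p) - (1.0 - p) * math.log2(1.0 - p)

def bsc_mutual_information(q: float, p: float) -> float:
    """I(X;Y) = H(Y) - H(Y|X) for a BSC with crossover p and P(X=1) = q."""
    r = q * (1.0 - p) + (1.0 - q) * p  # output marginal P(Y=1)
    return h2(r) - h2(p)                # H(Y|X) = H2(p) for either input symbol

def bsc_capacity(p: float, grid: int = 10001) -> tuple[float, float]:
    """Grid search over input priors q; returns (C, argmax q)."""
    best_i, best_q = -math.inf, 0.0
    for k in range(grid):
        q = k / (grid - 1)
        i = bsc_mutual_information(q, p)
        if i > best_i:
            best_i, best_q = i, q
    return best_i, best_q

if __name__ == "__main__":
    for p in (0.0, 0.1, 0.25):
        c, q = bsc_capacity(p)
        print(f"p={p:.2f}  C={c:.4f}  q*={q:.3f}  1-H2(p)={1 - h2(p):.4f}")
```

The grid search is deliberately naive; it only serves to confirm numerically that the maximizing prior is uniform and that the maximum matches the closed form.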

The exposition begins with a brief review of the Gibbs free energy, \(F = U - TS\), and its statistical‑mechanical representation \(F = -k_{B}T\ln Z\), where \(Z\) is the partition function. In parallel, the information‑theoretic definition of channel capacity, \(C = \max_{p(x)} I(X;Y)\), is introduced, with the mutual information expressed as the difference between the output entropy and the conditional entropy, \(I(X;Y) = H(Y) - H(Y \mid X)\). The authors note that both problems can be cast as variational optimizations involving a Lagrange multiplier: \(\beta = 1/(k_{B}T)\) in the thermodynamic case and an analogous “price” parameter in the information‑theoretic case.
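The thermodynamic half of this variational picture can be checked numerically: minimizing \(U - TS\) over all probability distributions on the states yields the Boltzmann distribution, and the minimum equals \(-k_{B}T\ln Z\). This is a minimal sketch under illustrative assumptions not taken from the paper: units with \(k_{B} = 1\) (so \(\beta = 1/T\)), a single spin in a field \(h\) with energies \(E(\pm 1) = \mp h\), and a grid search standing in for an analytic Lagrange-multiplier solution. Function names are hypothetical.

```python
import math

def free_energy_functional(q: float, energies: tuple[float, float], T: float) -> float:
    """U - T*S for the two-state distribution (q, 1 - q); k_B = 1, S in nats."""
    e_up, e_down = energies
    u = q * e_up + (1.0 - q) * e_down                            # mean energy U
    s = -sum(p * math.log(p) for p in (q, 1.0 - q) if p > 0.0)   # entropy S
    return u - T * s

def closed_form_free_energy(energies: tuple[float, float], T: float) -> float:
    """F = -T ln Z with Z = sum_i exp(-E_i / T)."""
    z = sum(math.exp(-e / T) for e in energies)
    return -T * math.log(z)

def minimize_functional(energies, T, grid=100001):
    """Grid search over q = P(s = +1); returns (min of U - TS, argmin q)."""
    best_f, best_q = math.inf, 0.0
    for k in range(grid):
        q = k / (grid - 1)
        f = free_energy_functional(q, energies, T)
        if f < best_f:
            best_f, best_q = f, q
    return best_f, best_q

if __name__ == "__main__":
    h, T = 0.7, 1.3
    energies = (-h, h)  # E(+1) = -h, E(-1) = +h
    f_min, q_min = minimize_functional(energies, T)
    boltzmann_q = math.exp(h / T) / (math.exp(h / T) + math.exp(-h / T))
    print(f"variational min  F={f_min:.8f}  at q={q_min:.5f}")
    print(f"closed form      F={closed_form_free_energy(energies, T):.8f}  "
          f"Boltzmann q={boltzmann_q:.5f}")
```

The minimizing \(q\) should agree with the Boltzmann probability \(e^{\beta h}/(e^{\beta h} + e^{-\beta h})\) to within the grid spacing.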

To build the bridge, the paper adopts a one‑dimensional Ising model with spins \(s_i \in \{-1,+1\}\) interacting via a coupling constant \(J\) and subject to an external field \(h\). The Hamiltonian is written as

\[ \mathcal{H} = -J\sum_{i} s_i s_{i+1} - h\sum_{i} s_i \]
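One standard way to evaluate \(Z\), and hence \(F = -k_{B}T\ln Z\), for such a chain is the transfer-matrix method. The sketch assumes the usual nearest-neighbour form \(\mathcal{H} = -J\sum_i s_i s_{i+1} - h\sum_i s_i\) with periodic boundary conditions \(s_{N+1} = s_1\) and \(k_{B} = 1\); these choices, like the function names, are illustrative and may differ from the paper's conventions. It compares the closed form \(Z = \lambda_+^N + \lambda_-^N\), with \(\lambda_\pm = e^{\beta J}\cosh\beta h \pm \sqrt{e^{2\beta J}\sinh^2\beta h + e^{-2\beta J}}\), against brute-force enumeration of all \(2^N\) configurations.

```python
import itertools
import math

def brute_force_z(n: int, J: float, h: float, beta: float) -> float:
    """Sum exp(-beta * H) over all 2**n spin configurations of a periodic chain."""
    z = 0.0
    for spins in itertools.product((-1, 1), repeat=n):
        bond = sum(spins[i] * spins[(i + 1) % n] for i in range(n))  # sum_i s_i s_{i+1}
        energy = -J * bond - h * sum(spins)
        z += math.exp(-beta * energy)
    return z

def transfer_matrix_z(n: int, J: float, h: float, beta: float) -> float:
    """Z = Tr(T^n) = lambda_+^n + lambda_-^n for the 2x2 transfer matrix
    T[s, s'] = exp(beta * (J s s' + h (s + s') / 2))."""
    a = math.exp(beta * J) * math.cosh(beta * h)
    root = math.sqrt(math.exp(2 * beta * J) * math.sinh(beta * h) ** 2
                     + math.exp(-2 * beta * J))
    lam_plus, lam_minus = a + root, a - root
    return lam_plus ** n + lam_minus ** n

def free_energy(n: int, J: float, h: float, beta: float) -> float:
    """F = -(1/beta) ln Z, i.e. -k_B T ln Z with k_B = 1."""
    return -math.log(transfer_matrix_z(n, J, h, beta)) / beta

if __name__ == "__main__":
    J, h, beta = 1.0, 0.3, 0.7
    for n in (2, 4, 8):
        zb = brute_force_z(n, J, h, beta)
        zt = transfer_matrix_z(n, J, h, beta)
        print(f"N={n}  brute={zb:.10g}  transfer={zt:.10g}  F={free_energy(n, J, h, beta):.6f}")
```

For large \(N\) the \(\lambda_+^N\) term dominates, so the free energy per spin tends to \(-k_{B}T\ln\lambda_+\).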
