Bin Packing Under Multiple Objectives - a Heuristic Approximation Approach

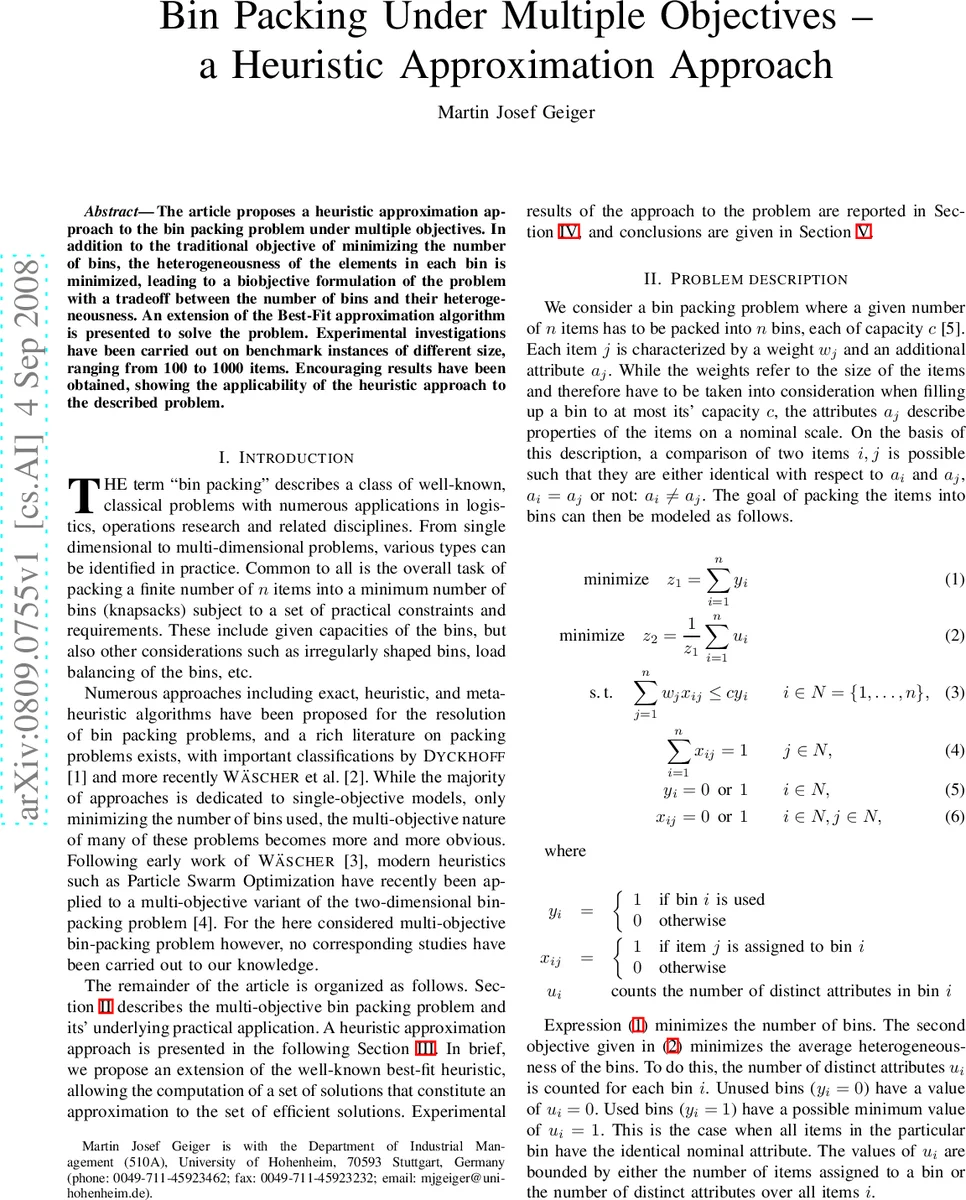

The article proposes a heuristic approximation approach to the bin packing problem under multiple objectives. In addition to the traditional objective of minimizing the number of bins, the heterogeneousness of the elements in each bin is minimized, leading to a biobjective formulation of the problem with a tradeoff between the number of bins and their heterogeneousness. An extension of the Best-Fit approximation algorithm is presented to solve the problem. Experimental investigations have been carried out on benchmark instances of different size, ranging from 100 to 1000 items. Encouraging results have been obtained, showing the applicability of the heuristic approach to the described problem.

💡 Research Summary

The paper addresses a bi‑objective version of the classic bin‑packing problem, where the conventional goal of minimizing the number of used bins (z₁) is complemented by a second objective that minimizes the average heterogeneity of the bins (z₂). Heterogeneity is defined as the number of distinct nominal attributes present in a bin; each item carries a weight wⱼ and an attribute aⱼ drawn from a small set (five in the experiments). The two objectives are naturally conflicting: using many bins allows each bin to be almost homogeneous (low z₂) but increases z₁, while packing tightly reduces z₁ but forces heterogeneous mixes, raising z₂. Consequently, the problem is formulated as a vector optimization task and the aim is to approximate the Pareto‑optimal set P.
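The two objectives can be made concrete with a small evaluation routine. The following is a minimal sketch, not the authors' code: the names `Item` and `evaluate` and the representation of a packing as a list of bins are assumptions made for illustration.

```python
from typing import List, Tuple

Item = Tuple[int, str]  # (weight w_j, nominal attribute a_j); hypothetical representation


def evaluate(bins: List[List[Item]]) -> Tuple[int, float]:
    """Return (z1, z2): number of used bins and average bin heterogeneity."""
    used = [b for b in bins if b]  # empty bins do not count as used
    z1 = len(used)
    if z1 == 0:
        return 0, 0.0
    # Heterogeneity of a bin = number of distinct attributes it contains.
    z2 = sum(len({attr for _, attr in b}) for b in used) / z1
    return z1, z2


# Same four items, packed tightly and packed homogeneously (capacity 1000).
tight = [[(600, "A"), (400, "B")], [(500, "C"), (500, "A")]]
loose = [[(600, "A")], [(400, "B")], [(500, "C")], [(500, "A")]]
print(evaluate(tight))  # (2, 2.0)
print(evaluate(loose))  # (4, 1.0)
```

The two packings illustrate the conflict: the tight packing uses fewer bins but mixes attributes, while the loose one keeps every bin homogeneous at the cost of more bins.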

To tackle this, the authors extend the well‑known Best‑Fit heuristic. Standard Best‑Fit assigns each incoming item to the bin with the smallest remaining capacity that can still accommodate the item’s weight, ignoring any attribute considerations. The proposed “Multi‑objective Best‑Fit” introduces a controllable heterogeneity bound uₘₐₓ, derived from a control value u. Starting from u = 1 (only perfectly homogeneous bins are allowed), the algorithm relaxes u in steps of size s (set to 0.1 in the experiments) until it reaches the maximum possible heterogeneity, i.e. the total number of distinct attributes. For each value of u, the algorithm performs m independent stochastic runs (m = 100). In each run, for every item the algorithm randomly sets uₘₐₓ to either ⌊u⌋ or ⌈u⌉, with probabilities determined by the fractional part of u, and then selects among the feasible bins (those with sufficient residual capacity whose heterogeneity does not exceed uₘₐₓ) the one with the smallest residual capacity. This is exactly the Best‑Fit rule, but constrained by attribute diversity. After all items are placed, the resulting solution x yields a pair (z₁, z₂). The algorithm maintains an archive Pₐₚₚᵣₒₓ of non‑dominated solutions: a newly generated solution is added only if no archived solution dominates it, and any archived solutions it dominates are removed. This process repeats for all values of u, producing a set of solutions that approximates the true Pareto front.
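The procedure described above can be sketched roughly as follows. This is an illustrative reading of the summary, not the authors' implementation. Several details are assumptions: ⌈u⌉ is chosen with probability equal to the fractional part of u, a bin counts as feasible if its heterogeneity after adding the item stays within uₘₐₓ, and every item fits into an empty bin. All function names are hypothetical.

```python
import math
import random


def constrained_best_fit(items, capacity, u, rng):
    """One stochastic pass; items are (weight, attribute) pairs in processing order."""
    bins = []  # each bin: {"free": remaining capacity, "attrs": set of attributes}
    frac = u - math.floor(u)
    for w, a in items:
        # Randomized rounding of u (assumption: ceil(u) with probability frac).
        u_max = math.ceil(u) if rng.random() < frac else math.floor(u)
        best = None
        for b in bins:
            if b["free"] < w or len(b["attrs"] | {a}) > u_max:
                continue  # violates capacity or the heterogeneity bound
            if best is None or b["free"] < best["free"]:
                best = b  # Best-Fit: smallest residual capacity wins
        if best is None:  # no feasible bin, so open a new one
            best = {"free": capacity, "attrs": set()}
            bins.append(best)
        best["free"] -= w
        best["attrs"].add(a)
    if not bins:
        return 0, 0.0
    return len(bins), sum(len(b["attrs"]) for b in bins) / len(bins)


def dominates(p, q):
    """p dominates q when it is no worse in both objectives and differs from q."""
    return p[0] <= q[0] and p[1] <= q[1] and p != q


def update_archive(archive, z):
    """Keep only mutually non-dominated objective vectors."""
    if any(p == z or dominates(p, z) for p in archive):
        return archive
    return [p for p in archive if not dominates(z, p)] + [z]


def approximate_front(items, capacity, n_attrs, s=0.1, m=100, seed=0):
    rng = random.Random(seed)
    archive = []
    for k in range(round((n_attrs - 1) / s) + 1):
        u = round(1 + k * s, 9)  # rounding avoids floating-point drift in u
        for _ in range(m):
            z = constrained_best_fit(items, capacity, u, rng)
            archive = update_archive(archive, z)
    return sorted(archive)


demo_items = sorted(
    [(600, "A"), (400, "B"), (500, "C"), (500, "A"), (300, "B"), (700, "C")],
    reverse=True,  # decreasing weight order
)
front = approximate_front(demo_items, capacity=1000, n_attrs=3, s=0.5, m=20, seed=1)
print(front)
```

The archive stores only objective vectors here to keep the sketch short; a fuller version would store the packings themselves alongside them.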

The experimental study uses four benchmark families with n = 100, 200, 500, 1000 items. Each instance is constructed from n/5 completely filled bins of capacity c = 1000 whose contents are randomly split into item weights. A packing with n/5 bins therefore exists by construction, and since the total weight equals (n/5)·c, n/5 is also the optimal value of z₁. Each item’s attribute is drawn uniformly from five possible values. The authors test three item ordering strategies: decreasing weight, increasing weight, and random order. In addition to the multi‑objective Best‑Fit, a “Random‑Fit” baseline is implemented, which selects a feasible bin uniformly at random (still respecting the current uₘₐₓ). All experiments are run on an Intel Pentium IV 1.8 GHz processor; each run finishes within a few seconds.
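A generator in the spirit of this setup might look like the sketch below. The summary does not give the exact splitting procedure, so splitting every bin into exactly five items at uniformly drawn distinct cut points is an assumption, as are the function name `make_instance` and its parameters.

```python
import random


def make_instance(n, capacity=1000, n_attrs=5, per_bin=5, seed=0):
    """Build n items whose weights fill n // per_bin bins exactly (assumed scheme)."""
    rng = random.Random(seed)
    items = []
    for _ in range(n // per_bin):
        # per_bin - 1 distinct cut points split one full bin into positive weights.
        cuts = sorted(rng.sample(range(1, capacity), per_bin - 1))
        bounds = [0] + cuts + [capacity]
        for lo, hi in zip(bounds, bounds[1:]):
            items.append((hi - lo, rng.randrange(n_attrs)))  # (weight, attribute)
    return items


items = make_instance(100)

# The three processing orders compared in the experiments.
orders = {
    "decreasing": sorted(items, key=lambda it: -it[0]),
    "increasing": sorted(items, key=lambda it: it[0]),
    "random": random.Random(1).sample(items, len(items)),
}
print(len(items), sum(w for w, _ in items))  # 100 20000
```

Because the weights sum to exactly 20 · 1000, no packing of this instance can use fewer than 20 bins, which gives a known reference point for z₁.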

Results show that only a few Pareto‑efficient vectors are found for each instance size. For the smallest case (n = 100), both Best‑Fit and Random‑Fit achieve the best possible heterogeneity (z₂ = 1.000) when items are processed in decreasing weight order, with z₁ = 22 bins (the optimum by construction is 20). Increasing weight order yields worse solutions (z₁ ≈ 25, z₂ ≈ 1.0). As n grows, the impact of item ordering becomes more pronounced. For n = 200 and n = 500, decreasing weight order still produces the most favorable trade‑offs, while increasing order leads to substantially higher z₁ and z₂ values. Random‑Fit occasionally discovers solutions not found by Best‑Fit (e.g., for n = 500, a solution with (z₁, z₂) = (101, 1.911) versus Best‑Fit’s (101, 2.020)), indicating that stochastic exploration adds value. However, in all cases the best z₁ found exceeds the known optimum of n/5 by one or two bins, highlighting the heuristic’s limitation in achieving optimality for the primary objective.
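The comparison for n = 500 can be checked with a short dominance test on the two reported vectors. This is only an illustration of the reasoning; the helper name `dominates` is hypothetical.

```python
def dominates(p, q):
    """p dominates q if it is no worse in both (minimized) objectives and differs from q."""
    return p[0] <= q[0] and p[1] <= q[1] and p != q


random_fit = (101, 1.911)  # (z1, z2) reported for Random-Fit, n = 500
best_fit = (101, 2.020)    # (z1, z2) reported for Best-Fit, n = 500
optimum_z1 = 500 // 5      # n/5 bins by construction of the instances

print(dominates(random_fit, best_fit))  # True
print(random_fit[0] - optimum_z1)       # 1
```

With equal z₁ and a lower z₂, the Random‑Fit vector dominates the Best‑Fit one, and both are one bin above the known optimum of 100.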

The authors conclude that the multi‑objective Best‑Fit heuristic successfully generates a compact approximation of the Pareto front with negligible computational effort, making it suitable as a fast first‑stage method. They note that the algorithm’s performance is highly sensitive to the item processing order, especially for larger instances, and that decreasing weight order is essential for good results. The paper suggests that the obtained approximation set could serve as a high‑quality seed for more sophisticated meta‑heuristics (e.g., evolutionary multi‑objective algorithms) to further refine the Pareto front. Future work may explore extensions to multi‑dimensional attributes, variable bin shapes, or integration with exact methods for tighter bounds.
