A flexible Bayesian method for adaptive measurement in psychophysics

In psychophysical experiments, time and the limited goodwill of participants are usually major constraints. This has been the main motivation behind the early development of adaptive methods for measuring psychometric thresholds. More recently, methods have been developed to measure whole psychometric functions adaptively. Here we describe a Bayesian method for adaptively measuring any aspect of a psychophysical function, taking inspiration from Kontsevich and Tyler’s optimal Bayesian measurement method. Our method is implemented in a complete and easy-to-use MATLAB package.

💡 Research Summary

The paper addresses a central challenge in psychophysical research: the need to obtain precise measurements of sensory functions while constrained by limited experimental time and participant endurance. Traditional adaptive methods, such as staircase or transformed‑up‑down procedures, focus primarily on estimating a single threshold (e.g., the point of 75 % correct performance) and are ill‑suited for mapping an entire psychometric function. Building on the optimal Bayesian measurement framework introduced by Kontsevich and Tyler, the authors develop a flexible Bayesian adaptive algorithm that can target any aspect of a psychometric function—whether the full curve, a specific parameter (slope, lapse rate, asymptote), or a particular stimulus region.

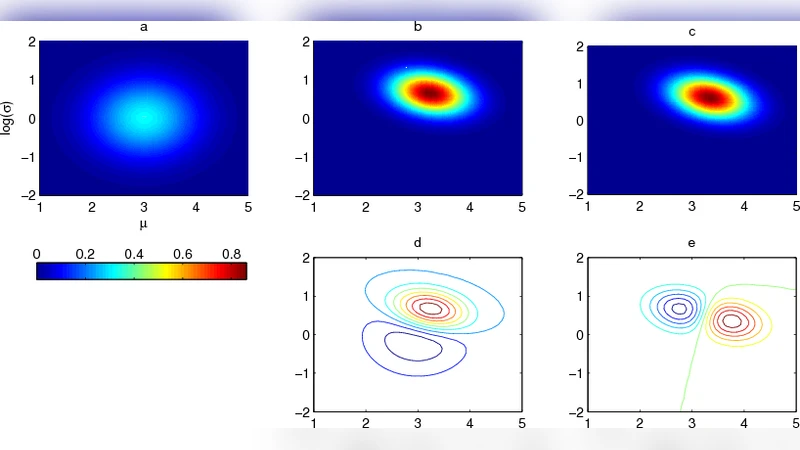

The method proceeds in four logical steps. First, the experimenter selects a parametric form for the psychometric function (e.g., logistic, Weibull, Gaussian) and defines prior distributions over its parameters. These priors may encode expert knowledge, results from pilot studies, or non‑informative assumptions. Second, after each trial the algorithm updates the posterior distribution using Bayes’ rule, thereby quantifying the current uncertainty about each parameter. Third, a set of candidate stimulus intensities is generated, and for each candidate the expected information gain is computed. Information gain is defined as the reduction in posterior entropy that would result, on average, from the possible responses to that stimulus. Finally, the stimulus with maximal expected gain is presented on the next trial, the participant’s binary response is recorded, and the cycle repeats.
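The four steps can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the authors' MATLAB implementation: the two-alternative logistic form, the fixed guess and lapse rates, the parameter grid, and all function names (`logistic_pf`, `update`, `expected_entropy`, `next_stimulus`, `run_simulation`) are hypothetical choices made for the example.

```python
import math
import random

def logistic_pf(x, threshold, slope, guess=0.5, lapse=0.02):
    """P(correct) at intensity x for an assumed 2AFC logistic observer."""
    core = 1.0 / (1.0 + math.exp(-slope * (x - threshold)))
    return guess + (1.0 - guess - lapse) * core

def entropy(probs):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def update(posterior, grid, x, correct):
    """Step 2: Bayes' rule on the parameter grid for one binary response."""
    likes = []
    for threshold, slope in grid:
        p = logistic_pf(x, threshold, slope)
        likes.append(p if correct else 1.0 - p)
    unnorm = [p * l for p, l in zip(posterior, likes)]
    total = sum(unnorm)
    return [u / total for u in unnorm]

def expected_entropy(posterior, grid, x):
    """Step 3: posterior entropy averaged over the two possible responses."""
    p_correct = sum(p * logistic_pf(x, t, s) for p, (t, s) in zip(posterior, grid))
    return (p_correct * entropy(update(posterior, grid, x, True))
            + (1.0 - p_correct) * entropy(update(posterior, grid, x, False)))

def next_stimulus(posterior, grid, candidates):
    """Step 4: minimal expected entropy is maximal expected information gain."""
    return min(candidates, key=lambda x: expected_entropy(posterior, grid, x))

def run_simulation(n_trials=40, true_threshold=0.3, true_slope=6.0, seed=0):
    """Run the adaptive loop against a simulated observer (assumed values)."""
    rng = random.Random(seed)
    thresholds = [i / 10.0 - 1.0 for i in range(21)]  # -1.0 to 1.0
    slopes = [2.0, 4.0, 6.0, 8.0, 10.0]
    grid = [(t, s) for t in thresholds for s in slopes]
    posterior = [1.0 / len(grid)] * len(grid)         # step 1: flat prior
    candidates = thresholds                           # candidate intensities
    for _ in range(n_trials):
        x = next_stimulus(posterior, grid, candidates)
        correct = rng.random() < logistic_pf(x, true_threshold, true_slope)
        posterior = update(posterior, grid, x, correct)
    return grid, posterior
```

Calling `grid, posterior = run_simulation()` returns the final grid posterior, from which a posterior mean or marginal for each parameter can be read off. A grid is used here only because it keeps the example short; it scales poorly with the number of parameters.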

A key innovation is the ability to weight the information‑gain criterion toward any user‑specified aspect of the function. For example, if the researcher is most interested in the slope around a particular intensity range, the algorithm can assign higher weight to reductions in uncertainty of that slope parameter, thereby concentrating trials where they are most informative for the target. This flexibility distinguishes the approach from earlier Bayesian adaptive methods that treat all parameters equally.
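One plausible way to realize such weighting, shown here purely as an assumption and not as the paper's actual criterion, is to score each candidate stimulus by a weighted sum of the expected marginal entropies of the individual parameters. Setting the weights to `(0.0, 1.0)` then treats the threshold as a nuisance parameter and targets the slope alone. The model, grid, and all names below are hypothetical.

```python
import math
from collections import defaultdict

def pf(x, threshold, slope, guess=0.5, lapse=0.02):
    """P(correct) for an assumed 2AFC logistic psychometric function."""
    core = 1.0 / (1.0 + math.exp(-slope * (x - threshold)))
    return guess + (1.0 - guess - lapse) * core

def entropy(probs):
    """Shannon entropy (in nats) of a discrete distribution."""
    return -sum(p * math.log(p) for p in probs if p > 0.0)

def update(posterior, grid, x, correct):
    """Bayes' rule on the (threshold, slope) grid for one binary response."""
    likes = [pf(x, t, s) if correct else 1.0 - pf(x, t, s) for t, s in grid]
    unnorm = [p * l for p, l in zip(posterior, likes)]
    total = sum(unnorm)
    return [u / total for u in unnorm]

def marginal(posterior, grid, index):
    """Marginal distribution of one parameter (0 = threshold, 1 = slope)."""
    acc = defaultdict(float)
    for p, params in zip(posterior, grid):
        acc[params[index]] += p
    return list(acc.values())

def weighted_entropy(posterior, grid, weights):
    """Weighted sum of marginal entropies; weights express what matters."""
    return sum(w * entropy(marginal(posterior, grid, i))
               for i, w in enumerate(weights))

def expected_cost(posterior, grid, x, weights):
    """Weighted entropy averaged over the two possible responses to x."""
    p_correct = sum(p * pf(x, t, s) for p, (t, s) in zip(posterior, grid))
    cost = 0.0
    for correct, p_resp in ((True, p_correct), (False, 1.0 - p_correct)):
        cost += p_resp * weighted_entropy(update(posterior, grid, x, correct),
                                          grid, weights)
    return cost

def choose_stimulus(posterior, grid, candidates, weights):
    """Pick the candidate that minimizes the expected weighted entropy."""
    return min(candidates,
               key=lambda x: expected_cost(posterior, grid, x, weights))
```

For example, `choose_stimulus(prior, grid, candidates, (0.0, 1.0))` selects the intensity expected to be most informative about the slope, while `(1.0, 0.0)` targets the threshold. Equal weights on marginal entropies are not identical to minimizing the joint entropy, so this sketch should not be read as reproducing the unweighted criterion.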

The authors implemented the algorithm as a comprehensive MATLAB toolbox. The toolbox offers both a graphical user interface and a script‑based API, allowing users to specify function forms, priors, stimulus bounds, and stopping criteria. During an experiment the toolbox visualizes the evolving posterior, the current best‑fit psychometric curve, and the selected stimulus sequence in real time. Its modular architecture permits easy addition of new psychometric models or custom priors without altering the core adaptive engine.

Performance was evaluated through simulations and two human‑subject experiments. In simulation, the Bayesian adaptive method achieved the same parameter‑estimation accuracy as traditional methods with roughly 30–50 % fewer trials. In the human experiments (each comprising 50–100 trials), the full psychometric function was reliably recovered, and participants reported lower fatigue compared with fixed‑stimulus designs. These results demonstrate that the method can dramatically increase data efficiency while preserving or improving measurement fidelity.

The paper also discusses limitations. Computing expected information gain requires integrating over the posterior predictive distribution, which becomes computationally intensive in high‑dimensional parameter spaces. The authors suggest possible remedies such as variational approximations, Monte‑Carlo sampling acceleration, or parallel processing. Additionally, the choice of prior can influence convergence; poorly chosen priors may bias estimates, underscoring the need for careful prior elicitation or empirical Bayes techniques.
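To make the cost concrete, the quantity being minimized can be written as follows. The notation is assumed for illustration and is not taken from the paper: $\theta$ denotes the parameter vector, $x$ a candidate stimulus, $r \in \{0, 1\}$ the binary response, and $H$ the Shannon entropy.

$$
\mathbb{E}\left[H \mid x\right] = \sum_{r \in \{0,1\}} p(r \mid x)\, H\!\left[p(\theta \mid r, x)\right],
\qquad
p(r \mid x) = \int p(r \mid x, \theta)\, p(\theta)\, d\theta
$$

If the integral is approximated on a grid with $n$ points per parameter and $d$ parameters, each candidate stimulus requires on the order of $n^d$ likelihood evaluations, which is why the cost grows quickly as parameters are added.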

In summary, this work delivers a versatile, user‑friendly Bayesian adaptive measurement framework that transcends simple threshold estimation. By allowing researchers to target any component of a psychometric function and by providing an out‑of‑the‑box MATLAB implementation, the method promises to streamline psychophysical data collection, reduce participant burden, and enable more sophisticated modeling of sensory processes across a wide range of experimental paradigms.
