Providing Virtual Execution Environments: A Twofold Illustration

Platform virtualization helps solve major grid computing challenges: sharing resources through flexible, user-controlled, custom execution environments while isolating failures and malicious code. Grid resource management tools will evolve to support virtual resources. We present two open source projects that transparently supply virtual execution environments. Tycoon was developed at HP Labs to optimise resource usage by creating an economy in which users bid for access to virtual machines and compete for CPU cycles. SmartDomains provides a peer-to-peer layer that automates virtual machine deployment using a description language and deployment engine from HP Labs. These projects demonstrate the client-server and peer-to-peer approaches to virtual resource management, respectively. The first makes extensive use of virtual machine features for dynamic resource allocation. The second translates virtual machine capabilities into a sophisticated language in which resource management components can be plugged into configurations and architectures defined at deployment time. We share our experience at CERN openlab developing SmartDomains and deploying Tycoon to give an illustrative introduction to emerging research in virtual resource management.

💡 Research Summary

The paper presents two open‑source systems that illustrate how platform virtualization can address fundamental challenges in grid computing: flexible sharing of resources, user‑controlled custom execution environments, and robust isolation of failures or malicious code. The first system, Tycoon, was developed at HP Labs as an economy‑driven resource manager. In Tycoon, users submit bids for CPU cycles, and a central auction service allocates virtual‑machine (VM) resources proportionally to the monetary value of each bid. The architecture follows a client‑server model: a central market server collects bids and determines pricing, while lightweight agents on each physical host enforce the allocation by adjusting VM CPU shares and, when necessary, migrating VMs to balance load. This dynamic, market‑based approach encourages efficient utilization, because idle cycles are priced low and high‑value workloads can out‑bid lower‑value ones. The authors report that deploying Tycoon on a large CERN openlab cluster reduced idle CPU time dramatically and provided a transparent mechanism for users to obtain the performance they needed without manual scheduling.
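The proportional allocation described above can be illustrated with a minimal sketch. It assumes the simplest reading of the rule, in which each user's share of a host's CPU equals that user's bid divided by the sum of all bids. The function name `proportional_shares`, the user names, and the bid values are hypothetical and are not taken from Tycoon's code.

```python
from typing import Dict


def proportional_shares(bids: Dict[str, float], capacity: float = 1.0) -> Dict[str, float]:
    """Split `capacity` among bidders in proportion to their bids.

    Hypothetical helper for illustration only; not Tycoon's actual API.
    Non-positive bids receive no share.
    """
    positive = {user: bid for user, bid in bids.items() if bid > 0}
    total = sum(positive.values())
    if total == 0:
        # Nobody placed a positive bid, so nothing is allocated.
        return {user: 0.0 for user in bids}
    return {user: capacity * positive.get(user, 0.0) / total for user in bids}


# Three users bid for a host with 100 units of CPU (made-up numbers).
shares = proportional_shares({"alice": 6.0, "bob": 3.0, "carol": 1.0}, capacity=100.0)
for user, share in shares.items():
    print(f"{user}: {share:.1f}")
```

Under this rule a user who raises a bid gains share at the expense of the others, which is how high-value workloads out-bid lower-value ones, while a lone bidder on an otherwise idle host receives the full capacity for any positive bid.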

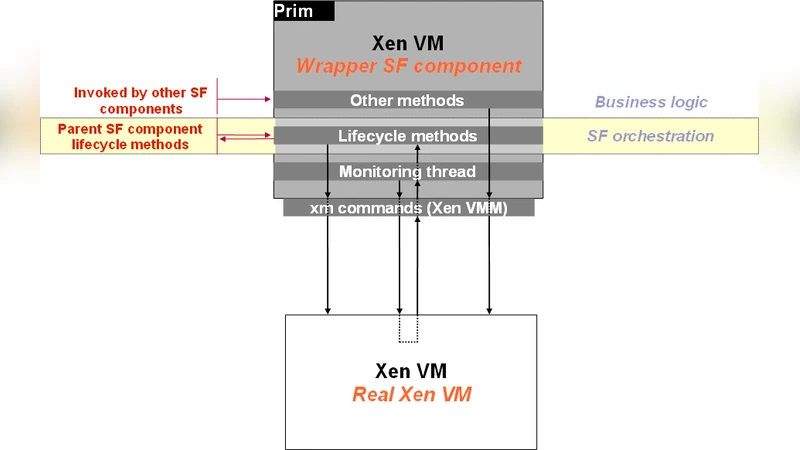

The second system, SmartDomains, also originates from HP Labs but adopts a peer‑to‑peer (P2P) paradigm. SmartDomains introduces a Domain Description Language (DDL) that lets administrators describe the desired virtual infrastructure in a declarative script: VM images, network topology, storage allocations, security policies, and monitoring hooks are all expressed as high‑level specifications. A Deployment Engine parses the DDL and automatically provisions the VMs, installs required software stacks, and interconnects them according to the described topology. The P2P layer distributes state information among participating nodes, enabling autonomous load‑balancing: when a node becomes overloaded, the engine can migrate VMs to less‑busy peers without a central coordinator. SmartDomains is extensible through plug‑ins, allowing researchers to insert custom resource‑management policies such as energy‑aware scheduling, Service Level Agreement (SLA) enforcement, or experiment‑specific monitoring. In the CERN openlab context, SmartDomains was used to spin up heterogeneous testbeds for different physics groups on demand, cutting the time and personnel effort required for environment setup from days to minutes.
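The coordinator-free load balancing described above can be sketched as a decision each node makes locally from the state it has learned about its peers. The following is an illustrative sketch under stated assumptions, not SmartDomains code: the `Peer` class, the `pick_migration` function, the 0.8 utilization threshold, and the policy of moving the lightest VM to the least-utilized peer that can absorb it are all hypothetical.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Tuple


@dataclass
class Peer:
    """A node's view of one peer (hypothetical structure)."""
    name: str
    capacity: float
    vms: Dict[str, float] = field(default_factory=dict)  # VM name -> load

    @property
    def load(self) -> float:
        return sum(self.vms.values())

    @property
    def utilization(self) -> float:
        return self.load / self.capacity


def pick_migration(local: Peer, peers: List[Peer],
                   threshold: float = 0.8) -> Optional[Tuple[str, str]]:
    """Return (vm_name, target_peer_name), or None if no move is needed or possible.

    Assumed policy: an overloaded node offers its lightest VM to the
    least-utilized peer that would stay at or below the threshold.
    """
    if local.utilization <= threshold or not local.vms:
        return None
    vm_name, vm_load = min(local.vms.items(), key=lambda item: item[1])
    candidates = [
        p for p in peers
        if p is not local and (p.load + vm_load) / p.capacity <= threshold
    ]
    if not candidates:
        return None
    target = min(candidates, key=lambda p: p.utilization)
    return vm_name, target.name


# Made-up example: node "a" is at 90% utilization and decides on its own.
a = Peer("a", 10.0, {"vm1": 5.0, "vm2": 4.0})
b = Peer("b", 10.0, {"vm3": 2.0})
c = Peer("c", 10.0, {"vm4": 6.0})
decision = pick_migration(a, [a, b, c])
print(decision)
```

Because every node runs the same rule against its own view of peer state, no central scheduler is required. A real system would also need to handle stale state and two nodes choosing the same target at once, which this sketch ignores.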

Both projects demonstrate complementary approaches to virtual resource management. Tycoon showcases how VM capabilities (dynamic CPU throttling, live migration) can be leveraged in a market‑based, centrally coordinated system to maximize overall utilization and provide a transparent cost model for users. SmartDomains illustrates how a declarative, P2P‑driven framework can automate the lifecycle of complex virtual environments, reduce single points of failure, and enable rapid experimentation with novel scheduling or policy modules. The authors argue that these two case studies together provide a roadmap for the evolution of grid middleware: future resource managers will likely combine economic incentives, fine‑grained VM control, and declarative automation to meet the diverse needs of scientific collaborations.

The paper concludes by emphasizing that virtualization is not merely a technical convenience but a strategic enabler for next‑generation distributed computing. By abstracting physical resources into flexible, isolated VMs, grid infrastructures can support heterogeneous workloads, enforce security boundaries, and adapt to fluctuating demand without extensive manual intervention. The experiences at CERN openlab validate that both market‑driven and peer‑to‑peer virtual management models are viable in production‑scale environments, and they point toward a future where virtual resource brokers, policy plug‑ins, and declarative deployment languages become standard components of scientific computing ecosystems.
