Enabling Loosely-Coupled Serial Job Execution on the IBM BlueGene/P Supercomputer and the SiCortex SC5832

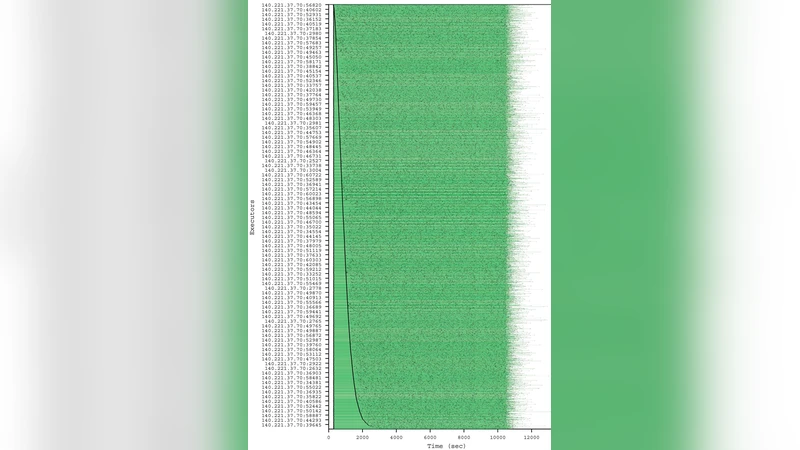

Our work enables the execution of highly parallel computations composed of loosely coupled serial jobs on large-scale systems, with no modifications to the applications themselves. This approach allows new, and potentially far larger, classes of applications to leverage systems such as the IBM Blue Gene/P supercomputer and similar emerging petascale architectures. We present the I/O performance challenges encountered in making this model practical, and show results using both micro-benchmarks and real applications on two large-scale systems, the BG/P and the SiCortex SC5832. Our preliminary benchmarks show that we can scale to 4096 processors on the Blue Gene/P and 5832 processors on the SiCortex with high efficiency, and can achieve sustained execution rates of thousands of tasks/sec for parallel workloads of ordinary serial applications. We measured applications from two domains: economic energy modeling and molecular dynamics.

💡 Research Summary

The paper addresses a fundamental limitation of today’s petascale supercomputers: they are designed for tightly coupled, message-passing MPI applications, which makes them inefficient for high-throughput, loosely coupled workloads that consist of large numbers of independent serial jobs. The authors demonstrate that such workloads can be executed efficiently on IBM’s Blue Gene/P and the SiCortex SC5832 without modifying the original applications. Three complementary mechanisms enable this capability.

First, multi-level scheduling bridges the granularity gap between the local resource manager (LRM) and the needs of serial tasks. On Blue Gene/P the LRM (Cobalt) allocates resources in PSETs (64 compute nodes plus an I/O node). By allocating whole PSETs and then scheduling internally at the core level, the system can achieve near 100% utilization even when each job runs on a single core.

Second, the Falkon task execution framework provides a lightweight, high-throughput dispatcher that separates resource provisioning from task dispatch. Falkon can sustain dispatch rates of 3,000–4,000 tasks per second, orders of magnitude higher than traditional batch systems such as PBS or Condor. This high dispatch rate dramatically reduces the minimum task length required to maintain high efficiency.

Third, extensive caching mitigates the shared-filesystem bottleneck. Both platforms expose a RAM disk on each compute node in addition to a shared GPFS/NFS file system. By pre-staging binaries, scripts, and static input data on the RAM disk, and by buffering intermediate output locally until sufficient data accumulates, the authors reduce I/O contention and keep overall execution efficiency above 90% in most cases.

The paper validates the approach with micro-benchmarks and two real scientific applications: an economic energy model and a molecular dynamics simulation. Experiments on 4,096 cores of the Blue Gene/P and 5,832 cores of the SiCortex show sustained execution rates of thousands of tasks per second and efficiencies of 94% or higher for tasks as short as 4–8 seconds. Theoretical analysis further shows that, even on a hypothetical 160K-core Blue Gene/P, a dispatch rate of 10K tasks per second would allow 90% efficiency for tasks as short as 3.75 seconds.

In summary, by combining multi-level scheduling, a high-throughput dispatcher, and aggressive caching, the authors turn petascale HPC machines into effective high-throughput computing platforms for loosely coupled, file-based workflows. This work provides a practical blueprint for extending the utility of existing supercomputers to a broader class of scientific applications, and offers insights that will be valuable as the community moves toward exascale systems.
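The multi-level scheduling idea in the summary can be illustrated with a small Python sketch. This is not Falkon or Cobalt code. It is a toy model: the function names are hypothetical, the figure of four cores per Blue Gene/P node is background knowledge rather than something stated above, and dispatch overhead and I/O are ignored entirely. The first level rounds a request up to whole PSETs, and the second level greedily places each serial task on whichever core frees up first.

```python
import heapq
import math

NODES_PER_PSET = 64   # from the summary: one PSET = 64 compute nodes + 1 I/O node
CORES_PER_NODE = 4    # assumption not stated in the summary: quad-core nodes
CORES_PER_PSET = NODES_PER_PSET * CORES_PER_NODE


def psets_for(cores: int) -> int:
    """First level: the LRM hands out whole PSETs, so round the request up."""
    return math.ceil(cores / CORES_PER_PSET)


def core_level_schedule(durations, n_psets):
    """Second level: place each serial task on the core that becomes idle first.

    Returns (makespan, utilization) over all cores of the PSETs held.
    Dispatch cost and I/O are deliberately left out of this toy model.
    """
    n_cores = n_psets * CORES_PER_PSET
    free_at = [0.0] * n_cores            # time at which each core becomes idle
    heapq.heapify(free_at)
    for d in durations:
        start = heapq.heappop(free_at)   # earliest-available core
        heapq.heappush(free_at, start + d)
    makespan = max(free_at)
    busy = sum(durations)
    return makespan, busy / (n_cores * makespan)


def one_job_per_pset_utilization() -> float:
    """Baseline: a serial job holding a whole PSET keeps only one core busy."""
    return 1 / CORES_PER_PSET


if __name__ == "__main__":
    n_psets = psets_for(4096)
    print(n_psets, core_level_schedule([4.0] * 4096, n_psets))
    print(one_job_per_pset_utilization())
```

Under these assumptions, a serial job that holds an entire PSET keeps 1 of 256 cores busy, whereas core-level scheduling of uniform tasks fills every core. Real workloads fall short of that ideal because of dispatch latency and shared-filesystem contention, which is what the dispatcher and caching mechanisms are meant to address.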
