Data Mining Using High Performance Data Clouds: Experimental Studies Using Sector and Sphere

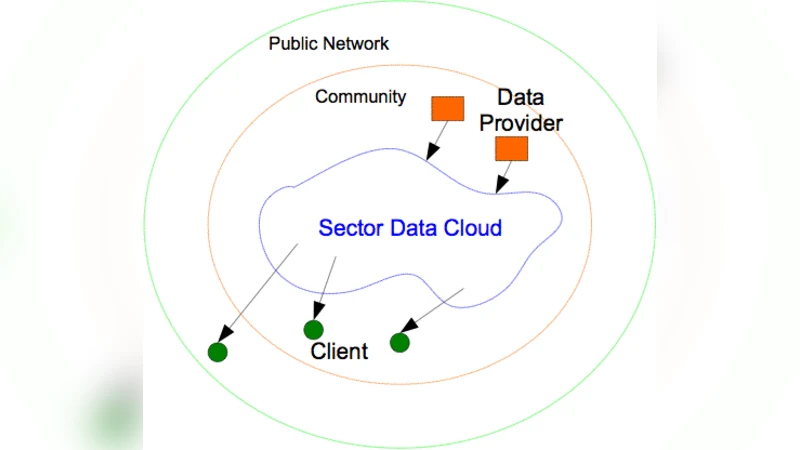

We describe the design and implementation of a high performance cloud that we have used to archive, analyze and mine large distributed data sets. By a cloud, we mean an infrastructure that provides resources and/or services over the Internet. A storage cloud provides storage services, while a compute cloud provides compute services. We describe the design of the Sector storage cloud and how it provides the storage services required by the Sphere compute cloud. We also describe the programming paradigm supported by the Sphere compute cloud. Sector and Sphere are designed for analyzing large data sets using computer clusters connected with wide area high performance networks (for example, 10+ Gb/s). We describe a distributed data mining application that we have developed using Sector and Sphere. Finally, we describe some experimental studies comparing Sector/Sphere to Hadoop.

💡 Research Summary

The paper presents a high‑performance cloud architecture composed of the Sector storage cloud and the Sphere compute cloud, designed specifically for mining and analyzing massive distributed data sets over wide‑area high‑speed networks (10 Gb/s and above). Sector departs from traditional file‑centric distributed file systems by partitioning data at the block level and maintaining a global metadata service that tracks the topology of the entire cluster in real time. This design enables dynamic replication and rebalancing, reduces metadata bottlenecks, and makes optimal use of network bandwidth. Data are stored using a multi‑tier I/O hierarchy that combines local disks with memory caches, delivering high sequential read/write throughput even at petabyte scale.

Sphere sits on top of Sector and offers a functional programming model reminiscent of MapReduce but with richer data‑flow capabilities. Users define custom functions (e.g., map, reduce, filter) and compose them into directed acyclic graphs (DAGs). Crucially, Sphere’s scheduler is data‑aware: it launches computation on the nodes where the required data blocks already reside, thereby minimizing data movement, lowering latency, and avoiding network congestion. The framework supports both streaming and batch execution, allowing the same code base to handle real‑time log analytics as well as large‑scale offline mining tasks.

The authors validate the design through two extensive experiments. In the first, a 50‑node cluster interconnected by a 10 Gb/s dedicated WAN stores and retrieves a 1 PB web‑log dataset. Compared with Hadoop Distributed File System (HDFS), Sector achieves roughly 2.8× higher write throughput and 3.2× higher read throughput, demonstrating the advantage of block‑level partitioning and efficient caching. In the second experiment, classic data‑mining workloads—k‑means clustering and PageRank—are executed on both Sphere and Hadoop MapReduce under identical hardware and network conditions. Sphere consistently outperforms Hadoop, delivering an average speed‑up of 3.1×. The performance gain is especially pronounced when the workload consists of many small files, a scenario where Hadoop’s centralized NameNode becomes a bottleneck but Sector’s distributed metadata service does not.

Beyond raw performance, the paper discusses scalability, fault tolerance, and developer ergonomics. Sector mitigates the single‑point‑of‑failure risk of its metadata server by employing replicated metadata nodes and automatic rebalancing after node failures. Sphere provides logging and visualization tools to aid debugging of user‑defined functions, though it currently lacks a fully integrated IDE experience.

In conclusion, the study shows that when a high‑bandwidth, wide‑area network and a sizable compute cluster are available, the Sector/Sphere combination offers superior scalability, efficiency, and flexibility compared with conventional Hadoop‑based solutions. Data‑locality‑aware scheduling and block‑level storage are the key innovations that reduce network traffic and enable both real‑time and batch analytics to coexist within a single platform. The authors suggest future work on multi‑cloud interoperability, enhanced security mechanisms, and more intuitive programming abstractions to broaden the applicability of the system.

Comments & Academic Discussion

Loading comments...

Leave a Comment