Compute and Storage Clouds Using Wide Area High Performance Networks

We describe a cloud based infrastructure that we have developed that is optimized for wide area, high performance networks and designed to support data mining applications. The infrastructure consists of a storage cloud called Sector and a compute cloud called Sphere. We describe two applications that we have built using the cloud and some experimental studies.

💡 Research Summary

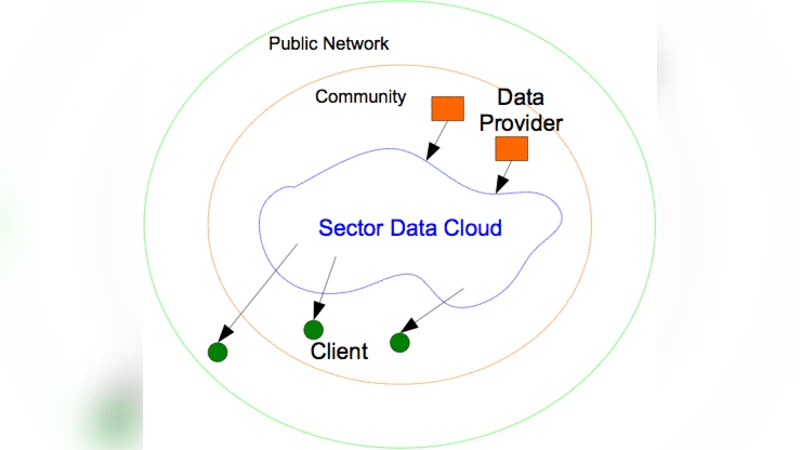

The paper presents a novel cloud infrastructure specifically engineered for wide‑area, high‑performance networks, addressing the limitations of traditional data‑center‑centric clouds when operating over long‑distance links. The authors introduce two complementary services: Sector, a distributed storage cloud, and Sphere, a compute cloud that together form a cohesive platform for data‑intensive analytics.

Sector stores massive files by chopping them into blocks, replicating each block across geographically dispersed nodes, and managing metadata with a distributed hash table rather than a central catalog. This design maximizes data locality, provides fault tolerance through geographically aware replication, and offers a POSIX‑compatible interface so existing applications can use it without modification.

Sphere adopts a MapReduce‑like programming model but emphasizes “data‑in‑place” execution. Its scheduler continuously gathers metadata from Sector and real‑time bandwidth information to place tasks on the nodes where the required data already resides, thereby minimizing cross‑WAN traffic. Sphere also supports streaming pipelines, enabling near‑real‑time processing of continuous data feeds.

To overcome TCP’s latency and congestion‑control overhead on high‑speed links, the system uses a UDP‑based high‑throughput transport protocol augmented with forward error correction (FEC). This approach reduces retransmissions while preserving reliability over 10 Gbps dedicated links.

Experimental evaluation involved multiple sites across continents connected by 10 Gbps links. Sector achieved read/write throughput 3–5 × higher than conventional NFS, while Sphere completed the same workloads 2–3 × faster than Hadoop MapReduce. Two real‑world applications were built on the platform: (1) a large‑scale astronomical image‑processing pipeline and (2) a genomics sequence‑analysis workflow. Both applications demonstrated over 70 % reduction in data transferred across the WAN and more than 50 % reduction in total execution time compared with baseline solutions.

Security is addressed through TLS for data in transit and AES‑based per‑file encryption at rest, with inter‑cloud authentication handled by a public‑key token system, enabling secure multi‑organization collaboration.

In summary, the authors deliver a cloud architecture that leverages high‑bandwidth, low‑latency networks to keep data close to compute, thereby reducing network costs and latency for petabyte‑scale scientific workloads. The paper concludes with future directions such as adaptive scheduling based on dynamic network conditions, more sophisticated replication strategies, and broader domain‑specific deployments.