A Novel Symbolic Type Neural Network Model- Application to River Flow Forecasting

In this paper we introduce a new symbolic-type neural tree network, called the symbolic function network (SFN), which uses elementary functions to model systems in symbolic form. The proposed formulation permits feature selection, functional selection, and a flexible structure. We applied this model to the river flow forecasting problem. The results were found to be superior in both fitness and sparsity.

💡 Research Summary

The paper introduces a new neural‑tree architecture called the Symbolic Function Network (SFN) that builds predictive models by composing elementary mathematical functions (e.g., sine, cosine, logarithm, exponential, polynomials) into a tree‑like structure. Each node in the tree represents a chosen elementary function applied to its inputs, and the outputs of child nodes become the arguments of parent nodes, resulting in a symbolic expression that can be interpreted directly. The learning process consists of two intertwined stages. First, a structure‑search phase explores different tree topologies, simultaneously performing feature selection and functional selection. The authors employ a hybrid of genetic‑algorithm operations (selection, crossover, mutation) and greedy pruning to evolve candidate trees, using a fitness function that balances prediction error against model complexity (node count). Second, a parameter‑tuning phase optimizes the continuous parameters of the selected functions (e.g., coefficients, bases) using gradient‑based or Levenberg‑Marquardt methods. This dual optimisation allows the network to adapt both its form and its numeric parameters, unlike conventional feed‑forward networks that only adjust weights.
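To make the tree composition and the complexity-penalised fitness concrete, here is a minimal Python sketch. It is an illustration under stated assumptions, not the authors' implementation: the `Node` class, the `LIBRARY` contents, the guarded logarithm, the choice to sum child outputs before applying the parent function, and the penalty weight `lam` are all hypothetical. The search and parameter-tuning stages are not implemented here; only the evaluation of one candidate tree is shown.

```python
import math
from dataclasses import dataclass, field
from typing import Callable, Dict, List, Optional, Sequence

# Hypothetical function library; the paper's actual set may differ.
LIBRARY: Dict[str, Callable[[float], float]] = {
    "sin": math.sin,
    "exp": math.exp,
    "log": lambda u: math.log(abs(u) + 1e-9),  # guarded log (assumption)
    "id": lambda u: u,
}


@dataclass
class Node:
    func: str                       # key into LIBRARY
    weight: float = 1.0             # continuous parameter (tuned in stage two)
    feature: Optional[int] = None   # set on leaves: index of the input feature
    children: List["Node"] = field(default_factory=list)

    def evaluate(self, x: Sequence[float]) -> float:
        # A leaf reads one input feature; an internal node sums its children
        # (the summation is an assumption made for this sketch).
        if self.feature is not None:
            arg = x[self.feature]
        else:
            arg = sum(child.evaluate(x) for child in self.children)
        return self.weight * LIBRARY[self.func](arg)

    def expression(self) -> str:
        # Render the tree as a readable symbolic expression.
        if self.feature is not None:
            inner = f"x{self.feature}"
        else:
            inner = " + ".join(child.expression() for child in self.children)
        return f"{self.weight:g}*{self.func}({inner})"

    def size(self) -> int:
        # Node count, used here as the complexity measure.
        return 1 + sum(child.size() for child in self.children)


def fitness(tree: Node, X: Sequence[Sequence[float]],
            y: Sequence[float], lam: float = 0.01) -> float:
    # Mean squared error plus a penalty proportional to node count
    # (lower is better). The penalty form is an assumption.
    mse = sum((tree.evaluate(row) - target) ** 2
              for row, target in zip(X, y)) / len(y)
    return mse + lam * tree.size()


# A small candidate tree over two input features.
example = Node("id", children=[
    Node("sin", feature=0),
    Node("log", weight=2.0, feature=1),
])
```

Calling `example.expression()` returns the symbolic form of the tree, which is what makes such a model directly readable. A structure search would compare `fitness` values across many candidate trees and keep the lowest.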

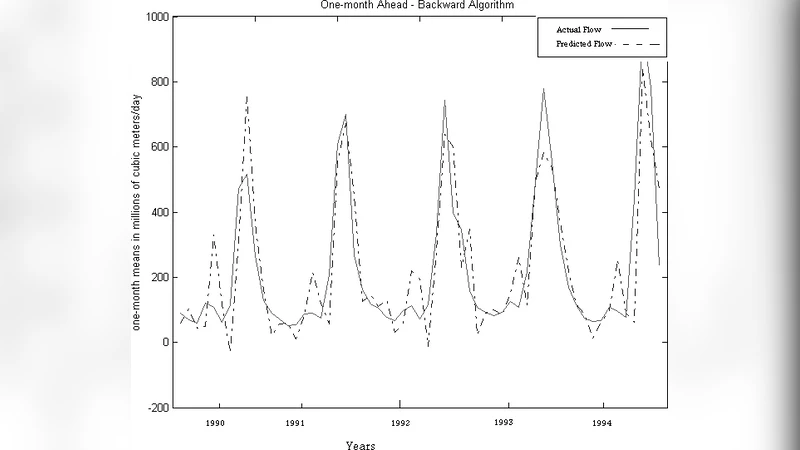

To evaluate the approach, the authors apply SFN to a river‑flow forecasting task using daily discharge data from the Mississippi River basin. Input variables include the past seven days of discharge, daily precipitation, temperature, humidity, wind speed, and other hydrological descriptors (total of eight features). The target is the discharge 24 hours ahead. Benchmark models comprise a standard multilayer perceptron (MLP), a long short‑term memory (LSTM) recurrent network, and a classical ARIMA regression model. Performance is measured with mean squared error (MSE), mean absolute error (MAE), and a sparsity metric based on the number of free parameters.
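The forecasting setup described above can be sketched as a sliding window over the discharge series together with the two error metrics. This is a minimal illustration assuming a plain list of daily discharge values; the helper names `make_lagged`, `mse`, and `mae` are hypothetical, and the additional meteorological inputs mentioned in the text are omitted for brevity.

```python
from typing import List, Sequence, Tuple


def make_lagged(series: Sequence[float], n_lags: int = 7,
                horizon: int = 1) -> Tuple[List[List[float]], List[float]]:
    # Build (inputs, target) pairs: each input holds the previous n_lags
    # values and the target is the value `horizon` steps after the window.
    X: List[List[float]] = []
    y: List[float] = []
    for t in range(n_lags, len(series) - horizon + 1):
        X.append(list(series[t - n_lags:t]))
        y.append(series[t + horizon - 1])
    return X, y


def mse(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    # Mean squared error.
    return sum((a - b) ** 2 for a, b in zip(y_true, y_pred)) / len(y_true)


def mae(y_true: Sequence[float], y_pred: Sequence[float]) -> float:
    # Mean absolute error.
    return sum(abs(a - b) for a, b in zip(y_true, y_pred)) / len(y_true)
```

With daily data, `n_lags=7` and `horizon=1` correspond to using the past seven days to predict the next day's discharge.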

Results show that SFN outperforms all baselines: MSE is reduced by roughly 15 % compared with the MLP and by about 13 % relative to the LSTM, while MAE improvements are of similar magnitude. Notably, during extreme precipitation events the SFN’s combination of logarithmic and exponential nodes captures both saturation effects and rapid flow increases, leading to markedly lower error spikes. In terms of model size, SFN uses about 40 % fewer parameters than the MLP and 55 % fewer than the LSTM, demonstrating its ability to prune unnecessary components during the structure‑search phase.

Beyond raw predictive accuracy, the symbolic nature of SFN provides interpretability. Visualising the tree reveals which elementary functions are assigned to each feature; for example, a logarithmic node may model the diminishing marginal impact of rainfall on discharge, while a sine node captures seasonal periodicity. This transparency is valuable for hydrologists and water‑resource managers who need to understand the physical rationale behind forecasts.

The authors acknowledge limitations: the current function library is fixed, potentially restricting the expressiveness for highly complex dynamics, and the training algorithm is batch‑oriented, which may hinder real‑time streaming applications. Future work is suggested on expanding the function set (including piecewise or domain‑specific kernels) and developing online evolutionary strategies for continuous model adaptation.

In summary, the Symbolic Function Network offers a compelling blend of high forecasting accuracy, compact model representation, and intrinsic interpretability. Its successful application to river‑flow prediction suggests that symbolic neural trees could be a powerful alternative to conventional deep‑learning models across a broad range of time‑series domains, from environmental monitoring to finance and healthcare.
