Over two decades ago a "quiet revolution" overwhelmingly replaced knowledge-based approaches in natural language processing (NLP) with quantitative (e.g., statistical, corpus-based, machine learning) methods. Although it is our firm belief that purely quantitative approaches cannot be the only paradigm for NLP, dissatisfaction with purely engineering approaches to the construction of large knowledge bases for NLP is somewhat justified. In this paper we hope to demonstrate that both trends are partly misguided and that the time has come to enrich logical semantics with an ontological structure that reflects our commonsense view of the world and the way we talk about it in ordinary language. We will also demonstrate that, assuming such an ontological structure, a number of challenges in the semantics of natural language (e.g., metonymy, intensionality, copredication, nominal compounds, etc.) can be properly and uniformly addressed.


Int. J. Reasoning-based Intelligent Systems, Vol. n, No. m, 2008


Copyright © 2008 Inderscience Enterprises Ltd.

Commonsense Knowledge, Ontology and Ordinary Language

Walid S. Saba

American Institutes for Research,

1000 Thomas Jefferson Street, NW, Washington, DC 20007 USA

E-mail: wsaba@air.org

Abstract: Over two decades ago a “quiet revolution” overwhelmingly replaced knowledge-based approaches in natural language processing (NLP) with quantitative (e.g., statistical, corpus-based, machine learning) methods. Although it is our firm belief that purely quantitative approaches cannot be the only paradigm for NLP, dissatisfaction with purely engineering approaches to the construction of large knowledge bases for NLP is somewhat justified. In this paper we hope to demonstrate that both trends are partly misguided and that the time has come to enrich logical semantics with an ontological structure that reflects our commonsense view of the world and the way we talk about it in ordinary language. We will also demonstrate that, assuming such an ontological structure, a number of challenges in the semantics of natural language (e.g., metonymy, intensionality, copredication, nominal compounds, etc.) can be properly and uniformly addressed.

Keywords: Ontology, compositional semantics, commonsense knowledge, reasoning.

Reference to this paper should be made as follows: Saba, W. S. (2008) ‘Commonsense Knowledge, Ontology and Ordinary Language’, Int. Journal of Reasoning-based Intelligent Systems, Vol. n, No. n, pp.43–60.

Biographical notes: W. Saba received his PhD in Computer Science from Carleton University in 1999. He is currently a Principal Software Engineer at the American Institutes for Research in Washington, DC. Prior to this he was in academia, where he taught computer science at the University of Windsor and the American University of Beirut (AUB). For over 9 years he was also a consulting software engineer, working at such places as AT&T Bell Labs, MetLife and Cognos, Inc. His research interests are in natural language processing, ontology, the representation of and reasoning with commonsense knowledge, and intelligent e-commerce agents.

1 INTRODUCTION

Over two decades ago a “quiet revolution”, as Charniak (1995) once called it, overwhelmingly replaced knowledge-based approaches in natural language processing (NLP) with quantitative (e.g., statistical, corpus-based, machine learning) methods. In recent years, however, the terms ontology, semantic web and semantic computing have been in vogue, and regardless of how these terms are being used (or misused) we believe that this ‘semantic counter revolution’ is a positive trend, since corpus-based approaches to NLP, while useful in some language processing tasks (see Ng and Zelle, 1997, for a good review), cannot account for compositionality and productivity in natural language, not to mention the complex inferential patterns that occur in ordinary language use. The inferences we have in mind here can be illustrated by the following example:

(1) Pass that car will you.

a. He is really annoying me.

b. They are really annoying me.

Clearly, speakers of ordinary language can easily infer that ‘he’ in (1a) refers to the person driving [that] car, while ‘they’ in (1b) refers to the people riding in [that] car. Such inferences, we believe, cannot theoretically be learned (how many such examples will be needed?), and are thus beyond the capabilities of any quantitative approach. On the other hand, and although it is our firm belief that purely quantitative approaches cannot be the only paradigm for NLP, dissatisfaction with purely engineering approaches to the construction of large knowledge bases for NLP (e.g., Lenat and Guha, 1990) is somewhat justified. While language ‘understanding’ is for the most part a commonsense ‘reasoning’ process at the pragmatic level, as example (1) illustrates, the knowledge structures that an NLP system must utilize should have sound linguistic and ontological underpinnings and must be formalized if we ever hope to build scalable systems (or as John McCarthy once said, if we ever hope to build systems that we can actually understand!). Thus, and as we have argued elsewhere (Saba, 2007), we believe that both trends are partly misguided and that the time has come to enrich logical semantics with an ontological structure that reflects our commonsense view of the world and the way we talk about it in ordinary language. Specifically, we argue that very little progress within logical semantics has been made in the past several years because these systems are, for the most part, mere symbol manipulation systems that are devoid of any content. In particular, in such systems where there is hardly any link between semantics and our commonsense view of the world, it is quite difficult to envision how one

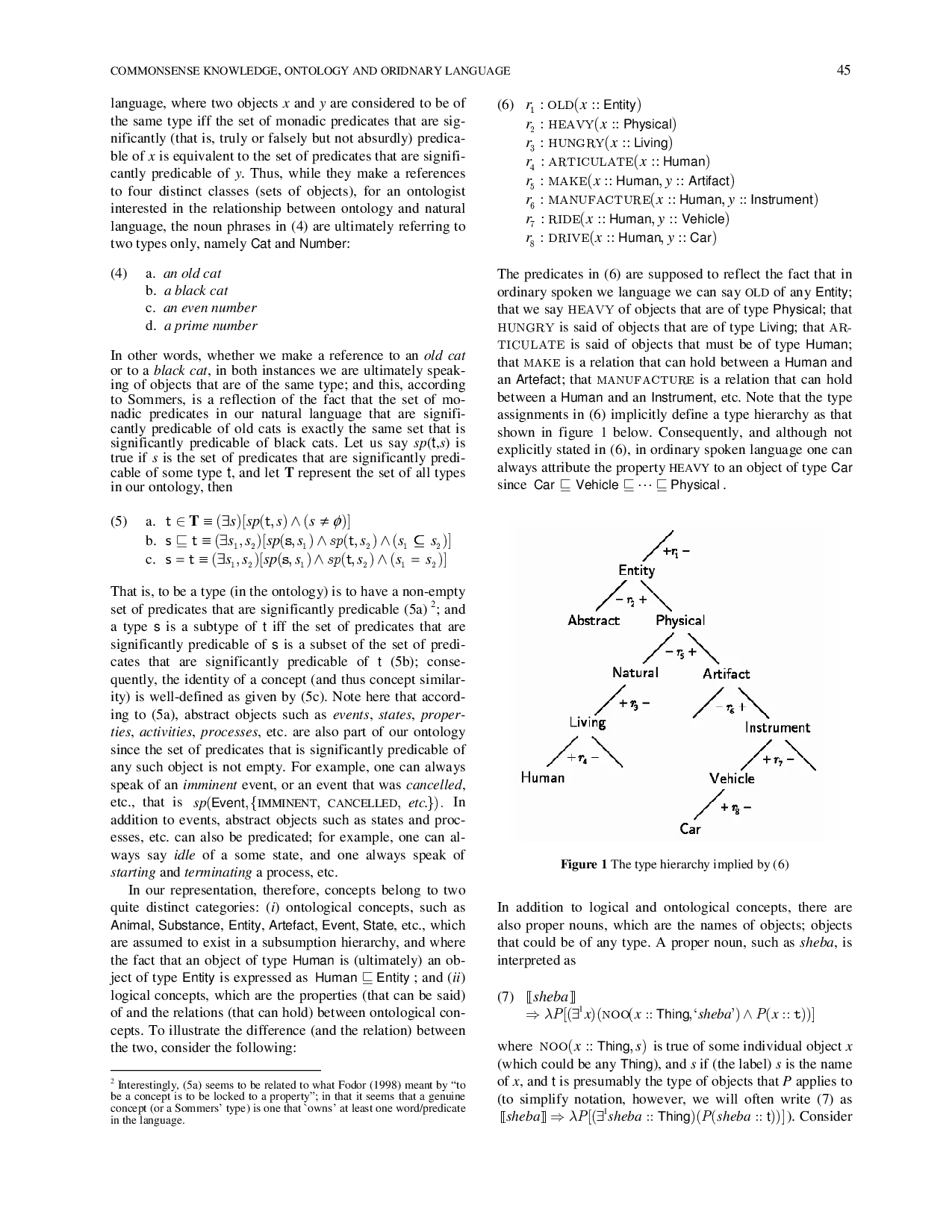

…(Full text truncated)…
