Mutual information is copula entropy

We prove that mutual information is actually negative copula entropy, based on which a method for mutual information estimation is proposed.


💡 Research Summary

The paper establishes a precise equivalence between mutual information (MI) and the negative entropy of a copula, and leverages this relationship to devise a practical estimator for MI that outperforms existing techniques, especially in moderate‑to‑high dimensional settings.
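The identity suggests a two-stage estimator: move the data to the copula scale, estimate the entropy there, and negate it. The Python sketch below is one way to do that. Two choices are assumptions made here, not details taken from this summary. First, the empirical copula is formed from ranks scaled to rank/(n+1). Second, its entropy is estimated with the Kozachenko-Leonenko k-nearest-neighbor estimator under the max-norm. The helper names (`rank_transform`, `knn_entropy`, `copula_mi`) are hypothetical.

```python
import math

EULER_GAMMA = 0.5772156649015329


def digamma_int(n: int) -> float:
    """Digamma at a positive integer: psi(n) = -gamma + sum_{j=1}^{n-1} 1/j."""
    return -EULER_GAMMA + sum(1.0 / j for j in range(1, n))


def rank_transform(column: list[float]) -> list[float]:
    """Pseudo-observations rank/(n+1) in (0, 1); approximately Uniform(0, 1) margins."""
    n = len(column)
    order = sorted(range(n), key=lambda i: column[i])
    u = [0.0] * n
    for r, i in enumerate(order, start=1):
        u[i] = r / (n + 1)
    return u


def knn_entropy(points: list[tuple[float, ...]], k: int = 3) -> float:
    """Kozachenko-Leonenko k-NN differential entropy estimate (nats), max-norm, O(n^2)."""
    n, d = len(points), len(points[0])
    log_r_sum = 0.0
    for i, p in enumerate(points):
        dists = sorted(
            max(abs(a - b) for a, b in zip(p, q))
            for j, q in enumerate(points)
            if j != i
        )
        log_r_sum += math.log(dists[k - 1])  # distance to the k-th nearest neighbour
    # A max-norm ball of radius r has volume (2r)^d, which gives the d*log(2) term.
    return digamma_int(n) - digamma_int(k) + d * math.log(2) + d * log_r_sum / n


def copula_mi(x: list[float], y: list[float], k: int = 3) -> float:
    """Estimate I(X;Y) as the negative entropy of the rank-transformed (copula) sample."""
    u, v = rank_transform(x), rank_transform(y)
    return -knn_entropy(list(zip(u, v)), k=k)
```

Try a few hundred correlated Gaussian pairs with correlation $\rho = 0.6$. The estimate should land near the closed-form value $-\tfrac{1}{2}\log(1-\rho^2) \approx 0.223$ nats, with some downward bias from the bounded copula support. Only ranks enter the estimate, so applying a strictly increasing transform to `x` or `y` leaves it unchanged. The brute-force neighbor search is meant for small samples only.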

Background and Motivation

Mutual information quantifies the statistical dependence between two random variables and is widely used in fields ranging from neuroscience to feature selection. Existing estimators, including k-nearest-neighbor (k-NN) methods, kernel density estimation (KDE), and recent neural approaches such as MINE, suffer from bias, high variance, or heavy computational cost, and these problems worsen as dimensionality grows and sample sizes shrink. Copula theory separates a multivariate distribution into its marginal distributions and a dependence structure (the copula). It therefore offers a natural way to isolate the part of the distribution that actually carries information about dependence.
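To make the split between margins and dependence concrete, replace each observation by its normalized rank. The resulting pseudo-observations have roughly uniform margins. The empirical copula built from them does not change under any strictly increasing transform of either variable, so only the dependence structure survives. The minimal Python sketch below assumes the common rank/n normalization. `pseudo_observations` and `empirical_copula` are hypothetical helper names, not a library API.

```python
from collections.abc import Callable


def pseudo_observations(column: list[float]) -> list[float]:
    """Replace each value by rank/n, an empirical estimate of its marginal CDF value."""
    n = len(column)
    order = sorted(range(n), key=lambda i: column[i])
    u = [0.0] * n
    for r, i in enumerate(order, start=1):
        u[i] = r / n
    return u


def empirical_copula(x: list[float], y: list[float]) -> Callable[[float, float], float]:
    """Return C_n(a, b) = (1/n) * #{i : U_i <= a and V_i <= b}, built from ranks only."""
    u, v = pseudo_observations(x), pseudo_observations(y)
    n = len(u)

    def c_n(a: float, b: float) -> float:
        # Fraction of pseudo-observations falling in the box [0, a] x [0, b].
        return sum(1 for ui, vi in zip(u, v) if ui <= a and vi <= b) / n

    return c_n
```

Only ranks enter, so `empirical_copula(x, y)` and `empirical_copula([math.exp(t) for t in x], [t ** 3 for t in y])` return identical values at every point. Also, $C_n(u, 1)$ equals $u$ whenever $nu$ is an integer, which reflects the uniform margins.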

Theoretical Foundations

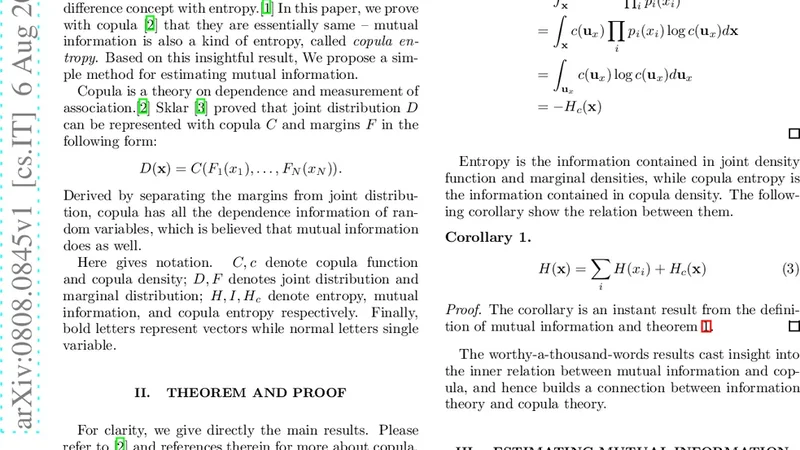

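The authors begin with Sklar’s theorem. It states that any joint distribution with continuous margins and density $f_{XY}(x,y)$ can be factored as

$$f_{XY}(x,y) = c\big(F_X(x), F_Y(y)\big)\, f_X(x)\, f_Y(y),$$

where $F_X$ and $F_Y$ are the marginal CDFs, $f_X$ and $f_Y$ are the marginal densities, and $c(u,v)$ is the copula density on $[0,1]^2$.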
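The paper's main identity follows by substituting the density form of Sklar's theorem, $f_{XY}(x,y) = c\big(F_X(x), F_Y(y)\big)\, f_X(x)\, f_Y(y)$, into the definition of MI. The derivation below is a sketch under three assumptions: continuous margins with densities $f_X$ and $f_Y$, a copula density $c$, and the change of variables $u = F_X(x)$, $v = F_Y(y)$. The symbol $H_c = -\int_0^1\!\int_0^1 c(u,v)\log c(u,v)\,du\,dv$ is notation introduced here for the copula entropy.

$$
\begin{aligned}
I(X;Y) &= \iint f_{XY}(x,y)\,\log\frac{f_{XY}(x,y)}{f_X(x)\,f_Y(y)}\,dx\,dy \\
&= \iint c\big(F_X(x),F_Y(y)\big)\,f_X(x)\,f_Y(y)\,\log c\big(F_X(x),F_Y(y)\big)\,dx\,dy \\
&= \int_0^1\!\!\int_0^1 c(u,v)\,\log c(u,v)\,du\,dv \qquad \big(du = f_X(x)\,dx,\; dv = f_Y(y)\,dy\big) \\
&= -H_c .
\end{aligned}
$$

As a check, a bivariate Gaussian with correlation $\rho$ has copula entropy $H_c = \tfrac{1}{2}\log(1-\rho^2)$. This recovers the familiar result $I(X;Y) = -\tfrac{1}{2}\log(1-\rho^2)$.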

📜 Original Paper Content
