A Cutting Plane Method based on Redundant Rows for Improving Fractional Distance

In this paper, an idea of the cutting plane method is employed to improve the fractional distance of a given binary parity check matrix. The fractional distance is the minimum weight (with respect to l1-distance) of vertices of the fundamental polyto…

Authors: Makoto Miwa, Tadashi Wadayama, Ichi Takumi

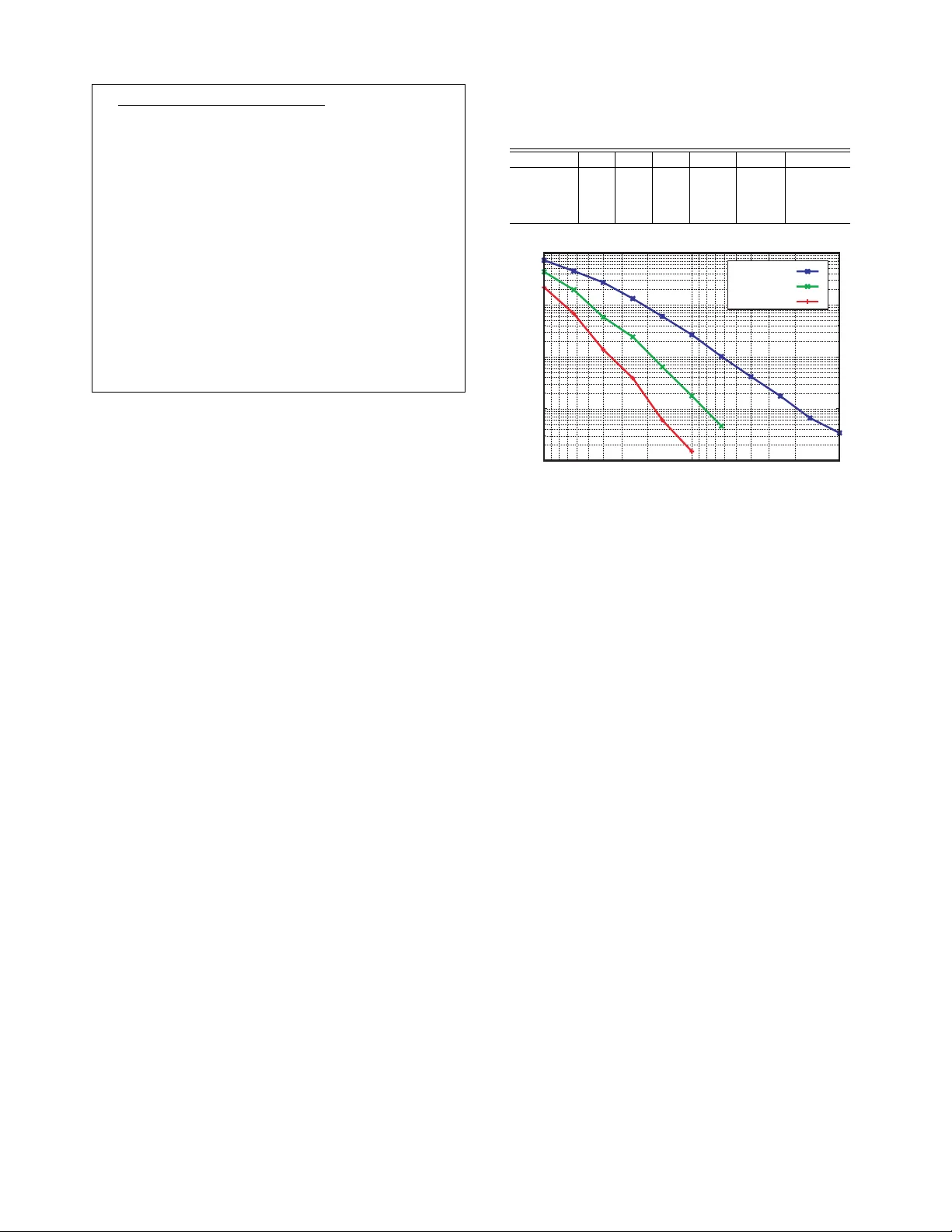

Makoto Miwa, Tadashi Wadayama and Ichi Takumi
Graduate School of Engineering, Nagoya Institute of Technology, Gokiso-cho, Showa-ku, Nagoya, 466-8555 Japan
Email: mkt-m@ics.nitech.ac.jp, wadayama@nitech.ac.jp, takumi@ics.nitech.ac.jp

Abstract: In this paper, an idea of the cutting plane method is employed to improve the fractional distance of a given binary parity check matrix. The fractional distance is the minimum weight (with respect to $\ell_1$-distance) of vertices of the fundamental polytope. The cutting polytope is defined based on redundant rows of the parity check matrix and it plays a key role in eliminating unnecessary fractional vertices in the fundamental polytope. We propose a greedy algorithm and its efficient implementation for improving the fractional distance based on the cutting plane method.

I. INTRODUCTION

Linear programming (LP) decoding proposed by Feldman [3] is one of the promising decoding algorithms for low-density parity-check (LDPC) codes. The invention of LP decoding opened a new research field of decoding algorithms for binary linear codes. Recently, a number of studies on LP decoding have been made, such as [13][7][8].

LP decoding has several virtues that belief propagation (BP) decoding does not possess. One of the advantages of LP decoding is that the behavior of the LP decoder can be clearly understood from a viewpoint of optimization. The decoding process of an LP decoder is just a minimization process of a linear function subject to linear inequalities corresponding to the parity check conditions. The feasible set of this linear programming problem is called the fundamental polytope [3]. The fundamental polytope is a relaxed polytope that includes the convex hull of all the codewords of a binary linear code. Decoding performance of LP decoding is thus closely related to geometrical properties of the fundamental polytope.
Another advantage of LP decoding is that its decoding performance can be improved by including additional constraints in the original LP problem. The additional constraints tighten the relaxation and lead to improved decoding performance. Of course, additional constraints increase the decoding complexity of LP decoding, but they give us the flexibility to choose a trade-off between performance and complexity. The goal of the paper is to design an efficient method to find additional constraints that improve this trade-off for a given parity check matrix.

In this paper, we propose a new greedy algorithm that enhances the fractional distance by means of a cutting polytope, defined from redundant rows, that eliminates unnecessary fractional vertices in the fundamental polytope. The additional constraints generated by the proposed method are based on redundant rows of a parity check matrix. In other words, the proposed method can be considered as a method to generate redundant parity check matrices from a given original parity check matrix. Therefore, the present work is closely related to the works on elimination of stopping sets (SS) by using redundant parity check matrices and on stopping redundancy, such as [12][1][6].

The cutting plane method is a well-established technique for solving an integer linear programming (ILP) problem based on LP [10]. The basic idea of the cutting plane method is simple. In the first phase, an ILP problem is relaxed to an LP problem, which is then solved by an LP solver. If we get a fractional solution (i.e., a vector with fractional elements), a cutting plane (i.e., an additional linear constraint) matched to the fractional solution is added to the LP problem in the second phase. The cutting plane is actually a half space that excludes the fractional solution but includes all the ILP solutions.
In the third phase, the extended LP problem is solved, and the above process continues until we obtain an integral solution.

The fractional distance of a binary linear code is the $\ell_1$-weight of the minimum weight nonzero vertex of the fundamental polytope. The fractional distance is known to be an appropriate geometrical property that indicates the decoding performance of LP decoding for the binary symmetric channel (BSC). It has been proved that the LP decoder can successfully correct bit flip errors if the number of errors is less than half of the fractional distance [3]. In contrast to the minimum distance of a binary linear code, the fractional distance can be evaluated efficiently with an LP solver. Efficient evaluation of the fractional distance is especially important in the proposed method.

The idea of improving LP decoding performance using redundant rows of a given parity check matrix was discussed in [13][4]. In their methods, the redundant rows are efficiently found based on short cycles of the Tanner graph of the parity check matrix. Their results indicate that the addition of redundant rows is a promising technique to improve LP decoding performance, and they suggest that it is worthwhile to pursue more systematic ways to find redundant rows that achieve better trade-offs between decoding performance and complexity.

II. PRELIMINARIES

In this section, notations and definitions required throughout the paper are introduced.

A. Notations and definitions

Let $H$ be a binary $m \times n$ matrix where $n > m \ge 1$. The binary linear code defined by $H$, denoted $C(H)$, is given by

$$C(H) \equiv \{ \mathbf{x} \in \mathbb{F}_2^n : \mathbf{x} H^T = \mathbf{0}_m \}, \tag{1}$$

where $\mathbb{F}_2$ is the Galois field with two elements $\{0, 1\}$ and $\mathbf{0}_m$ is the zero vector of length $m$. In the present paper, a boldface letter such as $\mathbf{x}$ denotes a row vector. The elements of a vector are expressed by the corresponding normal face letter with a subscript; e.g., $\mathbf{x} = (x_1, x_2, \ldots, x_n)$.
The following definition of the fundamental polytope is due to Feldman [3].

Definition 1: (Fundamental polytope) Assume that $\mathbf{t}$ is a binary row vector of length $n$. Let

$$X(\mathbf{t}) \equiv \{ S \subseteq \mathrm{Supp}(\mathbf{t}) : |S| \text{ is odd} \}, \tag{2}$$

where $\mathrm{Supp}(\mathbf{t})$ denotes the support set of the vector $\mathbf{t}$ defined by

$$\mathrm{Supp}(\mathbf{t}) \equiv \{ i \in \{1, \ldots, n\} : t_i \ne 0 \}. \tag{3}$$

The single parity polytope of $\mathbf{t}$ is the polytope defined by

$$U(\mathbf{t}) \equiv \Big\{ \mathbf{f} \in [0,1]^n : \forall S \in X(\mathbf{t}),\ \sum_{j \in S} f_j + \sum_{j \in \mathrm{Supp}(\mathbf{t}) \setminus S} (1 - f_j) \le |\mathrm{Supp}(\mathbf{t})| - 1 \Big\}, \tag{4}$$

where $[a, b] \equiv \{ v \in \mathbb{R} : a \le v \le b \}$ and $\mathbb{R}$ denotes the set of real numbers. For a given binary $m \times n$ parity check matrix $H$, the fundamental polytope $P(H)$ is defined by

$$P(H) \equiv \bigcap_{i=1}^{m} U(\mathbf{h}_i), \tag{5}$$

where $\mathbf{h}_i = (h_{i1}, h_{i2}, \ldots, h_{in})$ is the $i$-th row vector of $H$.

The convex hull of $C(H)$ is the intersection of all convex sets including $C(H)$. (Footnote 1: In this context, we implicitly assume that $C(H)$ is embedded in $\mathbb{R}^n$.) It is known that the fundamental polytope $P(H)$ contains the convex hull of $C(H)$ as a subset and that $P(H) \cap \{0,1\}^n = C(H)$ holds.

Let $M$ denote the number of linear constraints (inequalities) that form $P(H)$. Assume that these linear constraints are numbered from 1 to $M$ and that the $k$-th linear constraint has the form

$$\alpha_{k1} f_1 + \cdots + \alpha_{kn} f_n \le \beta_k, \tag{6}$$

for $k \in \{1, \ldots, M\}$. We call the $k$-th constraint $\mathrm{Const}_k$. The hyperplane corresponding to $\mathrm{Const}_k$ is given by

$$F_k \equiv \{ \mathbf{f} \in \mathbb{R}^n : \alpha_{k1} f_1 + \cdots + \alpha_{kn} f_n = \beta_k \}, \tag{7}$$

and the half space satisfying $\mathrm{Const}_k$ is defined by

$$H_k \equiv \{ \mathbf{f} \in \mathbb{R}^n : \alpha_{k1} f_1 + \cdots + \alpha_{kn} f_n \le \beta_k \}. \tag{8}$$

The fundamental polytope $P(H)$ is thus the intersection of the half spaces:

$$P(H) = H_1 \cap H_2 \cap \cdots \cap H_M. \tag{9}$$

Let $F_{\mathrm{act}}(H)$ be the set of indices of the active constraints defined by

$$F_{\mathrm{act}}(H) \equiv \{ k \in \{1, \ldots, M\} : \mathbf{0}_n \in F_k \}. \tag{10}$$

The active constraints are the linear constraints whose hyperplane contains the origin $\mathbf{0}_n$. In a similar manner, we define $F_{\mathrm{inact}}(H)$, the set of indices of the inactive constraints, by

$$F_{\mathrm{inact}}(H) \equiv \{ k \in \{1, \ldots, M\} : \mathbf{0}_n \notin F_k \}. \tag{11}$$

The fundamental cone $K(H)$ is the cone defined by the active constraints:

$$K(H) \equiv \{ \mathbf{f} \in [0,1]^n : \forall k \in F_{\mathrm{act}}(H),\ \mathbf{f} \in H_k \}. \tag{12}$$

III. CUTTING PLANE METHOD

In this section, the main idea of the cutting plane method based on redundant rows is introduced, and then an application to the Hamming code is shown as an example.

A. Fractional distance

The fractional distance $d_{\mathrm{frac}}(H)$ is the $\ell_1$-distance between a codeword and the nearest vertex of $P(H)$ [3].

Definition 2: (Fractional distance) Let $\mathcal{V}(X)$ be the set of all vertices of a polytope $X$. For a given binary $m \times n$ parity check matrix $H$, the fractional distance of $H$ is defined by

$$d_{\mathrm{frac}}(H) \equiv \min_{\substack{\mathbf{x} \in C(H),\ \mathbf{f} \in \mathcal{V}(P(H)) \\ \mathbf{x} \ne \mathbf{f}}} \sum_{i=1}^{n} |x_i - f_i|. \tag{13}$$

The importance of the fractional distance is stated in the following lemma due to Feldman [3].

Lemma 1: Assume that the channel is the BSC. Let $e$ be the number of bit flip errors. If

$$\left\lceil \frac{d_{\mathrm{frac}}(H)}{2} \right\rceil - 1 \ge e \tag{14}$$

holds, then all the bit flip errors can be corrected by the LP decoder.
(Proof) The proof is given in [3].

Based on the geometrical uniformity of the fundamental polytope (called C-symmetry in [3]), it has been proved that $d_{\mathrm{frac}}(H)$ is the $\ell_1$-weight of the minimum weight vertex of $P(H)$ other than the origin, which is expressed by

$$d_{\mathrm{frac}}(H) = \min_{\mathbf{f} \in \mathcal{V}(P(H)) \setminus \{\mathbf{0}_n\}} \sum_{i=1}^{n} f_i. \tag{15}$$

Let $\Gamma(H)$ be the set of the minimum weight vertices of $P(H)$:

$$\Gamma(H) \equiv \Big\{ \mathbf{p} \in \mathcal{V}(P(H)) : \sum_{i=1}^{n} p_i = d_{\mathrm{frac}}(H) \Big\}, \tag{16}$$

and let $d_{\min}$ be the minimum distance of $C(H)$. Since any codeword of $C(H)$ is a vertex of $P(H)$, it is obvious that the inequality $d_{\mathrm{frac}}(H) \le d_{\min}$ holds for any $H$.
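The split into active and inactive constraints in (10) and (11) can be made concrete with a small sketch. It assumes that only the parity inequalities of Definition 1 are enumerated (the box constraints $0 \le f_j \le 1$ are left out), and it uses the rearranged form $\sum_{j \in S} f_j - \sum_{j \in \mathrm{Supp}(\mathbf{t}) \setminus S} f_j \le |S| - 1$ of the inequality in (4). The function names and the example row `t` are hypothetical and chosen only for illustration.

```python
from itertools import combinations

def parity_constraints(t):
    """Yield (alpha, beta) for each parity inequality of U(t), as alpha . f <= beta.

    For an odd-sized subset S of Supp(t), the inequality in (4) rearranges to
    sum_{j in S} f_j - sum_{j in Supp(t) minus S} f_j <= |S| - 1.
    """
    support = [i for i, bit in enumerate(t) if bit]
    for size in range(1, len(support) + 1, 2):  # odd sizes only
        for subset in combinations(support, size):
            alpha = [0] * len(t)
            for j in support:
                alpha[j] = 1 if j in subset else -1
            yield alpha, size - 1

def split_active(t):
    """Split the parity inequalities of U(t) into active and inactive ones."""
    active, inactive = [], []
    for alpha, beta in parity_constraints(t):
        # The hyperplane alpha . f = beta contains the origin exactly when beta == 0.
        (active if beta == 0 else inactive).append((alpha, beta))
    return active, inactive

t = (1, 1, 0, 1, 1)  # hypothetical row of weight 4
active, inactive = split_active(t)
print(len(active), len(inactive))  # prints: 4 4
```

For a row of weight $w$, this enumeration gives $2^{w-1}$ parity inequalities, of which the $w$ inequalities with $|S| = 1$ are active.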
The fractional distance $d_{\mathrm{frac}}(H)$ depends on the representation of a given binary linear code (i.e., the parity check matrix), and there are a number of parity check matrices that define the same binary linear code. This means that the parity check matrices of a binary linear code can be ranked in terms of their fractional distance. It is desirable to find a parity check matrix that achieves a larger fractional distance for a given binary linear code, because such a parity check matrix may improve the performance/complexity trade-off of LP decoding for the target code.

B. Cutting polytope

In this subsection, we define the cutting polytope based on the redundant rows. We first define the redundant row.

Definition 3: (Redundant row) Let $H$ be a binary $m \times n$ parity check matrix of the target code $C$. A redundant row $\mathbf{h}$ is a linear combination of the row vectors of $H$ such that

$$\mathbf{h} = a_1 \mathbf{h}_1 + a_2 \mathbf{h}_2 + \cdots + a_m \mathbf{h}_m, \tag{17}$$

where $a_i \in \mathbb{F}_2$ ($i \in \{1, \ldots, m\}$).

The single parity polytope of a redundant row $\mathbf{h}$ includes all the codewords of $C(H)$ because any codeword $\mathbf{x} \in C(H)$ satisfies $\mathbf{x}\mathbf{h}^T = 0$ [12]. The next lemma asserts a cutting property of a single parity polytope satisfying a certain condition.

Lemma 2: Let $H$ be a binary $m \times n$ parity check matrix. Assume that a point $\mathbf{p} \equiv (p_1, \ldots, p_n) \in \mathbb{R}^n$ and $\mathbf{t} \in \mathbb{F}_2^n$ are given. If

$$\exists j \in \mathrm{Supp}(\mathbf{t}),\quad p_j > \sum_{l \in \mathrm{Supp}(\mathbf{t}) \setminus \{j\}} p_l \tag{18}$$

holds, then $\mathbf{p} \notin U(\mathbf{t})$ holds.
(Proof) Let $j^*$ be the index satisfying

$$p_{j^*} > \sum_{l \in \mathrm{Supp}(\mathbf{t}) \setminus \{j^*\}} p_l. \tag{19}$$

The above inequality is equivalent to the following inequality:

$$\sum_{j \in \{j^*\}} p_j + \sum_{l \in \mathrm{Supp}(\mathbf{t}) \setminus \{j^*\}} (1 - p_l) > |\mathrm{Supp}(\mathbf{t})| - 1. \tag{20}$$

Since $\{j^*\} \in X(\mathbf{t})$, the point $\mathbf{p}$ violates one of the inequalities in the definition of $U(\mathbf{t})$, and thus $\mathbf{p} \notin U(\mathbf{t})$.

The cutting polytope defined below is used to cut a fractional vertex in the proposed method for improving the fractional distance.

Definition 4: (Cutting polytope) Assume that $\mathbf{p} = (p_1, p_2, \ldots, p_n) \in P(H)$. If a redundant row $\mathbf{h}$ of the form

$$\mathbf{h} = a_1 \mathbf{h}_1 + a_2 \mathbf{h}_2 + \cdots + a_m \mathbf{h}_m \tag{21}$$

satisfies

$$p_j > \sum_{l \in \mathrm{Supp}(\mathbf{h}) \setminus \{j\}} p_l, \tag{22}$$

where $j = \arg\max_{i \in \mathrm{Supp}(\mathbf{h})} p_i$, then $U(\mathbf{h})$ is called a cutting polytope of $\mathbf{p}$.

The next theorem introduces a tighter relaxation of the convex hull of $C(H)$ which may improve the fractional distance.

Theorem 1: Let $\mathbf{p} = (p_1, p_2, \ldots, p_n) \in P(H)$ and let $U(\mathbf{h})$ be a cutting polytope of $\mathbf{p}$. The following relations hold:

$$C(H) \subset P(H) \cap U(\mathbf{h}) \tag{23}$$

and

$$\mathbf{p} \notin P(H) \cap U(\mathbf{h}). \tag{24}$$

(Proof) The claim of the theorem is directly derived from $C(H) \subset U(\mathbf{h})$ and Lemma 2.

The theorem implies that the cutting polytope of $\mathbf{p}$ also contains $C(H)$ but excludes $\mathbf{p}$. In other words, the intersection $P(H) \cap U(\mathbf{h})$ is a tighter relaxation of the convex hull of $C(H)$ compared with $P(H)$. Let $H'$ be the parity check matrix obtained by stacking $H$ and $\mathbf{h}$, namely,

$$H' \equiv \begin{pmatrix} H \\ \mathbf{h} \end{pmatrix}. \tag{25}$$

Note that $P(H') = P(H) \cap U(\mathbf{h})$ also has geometrical uniformity because $P(H')$ is a fundamental polytope. This means that the cutting polytope cuts not only $\mathbf{p}$ but also some non-codeword vertices of $P(H)$ that are geometrically equivalent to $\mathbf{p}$. The fractional distance of $H'$, $d_{\mathrm{frac}}(H')$, thus can be larger than the fractional distance $d_{\mathrm{frac}}(H)$ because the point $\mathbf{p} \in \Gamma(H)$ is excluded from the new fundamental polytope $P(H')$. Furthermore, the multiplicity (i.e., the size of $\Gamma(H)$) can be reduced as well by eliminating a fractional vertex with the minimum $\ell_1$-weight. Figure 1 illustrates the idea of the cutting polytope.

Fig. 1. The idea of the cutting polytope (figure labels: codeword, fundamental polytope, cutting polytope).

C. Cutting plane method: an example

In this subsection, we examine the idea of the cutting plane method described in the previous subsection through a concrete example. Let $H$ be a parity check matrix of the $(7, 4, 3)$ Hamming code:

$$H = \begin{pmatrix} 1 & 0 & 1 & 1 & 1 & 0 & 0 \\ 1 & 1 & 0 & 1 & 0 & 1 & 0 \\ 0 & 1 & 1 & 1 & 0 & 0 & 1 \end{pmatrix}. \tag{26}$$

In this case, we have the index sets $\mathrm{Supp}(\mathbf{h}_1) = \{1, 3, 4, 5\}$, $\mathrm{Supp}(\mathbf{h}_2) = \{1, 2, 4, 6\}$, $\mathrm{Supp}(\mathbf{h}_3) = \{2, 3, 4, 7\}$ and

$$X(\mathbf{h}_1) = \{\{1\}, \{3\}, \{4\}, \{5\}, \{3,4,5\}, \{1,4,5\}, \{1,3,5\}, \{1,3,4\}\},$$
$$X(\mathbf{h}_2) = \{\{1\}, \{2\}, \{4\}, \{6\}, \{2,4,6\}, \{1,4,6\}, \{1,2,6\}, \{1,2,4\}\},$$
$$X(\mathbf{h}_3) = \{\{2\}, \{3\}, \{4\}, \{7\}, \{3,4,7\}, \{2,4,7\}, \{2,3,7\}, \{2,3,4\}\}.$$

The fundamental polytope of $H$ is the set of points in $[0,1]^7$ satisfying

$$\sum_{j \in S} f_j + \sum_{j \in \mathrm{Supp}(\mathbf{h}_i) \setminus S} (1 - f_j) \le 3 \tag{27}$$

for any $i \in \{1, 2, 3\}$ and any $S \in X(\mathbf{h}_i)$. From some computations (the details are described in the next section), we obtain the set of minimum $\ell_1$-weight vertices of $P(H)$ (i.e., $\Gamma(H)$):

$$\left(0, \tfrac{2}{3}, \tfrac{2}{3}, \tfrac{2}{3}, 0, 0, 0\right),\quad \left(\tfrac{2}{3}, 0, \tfrac{2}{3}, \tfrac{2}{3}, 0, 0, 0\right),\quad \left(\tfrac{2}{3}, \tfrac{2}{3}, 0, \tfrac{2}{3}, 0, 0, 0\right).$$

Therefore, in this case, $d_{\mathrm{frac}}(H)$ is equal to 2.

Assume that we choose $\mathbf{h} = (1, 0, 1, 0, 0, 1, 1)$, the sum of the second and third rows of $H$, as a redundant row. Let $\mathbf{p} = (0, 2/3, 2/3, 2/3, 0, 0, 0) \in \Gamma(H)$. It is easy to check that

$$p_3 = \frac{2}{3} > \sum_{l \in \mathrm{Supp}(\mathbf{h}) \setminus \{3\}} p_l = 0 \tag{28}$$

holds, where $\mathrm{Supp}(\mathbf{h}) = \{1, 3, 6, 7\}$. This means that $U(\mathbf{h})$ is a cutting polytope of $\mathbf{p}$. By stacking $H$ and $\mathbf{h}$, we get a new parity check matrix $H'$ whose fundamental polytope does not contain $\mathbf{p}$ as its vertex. In a similar manner, continuing the above process (appending redundant rows to $H$ for cutting the vertices in $\Gamma(H)$), we eventually obtain a parity check matrix $H^*$:

$$H^* = \begin{pmatrix} 1 & 0 & 1 & 1 & 1 & 0 & 0 \\ 1 & 1 & 0 & 1 & 0 & 1 & 0 \\ 0 & 1 & 1 & 1 & 0 & 0 & 1 \\ 1 & 0 & 1 & 0 & 0 & 1 & 1 \\ 1 & 1 & 0 & 0 & 1 & 0 & 1 \\ 0 & 0 & 0 & 1 & 1 & 1 & 1 \\ 0 & 1 & 1 & 0 & 1 & 1 & 0 \end{pmatrix}. \tag{29}$$

The fractional distance of $H^*$ is equal to 3, which is strictly larger than the fractional distance of $H$ ($d_{\mathrm{frac}}(H) = 2$). The details of how to find appropriate redundant rows are discussed in the subsequent sections.
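The first cutting step of this example can be checked numerically. The following is a minimal sketch, assuming a brute-force membership test that enumerates every odd-sized subset as in Definition 1 (practical only for rows of small weight); the function names are hypothetical, and exact rational arithmetic is used so that tight inequalities are not misjudged by rounding.

```python
from fractions import Fraction
from itertools import combinations

def in_single_parity_polytope(f, t):
    """Return True if f lies in [0,1]^n and satisfies every inequality of U(t)."""
    if any(x < 0 or x > 1 for x in f):
        return False
    support = [i for i, bit in enumerate(t) if bit]
    for size in range(1, len(support) + 1, 2):  # odd-sized subsets S
        for subset in combinations(support, size):
            lhs = sum(f[j] if j in subset else 1 - f[j] for j in support)
            if lhs > len(support) - 1:
                return False
    return True

def in_fundamental_polytope(f, rows):
    """Membership in the intersection of the single parity polytopes of all rows."""
    return all(in_single_parity_polytope(f, row) for row in rows)

# Parity check matrix of the (7, 4, 3) Hamming code from (26).
H = [
    (1, 0, 1, 1, 1, 0, 0),
    (1, 1, 0, 1, 0, 1, 0),
    (0, 1, 1, 1, 0, 0, 1),
]
# Redundant row: sum of the second and third rows over F_2.
h = tuple((a + b) % 2 for a, b in zip(H[1], H[2]))

two_thirds = Fraction(2, 3)
p = (0, two_thirds, two_thirds, two_thirds, 0, 0, 0)

print(h)                                    # (1, 0, 1, 0, 0, 1, 1)
print(in_fundamental_polytope(p, H))        # True
print(in_fundamental_polytope(p, H + [h]))  # False
```

The point $\mathbf{p}$ satisfies all inequalities of the three original rows, but violates the inequality of $U(\mathbf{h})$ with $S = \{3\}$, in agreement with (28).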
It has been observed that the vectors in $\Gamma(H^*)$ are integral; namely, they are the minimum weight codewords of the Hamming code.

D. A greedy algorithm for cutting plane method

The previous example on the Hamming code suggests that iterative use of the cutting plane method for a given parity check matrix may yield a parity check matrix with redundant rows that is better than the original one in terms of the fractional distance. The following greedy algorithm, called the greedy cutting plane algorithm, is naturally obtained from the above observation.

Greedy cutting plane algorithm
Step 1 Evaluate $\Gamma(H)$.
Step 2 Pick up $\mathbf{p} \in \Gamma(H)$.
Step 3 Find a redundant row $\mathbf{h}$ of $H$ which gives a cutting polytope of $\mathbf{p}$. If such $\mathbf{h}$ does not exist, exit the procedure.
Step 4 Update $H$ by

$$H := \begin{pmatrix} H \\ \mathbf{h} \end{pmatrix}. \tag{30}$$

Step 5 Return to Step 1.

Details on the process of finding a redundant row $\mathbf{h}$ are given in the next section. The most time-consuming parts of the above algorithm are the evaluation of $\Gamma(H)$ and the search for a redundant row. In the next section, we discuss efficient implementations of these parts, which are indispensable for dealing with codes of long length.

IV. EFFICIENT IMPLEMENTATION

A. Efficient computation of $d_{\mathrm{frac}}(H)$ and $\Gamma(H)$

As described in the previous section, evaluation of $d_{\mathrm{frac}}(H)$ and $\Gamma(H)$ is required for finding a redundant parity check matrix with better fractional distance. An algorithm for computing $d_{\mathrm{frac}}(H)$ has been proposed by Feldman [3]. We first review Feldman's method.

For any $k \in \{1, \ldots, M\}$, let $d_k$ be

$$d_k \equiv \text{minimize } \sum_{i=1}^{n} f_i \quad \text{s.t. } \mathbf{f} \in (P(H) \cap F_k), \tag{31}$$

where this LP problem is denoted by $\mathrm{LP}_k$. Thus, $d_k$ can be considered as the $\ell_1$-weight of the minimum weight vertex on the facet $F_k$ of $P(H)$.
Since there exists at least one facet of $P(H)$ which includes $\mathbf{p}$ for any vector $\mathbf{p}$ in $\Gamma(H)$, it is evident that

$$d_{\mathrm{frac}}(H) = \min_{k = 1, \ldots, M} \delta_k \tag{32}$$

holds, where $\delta_k = d_k$ if $d_k > 0$; otherwise $\delta_k = \infty$. The LP problems $\mathrm{LP}_k$ can be efficiently solved with an LP solver based on the simplex algorithm or the interior point algorithm. From the solutions of these LP problems, we obtain the fractional distance of $H$ by (32).

The number of constraints related to a fundamental polytope defined by (5) is an exponential function of the row weight of $H$. Thus the number of executions of the LP solver rapidly increases as the row weight of $H$ grows. Another formulation of the fundamental polytope proposed by Yang et al. [15] can be used to reduce the number of constraints. In their formulation, high weight rows of $H$ are divided into several low weight rows by introducing auxiliary variables. Although their method is effective for evaluating the fractional distance as well, we here propose another efficient method for evaluating $d_{\mathrm{frac}}(H)$. In our method, the fundamental polytope is relaxed to the fundamental cone. This method can be combined with the formulation of Yang et al.

We need to prepare a relaxed version of the fractional distance before discussing another expression of the fractional distance. For $k \in F_{\mathrm{inact}}(H)$, let $d_k^{(\mathrm{relax})}$ be

$$d_k^{(\mathrm{relax})} \equiv \text{minimize } \sum_{i=1}^{n} f_i \quad \text{s.t. } \mathbf{f} \in (K(H) \cap F_k). \tag{33}$$

This relaxed LP problem is denoted by $\mathrm{LP}_k^{(\mathrm{relax})}$. The relaxed fractional distance $d_{\mathrm{frac}}^{(\mathrm{relax})}(H)$ is defined by

$$d_{\mathrm{frac}}^{(\mathrm{relax})}(H) \equiv \min_{k \in F_{\mathrm{inact}}(H)} d_k^{(\mathrm{relax})}. \tag{34}$$

The next theorem states a useful equivalence relation.

Theorem 2: For a given $H$, the following equality holds:

$$d_{\mathrm{frac}}^{(\mathrm{relax})}(H) = d_{\mathrm{frac}}(H). \tag{35}$$

(Proof) See the appendix.

A merit of Theorem 2 is that the evaluation of $d_{\mathrm{frac}}^{(\mathrm{relax})}(H)$ requires less computation than the evaluation of $d_{\mathrm{frac}}(H)$ using Feldman's method.
The reduction in computational complexity comes from the following two reasons. One reason is that the feasible region of $\mathrm{LP}_k^{(\mathrm{relax})}$ is based on the fundamental cone $K(H)$ (instead of $P(H)$), which can be expressed with fewer linear constraints than the fundamental polytope. In the case of a regular LDPC code with row weight $w_r$, the number of linear constraints required to define $\mathrm{LP}_k^{(\mathrm{relax})}$ is $m w_r + 1$. On the other hand, to express the fundamental polytope required in $\mathrm{LP}_k$, $m 2^{w_r - 1}$ linear constraints are needed. The other reason is that fewer executions of the LP solver are required, because we can focus only on the inactive linear constraints in the case of $\mathrm{LP}_k^{(\mathrm{relax})}$.

B. Search for redundant rows

A straightforward way to obtain a redundant row $\mathbf{h}$ that gives a cutting polytope of a given point $\mathbf{p}$ is exhaustive search. Namely, each redundant row is checked as to whether it satisfies the condition (18) or not. However, this naive approach is prohibitively slow even for codes of moderate length because there are $2^m$ redundant rows. We thus need a remedy to narrow the search space. The following theorem gives the basis for reducing the search space.

Theorem 3: Assume that there exists a cutting polytope of $\mathbf{p} \in \Gamma(H)$. Let $U(\mathbf{h}^*)$ be such a cutting polytope of $\mathbf{p}$, where the redundant row $\mathbf{h}^*$ is given by $\mathbf{h}^* = \sum_{i=1}^{m} a_i^* \mathbf{h}_i$. Then, $U(\mathbf{h})$ is also a cutting polytope of $\mathbf{p}$, where $\mathbf{h} = \sum_{i=1}^{m} a_i \mathbf{h}_i$ and

$$a_i = \begin{cases} a_i^*, & i \in Q, \\ 0, & i \notin Q. \end{cases} \tag{36}$$

The index set $Q$ is defined by

$$Q \equiv \{ i \in \{1, \ldots, m\} : \exists j \in \mathrm{Supp}(\mathbf{p}),\ h_{ij} \ne 0 \}. \tag{37}$$

(Proof) Let $j^* \in \mathrm{Supp}(\mathbf{h}^*) \cap \mathrm{Supp}(\mathbf{p})$ be the index satisfying

$$p_{j^*} > \sum_{l \in \mathrm{Supp}(\mathbf{h}^*) \setminus \{j^*\}} p_l. \tag{38}$$

Since $p_j = 0$ if $j \notin \mathrm{Supp}(\mathbf{p})$, the above condition is equivalent to

$$p_{j^*} > \sum_{l \in (\mathrm{Supp}(\mathbf{h}^*) \cap \mathrm{Supp}(\mathbf{p})) \setminus \{j^*\}} p_l. \tag{39}$$

From the definition of $\mathbf{h}$ and $Q$, we have

$$\mathrm{Supp}(\mathbf{h}^*) \cap \mathrm{Supp}(\mathbf{p}) = \mathrm{Supp}(\mathbf{h}) \cap \mathrm{Supp}(\mathbf{p}). \tag{40}$$

This equality leads to the inequality

$$p_{j^*} > \sum_{l \in (\mathrm{Supp}(\mathbf{h}) \cap \mathrm{Supp}(\mathbf{p})) \setminus \{j^*\}} p_l = \sum_{l \in \mathrm{Supp}(\mathbf{h}) \setminus \{j^*\}} p_l. \tag{41}$$

The above inequality implies that $U(\mathbf{h})$ is a cutting polytope of $\mathbf{p}$.

The significance of the above theorem is that we can fix $a_i = 0$ for $i \notin Q$ in the search process without losing the chance to find a redundant row generating a cutting polytope. Therefore, the computational complexity of finding a redundant row can be reduced by using this property.

Let $V$ be the sub-matrix of $H$ composed of the columns of $H$ corresponding to the support of $\mathbf{p}$. The index set $Q$ consists of the row indices of the non-zero rows of $V$. Thus, in the case of LDPC codes, the size of $Q$ is expected to be small when the size of $\mathrm{Supp}(\mathbf{p})$ is small because of the sparseness of the parity check matrices. In such a case, the search space of the redundant rows is limited to a reasonable size. For example, in the case of the LDPC code "96.33.964" [9] ($n = 96$, $m = 48$, row weight 6, column weight 3), the size of $\mathrm{Supp}(\mathbf{p})$ is 7 ($\mathbf{p} \in \Gamma(H)$) and the size of $Q$ is 8.

Let $H_Q$ be the $|Q| \times n$ sub-matrix of $H$ composed of the row vectors whose indices are included in $Q$. From Theorem 3, we can limit the search space to the linear combinations of the rows of $H_Q$. In the following, we present an efficient search algorithm for a redundant row. Let $\tau = (\tau_1, \ldots, \tau_n)$ denote an index vector that satisfies $p_{\tau_1} \ge p_{\tau_2} \ge \cdots \ge p_{\tau_n}$.

Redundant row search algorithm
Step 1 Construct $H_Q$.
Step 2 Permute the columns of $H_Q$ into the following form:

$$H_Q \Pi = \begin{pmatrix} \mathbf{v}_{\tau_2} & \mathbf{v}_{\tau_3} & \cdots & \mathbf{v}_{\tau_n} & \mathbf{v}_{\tau_1} \end{pmatrix},$$

where $\Pi$ denotes a column permutation matrix and $\mathbf{v}_j$, $j \in \{1, \ldots, n\}$, denotes the $j$-th column vector of $H_Q$.
Step 3 Convert $H_Q \Pi$ into a matrix $U$ of row echelon form by applying elementary row operations.
Step 4 Let

$$i^* \equiv \min \{ i \in \{1, \ldots, |Q|\} : \mathbf{u}_i \text{ satisfies (22)} \},$$

where $\mathbf{u}_i = (u_{i1}, \ldots, u_{in})$ denotes the $i$-th row vector of $U \Pi^{-1}$.
Step 5 Output $\mathbf{u}_{i^*}$.

The idea of the redundant row search algorithm is based on the fact that $\mathbf{u}_i$ (a candidate for a desirable redundant row) tends to satisfy condition (22). This can be explained as follows. Assume that $u_{i,\tau_1} = 1$. From the definition of the row echelon form, $u_{i,\tau_2} = u_{i,\tau_3} = \cdots = u_{i,\tau_K} = 0$ holds, where $K$ is an integer larger than or equal to $i - 1$. This means that

$$|\mathrm{Supp}(\mathbf{u}_i) \cap \mathrm{Supp}(\mathbf{p})| \le |\mathrm{Supp}(\mathbf{p})| - i + 1 \tag{42}$$

holds for $i \in \{1, \ldots, |Q|\}$. Let $\eta_i$ be

$$\eta_i \equiv \sum_{l \in (\mathrm{Supp}(\mathbf{u}_i) \cap \mathrm{Supp}(\mathbf{p})) \setminus \{\tau_1\}} p_l. \tag{43}$$

From the inequality (42), it is evident that $\{\eta_1, \eta_2, \ldots\}$ is a decreasing sequence. We thus can expect that condition (22), i.e., $p_{\tau_1} > \eta_i$, eventually holds as $i$ grows. (Footnote 2: Note that we cannot state that $\mathbf{u}_i$ satisfying (22) always exists because this argument is based on the assumption $u_{i,\tau_1} = 1$.) It may be reasonable to choose the smallest index $i$ satisfying (22) because such $\mathbf{u}_i$ would be sparser in the case of a low density matrix. A sparse redundant row is advantageous since it is able to cut other fractional vertices with small weight.

V. RESULTS

A. Application to Hamming, Golay and LDPC codes

In this subsection, we apply the cutting plane method to the Hamming code ($n = 7$, $m = 3$), the Golay code ($n = 24$, $m = 12$), and a short regular LDPC code "204.33.484" [9] ($n = 204$, $m = 102$, row weight 6, column weight 3). We use the parity check matrix of the Golay code described in [12]. Let $d_{\mathrm{frac}}$ be the fractional distance of the original parity check matrices and $d_{\mathrm{frac}}^{\mathrm{after}}$ be the fractional distance of the parity check matrices generated by the cutting plane method. Let $N_d$ be the number of appended redundant rows. The results are shown in Table I. For example, the addition of 100 rows to the original parity check matrix of the Golay code increases the fractional distance from 2.625 to 3.895. It is expected that the matrices constructed by the cutting plane method show better LP decoding performance compared with the original matrices because $d_{\mathrm{frac}}^{\mathrm{after}}$ is greater than $d_{\mathrm{frac}}$ in all the cases.

TABLE I
FRACTIONAL DISTANCES OF REDUNDANT PARITY CHECK MATRICES OBTAINED BY THE CUTTING PLANE METHOD

| Code | $n$ | $m$ | $N_d$ | $d_{\mathrm{frac}}$ | $d_{\mathrm{frac}}^{\mathrm{after}}$ | $d_{\min}$ |
|---|---|---|---|---|---|---|
| Hamming | 7 | 3 | 4 | 2.000 | 3.000 | 3 |
| Golay | 24 | 12 | 40 | 2.625 | 3.429 | 8 |
| Golay | 24 | 12 | 100 | 2.625 | 3.895 | 8 |
| LDPC | 204 | 102 | 9 | 5.526 | 5.646 | unknown |

B. Decoding performances

In this subsection, we present the decoding performance of redundant parity check matrices obtained by the cutting plane method. We assume the BSC as the target channel and LP decoding [3] as the decoding algorithm used in the receiver. Figure 2 shows the block error probabilities of the 24 × 12 parity check matrix of the Golay code given in [12] (labeled "original"), the 24 × 52 parity check matrix with 40 redundant rows (labeled "+40rows"), and the 24 × 112 parity check matrix with 100 redundant rows (labeled "+100rows"). From Figure 2, we can see that the block error probability of the redundant matrix (+100rows) is approximately two orders of magnitude lower than that of the original matrix when the crossover probability is $10^{-2}$. Figure 3 shows the block error probabilities of the original parity check matrix of "204.33.484" [9] and of the parity check matrix with 9 redundant rows (labeled "+9rows"). From Figure 3, it can be observed that the slope of the error curve of "+9rows" is steeper than that of "original".

Fig. 2. Comparison of block error probabilities of parity check matrices of the Golay code (original 24 × 12 matrix, redundant 24 × 52 matrix, redundant 24 × 112 matrix). Horizontal axis: crossover probability on BSC; vertical axis: block error probability; curves: original, +40rows, +100rows.

Fig. 3. Comparison of block error probabilities of parity check matrices of the LDPC code ($n = 204$, $m = 102$). Horizontal axis: crossover probability on BSC; vertical axis: block error probability; curves: original, +9rows.

VI. CONCLUSION

In this paper, the cutting plane method based on redundant rows of a parity check matrix for improving the fractional distance has been presented. In order to reduce the search complexity of finding an appropriate redundant row, we introduced an efficient technique to compute the fractional distance and proved that the limited search space indicated in Theorem 3 is sufficient to find a redundant row generating a cutting polytope. The numerical results obtained so far are encouraging. The redundant parity check matrices constructed by the cutting plane method have larger fractional distances than the original matrices. The simulation results support that improvement of the fractional distance actually leads to better decoding performance under LP decoding.

APPENDIX

Proof of Theorem 2: To prove Theorem 2, we prove the following two inequalities:

$$d_{\mathrm{frac}}^{(\mathrm{relax})}(H) \le d_{\mathrm{frac}}(H), \tag{44}$$

$$d_{\mathrm{frac}}^{(\mathrm{relax})}(H) \ge d_{\mathrm{frac}}(H). \tag{45}$$

We first assume $d_{\mathrm{frac}}^{(\mathrm{relax})}(H) > d_{\mathrm{frac}}(H)$ for proving inequality (44) by contradiction. Assume that the index $i \in \{1, \ldots, M\}$ is given by $i \in \arg\min_{k = 1, \ldots, M} \delta_k$. Let $\mathbf{p}$ be the solution of $\mathrm{LP}_i$. This means that $\mathbf{p} \in \Gamma(H)$ and $\mathbf{p} \in (P(H) \cap F_i)$, where $P(H) \cap F_i$ is the feasible set of $\mathrm{LP}_i$. Note that $\mathbf{p}$ is not the origin $\mathbf{0}_n$ because $\delta_k = \infty$ holds when $\mathbf{p} = \mathbf{0}_n$. Thus, $\mathrm{Const}_i$ is an inactive constraint, namely $i \in F_{\mathrm{inact}}(H)$. Since the fundamental cone $K(H)$ contains the fundamental polytope $P(H)$ as a subset, the relation $\mathbf{p} \in (K(H) \cap F_i)$ holds as well. The intersection $K(H) \cap F_i$ is the feasible set of $\mathrm{LP}_i^{(\mathrm{relax})}$. Let the solution of $\mathrm{LP}_i^{(\mathrm{relax})}$ be $\mathbf{p}^*$.
Since the objective function of $\mathrm{LP}_i$ is identical to that of $\mathrm{LP}_i^{(\mathrm{relax})}$ and the feasible set of $\mathrm{LP}_i^{(\mathrm{relax})}$ includes that of $\mathrm{LP}_i$, the $\ell_1$-weight of $\mathbf{p}^*$ is smaller than or equal to the $\ell_1$-weight of $\mathbf{p}$. This implies $d_{\mathrm{frac}}^{(\mathrm{relax})}(H) \le d_{\mathrm{frac}}(H)$, which contradicts the assumption. The proof of inequality (44) is completed.

We next assume $d_{\mathrm{frac}}^{(\mathrm{relax})}(H) < d_{\mathrm{frac}}(H)$ for proving inequality (45) by contradiction. Assume that $i \in \arg\min_{k \in F_{\mathrm{inact}}(H)} d_k^{(\mathrm{relax})}$. Let $\mathbf{p}^{(\mathrm{relax})}$ be the solution of $\mathrm{LP}_i^{(\mathrm{relax})}$. From the definition of $\mathrm{LP}_i^{(\mathrm{relax})}$, it is evident that $\mathbf{p}^{(\mathrm{relax})} \in (K(H) \cap F_i)$ holds. In the following, we discuss the two cases: (i) $\mathbf{p}^{(\mathrm{relax})} \in P(H)$, (ii) $\mathbf{p}^{(\mathrm{relax})} \notin P(H)$.

We start from case (i). If $\mathbf{p}^{(\mathrm{relax})} \in P(H)$ holds, then $\mathbf{p}^{(\mathrm{relax})} \in (P(H) \cap F_i)$ holds because of the relation $P(H) \subset K(H)$. By almost the same argument as above, we obtain $d_{\mathrm{frac}}^{(\mathrm{relax})}(H) = d_{\mathrm{frac}}(H)$, which contradicts the assumption.

We then move to case (ii). If $\mathbf{p}^{(\mathrm{relax})} \notin P(H)$ holds, there exists $\mathrm{Const}_l$ satisfying

$$\mathbf{p}^{(\mathrm{relax})} \notin H_l,\quad \mathbf{0}_n \in H_l,\quad l \in F_{\mathrm{inact}}(H). \tag{46}$$

This is because $\mathbf{p}^{(\mathrm{relax})} \in K(H)$ but $\mathbf{p}^{(\mathrm{relax})} \notin P(H)$. Let $\mathrm{Seg}(\mathbf{p}^{(\mathrm{relax})})$ be the line segment between $\mathbf{p}^{(\mathrm{relax})}$ and the origin:

$$\mathrm{Seg}(\mathbf{p}^{(\mathrm{relax})}) \equiv \{ \mathbf{p} \in \mathbb{R}^n : 0 < t < 1,\ \mathbf{p} = t\, \mathbf{p}^{(\mathrm{relax})} \}. \tag{47}$$

The line segment $\mathrm{Seg}(\mathbf{p}^{(\mathrm{relax})})$ passes through $F_l$. Thus, there exists a point $\mathbf{p}_l \in (F_l \cap \mathrm{Seg}(\mathbf{p}^{(\mathrm{relax})}))$. Note that the line segment $\mathrm{Seg}(\mathbf{p}^{(\mathrm{relax})})$ is totally included in $K(H)$ because $\mathbf{p}^{(\mathrm{relax})} \in K(H)$. This leads to $\mathbf{p}_l \in (K(H) \cap F_l)$, which means that $\mathbf{p}_l$ is included in the feasible set of $\mathrm{LP}_l^{(\mathrm{relax})}$. The $\ell_1$-weight of $\mathbf{p}_l$ is smaller than that of $\mathbf{p}^{(\mathrm{relax})}$. This implies that the $\ell_1$-weight of $\mathbf{p}_l$ is smaller than $d_{\mathrm{frac}}^{(\mathrm{relax})}(H)$. However, this contradicts the definition of $d_{\mathrm{frac}}^{(\mathrm{relax})}(H)$. The proof of inequality (45) is completed.
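As a supplementary illustration of the redundant row search algorithm of Section IV-B, the five steps can be sketched as below. This is only a sketch under stated assumptions, not the authors' implementation: rows are plain 0/1 tuples, ties in the ordering $\tau$ are broken by keeping the original column order, the row echelon form is obtained by plain forward elimination over $\mathbb{F}_2$, and all function names are hypothetical. A different tie-breaking rule or elimination order may return a different redundant row.

```python
from fractions import Fraction

def satisfies_cut_condition(row, p):
    """Condition (22): the largest p_j on Supp(row) exceeds the sum of the others."""
    values = [p[j] for j, bit in enumerate(row) if bit]
    return bool(values) and 2 * max(values) > sum(values)

def search_redundant_row(H, p):
    n = len(p)
    # tau: column indices sorted by decreasing p (ties keep the original order).
    tau = sorted(range(n), key=lambda j: -p[j])
    order = tau[1:] + tau[:1]  # column order tau_2, ..., tau_n, tau_1
    support = [j for j in range(n) if p[j] != 0]
    # Steps 1-2: keep the rows indexed by Q and store them with permuted columns.
    rows = [[h[j] for j in order] for h in H if any(h[j] for j in support)]
    # Step 3: forward elimination over F_2 to reach a row echelon form.
    pivot_row = 0
    for col in range(n):
        if pivot_row == len(rows):
            break
        hit = next((r for r in range(pivot_row, len(rows)) if rows[r][col]), None)
        if hit is None:
            continue
        rows[pivot_row], rows[hit] = rows[hit], rows[pivot_row]
        for r in range(pivot_row + 1, len(rows)):
            if rows[r][col]:
                rows[r] = [a ^ b for a, b in zip(rows[r], rows[pivot_row])]
        pivot_row += 1
    # Steps 4-5: undo the permutation and return the first row meeting (22).
    for permuted in rows:
        u = [0] * n
        for position, j in enumerate(order):
            u[j] = permuted[position]
        if satisfies_cut_condition(u, p):
            return tuple(u)
    return None  # no row of the echelon form gives a cutting polytope

# Hamming example of (26) with the minimum weight vertex p used in (28).
H = [
    (1, 0, 1, 1, 1, 0, 0),
    (1, 1, 0, 1, 0, 1, 0),
    (0, 1, 1, 1, 0, 0, 1),
]
p = (0, Fraction(2, 3), Fraction(2, 3), Fraction(2, 3), 0, 0, 0)
found = search_redundant_row(H, p)
print(found)  # (1, 1, 0, 0, 1, 0, 1)
```

With these tie-breaking choices, the sketch returns the sum of the first and third rows of $H$, which appears as the fifth row of $H^*$ in (29). The row $(1, 0, 1, 0, 0, 1, 1)$ chosen by hand in Section III-C is another valid choice for the same $\mathbf{p}$.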
ACKNOWLEDGMENT

We would like to thank the anonymous reviewers of Turbo Coding 2008 for helpful suggestions to improve the presentation of the paper. This work was supported by the Ministry of Education, Science, Sports and Culture, Japan, Grant-in-Aid for Scientific Research on Priority Areas (Deepening and Expansion of Statistical Informatics) 180790091 and a research grant from SRC (Storage Research Consortium).

REFERENCES

[1] K. A. S. Abdel-Ghaffar and J. H. Weber, "On parity-check matrices with optimal stopping and/or dead-end set enumerators," in Proceedings of Turbo-coding 2006, Munich, 2006.
[2] M. Chertkov and M. Stepanov, "Pseudo-codeword landscape," IEEE International Symposium on Information Theory, Nice, France, June 2007.
[3] J. Feldman, "Decoding error-correcting codes via linear programming," Ph.D. thesis, Massachusetts Institute of Technology, 2003.
[4] J. Feldman, M. J. Wainwright, and D. R. Karger, "Using linear programming to decode binary linear codes," IEEE Transactions on Information Theory, vol. 51, pp. 954-972, 2005.
[5] J. Han and P. H. Siegel, "Improved upper bounds on stopping redundancy," arXiv: cs.IT/0511056, 2005.
[6] H. D. L. Hollmann and L. M. G. M. Tolhuizen, "On parity check collections for iterative erasure decoding that correct all correctable erasure patterns of a given size," arXiv: cs.IT/0507068 [Online], 2005.
[7] C. Kelley, D. Sridhara, J. Xu and J. Rosenthal, "Pseudocodeword weights and stopping sets," IEEE International Symposium on Information Theory, Chicago, IL, USA, June 27 - July 3, 2004.
[8] R. Koetter, W. W. Li, P. O. Vontobel and J. L. Walker, "Characterizations of pseudo-codewords of LDPC codes," arXiv: cs.IT/0508049, 2005.
[9] D. J. C. MacKay, "Encyclopedia of sparse graph codes," http://www.inference.phy.cam.ac.uk/mackay/codes/data.html
[10] C. H. Papadimitriou and K. Steiglitz, "Combinatorial optimization: algorithm and complexity," Dover, 1998.
[11] A. Schrijver, "Theory of linear and integer programming," John Wiley and Sons, 1998.
[12] M. Schwartz and A. Vardy, "On the stopping distance and the stopping redundancy of codes," IEEE Transactions on Information Theory, vol. 52, pp. 922-932, 2006.
[13] M. H. Taghavi and P. H. Siegel, "Adaptive linear programming decoding," IEEE International Symposium on Information Theory 2006, Seattle, WA, USA, July 9 - 14, 2006.
[14] S. Xia and F. Wei, "Minimum pseudo-weight and minimum pseudo-codewords of LDPC codes," arXiv: cs/060605, 2006.
[15] K. Yang, X. Wang and J. Feldman, "A new linear programming approach to decoding linear block codes," IEEE Transactions on Information Theory, vol. 54(3), pp. 1061-1072, March 2008.
