Gaussian Processes and Limiting Linear Models

Gaussian processes retain the linear model either as a special case, or in the limit. We show how this relationship can be exploited when the data are at least partially linear. However from the perspective of the Bayesian posterior, the Gaussian pro…

Authors: Robert B. Gramacy, Herbert K. H. Lee

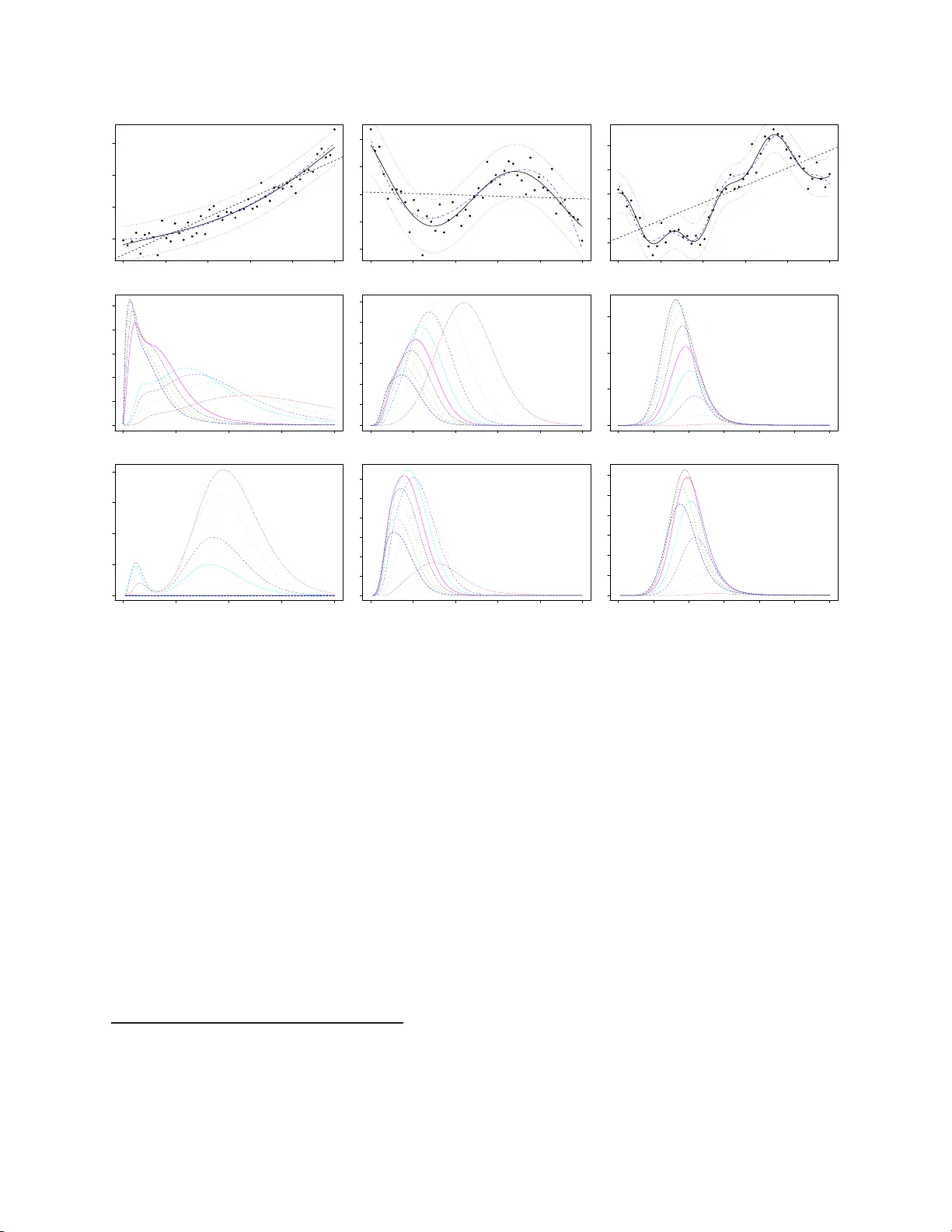

Gaussian Pro cesses and Limiting Linear Mo dels Rob ert B. Gra macy and Herb ert K. H. Lee ∗ Abstract Gaussian pro cesses retain the linea r mo del e ither as a sp ecial c ase, or in the limit. W e show ho w this r e lationship can b e exploited wh en the d ata are at least partially linear. Ho w ev er from the p ersp ectiv e of the Ba y esian p osterior, the Gaussian pr ocesses whic h enco de the linear mo del either ha v e p robabilit y of nearly zero or are otherwise unattainable without the explicit construction of a prior with the limiting linear mo del in mind. W e deve lop su c h a prior, and show that its practical b enefits extend w ell b ey ond the computational and conceptual simp li cit y of the linear mo del. F or example, linearit y can b e extracted on a p er-dimension basis, or can b e com b ined with treed partition mo dels to yield a highly efficien t n onstat ionary mo del. Our approac h is demonstrated on syn th e tic and r e al datasets of v arying linearit y and dimensionalit y . Key w ords: Gaussian pro cess, nonstationary sp at ial mo del, semiparametric regres- sion, partition mo deling 1 In tro d uction The Ga ussian Pro cess (GP) is a common mo del for fitting a r bit r a ry functions or surfaces, b ecaus e of its nonparametric flexibilit y ( C ressie, 1991). Suc h mo dels and their extensions are ∗ Rob ert Grama cy is a Le cturer in the Statistical Lab oratory , Univ ersity of Cambridge, UK (Email: bo bb y@s ta tslab.cam.ac.uk) and Herb ert Lee is a Pr o fessor in the Department o f Applied Mathematics and Statistics, University of Ca lifornia, Santa Cruz, CA 950 64 (Email: herbie@ ams.ucsc.edu). This work was partially suppo rted b y NASA aw ards 08008-002 -011-000 and SC 200302 8 NAS2-0 3144, Sandia N ational Labs grant 49642 0 , and Nationa l Science F ounda tion grants DMS 0233 710 and 05 04851. 1 used in a wide v ariety of applications, suc h as computer exp erimen ts (Kennedy and O’Ha g an, 2001; Santner et al., 2 003), en vironmen tal mo deling (G illeland and Nychk a , 2005; Calder , 2007), and geology ( C hil ´ es and D e lfiner, 1999). The mo deling flexibilit y of GPs comes with large computational requiremen ts and complexities. Sometimes a GP will pro duce a very smo oth fit, i.e., o ne that app ears linear 1 . In these cases, there is a lot of computational o v erkill in fitting a GP , when a linear mo del (LM) will fit just as w ell. LMs, whic h can b e seen as a limiting case of the GP , a lso av oid num erous issues to do with n umerical instabilit y . It may therefore b e desirable to b e able to c ho ose a daptiv ely b et w een a LM and a GP . The goal of this pap er is to link GPs with standard linear mo dels . One ma jor b ene fit is the reten tion of mo deling flexibilit y while greatly improving the computational situation. W e can make further gains b y com bining this union with treed GPs (Gramacy and Lee, 2008), and we demonstrate results f o r b oth treed G Ps as w ell as for stationary GP mo dels. The remainder o f the pap er is org anize d as follows. Section 2 reviews the Gaussian pro cess (GP) a nd the treed GP . Section 3 in tro duces the concept o f the limiting linear mo del (LLM) of a G P . Therein w e argue that, without further in terven tion, none of the limiting parameterizations, whic h are at the extreme s of the parameter space, lead to a feasible mo del selection prior. How ev er, a more thorough exploratory analysis rev eals that there is a bro ad con tinuum of GP par a me terizations that b eha v e lik e the LM and, imp ortan tly , tend to ha v e high p osterior supp ort when the data is indeed linear. It is t hese parameterizations whic h w e use to motiv ate our prior, g iv en in Section 4, in order to seek out a more parsimonious mo del. W e prop ose a laten t v ariable for mulation that leads to a prior o ver a family of semi- parametric mo dels lying b et w een the GP and its L L M that can b e used to in ves tigate the nature of the influence (in terms of linear ve rsus non- linear) of predictors on the resp onse. Section 5 co ve rs some details p ertaining to efficien t implemen t a tion, and giv es the results of 1 W e use “smo oth” in this co lloquial sens e thr oughout to mean near ly linear, o r the oppos ite o f “ wavy”, as oppo sed to the mo r e technical “infinitely differentiable” sense. 2 extensiv e experimen tation on real and syn thetic data, a s w ell a s a comparison of our metho ds to other mo dern approa c hes to regression. Section 6 concludes with a brief discussion. 2 Gaussian Pro ce sses and T reed Gaussian Pro ce sses Consider the follo wing Ba y esian hierarc hical mo del for a GP with a linear mean f or n inputs X of dimension m X , a nd n resp onses y : y | β , σ 2 , K ∼ N n ( F β , σ 2 K ) σ 2 ∼ I G ( α σ / 2 , q σ / 2) β | σ 2 , τ 2 , W ∼ N m ( β 0 , σ 2 τ 2 W ) τ 2 ∼ I G ( α τ / 2 , q τ / 2) (1) β 0 ∼ N m ( µ , B ) W − 1 ∼ W (( ρ V ) − 1 , ρ ) where F = ( 1 , X ) has m = m X + 1 columns. N , I G and W are the Normal, In v erse-Gamma and Wishart distributions, res p ectiv ely . Constan ts µ , B , V , ρ, α σ , q σ , α τ , q τ are treated a s kno wn. The matrix K is constructed from a function K ( · , · ) o f the form K ( x j , x k ) = K ∗ ( x j , x k ) + g δ j,k where δ · , · is the Kronec ker delta function, g is called the nugget parameter and is included in order to in terject measuremen t error (or random noise) in to the sto c hastic pro cess , and K ∗ is a true correlation whic h w e t a k e to b e from the separable p ow er fa mily with p i = 2: K ∗ ( x j , x k | d ) = exp ( − m X X i =1 ( x ij − x ik ) p i d i ) . (2) Generalizations are straigh tforw a rd, e.g., see Section 6. The sp ecification o f prior s for K , K ∗ , and their parameters d a nd g will b e deferred until later, as their construction will b e a central part o f this pap er. The separable p o w er fa mily allows some input v ariables to b e mo deled as more highly correlated than others. The isotropic p o w er family is a sp ecial case (when d = d i and p = p i , f o r i = 1 , . . . , m X ). P osterior inference and estimation is straigh tfo rw ard using the Metropolis–Hastings (MH) 3 and G ibbs sampling a lgorithms (Gramacy and Lee, 2008). It can b e sho wn tha t the regres- sion co efficien ts ha ve full conditionals β | rest ∼ N m ( ˜ β , σ 2 V ˜ β ), and β 0 | rest ∼ N m ( ˜ β 0 , V ˜ β 0 ), where V ˜ β = ( F ⊤ K − 1 F + W − 1 /τ 2 ) − 1 , ˜ β = V ˜ β ( F ⊤ K − 1 y + W − 1 β 0 /τ 2 ) , (3) V ˜ β 0 = B − 1 + W − 1 P R ν =1 ( σ τ ) − 2 − 1 , ˜ β 0 = V ˜ β 0 B − 1 µ + W − 1 P R ν =1 β ( σ τ ) − 2 . Analytically integrating out β and σ 2 giv es a marg inal p o s terior for K (Berger et a l., 2001) that can b e used to o btain efficien t MH draw s. p ( K | y , β 0 , W , τ 2 ) = | V ˜ β | (2 π ) − n | K || W | τ 2 m 1 2 q σ 2 α σ / 2 Γ 1 2 ( α σ + n ) 1 2 ( q σ + ψ ) ( α σ + n ) / 2 Γ α σ 2 p ( K ) , (4) where ψ = y ⊤ K − 1 y + β ⊤ 0 W − 1 β 0 /τ 2 − ˜ β ⊤ V − 1 ˜ β ˜ β . The pr edicted v alue of y at x is normally distributed with mean and v ariance ˆ y ( x ) = f ⊤ ( x ) ˜ β + k ( x ) ⊤ K − 1 ( y − F ˜ β ) , ˆ σ ( x ) 2 = σ 2 [ κ ( x , x ) − q ⊤ ( x ) C − 1 q ( x )] , (5) where ˜ β is the p osterior mean estimate of β , C − 1 = ( K + τ 2 FWF ⊤ ) − 1 , q ( x ) = k ( x ) + τ 2 FWf ( x ) , κ ( x , y ) = K ( x , y ) + τ 2 f ⊤ ( x ) Wf ( y ), f ⊤ ( x ) = (1 , x ⊤ ), a nd k ( x ) is a n − v ector with k ( x ) j = K ( x , x j ), for all x j ∈ X , the training data. A treed GP (Gramacy and Lee, 2008) is a generalization of the CAR T (Classification and Regression T ree) mo del ( Chipman et al., 1 998) that uses GPs at the leav es of the tree in place of the usual constan t v alues or the linear regressions of Chipman et al. (20 02 ). The Ba y esian in terpretation requires a prior b e placed on the tree and GP parameterizations. Sampling commences with Rev ersible Jump (R J) MCMC whic h allo ws for a sim ultaneous fit o f the tree and t he GPs at its leav es. The predictiv e surface can b e discon tin uous across 4 the pa r t ition b oundaries of a pa rticular t r ee T . Ho wev er, in the aggr ega te of samples col- lected from the joint p osterior distribution of {T , θ } , the mean tends to smo oth out near lik ely partition b oundaries as t he RJ–MCMC integrates ov er trees and GPs according to the p osterior distribution (Gramacy and Lee, 2008). The treed GP a pproac h yields an extremely fast implemen ta tion of nonstationary GPs, pro viding a divide-and-conquer approach to spatial mo deling. Soft w are implemen t ing the treed G P mo del and all of its sp ecial cases (e.g., stationary GP , CAR T & the treed linear mo del, linear mo del, etc.), including the extensions discussed in this pap er, is av ailable as an R pac k age ( R Dev elopmen t Core T eam , 2004), and can b e obtained fr o m CRAN: http://www. cran.r-proj ect.org/web/packages/tgp/ind ex .html . The pack age implemen ts a family of default prior sp ecific ations for the know n constan t s in Eq. ( 1 ), and those describ ed in the follow ing sections, whic h are use d throughout unless otherwise noted. F or more details see the tgp do cumen tation (Gramacy and T addy, 2 008) and t ut o rial (Gramacy, 2 0 07 ). 3 Limiting Lin ear Mo dels A sp ec ial limiting case of the GP mo del is t he standard linear mo del (LM). Replacing the top (like liho o d) line in the hierarchic al mo del give n in (1) y | β , σ 2 , K ∼ N n ( F β , σ 2 K ) with y | β , σ 2 ∼ N n ( F β , σ 2 I ) , where I is the n × n iden tit y matrix, giv es a parameterization of a LM. F rom a phenomeno- logical p erspectiv e, G P regression is more flexible than standa r d linear r e gression in that it can capture nonlinearities in the in teraction b et w een co v ariates ( x ) and resp onses ( y ). F ro m a mo deling p ersp ec tiv e, the GP can b e more than just o v erkill for linear data. P arsimony 5 and o v er-fitting considerations are just the tip of the iceb erg. It is also unnecessarily compu- tationally exp ensiv e, as well as n umerically unstable. Sp ecifically , it requires the in vers ion of a large co v ariance matrix—an op eration whose computing cost grows with the cub e of the sample size, n . Moreov er, large finite d para me ters can b e problematic from a n umerical p erspectiv e b ecause, unless g is a ls o large, the resulting co v ariance matrix can b e n umerically singular when the off-diag o nal elemen ts of K are nearly one. It is common practice t o scale the inputs ( x ) either to lie in the unit cub e, or to hav e a mean of zero and a range of one. Scaled data and mostly linear predictiv e surfaces can result in almost singular co v ariance matrices ev en whe n the range parameter is relativ ely small (2 < d i ≪ ∞ ). So for some parameterizations, the GP is operat io nally equiv alen t to the limiting linear mo del (LLM), but comes with none of it s benefits (e.g. sp eed and stabilit y). As this pap er demonstrates, exploiting and/or manipulating suc h equiv alence can b e of great practical benefit. As Ba ye sians, this means constructing a prior distribution on K that makes it clear in whic h situations eac h mo del is preferred ( i.e., when should K → c I ?). Our k ey idea is to sp ecify a prior on a “jumping” criterion b et w een the G P and its LLM b y taking adv antage of a latent v ariable formulation, th us setting up a Bay esian mo del selection/a veraging framew o rk. Theoretically , there are only tw o para me terizations of a GP correlatio n structure ( K ) whic h enco de the LLM. Though they are indeed w ell–kno wn, without interv en tion they are quite unhelpful from the p erspectiv e of pr ac t ic al estimation and inference. The first one is when the range parameter ( d ) is set to zero. In this case K = ( 1 + g ) I , a nd the result is clearly a linear mo del. The other parameterization ma y b e less ob vious. Cressie (1991, Section 3.2.1) analyzes t he “effect of v ariog ram parameters on kriging ” pa ying sp ecial attention to the nugget ( g ) and it s inte raction with the range parameter. He remarks that the larger the nugget the more the kriging in terp olator smo othes and in the limit predicts with the linear mean. P erhaps mor e relev an t to the forthcoming discussion is 6 his later remarks on the in terpla y b et we en the range a nd n ugget para me ters in determining the kriging n eighb orho o d . Specifically , a la r g e n ugget coupled with a large ra nge dr ives the in terp olator to wards the linear mean. T his is refreshing since constructing a prior for the LLM b y ex ploiting the former G P para me terization (range d → 0 ) is difficult, and for the latter (n ugget g → ∞ ) near imp o s sible. W e regard these para me terizations, which are situated at the extremes of the para me ter space, as a dead–end a s far as serving as the ba sis for a mo del–sele ction prior. F ortunately , Cressie’s though ts on the kriging neigh b orho o d rev eal that an (essen tially) linear mo del ma y b e atta inable with no nz ero d and finite g . 3.1 Exploratory analysis Here w e shall conduct an exploratory analysis to study the kriging neighborho o d and lo ok for a platform from which to “jump” to the LLM. The analysis will fo cus on studying lik eliho o ds and p osteriors for G Ps fit to data generated from the linear mo del y i = 1 + 2 x i + ǫ , where ǫ i iid ∼ N (0 , 1) (6) using n = 10 ev enly spaced x -v alues in the range [0 , 1]. 3.1.1 GP likelih o o d s on linear data Figure 1 sho ws t w o in teresting samples from (6). Also plot ted are the generating line (dot- dashed), the maximum lik eliho o d (ML) linear mo del ( ˆ β ) line (dashed), the predictiv e mean surface of the ML G P , maximized ov er r a nge d , i.e., the one–v ector d , n ugget g , and [ σ 2 | d, g ] (solid), a nd its 9 5% error bars (dotted). The ML v alues of d and g are also indicated in eac h plot. The GP lik eliho o ds were ev aluated for ML estimates of the regression co efficien ts ˆ β . 7 Conditioning o n g and d , the ML v ariance w as computed b y solving 0 ≡ d dσ 2 log N n ( y | F ˆ β , σ 2 K ) = − n σ 2 + ( y − F ˆ β ) ⊤ K − 1 ( y − F ˆ β ) ( σ 2 ) 2 . This gav e an MLE with the fo r m ˆ σ 2 = ( y − F ˆ β ) ⊤ K − 1 ( y − F ˆ β ) /n . F or the linear mo del the lik eliho o d w a s ev aluated as P ( y ) = N 10 ( F ˆ β , ˆ σ 2 I ), and for the GP as P ( y | d, g ) = N 10 h F ˆ β , ˆ σ 2 K { d,g } i , where K { d,g } is the co v ariance matrix generated using K ( · , · ) = K ∗ ( · , ·| d )+ g δ · , · for K ∗ ( · , ·| d ) from the p o w er family with range parameter d . 0.0 0.2 0.4 0.6 0.8 1.0 1.0 1.5 2.0 2.5 10 samples from y=1+2x+e, e~N(0,1) x y d = 0 g = 0 0.0 0.5 1.0 1.5 2.0 0 1 2 3 4 likelihood: Linear & GP d likelihood: Linear & GP(d,nug) 0.0 0.2 0.4 0.6 0.8 1.0 1.0 1.5 2.0 2.5 3.0 10 samples from y=1+2x+e, e~N(0,1) x y d = 2 g = 0.41667 0.0 0.5 1.0 1.5 2.0 0 20 40 60 80 100 likelihood: Linear & GP d likelihood: Linear & GP(d,nug) Figure 1: Tw o sim ulations ( r ows ) from y i = 1 + 2 x i + ǫ i , ǫ i ∼ N (0 , 1). L eft column sho ws GP fit (solid) with 95% error bars (dotted), maxim um lik eliho o d ˆ β (dashed), and generating linear mo del ( β = (1 , 2)) (dot-dashed). Right column sho ws GP( d, g ) lik eliho o d surfaces, with each curv e represen ting a different v alue of the nugget g . The (maxim um) lik eliho o d ( ˆ β ) of the linear mo del is indicated b y the solid horizon tal line. Both samples and fits plotted in Figure 1 ha ve linear lo oking predictiv e surfaces, but only fo r the one in the top row do es the linear mo del ha v e the maxim um lik eliho o d. Though 8 the predictiv e surface in the b ottom-left panel could b e mistak en as “linear”, it was indeed generated from a GP with lar ge range parameter ( d = 2) and mo dest n ugget setting ( g ) as this par ame terization had higher lik eliho o d than the linear mo del. The right column of Figure 1 sho ws lik eliho o d surfaces corresp onding to the samples in the left column. Eac h curv e corresp onds to a differen t setting of the n ugget, g . Also sho wn is the like liho o d v alue of the MLE ˆ β of the linear mo del (solid horizontal line). The lik eliho o d surfaces for eac h sample lo ok drastically differen t. In t he top sample the LLM ( d = 0) uniformly dominates all other GP parameterizations. Contrast this with the lik eliho o d of t he second sample. There, the resulting predictiv e surface lo oks linear, but the lik eliho o d of the L L M is comparat ively lo w. 0 2 4 6 8 10 12 0.01 0.02 0.03 0.04 0.05 0.06 0.07 likelihood for increasing nugget (g) log(g) likelihood with d=1 Figure 2: Like liho o ds as the nu gget gets large for single sample of size n = 10 0 from Eq. ( 6). The x -axis is (log g ), the range is fixed at d = 1 ; the likelih o o d of the LLM ( d = 0) is shown for comparison. Figure 2 illustrates the other LLM parameterization b y sho wing how , a s the n ugget g increases, the lik eliho o d of the G P approaches that of t he linear mo del for a sample of size n = 100 from (6) with d fixed to one. Observ e that t he n ugget m ust b e quite la rge relative to the actual v ariability in the data b efore the like liho o ds of the G P and LLM b ecome comparable. Most sim ulations from (6) gav e predictiv e surfaces like the upp er left of Figure 1 and 9 0.0 0.2 0.4 0.6 0.8 1.0 1.0 1.5 2.0 2.5 3.0 10 samples from y=1+2x+e, e~N(0,1) x y d = 0.032064 g = 0.16667 0.0 0.5 1.0 1.5 2.0 0 5 10 15 20 25 30 likelihood: Linear & GP d likelihood: Linear & GP(d,nug) 0.0 0.2 0.4 0.6 0.8 1.0 1.0 1.5 2.0 2.5 3.0 10 samples from y=1+2x+e, e~N(0,1) x y d = 0.024048 g = 0 0.0 0.5 1.0 1.5 2.0 0 10 20 30 40 likelihood: Linear & GP d likelihood: Linear & GP(d,nug) Figure 3: GP( d, g ) fits ( left ) and lik eliho o d surfaces ( right ) for tw o of samples of the LM (6). corresp onding lik eliho o ds like the upp er-right . But this is not alwa ys the case. Occasionally a sim ulation w ould giv e high lik eliho o d to GP parameterizations if the sample w as slow ly w aving. This is not uncommon for small sample sizes suc h as n = 10 . F or example, consider those show n in Figure 3. W avines s b ecomes less lik ely as the sample size n grow s. Figure 4 summarizes the ra tio of the ML GP parameterization ov er the ML LM based on 1000 simulations of ten ev enly spaced random draws from (6). A lik eliho o d ra t io of one means that t he LM w as b est for a particular sample. The 90%-quantile histogram and summary statistics in F igure 4 sho w that the GP is seldom m uc h b etter than the LM. F or some samples the ratio can b e really large ( > 9000) in fa vor of the GP , but more than t wo-thirds of the ratios are close to one—approx imately 1/3 (362 ) w ere exactly one but 2/ 3 (616) ha d ratios less than 1.5. This means tha t the p osterior inference fo r b o rderline linear data is lik ely to dep end hea vily the prior sp ec ification of K ( · , · ). 10 ratio: GP/Linear Likelihoods maximum likelihood Frequency 2 4 6 8 10 0 100 200 300 400 500 600 700 Summary: Min 1.000 1st Q u. 1.0 00 Median 1.141 Mean 19.160 3rd Qu. 2.619 Max 9419.0 Figure 4 : Histogram of the ra tio of the maxim um lik eliho od G P pa ramete rization o ver the lik eliho o d of the limiting linear mo del with full summary statistics. F or visual clarit y , the upp er tail of the histogram is not sho wn. F or some of the smaller n ugget v alues, in particular g = 0, a nd larger range settings d , the lik eliho o ds for the GP could no t b e computed b ecaus e the corresp onding co v ariance matrices w ere n umerically singular, and could not b e decomp osed. This illustrates a phenomenon noted b y Neal ( 1997) who adv o cates tha t a non-zero n ug get (o r jitter ) should b e included in the mo del to increase n umerical stability . Numerical instabilities may also b e a v oided by allo wing p i < 2 in (2), by using t he Mat´ ern family of correlation functions (see Section 6), or by simply using an LM where appropria te. 3.1.2 GP p osteriors on linear data Supp ose that rather tha n examining the m ultiv ariate normal lik eliho o ds of the linear and GP mo del, using the ML ˆ β and ˆ σ 2 v alues, the marginalized p oste rior p ( K | y , β 0 , τ 2 , W ) of Eq. (4) w as used, whic h in tegrates out β and σ 2 . Using (4) requires sp ecifi cation of the prior p ( K ), whic h for the p ow er family means sp ecifying p ( d, g ). Alternativ ely , one could consider dropping the p ( d, g ) term from (4) and lo ok solely a t the marginalized lik eliho o d. Ho we v er, in ligh t o f the argumen ts ab o v e, there is reason to b eliev e t ha t the prior sp ecific ation will 11 carry significant w eigh t. If it is susp ected that the data might b e linear, this bias should b e someho w enco ded in the prior. This is a no n- trivial task, giv en the nature of the G P parameterizations whic h enco de the LLM. Pushing d to wards zero is problematic b ecause small non-zero d causes the predictiv e surface to b e quite wiggly—certainly far fr o m linear. D ec iding ho w small t he range parameter ( d ) should b e b efore treating it as zero—a s in Sto c hastic Searc h V ariable Selection (SSVS) of Georg e a nd McCullo c h (19 93 ), or Chapter 12 of Gilks et al. (1996)—while still allo wing a GP to fit t ruly non-linear data is no simple task. The large n ug g e t approa c h is also out o f the question b ecause putting increasing prior densit y on a parameter as it gets large is imp ossible. Rescaling the resp onses migh t w ork, but constructing the prior w ould b e nontrivial. Moreo v er, suc h an appro a c h w ould preclude its use in man y applications, particularly for adaptiv e sampling or sequen tial design of experiments when one hop es to learn a b out the range of resp onse s, and/or search for extrema. Ho wev er, we hav e seen that for a contin uum of large d v alues (say d > 0 . 5 on the unit in terv al) the predictiv e surface is practically linear. Consider a mixture of gammas prior fo r d : p ( d, g ) = p ( d ) p ( g ) = p ( g )[ G ( d | α = 1 , β = 20) + G ( d | α = 10 , β = 10)] / 2 . (7) It giv es ro ughly equal mass to small d (mean 1 / 20) represen ting a p opulation of GP parame- terizations for w a vy surfaces, and a separate p opulation fo r tho s e whic h are quite smo oth or appro ximately linear. Figure 8 depicts p ( d ) via a histog ram, ignoring p ( g ) whic h is usually tak en to b e a simple exp onen tial distribution. Alternativ ely , one could enco de t he prior as p ( d, g ) = p ( d | g ) p ( g ) and then use a reference prior (Berger et al., 20 01 ) for p ( d | g ) . W e c hose the more deliberate, indep enden t, sp ecification in order to enco de our prio r b elief that there are essen tially tw o kinds of pro cess es: w a vy (small d ) and smo oth (larg e d ). Obse rv e 12 that d = 0 is “closer” to the w avy parameterizations—in fact it lies at the limit of extreme w avine ss. W e argue, how ev er, that extreme smo othness ma y not only b e a more intuitiv e de- piction of linearit y , but that lar ge d -v alues (though further aw a y) serv e as a b etter platf o rm from whic h to jump to the LLM. Ev aluation of the marginalized p o s terior (4) requires settings f or the prio r mean co effi- cien ts β 0 , co v ariance τ 2 W , and hierarc hical sp ecifications ( α σ , γ σ ) for σ 2 . F or no w, these parameter settings are fixed to those whic h w ere know n to g enerate the data. 0.0 0.2 0.4 0.6 0.8 1.0 1.0 1.5 2.0 2.5 3.0 10 samples: y=1+2x+e, e~N(0,0.25) x y d = 0.9697 g = 0.066667 s2 = 1.0358 0.0 0.2 0.4 0.6 0.8 1.0 1.5 2.0 2.5 3.0 10 samples: y=1+2x+e, e~N(0,0.25) x d = 0.84848 g = 0.016667 s2 = 1.5625 0.0 0.2 0.4 0.6 0.8 1.0 1.5 2.0 2.5 10 samples: y=1+2x+e, e~N(0,0.25) x d = 0.020202 g = 0 s2 = 0.25417 0.0 0.5 1.0 1.5 2.0 0.0 0.2 0.4 0.6 0.8 1.0 likelihood : Linear & GP(d,nug) d likelihood : Linear & GP(d,nug) 0.0 0.5 1.0 1.5 2.0 0 1 2 3 4 5 likelihood : Linear & GP(d,nug) d 0.0 0.5 1.0 1.5 2.0 0.00 0.01 0.02 0.03 0.04 0.05 0.06 0.07 likelihood : Linear & GP(d,nug) d 0.0 0.5 1.0 1.5 2.0 0.00 0.02 0.04 0.06 0.08 posterior : Linear & GP(d,nug) d posterior : Linear & GP(d,nug) 0.0 0.5 1.0 1.5 2.0 0.0 0.5 1.0 1.5 2.0 2.5 3.0 posterior : Linear & GP(d,nug) d 0.0 0.5 1.0 1.5 2.0 0.000 0.005 0.010 0.015 0.020 0.025 0.030 posterior : Linear & GP(d,nug) d Figure 5: T op r ow shows the G P( d , g ) fits; Midd le r ow sho ws lik eliho ods and b ottom r ow sho ws the integrated p osterior distribution for range ( d , x-a x is) and nugget ( g , lines) settings for three samples, one p er eac h column. Note that s2 ≡ ˆ σ 2 in the top ro w legend(s). Figure 5 sho ws three samples fro m the linear mo del (6) along with lik eliho o d and p osterior surfaces. Occasionally the lik eliho o d and p osterior lines suddenly stop due to a numerically 13 unstable parameterization (Neal, 1997). The G P fits sho wn in the to p row of the fig ure are based on t he maxim um a p osteriori (MAP) estimates of d , g , and σ 2 . The p osteriors in the b ottom row clearly sho w the influence of the prior. Nev ertheless, the p osterior densit y for la r ge d - v alues is disprop ortionately high relative to t he prior. Larg e d - v alues represen t at least 9 0% of the cum ulativ e p osterior distribution. Samples from these p osteriors w ould yield mo stly linear predictiv e surfaces. The last sample is particularly in teresting as we ll as b eing the most represen tativ e. Here, the LLM ( d = 0) is the MAP G P parameterization, and uniformly do min ates all o ther para me terizations in p osterior densit y . Still, the cum ulat ive p osterior densit y f a v o rs large d -v alues, th us fav oring linear “lo oking” predictiv e surfaces ov er the actual (limiting) linear parameterization. Figure 6 ( top left ) shows a represen tative MAP GP fit for a sample of size n = 10 0 fro m (6). Since larger samples ha v e a low er probabilit y of coming out w avy , t he lik eliho o d of the LLM (on the right ) is m uch higher than other GP parameterizations, though it is sev erely p eak ed. Small, nonzero, d - v alues ha v e extremely lo w lik eliho o d. By con trast, the p osterior in the b ottom panel puts high densit y on larg e d - v alues. The MAP predictiv e surface ( top left ) ha s a very small, but noticeable, amoun t of curv ature. Ideally , linear lo oking predictiv e surfaces should not hav e to b ear the computational burden implied b y full–fledged GPs. But since the LLM ( d = 0) is a p oint-mass (whic h is the only parameterization that a ctually giv es an iden tity cov aria nc e matrix), it has zero probability under the p osterior. It w ould nev er b e sampled in an MCMC, ev en when it did happ en to b e the MAP estimate. 3.1.3 GP p osteriors and lik eliho o ds on non-linea r data F or completeness, Figure 7 and sho ws fits, likelihoo ds, and p osteriors on non-linear data. The first column of Figure 7 corresp onds t o a sample with quadratic mean, a nd eac h successiv e column corresp onds to a sample whic h is increasingly wa vy . Eac h sample is o f size n = 50 . The shap e of the prior loses its influence as the dat a b ecome more non-linear. Altho ugh in 14 0.0 0.2 0.4 0.6 0.8 1.0 0.5 1.0 1.5 2.0 2.5 3.0 3.5 100 samples: y=1+2x+e, e~N(0,0.25) x y d = 0.9899 g = 0.066667 s2 = 1.3025 0.0 0.5 1.0 1.5 2.0 0e+00 1e−09 2e−09 3e−09 4e−09 likelihood : Linear & GP(d,nug) d likelihood : Linear & GP(d,nug) 0.0 0.5 1.0 1.5 2.0 0e+00 1e−38 2e−38 3e−38 4e−38 5e−38 6e−38 posterior : Linear & GP(d,nug) d posterior : Linear & GP(d,nug) Figure 6: T op-left sho ws the G P( d , g ) fit with a sample of size n = 100; top-right sho ws the lik eliho o d a nd b ottom-right sho ws the in t egr a ted p osterior f or range ( d , x-axis) and n ugget ( g , lines) settings. all three cases the MLEs do not correspo nd to the MAP estimates, the corresp onding ML and MAP predictiv e surfaces lo ok remark ably similar (not shown ). This is probably due to the fact that t he p osterior integrates out β and σ 2 , whereas the lik eliho ods we re computed with p oin t estimates of these pa ramete rs. 4 Mo del sel ection prio r With the ideas outlined abov e, we set out to construct a prior for the “mixture” of the GP with its LLM b y fo cus ing o n large range parameters rather than d = 0 or g → ∞ . The k ey idea is an augmentation of the parameter space b y m X laten t indicator v ariables b = { b } m X i =1 ∈ { 0 , 1 } m X . The b o olean b i is in tended to select either the G P ( b i = 1) or its 15 0.0 0.2 0.4 0.6 0.8 1.0 0 1 2 3 50 samples: y=3x^2+e, e~N(0,0.25) x y d = 0.94949 g = 0.025 s2 = 2.7426 0.0 0.2 0.4 0.6 0.8 1.0 −1.5 −1.0 −0.5 0.0 0.5 50 samples: y=−20(x−0.5)^3+2x−2+e, e~N(0,0.25) x d = 0.090909 g = 0.088889 s2 = 0.64505 0.0 0.2 0.4 0.6 0.8 1.0 −1.0 −0.5 0.0 0.5 1.0 50 samples: y=sin(pi*x/5) + 0.2*cos(4pi*x/5), e~N(0,0.18) x d = 0.018788 g = 0.066667 s2 = 0.43093 0.0 0.5 1.0 1.5 2.0 0e+00 1e−05 2e−05 3e−05 4e−05 5e−05 likelihood : Linear & GP(d,nug) d likelihood : Linear & GP(d,nug) 0.0 0.1 0.2 0.3 0.4 0.5 0e+00 1e−05 2e−05 3e−05 4e−05 5e−05 6e−05 likelihood : Linear & GP(d,nug) d 0.00 0.01 0.02 0.03 0.04 0.05 0.06 0.00 0.05 0.10 0.15 likelihood : Linear & GP(d,nug) d 0.0 0.5 1.0 1.5 2.0 0e+00 2e−12 4e−12 6e−12 8e−12 posterior : Linear & GP(d,nug) d posterior : Linear & GP(d,nug) 0.0 0.1 0.2 0.3 0.4 0.5 0.0e+00 5.0e−11 1.0e−10 1.5e−10 2.0e−10 2.5e−10 3.0e−10 posterior : Linear & GP(d,nug) d 0.00 0.01 0.02 0.03 0.04 0.05 0.06 0.0e+00 2.0e−07 4.0e−07 6.0e−07 8.0e−07 1.0e−06 1.2e−06 posterior : Linear & GP(d,nug) d Figure 7: T op r ow shows the G P( d , g ) fits; Midd le r ow sho ws lik eliho ods and b ottom r ow sho ws the integrated p osterior distribution for range ( d , x-a x is) and nugget ( g , lines) settings for four samples, one p er each column .As the samples b ec ome less linear the d -axis (x-axis) shrinks in order to f o cus in on the mo de. LLM for the i th dimension. The actual range parameter used b y the correlation function is multiplied by b : e.g. K ∗ ( · , ·| bd ) 2 . T o enco de our preference that GPs with larger ra nge parameters should b e more like ly to “jump” to the L L M , the prior on b i is sp ecified as a function o f the range parameter d i : p ( b i , d i ) = p ( b i | d i ) p ( d i ). Probabilit y mass functions whic h increase as a function o f d i , e.g., p γ ,θ 1 ,θ 2 ( b i = 0 | d i ) = θ 1 + ( θ 2 − θ 1 ) / (1 + exp {− γ ( d i − 0 . 5) } ) (8) 2 i.e., comp onen t–w is e m ultiplication—like the “ b .* d ” o peration in Matl ab 16 p(d) = G(1,20)+G(10,10) and p(b|d) d density 0.0 0.5 1.0 1.5 2.0 0.0 0.5 1.0 1.5 2.0 2.5 3.0 p(b) = 1 p(b|d) Figure 8: Histogram of the mixture o f gammas prior p ( d ) as giv en in Eq. (7), with the prior distribution for the b o olean ( b ) superimp osed on p ( d ) from Eq.(8) using ( γ , θ 1 , θ 2 ) = (10 , 0 . 2 , 0 . 95) . with 0 < γ and 0 ≤ θ 1 ≤ θ 2 < 1, can enco de suc h a preference b y calling for the exclusion o f dimensions i with with large d i when constructing K . Th us b i determines whether the GP or the LLM is in c harge of the marginal pro cess in the i th dimension. Accordingly , θ 1 and θ 2 represen t minim um and maxim um probabilities of jumping to the LLM, while γ gov erns the rate at which p ( b i = 0 | d i ) grows to θ 2 as d i increases. Figur e 8 plots p ( b i = 0 | d i ) for ( γ , θ 1 , θ 2 ) = (1 0 , 0 . 2 , 0 . 95) sup erimp osed on the mixture of gammas prior p ( d i ) fro m (7). The θ 2 parameter is taken t o b e strictly less than one so as not to preclude a GP which mo dels a genuin ely nonlinear surface using a n uncommonly larg e range setting. The implied prio r probability of the full m X -dimensional LLM is p (linear mo del) = m X Y i =1 p ( b i = 0 | d i ) = m X Y i =1 θ 1 + θ 2 − θ 1 1 + exp {− γ ( d i − 0 . 5) } . (9) Observ e that the resulting pro cess is still a GP if an y of the b o oleans b i are one. The primary computatio na l adv an ta ge asso ciated with the L L M is foregone unless all of the b i ’s are zero. How ev er, the in termediate result offers an impro v emen t in n umerical stability in addition to describing a unique tra nsitiona r y mo del lying somewhere b et w een the GP and the LLM. Sp e cifically , it allo ws for the implem en tat io n of sem iparametric sto c hastic pro cesses lik e 17 Z ( x ) = β f ( x ) + ε ( ˜ x ), represen ting a piecemeal spatial extension of a simple linear mo del. The first part ( β f ( x )) of the pro ces s is linear in some known function of the full set of cov aria tes x = { x i } m X i =1 , and ε ( · ) is a spatial random pro cess (e.g., a GP) whic h a cts on a subset of the co v ariates ˜ x . Such mo dels a re commonplace in the statistics comm unit y (Dey et al., 1998). T raditionally , ˜ x is determined and fixed a priori . The separable b o olean prior in (8 ) implemen ts a n adaptiv ely semiparametric pro cess where the subset ˜ x = { x i : b i = 1 , i = 1 , . . . , m X } is giv en a prior distribution, instead of b eing fixed. So ev en if the computational adv an tage of the LLM is not presen t b ecause some b i 6 = 0 w e still hav e that a n y b i = 0 setting releases us fro m the burden of sampling the corr esp onding d i . It also imparts on us the kno wledge that the resp onse has (at b est) a linear relationship with the i th co v ariat e . This approac h ma y also increase the scop e for analysis of higher dimensional datasets, where data sparseness in higher dimensions (the “curse o f dimensionalit y”) can b e ameliorated by using linear mo de ls in most dimensions and GP mo dels only in the dimensions where they will ha v e the most effect, thus reducing the dimension of the GP mo del space. Note that if an isotropic correlatio n function is used, whic h has only a single range parameter, then only o ne b o olean b is needed, and the pro duct can b e dropp ed from (9). In this case, how ev er, the adv an tage of b eing able to detect linearit y , marginally , in eac h dimension is lost. 4.1 Prediction Prediction under the LLM parameterization of the GP mo del ( 5 ) can b e simplified when all o f the b o oleans are zero, whence it is kno wn that K = (1 + g ) I . A c haracteristic of the standard linear mo del is that all input configurations ( x ) are treated as independent conditional on know ing β . This additionally implies that in (5) the terms k ( x ) and K ( x , x j ) are zero for all x . Thus , the predicted v alue o f y at x is normally distributed with mean 18 ˆ y ( x ) = f ⊤ ( x ) ˜ β and v ariance σ 2 [1 + τ 2 f ⊤ ( x ) Wf ( x ) − τ 2 f ⊤ ( x ) WF ⊤ ((1 + g ) I + τ 2 FWF ⊤ ) − 1 FWf ( x ) τ 2 ] . It is helpful to re-write the ab o v e expression for the v ariance as ˆ σ ( x ) 2 = σ 2 [1 + τ 2 f ⊤ ( x ) Wf ( x )] (10) − σ 2 " 1 + τ 2 f ⊤ ( x ) Wf ( x ) − τ 2 1 + g f ⊤ ( x ) WF ⊤ I + τ 2 1 + g FWF ⊤ − 1 FWf ( x ) τ 2 # . A matrix in ve rsion lemma called the W o o dbury form ula (Golub and V an Loan, 199 6, pp. 51) or the Sherman–Morrison–W o o dbury form ula (Bernstein, 2005, pp. 67 ) states that for ( I + V ⊤ A V ) no n-singular ( A − 1 + V V ⊤ ) − 1 = A − ( A V )( I + V ⊤ A V ) − 1 V ⊤ A . T aking V ≡ F ⊤ (1 + g ) − 1 2 and A ≡ τ 2 W in (10) g iv es ˆ σ ( x ) 2 = σ 2 " 1 + f ⊤ ( x ) W − 1 τ 2 + F ⊤ F 1 + g − 1 f ( x ) # . (11) Eq. (11) is no t o nly a simplification of the predictiv e v ariance give n in (5), but it should lo ok familiar. W riting V ˜ β with K − 1 = I / (1 + g ) in (3) gives V ˜ β = W − 1 τ 2 + F ⊤ F 1 + g − 1 and t hen: ˆ σ ( x ) 2 = σ 2 1 + f ⊤ ( x ) V ˜ β f ( x ) . (12) This is just the usual p osterior predictiv e densit y at x under the standard linear mo del: y ( x ) ∼ N [ f ⊤ ( x ) ˜ β , σ 2 (1 + f ⊤ ( x ) V ˜ β f ( x ))]. This means that w e hav e a c hoice when it comes to obtaining samples fr o m the p oste rior predictiv e distribution under the LLM. W e prefer (12) ov er (5) b ecaus e the latter in v olve s inv erting the n × n matrix I + τ 2 FWF ⊤ / (1 + g ), whereas the former only requires the in v ersion of an m × m matrix. Of course, if a n y of t he b o o leans are non-zero, then G P prediction mus t pro ceed as usual, fo llowing Eq. (5). 19 GP fits to t ypical nonlinear resp onse surfaces may seldom achiev e b i = 0 f o r all i . How- ev er, the fo llo wing section illustrates ho w treed partitioning can dr a matically increase the probabilit y o f jumping to the LL M pa ramete rization (in at least part of the input space) where the more thrifty predictiv e equations (12) ma y b e exploited. 5 Implemen t a tion, result s, and comparisons Here, the GP with jumps to the LLM (hereafter G P LLM) is illustrated on syn thetic and real data. This w or k grew out of researc h fo cuse d on extending the reac h of the treed GP mo del presen ted by Gramacy a nd Lee (2008), whereb y the data are r e cursiv ely partitioned and a separate GP is fit in each partition. Th us most of our experimen ts are in this context, though in Section 5.3 w e demonstrate an example without treed partitioning. P artition mo dels are an ideal setting for ev aluating the utilit y of the GP LLM, as linearit y can b e extracted in large areas of the input space. The result is a uniquely tractable nonstationary semiparametric spatia l mo del. A separable correlation function is used t hro ughout this section for brevit y a nd consis- tency , ev en though in some cases the pro cess whic h generated the data is clearly isotropic. Prop osals for the b o oleans b are drawn from the prior, conditional o n d , and accepted and rejected o n the basis o f the constructed co v ariance matrix K . The same prior parameteriza- tions are used fo r a ll experiments unless o therw ise noted, the idea b eing to deve lop a metho d that works “right out of the b ox ” as muc h as p ossible. 5.1 Syn thetic exp onen tial data Consider the 2-d input space [ − 2 , 6] × [ − 2 , 6 ] in whic h the true resp onse is giv en by Y ( x ) = x 1 exp( − x 2 1 − x 2 2 ) + ǫ , where ǫ ∼ N (0 , σ = 0 . 001). Figure 9 summarizes the consequences of estimation and prediction with the treed GP LL M for a n = 200 random sub-sample of 20 x1 x2 Z(x1,x2) simple exponential data exp: areas under limiting linear model portion of area in domain (x) Density 0.0 0.2 0.4 0.6 0.8 0 2 4 6 8 Figure 9: L eft: ex p onen tial data GP LLM fit. Right: histogram o f the areas under the LLM. this data from a regula r grid of size 441. The partitio ning structure of t he tr eed GP LLM first splits the region in to t wo halves , one of which can b e fit linearly . It then r ecursiv ely partitions the half with the action in to a piece whic h requires a GP and ano t he r piece whic h is also linear. The left pane sho ws a mean predictiv e surface wherein the LLM w as used o ve r 66% of the domain (on av erage) whic h was o btained in less than ten seconds on a 1.8 GHz A tha lo n. The right pane sho ws a histogra m of the areas of the domain under the LLM ov er 20-fold rep eated exp erimen ts. The four mo des of the histogram clump around 0%, 25%, 50%, and 75% sho wing tha t most often the ob vious three–quarters of t he space is under the LLM, although sometimes one of the t wo partitions will use a v ery smo oth G P . The treed GP L L M w as 40% fa ster than the treed GP alone when com bining estimation and sampling from the p osterior predictiv e distributions at the remaining n ′ = 24 1 p oin ts f r o m the grid. 5.2 Motorcycle Data The Motorcycle Acciden t D ataset (Silv erman, 1 985) is a classic for illustrating nonstationary mo dels. It samples the acceleration force on t he head of a mo t o rcy cle rider as a function of time in the first momen ts after an impact. Figure 1 0 show s the da t a and a fit using the 21 treed G P LLM. The plo t sho ws the mean predictiv e surface with 90% quan tile error bars, along with a t ypical partition. On a v erage, 29% of the domain w as under the LLM, split b et w een the left lo w–noise region (b efore impact) and the noisier righ tmost regio n. 10 20 30 40 50 −100 −50 0 50 Estimated Surface x z(x) Figure 10: Motorcycle Data fit by treed GP LLM. Rasm ussen a nd Ghahra mani (2 0 02 ) analyzed this data using a Dirichlet pro cess mixture of Gaussian pro cess (DPGP) exp erts whic h rep ortedly to ok one hour on a 1 GHz P en tium. Suc h times ar e typic al of inference under nonstationar y mo dels b ecause of the computational effort required to construct and in v ert larg e cov ariance matrices. In con trast, the treed GP LLM fits this dataset with comparable accuracy but in less than one minute on a 1 .8 GHz A tha lo n. W e identify three things whic h mak e the treed GP LLM so fast relativ e to most nonsta- tionary spatial mo dels. (1) P artitioning fits mo dels to less data, yielding smaller matrices to in vert. (2) Jumps to the LLM mean few er in v ersions all tog ethe r. (3) MCMC mixes b etter b ecaus e under the LLM the par a me ters d and g are out o f the picture and all sampling can b e p erformed via Gibbs steps. 22 5.3 F riedman data This F riedman dataset is the first one of a suite that w as used to illustrate MARS (Multi- v ariate Adaptive Regression Splines) (F riedman , 199 1 ). There are 10 co v ariates in the data ( x = { x 1 , x 2 , . . . , x 10 } ), but the function that describes the r esp onses ( Y ), observ ed with standard No r mal noise, E ( Y | x ) = µ = 10 sin( π x 1 x 2 ) + 20( x 3 − 0 . 5) 2 + 10 x 4 + 5 x 5 (13) dep end s only on { x 1 , . . . , x 5 } , th us com bining nonlinear, linear, and irrelev an t effects. W e mak e comparisons on this data to results pro vided for sev eral other mo dels in recen t lit- erature. Chipman et al. (2 002) used this data to compare their treed LM algorithm to four other methods of v arying parameterization: linear regress ion, greedy tree, MARS, and neural net w orks. The statistic they use for comparison is ro ot mean–squared error, RMSE = q P n i =1 ( µ i − ˆ Y i ) 2 /n , where ˆ Y i is the mo del–predicted resp onse fo r input x i . The x ’s are randomly distributed on the unit interv al. RMSE’s are gathered for fift y rep eated sim ulations of size n = 100 fr o m (13). Chipman et al. pro vide a nice collection of b o xplots sho wing the results. How ev er, they do not prov ide an y numeric al results, so we hav e ex- tracted some key num b ers fr om their plots and refer the reader to their pap er for the full results. W e duplicated the exp erimen t using b oth a stationary GP and our GP LLM. F o r this dataset, w e use a single mo del, not a treed mo del, as the f unc tion is esse n t ia lly stationary in the spatial statistical sens e (so if w e w ere to try to fit a treed GP , it w o uld kee p all of the data in a single partition). Linearizing b o o lean prior parameters ( γ , θ 1 , θ 2 ) = (1 0 , 0 . 2 , 0 . 9) w ere used, whic h ga ve the LLM a relativ ely lo w pr io r probability of 0.35, for larg e range parameters d i . The RMSEs that we obtained for the stationary GP and the GP L L M are summarized in the table b elo w. 23 Min 1st Qu. Median Mean 3rd Qu. Max GP LLM 0.4341 0.5743 0.6233 0.6258 0.6707 0.7891 GP 0.8196 0.8835 0.9131 0.9232 0.9 710 1 .0440 Linear 1.710 2.165 2.291 2.325 2.500 2.794 Results on the linear mo del are rep orted for calibration purp oses, and can b e seen to b e essen tially the same as those rep orted b y Chipman et al. RMSEs for the GP LLM are on av erage significan tly b etter than al l of those rep orted f or the ab o v e metho ds, with low er v ariance. F or example, the b est mean RMSE shown in the b o xplot is ≈ 0 . 9. That is 1.4 times higher than the w orst o ne we obtained for GP LLM. F urther comparison to the b o xplots pro vided b y Chipman et al. sho ws that the G P LLM is the clear winner. It is also clear that jumping to a linear mo del in the relev an t dimensions pro vides a more stable fit tha t gives impro ved predictiv e p erformance relative to a f ull (stationa r y ) GP . In fitting the mo del, the Mark ov Chain quic kly key ed in on the fact that only the first three cov ar iates con tr ibute nonlinearly . After burn–in, t he b o oleans b almost nev er devi- ated from (1 , 1 , 1 , 0 , 0 , 0 , 0 , 0 , 0 , 0). F rom the follo wing table summarizing the p osterior for the linear regression co efficien ts ( β ) w e can see that the coefficien t s for x 4 and x 5 (b e- t ween double-bars) w ere estimated accurately , and that the mo del correctly determined that { x 6 , . . . x 10 } w ere irrelev an t (i.e. not included in the GP , and had β ’s close to zero). x 4 x 5 x 6 x 7 x 8 x 9 x 10 5% Q u. 8.40 2.60 -1.23 -0.89 -1.82 -0.60 - 0.91 β Mean 9.75 4.59 -0 .1 90 0.049 -0.612 0.326 0.06 6 95% Qu. 10.99 9.98 0.92 1.00 0.68 1.21 1.02 F or a final comparison w e consider a Support V ector Mac hine (SVM) metho d ( D ruc k er et al., 1996) illustrated on this data and compared to Bagging (Breiman, 199 6 ). W e note tha t the SVM metho d required cross-v alidation (CV) to set some of its para me ters. In the compar i- son, 100 randomized training sets of size n = 200 w ere used, and RMSEs w ere collected for a (single) test set of size n ′ = 1000. An av erage MSE of 0.67 is rep orted, sho wing t he SVM to 24 b e uniformly b etter the Bagg ing metho d with an MSE of 2.2 6. W e rep eated the exp erimen t for the GP LLM (whic h requires no CV!), a nd obtained an a v erage MSE of 0.293 , whic h is 2.28 times b etter tha n t he SVM, and 7 .71 times b etter than Bagging. 5.4 Boston housing data A commonly used da tase t for v alidating m ultiv ariate mo dels is the Boston Housing Data (Harrison and Rubinfeld, 1978), whic h contains 506 resp onses o v er 1 3 co v ariates. Chipman et al. (2002) sho we d that their (Ba y esian) treed LM gav e low er RMSEs, o n a v erage, compared to a n umber of p opular tec hniques ( t he same ones listed in the previous section). Here w e em- plo y ed a treed GP LLM, which is a generalization of their treed LM, retaining the original treed LM as an accessible sp ecial case. Though computationally mo r e intens iv e than t he treed LM, the t r eed G P L L M giv es impressiv e results. T o mitigate some of t he computa- tional demands, the LLM can b e used to initialize the Mark ov Chain b y breaking the larger dataset into smaller partitions. Before treed GP burn–in b egins, the mo del is fit using only the faster (limiting) treed LM mo del. O nc e the tr e ed partitio ning has stabilized , this fit is tak en as the start ing v alue f or a f ull MCMC exploration of the po sterior for the treed GP LLM. This initialization pro cess allow s us to fit G Ps on smaller segmen ts of the data, reducing the size of matrices that need to b e in verted and greatly reducing computation time. F or the Boston Housing data we use ( γ , θ 1 , θ 2 ) = (10 , 0 . 2 , 0 . 95), whic h give s t he LLM a prior probability of 0 . 9 5 13 ≈ 0 . 51, when the d i ’s are large. Exp eriments in the Ba y esian t r eed LM pap er (Chipman et al., 2 002) consis t of calculating RMSEs via 1 0 -fold CV. The data are randomly partitioned in to 10 groups, iterativ ely trained on 9 /10 of the data, and tested on the remaining 1/10. This is rep eat ed for 20 random partitions, and b o xplots are sho wn. Note that the logarithm o f the resp onse is used and that CV is only used to assess predictiv e error, not to tune parameters. Samples are g a there d from the p osterior predictiv e distribution of the treed LM for six parameterizations using 20 25 restarts of 4000 it era t io ns . This seems excessiv e, but we follow ed suit for the treed G P LLM in order to obtain a fair comparison. Our “b ox plot” for training and testing RMSEs are summarized in the table b elo w. As b efore, linear regression (on the lo g r esp onses) is used for calibratio n. Min 1st Qu. Median Mean 3rd Qu. Max train GP LLM 0.070 1 0.0716 0.0724 0.072 8 0.0730 0.0818 Linear 0.1868 0.1869 0.1869 0.186 9 0.1869 0.1870 test GP LLM 0.1321 0.1327 0.134 6 0.13 46 0.1356 0.1389 Linear 0.1 9 26 0.1945 0.19 5 0 0.19 50 0.1953 0.1982 Notice that the RMSEs for the linear mo del hav e extremely low v aria bilit y . This is similar to the results pro vided by Chipman et al. and w as a k ey factor in determining that our exp eriment w as w ell–calibrated. Up on comparison of the ab ov e n umbers with the b ox plots in Chipman et al., it can readily b e seen that the treed GP LLM is leaps a nd b ounds b etter than the treed LM, and al l of the other metho ds in the study . Our w or st training RMSE is almost t w o times low er than the b est ones from the b o xplot. All of our testing R M SEs are lo w er than the low est ones from the b o xplot, and our median RMSE (0.134 6) is 1.2 6 times lo w er than the low est median RMSE ( ≈ 0 . 17 ) from the b o xplot. More recen tly , Ch u et al. (2004) p erformed a similar exp erime n t (see T able V) , but in- stead of 10 -fold CV, they randomly partit io ne d the data 100 times into training/test sets of size 481/25 and rep orted av erage MSEs on the un-transformed respo ns es. They compare their Ba yes ian SVM regression algorithm (BSVR) to other high-p o were d tec hniques like Ridge Regression, Relev ance V ector Machine , G Ps , etc., with a nd without ARD (automatic relev ance determination— e ssen tially , a separable cov a r iance function). Rep e ating their ex- p erimen t for the treed GP LLM gav e an a verage MSE of 6.96 compared to that of 6.9 9 for the BSVR with ARD, making the t w o algorit hms b y fa r the b est in the comparison. How ev er, without ARD the MSE of BSVR w as 12.34, 1.77 times higher than the treed GP LLM, and the w or st in the comparison. The r ep orted results fo r a GP with (8.32) and without (9.1 3) 26 ARD sho we d the same effect, but to a lesser degree. Perhaps not surprisingly , the av erage MSEs do not tell the whole story . The 1st, median, and 3rd quartile MSEs w e obta ine d f o r the treed GP LLM w ere 3.72, 5.32 and 8.48 resp ectiv ely , sho wing that its distribution had a hea vy right–hand tail. W e take this as a n indicatio n t ha t sev eral resp onses in the dat a are either misleading, noisy , or otherwise v ery hard to predict. 6 Conclus ions Gaussian pro cess es are a flexible mo deling to ol whic h can b e an ov erkill f o r man y applica- tions. W e ha v e sho wn how its limiting linear mo del can b e b oth useful and accessible in terms of Ba yes ian p osterior estimation, and prediction. The b enefits include sp eed, parsi- mon y , and a relativ ely straightforw ard implemen tation of a semiparametric mo del. When com bined with treed partitioning the GP L L M extends the t ree d LM, r esulting in a uniquely nonstationary , tractable, and hig hly accurate regression to ol. W e ha v e fo cused on the separable p o w er family of correlation functions, but the metho d- ology is by no means restricted to this family . All tha t is required is that the relev an t family ha v e a range parameter ( like d ) that yields the limiting (scaled) iden tit y cov aria nce matr ix c hara cterizing the LLM (lik e d → 0). F or example, allowing a p o w er 0 < p i 6 = 2 in the p o w er family of Eq. ( 2) is straightforw ard. The separable Mat ´ ern family also has t he desired prop ert y: K ( x j , x k | ρ, φ, d ) = m X Y i =1 π 1 / 2 φd 2 ρ i 2 ρ − 1 Γ( ρ + 1 / 2) ( || x ij − x ik || /d i ) ρ K ρ ( || x ij − x ik || /d i ) , where K ρ is a mo dified Bessel function of the second kind. Unlik e the p o w er family , the isotropic Mat´ ern family do es not arise as a sp ecial case where d i = d , for i = 1 , . . . , m X , but, as men tioned in Section 4, the LLM metho dology remains similarly applicable when there 27 is only one range parameter. One o b vious extension of this w or k is to allo w a larger class of “simple” mo dels for the mean function o f the pro cess, suc h as higher-or de r p olynomials or interactions b et w een linear terms. Cho osing among simpler mo dels can b e done straigh tforw ardly within the Ba y esian framew ork (George and McCullo c h, 1993; Gew ek e, 1996; Jo seph et al., 2008). Suc h an extension w ould like ly a llo w more frequen t selection of the simpler mo de l, reducing the need for the GP . How ev er, the clear understanding of what limiting cases lead to jumping from a GP to a linear mo del, as well as ho w to construct a set of b o oleans and their priors, w ould be lost in mo ving b ey ond linear mo dels. Ov er a lo cal region, a quadratic function w ould b e w ell–approxim ated b y a GP with a range parameter that could b e neither v ery small nor large. Th us the approac h con t a ine d herein w ould need further thought to efficien tly extend it. There is also the tradeoff of the extra computing resources needed to test a larger set of mo dels , whereas linear mo dels can b e w orked directly into the curren t mo del fitting (via the auxiliary b o oleans), and do not require separate mo del selection steps. W e b eliev e that a large contribution o f the GP LLM will b e in the domain o f seque n tia l design of computer exp erimen ts (Gramacy and Lee, 2008 ) whic h was the inspiration for m uc h of the work presen ted here. Empirical evidenc e suggests that man y computer exp erimen ts are nearly linear. That is, either the resp onse is linear in most of its input dimensions, or the pro cess is en tirely linear in a subset of the input domain. Supremely relev an t, but larg e ly ignored in this pap er, is that the Ba yes ian t r eed GP LLM provides a ful l p osterior predictiv e distribution (particularly a nonstationa r y and thus region– sp ecific estimate of predictiv e v ariance) which can b e used tow ards activ e learning in the input domain. Exploitation of these c haracteristics should lead to an efficien t framew ork for the adaptiv e exploration of computer exp eriment parameter spaces. 28 References Berger, J. O., de Oliveira, V., and Sans´ o, B. (2001). “Ob jectiv e Bay esian Analysis of Spatially Correlated D ata.” Journal of the Americ an Statistic al Asso ciation , 96, 456, 13 61–1374. Bernstein, D . (2005). Matrix Mathematics . Princeton, NJ: Princeton Univ ersity Press. Breiman, L. (199 6). “ Bagging Predictors.” Machine L e arning , 24, 2, 1 23–140. Calder, C. A. (2007). “D ynamic factor pro ces s con volution mo de ls for multiv ariate spacetime data with a pplic ation to air quality assess men t.” Envir on mental and Ec olo gic al S t atistics , 14, 229 –247. Chil ´ es, J. and Delfiner, P . (1999 ). Ge ostatistics: Mo deling Sp atial Unc ertainty . John Wiley and Sons, Inc. Chipman, H., George, E., and McCulloch, R. (1998). “Bay esian CAR T Mo del Searc h (with discussion).” Journal of the Americ an Statistic al Asso ciation , 93, 935 – 960. — (200 2). “Ba yes ian T reed Mo dels.” Machin e L e arning , 48 , 303–324. Ch u, W., Keerthi, S. S., and Ong, C. J. (2004). “Bay esian Supp ort V ector R e gression using a Unified Loss F unction.” IEEE T r ansactions o n Neur al Networks , 15(1), 29–44. Cressie, N. (19 9 1). S t atistics for Sp atial Data . John Wiley and Sons, Inc. Dey , D., M ¨ uller, P ., and Sinha, D. (1998). Pr actic al Nonp ar ametric and Semip ar ametric Bayesian Statistics . New Y ork, NY, USA: Springer- V erlag New Y ork, Inc. Druc k er, H., Burges, C. J. C., Kaufman, L ., Smola, A. J., and V apnik, V. (1996). “Supp ort V ector R egr ession Mac hines.” In A dvanc es in Neur al Info rmation Pr o c essin g Systems , 155–161. MIT Press. 29 F riedman, J. H. ( 1 991). “Multiv ariate Adaptiv e Regression Splines.” A nnals of Statistics , 19, No. 1, 1–67. George, E. I. and McCullo c h, R. E. ( 1 993). “V ar ia ble selec tion via G ibbs sampling.” Journal of the A meric an Statistic al Asso ciation , 88 , 881–88 9. Gew ek e, J. (19 96). “V ariable selection and mo del comparison in regression.” In In Bayesian Statistics 5 , eds. J. Bernardo, J. Berger, A. Dawid, and A. Smith, 6 0 9–620. Oxford Press. Gilks, W., Ric hardson, S., and Spiegelhalter, D. (1996). Markov Chain Monte Carlo in Pr actic e . London: Chapman & Hall. Gilleland, E. and Nyc hk a, D. (20 05). “Statistical mo dels f o r monitoring and regula t ing ground-lev el ozone.” Envir onmetrics , 16, 5 3 5–546. Golub, G. H. and V an Loan, C. F. ( 1 996). Matrix Computations . Baltimore, MD: Johns Hopkins. Gramacy , R. B. (2007). “ tgp : An R P ack age fo r Ba y esian Nonstationary , Semiparametric Nonlinear Regression and Design by T reed Gaussian Pro cess Mo dels.” Journal of Statis- tic al Softwar e , 19, 9. Gramacy , R. B. and Lee, H. K. H. (2 008). “ Ba y esian treed Gaussian pro cess mo dels with an application to computer mo deling.” Journal of the Americ an Statistic al Asso ciation , to app ear. Gramacy , R. B. and T a ddy , M. A. (2008). tgp: Bayesian tr e e d Gaussian pr o c ess mo dels . R pac k age v ersion 2.1-2. Harrison, D . and Rubinfeld, D. L. (1978). “Hedonic Housing Prices and the Demand for Clean Air.” Journal of Envir onm ental Ec onomics a nd Manage ment , 5, 81–102 . 30 Joseph, V. R., Hung, Y., and Sudjianto, A. (2008). “Blind Kriging: A New Method for Dev eloping Metamo dels.” AMSE Journal of Me ch anic al Design . T o app ear. Kennedy , M. and O’Hagan, A. (2001 ). “Bay esian Calibratio n of Computer Mo dels (with discussion).” Journal of the R oyal Statistic al So cie t y, Series B , 63 , 425–46 4. Neal, R. (1997). “Monte Carlo implemen tation of Gaussian pro cess mo dels for Ba y esian regression and classification”.” T ec h. R ep. CR G –TR–97–2, Dept. of Computer Science, Univ ersit y of T oron to. Rasm ussen, C. and Ghahramani, Z. (2002). “Infinite Mixtures of G a us sian Pro cess Exp erts.” In A dvanc es in Neur al Information Pr o c essing Systems , v ol. 14 , 881–888 . MIT Press. San t ner, T. J., Williams, B. J., and Notz, W. I. (2003). The D esign and A nalysis of Computer Exp eriments . New Y or k , NY: Springer-V erlag. R Dev elopmen t Core T eam (2 0 04). R : A language and envir onment for statistic al c omputing . R F o undat ion for Statistical Computing, Vienna, Aus. ISBN 3-90 0051-00-3. Silv erman, B. W. (1985) . “Some Asp ects of the Spline Smo othing Approach to Non- P ara me tric Curve Fitting.” Journal of the R oyal Statistic al So ciety Seri es B , 47, 1–52. 31

Original Paper

Loading high-quality paper...

Comments & Academic Discussion

Loading comments...

Leave a Comment