A Dynamic Programming Approach To Length-Limited Huffman Coding

The ``state-of-the-art'' in Length Limited Huffman Coding algorithms is the $\Theta(ND)$-time, $\Theta(N)$-space one of Hirschberg and Larmore, where $D\le N$ is the length restriction on the code. This is a very clever, very problem specific, techni…

Authors: Mordecai Golin, Yan Zhang

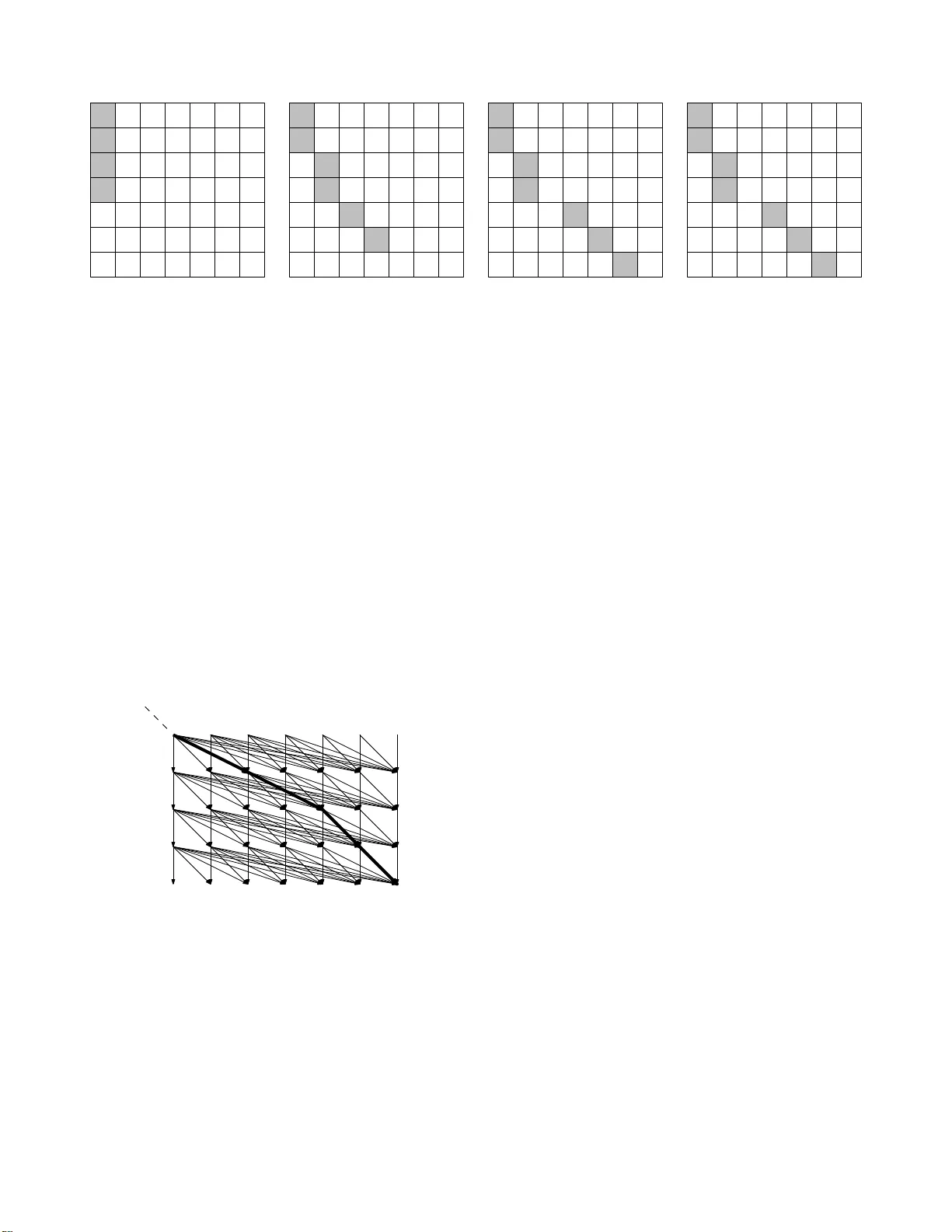

Mordecai Golin, Member, IEEE, and Yan Zhang

Abstract: The "state-of-the-art" in Length Limited Huffman Coding algorithms is the $\Theta(ND)$-time, $\Theta(N)$-space one of Hirschberg and Larmore, where $D \le N$ is the length restriction on the code. This is a very clever, very problem specific, technique. In this note we show that there is a simple Dynamic-Programming (DP) method that solves the problem with the same time and space bounds. The fact that there was a $\Theta(ND)$ time DP algorithm was previously known; it is a straightforward DP with the Monge property (which permits an order of magnitude speedup). It was not interesting, though, because it also required $\Theta(ND)$ space. The main result of this paper is the technique developed for reducing the space. It is quite simple and applicable to many other problems modeled by DPs with the Monge property. We illustrate this with examples from web-proxy design and wireless mobile paging.

Index Terms: Prefix-Free Codes, Huffman Coding, Dynamic Programming, Web-Proxies, Wireless Paging, the Monge property.

I. INTRODUCTION

Optimal prefix-free coding, or Huffman coding, is a standard compression technique. Given an encoding alphabet $\Sigma = \{\sigma_1, \ldots, \sigma_r\}$, a code is just a set of words in $\Sigma^*$. Given $n$ probabilities or nonnegative frequencies $\{p_i : 1 \le i \le n\}$ and an associated code $\{w_1, w_2, \ldots, w_n\}$, the cost of the code is $\sum_{i=1}^{n} p_i |w_i|$, where $|w_i|$ denotes the length of $w_i$. A code is prefix-free if no codeword $w_i$ is a prefix of any other codeword $w_j$. An optimal prefix-free code for $\{p_i : 1 \le i \le n\}$ is a prefix-free code that minimizes its cost among all prefix-free codes. In [1], Huffman gave the now classical $O(n \log n)$ time algorithm for solving this problem.
If the $p_i$'s are given in sorted order, Huffman's algorithm can be improved to $O(n)$ time [2]. In this note we will always assume that the $p_i$'s are presorted and that $p_1 \le p_2 \le \cdots \le p_n$.

In some applications, it is desirable that the lengths of all code words are bounded by a constant, i.e., $|w_i| \le D$ where $D$ is given. The problem of finding the minimal cost prefix-free code among all codes satisfying this length constraint is the length-limited Huffman coding (LLHC) problem, which we will consider here. Fig. 1 gives an example of inputs for which the Huffman code is not the same as the length-limited Huffman code.

(M. Golin and Y. Zhang are with the Department of Computer Science & Engineering, Hong Kong UST, Clear Water Bay, Kowloon, Hong Kong. Their research was partially supported by HK RGC CERG grants HKUST 6312/04E and 613105.)

The first algorithm for LLHC was due to Karp [3] in 1961; his algorithm is based on integer linear programming (ILP), which, using standard ILP solving techniques, leads to an exponential time algorithm. Gilbert [4] in 1971 was interested in this problem because of the issue of inaccurately known sources; since the probabilities $p_i$ are not known precisely, a set of codes with limited length will, in some sense, be "safe". The algorithm presented in [4] was an enumeration one and therefore also runs in exponential time. In 1972 Hu and Tan [5] developed an $O(nD2^D)$ time Dynamic Programming (DP) algorithm. The first polynomial time algorithm, running in $O(n^2 D)$ time and using $O(n^2 D)$ space, was presented by Garey in 1974 [6]. Garey's algorithm was based on a DP formulation similar to that developed by Knuth for deriving optimal binary search trees in [7] and hence only works for binary encoding alphabets.
A decade later, Larmore [8] gave an algorithm running in $O(n^{3/2} D \log^{1/2} n)$ time and using $O(n^{3/2} D \log^{-1/2} n)$ space. This algorithm is a hybrid of [5] and [6], and therefore also only works for the binary case. This was finally improved by Larmore and Hirschberg [9], who gave a totally different algorithm running in $O(nD)$ time and using $O(n)$ space. In that paper, the authors first transform the length-limited Huffman coding problem to the Coin Collector's problem, a special type of Knapsack problem, and then solve the Coin Collector's problem by what they name the Package-Merge algorithm. Their result is a very clever special case algorithm developed for this specific problem.

Theoretically, Larmore and Hirschberg's result was later superseded for the case $D = \omega(\log n)$ (see footnote 1) by two algorithms based on the parametric search paradigm [10]. The algorithm by Aggarwal, Schieber and Tokuyama [11] runs in $O(n\sqrt{D \log n} + n \log n)$ time and $O(n)$ space. A later improvement by Schieber [12] runs in $n 2^{O(\sqrt{\log D \log\log n})}$ time and uses $O(n)$ space. These algorithms are very complicated, though, and even for $D = \omega(\log n)$, the Larmore-Hirschberg one is the one used in practice [13], [14]. For completeness, we point out that the algorithms of [9], [11], [12] are all only claimed for the binary ($r = 2$) case, but they can be extended to work for the non-binary ($r > 2$) case using observations similar to those we provide in Appendix A for the derivation of a DP for the generic $r$-ary LLHC problem.

Shortly after [9] appeared, Larmore and Przytycka [15], [16], in the context of parallel programming, gave a simple dynamic programming formulation for the binary Huffman coding problem. Although their DP was for regular Huffman coding and not the LLHC problem, we will see that it is quite easy to modify their DP to model the LLHC problem.
It is then straightforward to show that their formulation also permits constructing the optimal tree in $\Theta(nD)$ time by constructing a size $\Theta(nD)$ DP table. This is done in Section II. This straight DP approach would not be as good as the Larmore-Hirschberg one, though, because, like many DP algorithms, it requires maintaining the entire DP table to permit backtracking to construct the solution, which would require $\Theta(nD)$ space. The main result of this note is the development of a simple technique (Section III) that permits reducing the DP space consumption down to $O(n)$, thus matching the Larmore-Hirschberg performance with a straightforward DP model. Our technique is not restricted to Length-Limited coding. It can be used to reduce space from $O(nD)$ to $O(n + D)$ in a variety of $O(nD)$ time DPs in the literature. In Section IV we illustrate with examples from the D-median on a line problem (placing web proxies on a linear topology network) [17] and wireless paging [18].

Footnote 1: $f(n) = \omega(g(n))$ if for every $c > 0$ there exists $N$ such that $f(n) \ge c\, g(n)$ for all $n > N$.

II. THE DYNAMIC PROGRAMMING FORMULATION

Set $S_0 = 0$ and $S_m = \sum_{i=1}^{m} p_i$ for $1 \le m \le n$. Larmore and Przytycka [16] formulated the binary Huffman coding problem as a DP (1), where $H(0) = 0$ and for $0 < i < n$:

$$H(i) = \min_{\max\{0,\,2i-n\} \le j < i} \bigl( H(j) + S_{2i-j} \bigr) \tag{1}$$

[…] The corresponding DP for the length-limited problem is

$$H(d,i) = \begin{cases} 0 & d = 0,\ i = 0 \\ \infty & d = 0,\ 0 < i < n \\ \min_{0 \le j \le i} \bigl( H(d-1,j) + c^{(d)}_{i,j} \bigr) & d > 0,\ 0 \le i < n \end{cases} \tag{2}$$

where $H(D, n-1)$ will denote the cost of the optimal length-limited Huffman code and

$$c^{(d)}_{i,j} = \begin{cases} 0 & i = j = 0 \\ S_{2i-j} & \max\{0,\,2i-n\} \le j < i \\ \infty & \text{otherwise.} \end{cases} \tag{3}$$

In the next subsection we will see an interpretation of this DP (which also provides an interpretation of (1)). In order to make this note self-contained, a complete derivation of the DP for the $r$-ary alphabet case is provided in Appendix A.

As far as running time is concerned, (1) appears to a-priori require $O(n^2)$ time to fill in its corresponding DP table.
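As an illustration of the $O(n^2)$ table fill for DP (1), here is a minimal Python sketch. The function name `huffman_cost_dp` is an assumption made for this example and does not come from [16]; the input is assumed to be presorted in nondecreasing order, as in the rest of this note.

```python
import math


def huffman_cost_dp(p):
    """Cost of an optimal binary Huffman code via DP (1).

    Assumes p is sorted in nondecreasing order. Illustrative O(n^2) fill,
    not the O(n) CLWS-based method.
    """
    n = len(p)
    # Prefix sums: S[m] = p_1 + ... + p_m, with S[0] = 0.
    S = [0]
    for x in p:
        S.append(S[-1] + x)

    H = [0] + [math.inf] * (n - 1)  # H(0) = 0
    for i in range(1, n):
        # H(i) = min over max(0, 2i - n) <= j < i of H(j) + S_{2i - j}
        for j in range(max(0, 2 * i - n), i):
            H[i] = min(H[i], H[j] + S[2 * i - j])
    return H[n - 1]
```

For the frequencies $(1, 1, 2, 2, 2, 5, 9)$ this evaluates $H(6) = 53$, the cost of the ordinary Huffman code for those weights.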
[16] used the inherent concavity of $S_m$ to reduce this time down to $O(n)$ by transforming the problem to an instance of the Concave Least Weight Subsequence (CLWS) problem and using one of the known $O(n)$ time algorithms, e.g., [20], for solving that problem. Similarly, (2) appears to a-priori require $\Theta(n^2 D)$ time to fill in its DP table. We will see that we may again use the concavity of $S_m$ to reduce this down by an order of magnitude, to $O(nD)$, by using the SMAWK algorithm [21] for finding row-minima of matrices as a subroutine. Unlike the CLWS algorithms, the SMAWK one is very simple to code and very efficient implementations are available in different packages, e.g., [22], [23]. In the conclusion to this note, after the application of the technique becomes understandable, we will explain why [16] needed to use the more complicated CLWS routine to solve the basic DP while we can use the simpler SMAWK one.

The $O(nD)$ DP algorithm for solving the LLHC problem, while seemingly never explicitly stated in the literature, was known as folklore. Even though it is much simpler to implement than the $O(nD)$ Larmore and Hirschberg [9] Package-Merge algorithm, it suffers from the drawback of requiring $\Theta(nD)$ space. The main contribution of this note is the observation that its space can be reduced down to $O(n + D)$, making it comparable with Package-Merge. Note that since, for the LLHC problem, we may trivially assume $D \le n$, this implies a space requirement of $O(n)$. Furthermore, our space improvement will work not only for the LLHC problem but for all DPs in form (2) where the $c^{(d)}_{i,j}$ satisfy a particular property.

A. The Meaning of the DP

We quickly sketch the meaning of the DP (2) for the binary case. Figures 1 and 2 illustrate this sketch. We note that in order to stress the parts important to our analysis, our formalism is a bit different than [16], [19].
A complete derivation of the DP for the $r$-ary case, with the appropriate general versions of the lemmas and observations stated below along with their proofs, is provided in Appendix A.

It is standard that there is a 1-1 correspondence between binary prefix-free codes with $n$ words and binary trees with $n$ leaves. The edges from an internal node to its children are labeled by a 0 or 1. Each leaf corresponds to a code word, which is the concatenation of the characters on the root-to-leaf path. The cost of the code equals the weighted external path length of the tree. So we are really interested in finding a binary tree with minimum weighted external path length.

Denote the height of the tree by $h$. The bottommost leaves are on level 0; the root is on level $h$. Optimal assignments of the $p_i$'s to the leaves always assign smaller valued $p_i$'s to leaves at lower levels.

A node in a binary tree is complete if it has two children, and a tree is complete if all of its internal nodes are complete. A min-cost tree must be complete, so we restrict ourselves to complete trees. A complete tree $T$ of height $h$ can be completely represented by a sequence $(i_0, i_1, \ldots, i_h)$, where $i_k$ denotes the number of internal nodes at levels $\le k$. Note that, by definition, $i_0 = 0$ and $i_h = n - 1$. Also note that every level must contain at least one internal node, so $i_0 < i_1 < \cdots < i_h$. Finally, it is straightforward (see Appendix A) to show that the total number of leaves on levels $< k$ is $2i_k - i_{k-1}$, so $2i_k - i_{k-1} \le n$ for all $k$. For technical reasons, because we will be dealing with trees having height at most $h$ (but not necessarily equal to $h$), we allow initial padding of the sequence by 0s, so a sequence representing a tree will be of the form $(i_0, i_1, \ldots, i_h)$ with the following properties.

Definition 1: Sequence $(i_0, i_1, \ldots, i_h)$ is valid if
• $\exists\, t \ge 0$ such that $i_0 = i_1 = \cdots = i_t = 0$,
• $0 < i_{t+1} < i_{t+2} < \cdots < i_h \le n - 1$,
• $2i_k - i_{k-1} \le n$ for all $1 \le k \le h$.

[Fig. 1. Two trees and their corresponding sequences $I$ and codes. The left tree has sequence $I_1 = (0, 1, 3, 4, 5, 6)$, with $2i_k - i_{k-1} = 2, 5, 5, 6, 7$ for $k = 1, \ldots, 5$, and codes 00000, 00001, 0001, 0010, 0011, 01, 1. The right tree has sequence $I_2 = (0, 2, 4, 5, 6)$, with $2i_k - i_{k-1} = 4, 6, 6, 7$ for $k = 1, \ldots, 4$, and codes 0000, 0001, 0010, 0011, 010, 011, 1. Note that, for both trees, $2i_k - i_{k-1}$ is the number of leaves below level $k$. The input frequencies are $(p_1, \ldots, p_7) = (1, 1, 2, 2, 2, 5, 9)$. The left tree is an optimal Huffman code while the right tree is an optimal length-limited Huffman code for $D = 4$. Note that we allow padding sequences with initial 0s, so the right tree could also be represented by sequences $(0, 0, 2, 4, 5, 6)$, $(0, 0, 0, 2, 4, 5, 6)$, etc.]

[Fig. 2. Solving the DP in equation (2) for $(p_1, \ldots, p_7) = (1, 1, 2, 2, 2, 5, 9)$ with $D = 4$. $H(d, i)$ is the value defined by (2); $J(d, i)$ is the index $j$ for which the value $H(d, i)$ in (2) is achieved. The rows of the $H(d, i)$ table, for $i = 0, \ldots, 6$, are: $d = 0$: $0, \infty, \infty, \infty, \infty, \infty, \infty$; $d = 1$: $0, 2, 6, 13, \infty, \infty, \infty$; $d = 2$: $0, 2, 6, 10, 19, 35, \infty$; $d = 3$: $0, 2, 6, 10, 18, 32, 57$; $d = 4$: $0, 2, 6, 10, 18, 31, 54$. The circled entries of the $J(d, i)$ table yield the sequence $(0, 2, 4, 5, 6)$ (the 6 comes from the fact that we are calculating $H(4, 6)$), which is exactly the sequence $I_2$ from Figure 1. The righthand tree in Figure 1 is therefore an optimal length-limited Huffman code for $D = 4$.]
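The sequence representation can be made concrete with a short Python sketch. The helper names `is_valid`, `leaves_below` and `code_lengths` are assumptions introduced only for this illustration; `code_lengths` additionally assumes that the sequence really does represent a tree, and derives codeword lengths from the fact that $2i_k - i_{k-1}$ counts the leaves below level $k$.

```python
def is_valid(seq, n):
    """Check Definition 1: leading zeros, then strictly increasing values
    up to n - 1, with 2*i_k - i_{k-1} <= n for every k >= 1."""
    if not seq or seq[0] != 0:
        return False
    for prev, cur in zip(seq, seq[1:]):
        padding = (prev == 0 and cur == 0)
        if not padding and not (prev < cur <= n - 1):
            return False
        if 2 * cur - prev > n:
            return False
    return True


def leaves_below(seq):
    """For k = 1..h, the number of leaves on levels < k: 2*i_k - i_{k-1}."""
    return [2 * cur - prev for prev, cur in zip(seq, seq[1:])]


def code_lengths(seq):
    """Codeword lengths for p_1 <= ... <= p_n, assuming seq represents a tree.

    A leaf on level `level` (counted from the bottom) has depth h - level.
    """
    h = len(seq) - 1
    lengths, seen = [], 0
    for level, below in enumerate(leaves_below(seq)):
        lengths += [h - level] * (below - seen)  # leaves exactly on this level
        seen = below
    return lengths
```

Applied to the two sequences of Fig. 1, `code_lengths` gives lengths 5, 5, 4, 4, 4, 2, 1 for $I_1$ and 4, 4, 4, 4, 3, 3, 1 for $I_2$, which agree with the codes listed there.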
A sequence is complete if it is valid and $i_h = n - 1$. We can rewrite the cost function for a tree in terms of its complete sequence.

Lemma 1: If complete sequence $(i_0, i_1, \ldots, i_h)$ represents a tree, then the cost of the tree is $\sum_{k=1}^{h} S_{2i_k - i_{k-1}}$.

(Note that padding complete sequences with initial 0s does not change the cost of the sequence.) We may mechanically extend this cost function to all valid sequences as follows.

Definition 2: For valid $I = (i_0, i_1, \ldots, i_h)$, set

$$\mathrm{cost}(I) = \sum_{k=1}^{h} S_{2i_k - i_{k-1}}.$$

$I$ is optimal if $\mathrm{cost}(I) = \min_{I'} \mathrm{cost}(I')$, where the minimum is taken over all length $h$ sequences $I' = (i'_0, i'_1, \ldots, i'_h)$ with $i'_h = i_h$, i.e., all sequences of the same length that end with the same value.

Our goal is to find optimal trees by using the DP to optimize over valid sequences. An immediate issue is that not all complete sequences represent trees, e.g., $I = (0, 3, 4, 5)$ is complete for $n = 6$ but, by observation, does not represent a tree. The saving fact is that even though not all complete sequences represent trees, all optimal complete sequences represent trees.

Lemma 2: An optimal valid sequence ending in $i_h = n - 1$ always represents a tree.

Thus, to solve the LLHC problem of finding an optimal tree of height $\le D$, we only need to find an optimal valid sequence of length $h = D$ ending with $i_D = n - 1$ (reconstructing the tree from the sequence can be done in $O(n)$ time). In the DP defined by equations (2) and (3), $H(d, j)$ clearly models the recurrence for finding an optimal valid sequence $(i_0, i_1, \ldots, i_d)$ of length $d$ with $i_d = j$, so this DP solves the problem.

Note that, a-priori, filling in the DP table $H(\cdot, \cdot)$ one entry at a time seems to require $O(n^2 D)$ time. We will now sketch the standard way of reducing this time down to $O(nD)$.
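Before any speedup, the entry-by-entry fill of DP (2) with costs (3) can be sketched as follows. This is a minimal illustration under the note's assumptions (binary alphabet, presorted input); the function name `llhc_cost_naive` is hypothetical. It keeps only the previous row, so it addresses the value of $H(D, n-1)$ only, in $O(n^2 D)$ time.

```python
import math


def llhc_cost_naive(p, D):
    """Value H(D, n-1) of DP (2) with costs (3), filled one entry at a time.

    Assumes p is sorted in nondecreasing order. Returns math.inf when no
    binary prefix-free code with all lengths <= D exists.
    """
    n = len(p)
    S = [0]
    for x in p:
        S.append(S[-1] + x)

    def c(i, j):
        # Cost (3); it does not depend on d in the LLHC case.
        if i == 0 and j == 0:
            return 0
        if max(0, 2 * i - n) <= j < i:
            return S[2 * i - j]
        return math.inf

    H = [0] + [math.inf] * (n - 1)  # row H(0, .)
    for _ in range(D):
        # Row H(d, .) depends only on row H(d-1, .).
        H = [min(H[j] + c(i, j) for j in range(i + 1)) for i in range(n)]
    return H[n - 1]
```

With the frequencies of Fig. 2, $(1, 1, 2, 2, 2, 5, 9)$, the sketch gives 54 for $D = 4$ and 53 for $D = 5$, matching the $H(4, 6)$ entry of the table and the unrestricted Huffman cost.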
Before doing so we must distinguish between the value problem and the construction problem. The value problem would be to calculate the value of $H(D, n-1)$. The construction problem would be to construct an optimal valid sequence $I = (I_0, I_1, \ldots, I_D)$ with $I_D = n - 1$ and $\mathrm{cost}(I) = H(D, n-1)$. This would require backtracking through the DP table by setting $I_0 = 0$, $I_D = n - 1$ and finding $I_1, I_2, \ldots, I_{D-1}$ such that

$$\forall\, 0 < d \le D, \quad H(d, I_d) = H(d-1, I_{d-1}) + c^{(d)}_{I_d, I_{d-1}}. \tag{4}$$

B. Solving the Value Problem in $O(nD)$ time

Definition 3: An $n \times m$ matrix $M$ is Monge if for $0 \le i < n - 1$ and $0 \le j < m - 1$

$$M_{i,j} + M_{i+1,j+1} \le M_{i+1,j} + M_{i,j+1}. \tag{5}$$

The Monge property can be thought of as a discrete version of concavity. It appears implicitly in many optimization problems, for which it permits speeding up their solutions ([24] provides a nice survey). One of the classic techniques used is the SMAWK algorithm for finding row-minima. Given an $n \times m$ matrix $M$, the minimum of row $i$, $i = 1, \ldots, n$, is the entry of row $i$ that has the smallest value; in case of ties, we take the rightmost entry. Thus, a solution of the row-minima problem is a collection of indices $j(i)$, $i = 1, \ldots, n$, such that $M_{i,j(i)} = \min_{0 \le j < m} M_{i,j}$. […]

The SMAWK algorithm [21] finds the row minima of an $n \times m$ Monge matrix in $O(n + m)$ time. For the DP (2), the relevant matrices are the $M^{(d)}$ with entries $M^{(d)}_{i,j} = H(d-1, j) + c^{(d)}_{i,j}$ (see Fig. 3), whose row minima are the values $H(d, i)$. The matrix $M^{(d)}$ is Monge whenever the costs satisfy

$$c^{(d)}_{i,j} + c^{(d)}_{i+1,j+1} \le c^{(d)}_{i+1,j} + c^{(d)}_{i,j+1}. \tag{7}$$

Lemma 4: The $c^{(d)}_{i,j}$ defined by (3) satisfy (7).

Proof: […] If $j + 1 \ge i$ or $2(i+1) - n > j$, the righthand side of (7) is $\infty$, so (7) is satisfied. If $j + 1 < i$ and $2(i+1) - n \le j$, (7) can be rewritten as

$$S_{2i-j} + S_{2(i+1)-(j+1)} \le S_{2i-(j+1)} + S_{2(i+1)-j}. \tag{8}$$

It is easy to verify that

$$S_{2i-j} + S_{2(i+1)-(j+1)} - S_{2i-(j+1)} - S_{2(i+1)-j} = p_{2i-j} - p_{2i-j+2} \le 0,$$

where the last inequality holds because the $p_i$ are sorted. Hence, (8) holds.

Thus, from the discussion above, we can find all of the $H(d, i)$ in $\Theta(nD)$ time.
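To show how the Monge structure is exploited, the sketch below computes each row $H(d, \cdot)$ with a divide-and-conquer row-minima search instead of SMAWK. This is a deliberately simpler substitute, not the SMAWK algorithm: it relies only on the fact that the rightmost row minima of $M^{(d)}$ move weakly rightward from one row to the next, and it costs $O(n \log n)$ per level rather than $O(n)$. The function name `llhc_cost_monge` is an assumption made for this example.

```python
import math


def llhc_cost_monge(p, D):
    """H(D, n-1) of DP (2)-(3) using monotone row minima of the Monge
    matrices M^(d)[i][j] = H(d-1, j) + c(i, j).

    Divide and conquer (not SMAWK): O(n log n) per level, O(n) space.
    Assumes p is sorted in nondecreasing order.
    """
    n = len(p)
    S = [0]
    for x in p:
        S.append(S[-1] + x)

    def c(i, j):
        if i == 0 and j == 0:
            return 0
        if max(0, 2 * i - n) <= j < i:
            return S[2 * i - j]
        return math.inf

    prev = [0] + [math.inf] * (n - 1)  # row H(0, .)
    for _ in range(D):
        cur = [math.inf] * n

        def solve(r_lo, r_hi, c_lo, c_hi):
            # Fill cur[r_lo..r_hi], knowing the minima lie in columns c_lo..c_hi.
            if r_lo > r_hi:
                return
            mid = (r_lo + r_hi) // 2
            best, arg = math.inf, c_hi
            for j in range(c_lo, c_hi + 1):
                v = prev[j] + c(mid, j)
                if v <= best:  # "<=" keeps the rightmost minimum on ties
                    best, arg = v, j
            cur[mid] = best
            solve(r_lo, mid - 1, c_lo, arg)
            solve(mid + 1, r_hi, arg, c_hi)

        solve(0, n - 1, 0, n - 1)
        prev = cur
    return prev[n - 1]
```

Taking the rightmost minimum on ties follows the convention stated above. In this DP it also has a practical role: an all-$\infty$ row then reports the last column of its window, which leaves the search window of the rows above it unchanged.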
In particular, $H(D, n-1)$ will be the cost of the optimal tree with height at most $D$, which is the required cost of the optimum $D$-limited code.

[Fig. 3. The matrices $M^{(1)}, M^{(2)}, M^{(3)}, M^{(4)}$ used for calculating the DP tables in Fig. 2. The shaded entries are the row minima. The row minima for $M^{(d)}$ are exactly the entries of row $d$ in the $H(d, i)$ table in Fig. 2. The column indices of the corresponding row minima are the $J(d, i)$ entries.]

We have thus seen how to solve the value problem in $O(nD)$ time. The difficulty is that constructing the optimal tree associated with $H(D, n-1)$ would require finding the associated optimal valid sequence with $i_D = n - 1$. This would require solving the construction problem by finding all indices $I_d$ in (4). The standard way of solving this problem is to maintain an array storing the $J(d, i)$ values returned by the algorithm and then, starting from $H(D, n-1)$, to backtrack through the $J(\cdot, \cdot)$ array, constructing the corresponding sequence by setting $I_D = n - 1$ and $I_{d-1} = J(d, I_d)$. Unfortunately, this requires maintaining a size $\Theta(nD)$ auxiliary array, which requires too much space.

III. SOLVING THE CONSTRUCTION PROBLEM IN $O(nD)$ TIME AND $O(n + D)$ SPACE

[Fig. 5. The dropping level-graph associated with the example from Figures 2 and 3, drawn with $i = 0, \ldots, 6$ on the horizontal axis and $d = 0, \ldots, 4$ on the vertical axis. The bold edges are the minimum cost path from $(d, i) = (0, 0)$ to $(d, i) = (4, 6)$. Note that the $i$ coordinates of the path are $(0, 2, 4, 5, 6)$, which is exactly the sequence of $J(d, i)$'s corresponding to the optimal solution of the problem, which is also the sequence corresponding to the optimal tree.]
Let $V$ be the grid nodes $(d, i)$ with $0 \le d \le D$ and $0 \le i < n$. Consider the directed graph $G = (V, E)$ in which $(d, i)$ points to all nodes immediately below it and to its right, i.e.,

$$E = \{ ((d, j), (d+1, i)) \mid (d, j) \in V,\ d < D,\ j \le i \}.$$

See Figure 5. Such graphs are sometimes called dropping level-graphs [25]. Now assign edge $((d-1, j), (d, i))$ the weight $c^{(d)}_{i,j}$. The length of a path in $G$ will just be the sum of the weights of the edges in the path. The important observation is that $H(d, i)$ in DP (2) is simply the length of the min-cost path from $(0, 0)$ to $(d, i)$ in this weighted $G$. More specifically, the value problem is to find the length of a shortest path and the construction problem is to find an actual shortest path. A-priori, finding such a path seems to require $O(nD)$ space.

There are two different algorithms in the literature for reducing the space down to $O(n + D)$ in related problems. The first was for finding a maximum common subsequence of two sequences. This reduced down to the problem of finding a max-length path in something very similar to a dropping level-graph in which each vertex has bounded indegree and bounded outdegree. Hirschberg [26] developed a $\Theta(nD)$ time, $\Theta(n + D)$ space algorithm for this problem. His algorithm was very influential in the bioinformatics community and its technique is incorporated into many later algorithms, e.g., [27], [28]. The technique's performance is very dependent upon the bounded degree of the vertices, which is not true in our case. The second, due to Munro and Ramirez [25], was exactly for the problem of constructing min-cost paths in full dropping level-graphs. Their algorithm ran in $\Theta(n^2 D)$ time and $\Theta(n + D)$ space. Their $\Theta(n^2 D)$ time is too expensive for us. We will now see how to reduce this down to $\Theta(nD)$ using the Monge speedup while still maintaining the $\Theta(n + D)$ space.
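For contrast with what follows, here is a minimal sketch of the straightforward construction that stores a predecessor index for every node of the level-graph and then backtracks, which is what costs $\Theta(nD)$ space. The name `llhc_sequence_with_table` is hypothetical, and each row is filled naively rather than with SMAWK, to keep the example short.

```python
import math


def llhc_sequence_with_table(p, D):
    """Optimal sequence (I_0, ..., I_D) by backtracking through a full
    D x n predecessor table J (the Theta(nD)-space baseline).

    Assumes p is sorted in nondecreasing order and D >= 1.
    """
    n = len(p)
    S = [0]
    for x in p:
        S.append(S[-1] + x)

    def c(i, j):
        if i == 0 and j == 0:
            return 0
        if max(0, 2 * i - n) <= j < i:
            return S[2 * i - j]
        return math.inf

    H = [0] + [math.inf] * (n - 1)
    J = []  # J[d-1][i] = rightmost j attaining H(d, i), or None
    for _ in range(D):
        new_H, row = [math.inf] * n, [None] * n
        for i in range(n):
            for j in range(i + 1):
                v = H[j] + c(i, j)
                if v < math.inf and v <= new_H[i]:
                    new_H[i], row[i] = v, j
        J.append(row)
        H = new_H

    if H[n - 1] == math.inf:
        raise ValueError("no prefix-free code satisfies this length limit")

    seq = [n - 1]
    for d in range(D, 0, -1):  # follow predecessors from level D up to level 0
        seq.append(J[d - 1][seq[-1]])
    seq.reverse()
    return H[n - 1], seq
```

On the example of Fig. 2 this returns cost 54 and the sequence $(0, 2, 4, 5, 6)$, i.e., the $i$ coordinates of the bold path in Fig. 5.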
The general problem will be to construct an optimal $u$-$w$ path in $G$, where $u = (d_u, i_u)$ is above and not to the left of $w = (d_w, i_w)$, i.e., $d_u < d_w$ and $i_u \le i_w$. Let $G(u, w)$ be the subgrid with upper-left corner $u$ and lower-right corner $w$ (with associated induced edges from $G$). First note that, because $G$ is a dropping level-graph, any optimal (min or max cost) $u$-$w$ path in $G$ must lie completely in $G(u, w)$.

Both algorithms [26], [25] start from the same observation, which is to build the path recursively, i.e., by first (a) finding a point $v = (\bar d, \bar i)$ halfway (by link distance) on the optimal $u$-$w$ path in $G(u, w)$ and then (b) outputting the recursively constructed optimal $u$-$v$ path in $G(u, v)$ and optimal $v$-$w$ path in $G(v, w)$.

For dropping level-graphs, if $u = (d_1, i_1)$ and $w = (d_2, i_2)$ then the midlevel must be $\bar d = \lfloor (d_1 + d_2)/2 \rfloor$. Suppose that we had an algorithm $Mid(u, w)$ that returned a point $v = (\bar d, \bar i)$ on a shortest $u$-$w$ path in $G(u, w)$. Then, translated into our notation and with appropriate termination conditions, the construction algorithm can be written as:

Path(u, w)
1. If u = (d, j) and w = (d + 1, i) then
2.     output edge (u, w)
3. Else if u = (d, i) and w = (d′, i) then
4.     output vertical path from u to w
5. Else
6.     set v = Mid(u, w)
7.     Path(u, v); Path(v, w)

Fig. 6. The algorithm for constructing a min-cost $u$-$w$ path.

(Figure 7 illustrates this idea.) To solve the original problem we just call $Path(u_0, w_0)$, where $u_0 = (0, 0)$ and $w_0 = (D, n-1)$.

Correctness follows from the fact that at each recursive call, the vertical distance $d_w - d_u$ decreases, so the recursion must terminate.
Furthermore, when the recursion terminates, either (i) $u = (d, j)$ and $w = (d + 1, i)$, so the only $u$-$w$ path in $G(u, w)$ is the edge $(u, w)$, or (ii) $u = (d, i)$ and $w = (d', i)$, so the only $u$-$w$ path in $G(u, w)$ is the vertical path going down from $u$ to $w$.

The efficiency of the resulting algorithm, both in time and space, will depend upon how efficiently $v = Mid(u, w)$ can be found. Note that with the exception of the calls of type $Mid(u, w)$, the rest of the execution of $Path(u_0, w_0)$ (including all recursive calls) only requires a total of $O(D)$ space, since each recursive call uses only $O(1)$ space and there are at most $O(D)$ such calls. Thus, if $Mid(u, w)$ can be found using $O(n + D)$ space, then the entire procedure requires only $O(n + D)$ space. This is actually how both [26], [25] achieve their space bounds.

The two algorithms differ in how they calculate $v$. Although both their approaches can be used for our problem, we will work with a modified version of that of [25], since it will be simpler to explain.

We now describe how to use the SMAWK algorithm to find $Mid(u_0, w_0)$ in $O(nD)$ time and $O(n)$ space. The extension to general $Mid(u, w)$ will follow later. Recall that the procedure Fill_Table from Figure 4 used the Monge property and the SMAWK algorithm to iteratively fill in the rows $H(d, \cdot)$, for $d = 1, 2, \ldots, D$. Given row $H(d-1, \cdot)$, the procedure calculated $H(d, \cdot)$ in $O(n)$ time using SMAWK, and then threw away $H(d-1, \cdot)$.

Consider an arbitrary node $(d, i)$ on level $d > \bar d$. The shortest path from $u_0$ to $(d, i)$ must pass through some node on level $\bar d$. We now modify Fill_Table to "remember" this node. More specifically, our algorithm will calculate auxiliary data $pred(d, i)$.
• For $d < \bar d$, $pred(d, i)$ will be undefined.
• For $d \ge \bar d$, $pred(d, i)$ will be an index $j$ such that node $(\bar d, j)$ appears on some shortest path from $u_0$ to $(d, i)$.

So, when the procedure terminates, $v = (\bar d, pred(D, n-1))$ will be $Mid(u_0, w_0)$. By definition, on level $\bar d$, we have $pred(\bar d, i) = i$. For $d > \bar d$, suppose $(d-1, j')$ is the immediate predecessor of $(d, i)$ on the shortest path from $u_0$ to $(d, i)$. Then (i) a shortest path from $u_0$ to $(d-1, j')$ followed by (ii) the edge from $(d-1, j')$ to $(d, i)$ is (iii) a shortest path from $u_0$ to $(d, i)$; we may therefore set $pred(d, i) = pred(d-1, j')$. We can use this observation to modify Fill_Table to calculate the $pred(d, \cdot)$ information.

Mid(u_0, w_0)
    For d = 1 to d̄
        SMAWK(M^(d))
        ∀ 0 ≤ i < n, set H(d, i) = M^(d)_{i, J(d,i)}
    ∀ 0 ≤ i < n, set pred(d̄, i) = i
    For d = d̄ + 1 to D
        SMAWK(M^(d))
        ∀ 0 ≤ i < n, set H(d, i) = M^(d)_{i, J(d,i)}
        ∀ 0 ≤ i < n, set pred(d, i) = pred(d − 1, J(d, i))

Fig. 8. Returns the midpoint, by link distance, on a min-cost $u_0$-$w_0$ path.

Note that $Mid(u_0, w_0)$ can throw away all of the values $pred(d-1, \cdot)$ and $H(d-1, \cdot)$ after the values $pred(d, \cdot)$ and $H(d, \cdot)$ have been calculated, so it only uses $O(n)$ space. Similarly to the analysis of Fill_Table, it uses only $O(nD)$ time since each call to the SMAWK algorithm uses only $O(n)$ time.

So far, we have only shown how to find $v = Mid(u_0, w_0)$. Note that the only assumptions we used were that $H(\cdot, \cdot)$ satisfies DP (2) and is Monge, i.e., the $c^{(d)}_{i,j}$ satisfy (7). Now suppose that we are given $u = (d_u, i_u)$, $w = (d_w, i_w)$ with $d_u < d_w$ and $i_u \le i_w$.
$G(u, w)$ is a dropping level-graph on its own nodes, so the cost of the shortest path from $u$ to any node $(d_u + d, i_u + i) \in G(u, w)$ is $\tilde H(d, i)$ defined by

$$\tilde H(d,i) = \begin{cases} 0 & d = 0,\ i = 0 \\ \infty & d = 0,\ 0 < i < N \\ \min_{0 \le j \le i} \bigl( \tilde H(d-1,j) + \tilde c^{(d)}_{i,j} \bigr) & d > 0,\ 0 \le i < N \end{cases} \tag{9}$$

where $N = i_w - i_u + 1$ and $\tilde c^{(d)}_{i,j} = c^{(d_u + d)}_{i_u + i,\, i_u + j}$. Note that this new DP is exactly in the same form as (2), just with a different $n$ and shifted $c^{(d)}_{i,j}$. Since the original $c^{(d)}_{i,j}$ satisfy (7), so do the $\tilde c^{(d)}_{i,j}$. Thus (9) with the $\tilde c^{(d)}_{i,j}$ is Monge as well. Therefore, we can run exactly the same algorithm written in Figure 8 to find the midpoint $v = (\bar d, \bar i) = Mid(u, w)$ of the min-cost $u$-$w$ path in $O((d_w - d_u) N)$ time and $O((d_w - d_u) + N) = O(D + n)$ space.

As discussed previously, if $Mid(u, w)$ only requires $O(n + D)$ space, then $Path(u, w)$ only requires $O(n + D)$ space, so we have completed the space analysis.

It remains to analyze running time. Set $Area(u, w) = (N - 1)(d_w - d_u)$ to be the "area" of $G(u, w)$. Recall that line 3 of $Path(u, w)$ implies that $i_u \ne i_w$ when $Mid(u, w)$ is called. Therefore $N \ge 2$, so $N \le 2(N - 1)$ and the running time of $Mid(u, w)$ is $O((d_w - d_u) N) = O(Area(u, w))$.

[Fig. 7. An illustration for finding the optimal path. Here, $D = 8$ and there are 3 levels of recursion. The solid circles are the intermediate nodes found by the $Mid(u, w)$ procedures. The first level of recursion finds the midpoint on level 4; the second level, the midpoints on levels 2 and 6; the third, the midpoints on levels 1, 3, 5, 7. At that point all subproblems are of height one and easily solvable. Note that each recursive call splits a problem on a box of height $2^i$ into two problems on disjoint boxes of height $2^{i-1}$.]

We now analyze the running time of $Path(u_0, w_0)$.
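The procedures $Mid$ and $Path$ described above can be sketched in Python as follows. This is a minimal illustration under stated assumptions, not the authors' implementation: the names `sweep`, `path` and `llhc_sequence` and the cost-callback signature `c(d, i, j)` are hypothetical, each row is filled by a naive double loop where SMAWK would be used to reach $O(nD)$ total time, and the vertical-path shortcut of line 3 is left to the general recursion. Only two rows of distances and midlevel indices are alive at any moment, so the working space is $O(n + D)$.

```python
import math


def sweep(c, u, w):
    """Return (cost of a min-cost u-w path, column where it crosses the
    middle level), keeping only two rows of dist/pred at a time."""
    (du, iu), (dw, iw) = u, w
    dm = (du + dw) // 2                     # the midlevel, d-bar
    width = iw - iu + 1
    dist = [0] + [math.inf] * (width - 1)   # distances from u on level du
    pred = [None] * width                   # undefined above the midlevel
    for d in range(du + 1, dw + 1):
        new_dist = [math.inf] * width
        new_pred = [None] * width
        for i in range(width):              # naive row fill (SMAWK in the text)
            for j in range(i + 1):
                v = dist[j] + c(d, iu + i, iu + j)
                if v < new_dist[i]:
                    new_dist[i], new_pred[i] = v, pred[j]
        if d == dm:                         # on the midlevel, pred(d, i) = i
            new_pred = [iu + i for i in range(width)]
        dist, pred = new_dist, new_pred
    return dist[-1], pred[-1]


def path(c, u, w, out):
    """Append to `out` the column of the optimal path on levels d_u+1..d_w."""
    (du, iu), (dw, iw) = u, w
    if dw - du == 1:                        # single edge: nothing to search
        out.append(iw)
        return
    _, im = sweep(c, u, w)
    v = ((du + dw) // 2, im)
    path(c, u, v, out)
    path(c, v, w, out)


def llhc_sequence(p, D):
    """Optimal sequence (I_0, ..., I_D) for binary LLHC; p sorted, D >= 1."""
    n = len(p)
    S = [0]
    for x in p:
        S.append(S[-1] + x)

    def c(d, i, j):                         # cost (3); independent of d here
        if i == 0 and j == 0:
            return 0
        if max(0, 2 * i - n) <= j < i:
            return S[2 * i - j]
        return math.inf

    u0, w0 = (0, 0), (D, n - 1)
    cost, _ = sweep(c, u0, w0)
    if cost == math.inf:
        raise ValueError("no prefix-free code satisfies this length limit")
    seq = [0]
    path(c, u0, w0, seq)
    return cost, seq
```

Optimal sequences need not be unique, so the sequence returned here may differ from the one circled in Fig. 2 while having the same cost. The callback takes the level `d` as an argument so that costs depending on $d$ could be plugged in as well.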
First consider the recursive calls when lines 1-4 occur, i.e., the recursion terminates. The total work performed by such calls is the total number of edges outputted. Since an edge is outputted only once and the total path contains $D$ edges, the total work performed is $O(D)$.

Next consider the calls when lines 5-7 occur. Since each such call returns a vertex $v$ on the path, there are only $D - 1$ such calls, so lines 6 and 7 are only called $O(D)$ times and their total work, with the exception of the call to $Mid(u, w)$, is $O(D)$.

Finally consider the work performed by the $Mid(u, w)$ calls. Partition the calls into levels.
• Level 1 is the original call $Mid(u_0, w_0)$.
• Level 2 contains the recursive calls directly made by the level-1 call.
• In general, level $i$ contains the recursive calls directly made by the level-$(i-1)$ calls.

Note that if $Mid(u, w)$ is a level $i$ call with $u = (d_u, i_u)$ and $w = (d_w, i_w)$, then, since each level halves the height up to rounding,

$$\frac{D}{2^{i-1}} - 1 < d_w - d_u < \frac{D}{2^{i-1}} + 1.$$

Furthermore, by induction, if $Mid(u, w)$ and $Mid(u', w')$ are two different level $i$ calls, then the horizontal ranges $[i_u, i_w]$ and $[i_{u'}, i_{w'}]$ are disjoint except for possibly $i_w = i_{u'}$ or $i_u = i_{w'}$.

Fix $i$. Let $(u_j, w_j)$, $j = 1, \ldots, t$, be the calls at level $i$. The facts that each grid $G(u_j, w_j)$ has height less than $\frac{D}{2^{i-1}} + 1$ and that the horizontal ranges of the grids are disjoint imply

$$\sum_{j=1}^{t} Area(u_j, w_j) \le n \left( \frac{D}{2^{i-1}} + 1 \right).$$

Thus the total work of all level-$i$ calls is $O\!\left( n \left( \frac{D}{2^{i-1}} + 1 \right) \right)$. Summing over the $\lceil \log D \rceil$ levels, we get that the total work performed by all of the $Mid(u, w)$ calls on line 6 is

$$O\!\left( \sum_{i} n \left( \frac{D}{2^{i-1}} + 1 \right) \right) = O(nD).$$

Thus, the total work performed by $Path(u_0, w_0)$ is $O(nD)$ and we are finished.

IV. FURTHER APPLICATIONS

We just saw how, in $\Theta(nD)$ time and $\Theta(n + D)$ space, to solve the construction problem for any DP in form (2) that satisfies the Monge property (7). The $\Theta(nD)$ time was known previously; the $\Theta(n + D)$ space bound is the new improvement. There are many other DP problems besides the binary LLHC that satisfy (7) and whose space can thus be improved. We illustrate with three examples.

The $r$-ary LLHC problem: We have discussed the binary LLHC problem in which $|\Sigma| = 2$. The general $r$-ary alphabet case with $N$ probabilities is still modeled by a DP in form (2) but with $n = \frac{N-1}{r-1} + 1$. The only difference is that (3) is replaced by

$$c^{(d)}_{i,j} = \begin{cases} S_{ri-j} & \text{if } \max\{0,\, ri - N\} \le j < i \\ \infty & \text{otherwise.} \end{cases} \tag{10}$$

A full derivation of this DP is given in Appendix A. The proof that the $c^{(d)}_{i,j}$ satisfy the Monge property (7) is similar to the proof of Lemma 4. Thus, we can construct a solution to the $r$-ary LLHC problem in $\Theta(ND)$ time and $\Theta(N)$ space as well.

D medians on a line: We are given $n - 1$ customers located on the positive real line; customer $i$ is at location $v_i$. Without loss of generality, assume $v_1 < v_2 < \cdots < v_{n-1}$. There are $D \le n$ service centers located on the line and a customer is serviced by the closest service center to its left (thus we always assume a service center at $v_0 = 0$). Each customer has a service request $w_i > 0$. The cost of servicing customer $i$ is $w_i$ times the distance to its service center. In [17], motivated by the application of optimally placing web proxies on a linear topology network, Woeginger showed that this problem could be modeled by a DP in form (2) where

$$c^{(d)}_{i,j} = \sum_{l=j+1}^{i} w_l (v_l - v_{j+1})$$

and proved that these $c^{(d)}_{i,j}$ satisfy the Monge property (7). He then used the SMAWK algorithm to construct a solution in $O(nD)$ time and $O(nD)$ space.
Using the technique described in this paper, the requirements for constructing a solution to this $D$-medians problem can be reduced to $O(nD)$ time and $O(n)$ space.

We also mention that there is an undirected variant of this problem in which a node is serviced by its closest service center looking both left and right. There are many algorithms in the literature that (explicitly or implicitly) use concavity to construct solutions for this problem in $O(nD)$ time using $O(n)$ space, e.g., [29], [30], [31]. [31] does this by using a DP formulation that is in the DP form (2) and satisfies the Monge property (7), so the technique in this paper can reduce the space for this problem down to $O(n)$ as well.

Wireless Paging: The third application comes from wireless mobile paging. A user can be in one of $N$ different cells. We are given a probability distribution in which $p_i$ denotes the probability that a user will be in cell $i$, and want to minimize the bandwidth needed to send paging requests to identify the cell in which the user resides. This problem was originally conjectured to be NP-complete, but [32] developed a DP algorithm for it. The input of the problem is the $n$ probabilities $p_1 \ge p_2 \ge \cdots \ge p_n$ and an integer $D \le n$ (corresponding to the number of paging rounds used). The DP developed by [32] is exactly in our DP form (2) with

$$c^{(d)}_{i,j} = \begin{cases} i \sum_{\ell=j+1}^{i} p_\ell & \text{if } d - 1 \le j < i \\ \infty & \text{otherwise.} \end{cases} \tag{11}$$

The goal is to compute $H(D, n)$, which will be the minimum expected bandwidth needed. Solving the construction version of this DP permits constructing the actual paging protocol that yields this minimum bandwidth. [32] used the naive algorithm to solve the DP in $\Theta(n^2 D)$ time and $\Theta(nD)$ space. [33] proved that the $c^{(d)}_{i,j}$ defined by (11) satisfy the Monge property (7) and thus reduced the time to $\Theta(nD)$, but still required $\Theta(nD)$ space.
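For concreteness, the DP with costs (11) can be evaluated directly. The following Python sketch is a naive $\Theta(n^2 D)$-time evaluation, in the spirit of the naive algorithm attributed to [32] above; it is neither the Monge-based speedup of [33] nor the space-efficient construction of this paper. It assumes that DP form (2) is the recurrence $H(d,i) = \min_j \{H(d-1,j) + c^{(d)}_{i,j}\}$ with $H(0,0) = 0$ and $H(0,i) = \infty$ for $i > 0$, as restated in the Conclusion. The function name `paging_cost` and the integer weights in the example are hypothetical.

```python
import math


def paging_cost(p, D):
    """Naively evaluate H(D, n) for the paging costs in (11).

    p holds the n weights in non-increasing order (p[0] is p_1 in the text).
    Only two DP rows are kept, so this returns the optimal value only.
    """
    n = len(p)
    # prefix[k] = p_1 + ... + p_k, so sum_{l=j+1}^{i} p_l = prefix[i] - prefix[j].
    prefix = [0] * (n + 1)
    for k in range(1, n + 1):
        prefix[k] = prefix[k - 1] + p[k - 1]

    prev = [0] + [math.inf] * n          # row d = 0: H(0,0) = 0, else infinity
    for d in range(1, D + 1):
        cur = [math.inf] * (n + 1)
        for i in range(1, n + 1):
            # c^{(d)}_{i,j} is finite only for d - 1 <= j < i.
            for j in range(d - 1, i):
                cand = prev[j] + i * (prefix[i] - prefix[j])
                if cand < cur[i]:
                    cur[i] = cand
        prev = cur
    return prev[n]


# Hypothetical example: unnormalized weights 5, 3, 2 over three cells.
one_round = paging_cost([5, 3, 2], 1)
two_rounds = paging_cost([5, 3, 2], 2)
three_rounds = paging_cost([5, 3, 2], 3)
```

Keeping two rows suffices for the value $H(D, n)$. Recovering the paging protocol from such a table-filling approach would additionally require storing the minimizing index for every $(d, i)$ pair, which is the $\Theta(nD)$ space cost discussed in the text.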
The algorithm in this paper permits improving the space complexity of constructing the protocol down to $\Theta(n)$.

V. CONCLUSION

The standard approach to solving the Length-Limited Huffman Coding (LLHC) problem is via the special-purpose Package-Merge algorithm of Hirschberg and Larmore [9], which runs in $O(nD)$ time and $O(n)$ space, where $n$ is the number of codewords and $D$ is the length limit on the code. In this note we point out that this problem can be solved in the same time and space using a straightforward Dynamic Programming formulation.

We started by noting that it was known that the LLHC problem could be modeled using a DP in the form

$$H(d, i) = \begin{cases} 0 & \text{if } d = 0,\ i = 0 \\ \infty & \text{if } d = 0,\ 0 < i < n \\ \min_{0 \le j \le i} \left( H(d-1, j) + c^{(d)}_{i,j} \right) & \text{if } d > 0,\ 0 < i < n \end{cases} \tag{12}$$

where $H(d, n)$ will denote the minimum cost of a code with longest word at most $d$ and the $c^{(d)}_{i,j}$ are easily calculable constants. This implies an $O(n^2 D)$ time, $O(nD)$ space algorithm. We then noted that, using standard DP speedup techniques, e.g., the SMAWK algorithm, the time could be reduced down to $O(nD)$. The main contribution of this paper is to note that, once the problem is expressed in this formulation, the space can be reduced down to $O(n)$ while maintaining the time at $O(nD)$. The space reduction developed for this problem was also shown to apply to other problems in the literature that previously had been thought to require $\Theta(nD)$ space.

We conclude by noting that if we are only interested in solving the standard Huffman coding problem and not the LLHC one, then DP (12) with $c^{(d)}_{i,j}$ defined by (10) collapses down to $H(i) = \min_{\max\{0,\, ri - N\} \le j\, \ldots}\ \ldots$ […]

[…] 0 and this represents the forest composed of $i_1$ complete trees each of height 1, so the lemma is trivially correct. Now let $h > 1$. Set $I_h = i_h - i_{h-1}$ and $I_{h-1} = i_{h-1} - i_{h-2}$.
Fig. 9. Illustration of the two cases in the proof of Lemma 8. Here, $r = 2$ and $h = 4$. (a) is the forest $F'$ corresponding to the old sequence $I' = (0, 1, 3, 7)$. (b) illustrates Case 1: if $i_h = 10$ then $I_h = 3$ and $2I_h = 6 \ge 4 = I_{h-1}$, so we can create a forest corresponding to the new sequence $(0, 1, 3, 7, 10)$. (c) illustrates Case 2: if $i_h = 8$ then $I_h = 1$ and $2I_h = 2 < 4 = I_{h-1}$. In this case the sequence $\bar{I} = (0, 1, 3, 5, 8)$ (corresponding to the forest pictured) has cost $S_2 + S_5 + S_7 + S_{11}$. This is cheaper than the cost $S_2 + S_5 + S_{11} + S_9$ of the sequence $I = (0, 1, 3, 7, 8)$. As noted in the proof, $\bar{I}$ is constructed by lifting two subtrees in the forest in (a) and then writing down the corresponding sequence.

Define $I' = (i_0, i_1, \ldots, i_{h-1})$. Since $I'$ is optimal, by induction, $I'$ represents a forest $F'$ with $I_{h-1}$ roots at level $h - 1$ and a total of $L_{h-1} = r i_{h-1} - i_{h-2}$ leaves. There are now two cases; see Figure 9.

Case 1: $rI_h \ge I_{h-1}$. Then $I$ represents a forest with $I_h$ roots whose $rI_h$ children are exactly the $I_{h-1}$ roots from $F'$ and another $rI_h - I_{h-1} \ge 0$ leaves. So the Lemma is correct.

Case 2: $rI_h < I_{h-1}$. We will show that this contradicts the optimality of $I$ and is therefore impossible. Thus Case 1 will be the only possible case and the Lemma is correct.

Assume now that $rI_h < I_{h-1}$ and set $s = I_{h-1} - rI_h > 0$. This can be rewritten as

$$r(i_h - i_{h-1}) + s = i_{h-1} - i_{h-2}$$

so

$$r i_h - i_{h-1} = r i_{h-1} - i_{h-2} - s = L_{h-1} - s.$$

Now consider $F'$ as being labeled with the $L_{h-1}$ smallest $p_i$ and construct a new forest $\bar{F}$ as follows.
Choose $s$ trees from $F'$ containing the $s$ largest weights in the forest, i.e., $p_j$, $j = L_{h-1}, L_{h-1} - 1, \ldots, L_{h-1} - (s - 1)$. Move those $s$ trees up one level so their roots are now at height $h$ and not $h - 1$. Now add $I_h$ new nodes to level $h$. Make them the parents of the remaining $rI_h$ nodes on level $h - 1$. This forest is a legal forest. Call its representative sequence $\bar{I} = (\bar{i}_0, \bar{i}_1, \ldots, \bar{i}_h)$. We now observe:

(a) $\bar{i}_{h-1} = i_{h-1} - s$, so $\bar{i}_h = \bar{i}_{h-1} + s + I_h = i_{h-1} + I_h = i_h$.

(b) Thus $r\bar{i}_h - \bar{i}_{h-1} = r i_h - (i_{h-1} - s) = L_{h-1}$ and

$$S_{r\bar{i}_h - \bar{i}_{h-1}} = S_{L_{h-1}} = S_{r i_h - i_{h-1}} + \sum_{j = L_{h-1} - s + 1}^{L_{h-1}} p_j.$$

(c) Let $\bar{F}'$ be levels $0$ to $(h-1)$ of $\bar{F}$. Since every complete tree contains at least $r$ nodes, the $s$ trees raised contain at least the $s$ nodes $p_j$ where $L_{h-1} - s < j \le L_{h-1}$ and one other node. Since every such node was raised one level,

$$\sum_{m=1}^{h-1} S_{r\bar{i}_m - \bar{i}_{m-1}} = \mathrm{cost}(\bar{F}') < \mathrm{cost}(F') - \sum_{j = L_{h-1} - s + 1}^{L_{h-1}} p_j = \left( \sum_{m=1}^{h-1} S_{r i_m - i_{m-1}} \right) - \sum_{j = L_{h-1} - s + 1}^{L_{h-1}} p_j.$$

Combining (b) and (c) shows that $\mathrm{cost}(\bar{I}) < \mathrm{cost}(I)$. This is a contradiction since both $I$ and $\bar{I}$ are valid sequences of length $h$ that end with the same value $i_h$ and $I$ is optimal. Thus the case $rI_h < I_{h-1}$ cannot happen and we are finished.
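The comparison in Fig. 9 can be reproduced numerically. The following Python sketch assumes that the cost of a sequence $(i_0, \ldots, i_h)$ is $\sum_{m=1}^{h} S_{r i_m - i_{m-1}}$, where $S_k$ is the sum of the $k$ smallest weights, which is how the cost is used in steps (b) and (c) above. The function name `sequence_cost` and the weights $p_j = j$ are hypothetical choices made only for illustration.

```python
from itertools import accumulate


def sequence_cost(seq, p_sorted, r=2):
    """Return sum over m >= 1 of S[r * seq[m] - seq[m-1]].

    p_sorted lists the weights in non-decreasing order, and S[k] is the
    sum of its first k entries (assumed meaning of S_k in the text).
    """
    S = [0] + list(accumulate(p_sorted))
    return sum(S[r * seq[m] - seq[m - 1]] for m in range(1, len(seq)))


# Hypothetical weights p_1..p_11 = 1..11, matching the 11 leaves of Fig. 9(a).
p = list(range(1, 12))
cost_I = sequence_cost((0, 1, 3, 7, 8), p)      # S_2 + S_5 + S_11 + S_9
cost_I_bar = sequence_cost((0, 1, 3, 5, 8), p)  # S_2 + S_5 + S_7 + S_11
```

For these two sequences the difference $\mathrm{cost}(I) - \mathrm{cost}(\bar{I})$ equals $S_9 - S_7 = p_8 + p_9$, which is positive for any positive weights, in line with Case 2 of the proof.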
