Malleable Coding: Compressed Palimpsests

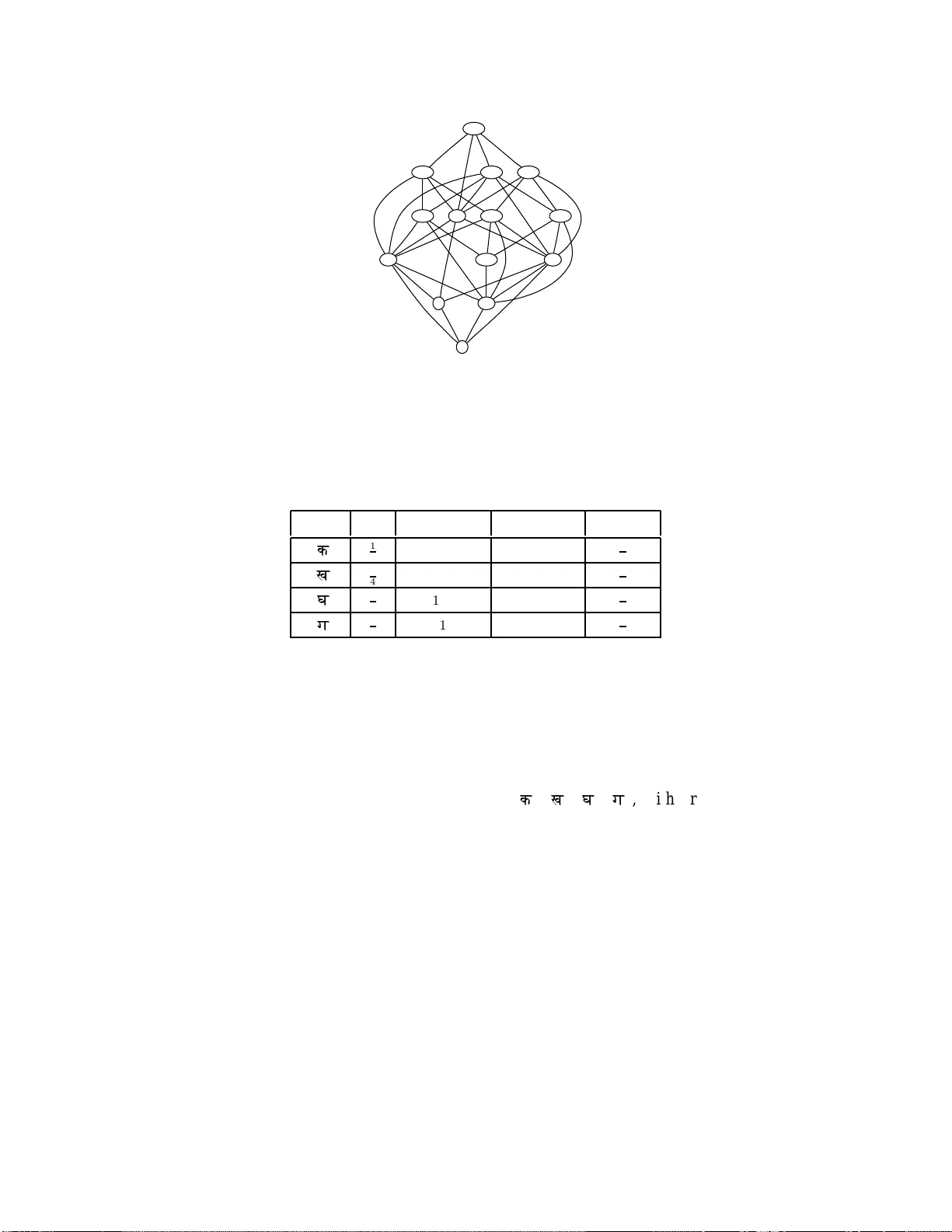

A malleable coding scheme considers not only compression efficiency but also the ease of alteration, thus encouraging some form of recycling of an old compressed version in the formation of a new one. Malleability cost is the difficulty of synchroniz…
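To illustrate the trade-off, here is a minimal sketch. The abstract is cut off before it names a malleability metric, so this sketch makes its own assumptions: it measures the cost of an update as the edit distance between the old and new compressed strings, and it uses `zlib` as an arbitrary stand-in compressor. The helper names `levenshtein` and `malleability_cost` are hypothetical. This is not the authors' scheme. It only shows how a tiny change to the source can be weighed against the change it causes in the compressed representation.

```python
import zlib


def levenshtein(a: bytes, b: bytes) -> int:
    """Dynamic-programming edit distance (unit-cost insert, delete, substitute)."""
    prev = list(range(len(b) + 1))
    for i, x in enumerate(a, start=1):
        curr = [i]
        for j, y in enumerate(b, start=1):
            curr.append(min(prev[j] + 1,              # delete x
                            curr[j - 1] + 1,          # insert y
                            prev[j - 1] + (x != y)))  # substitute x -> y
        prev = curr
    return prev[-1]


def malleability_cost(old: bytes, new: bytes) -> int:
    """Hypothetical proxy: edit distance between the two compressed versions."""
    return levenshtein(zlib.compress(old), zlib.compress(new))


old = b"the quick brown fox jumps over the lazy dog. " * 20
new = old.replace(b"fox", b"cat", 1)  # one small edit near the start of the source

print("source edit distance:", levenshtein(old, new))
print("compressed edit distance:", malleability_cost(old, new))
```

A conventional compressor like `zlib` is tuned only for compression efficiency. Comparing the two printed distances shows how much of the compressed string has to be rewritten for a small source edit. A malleable code would also try to keep that second number small.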

Authors: Lav R. Varshney, Julius Kusuma, Vivek K Goyal