Algorithmic Based Fault Tolerance Applied to High Performance Computing


Authors: George Bosilca, Rémi Delmas, Jack Dongarra, Julien Langou

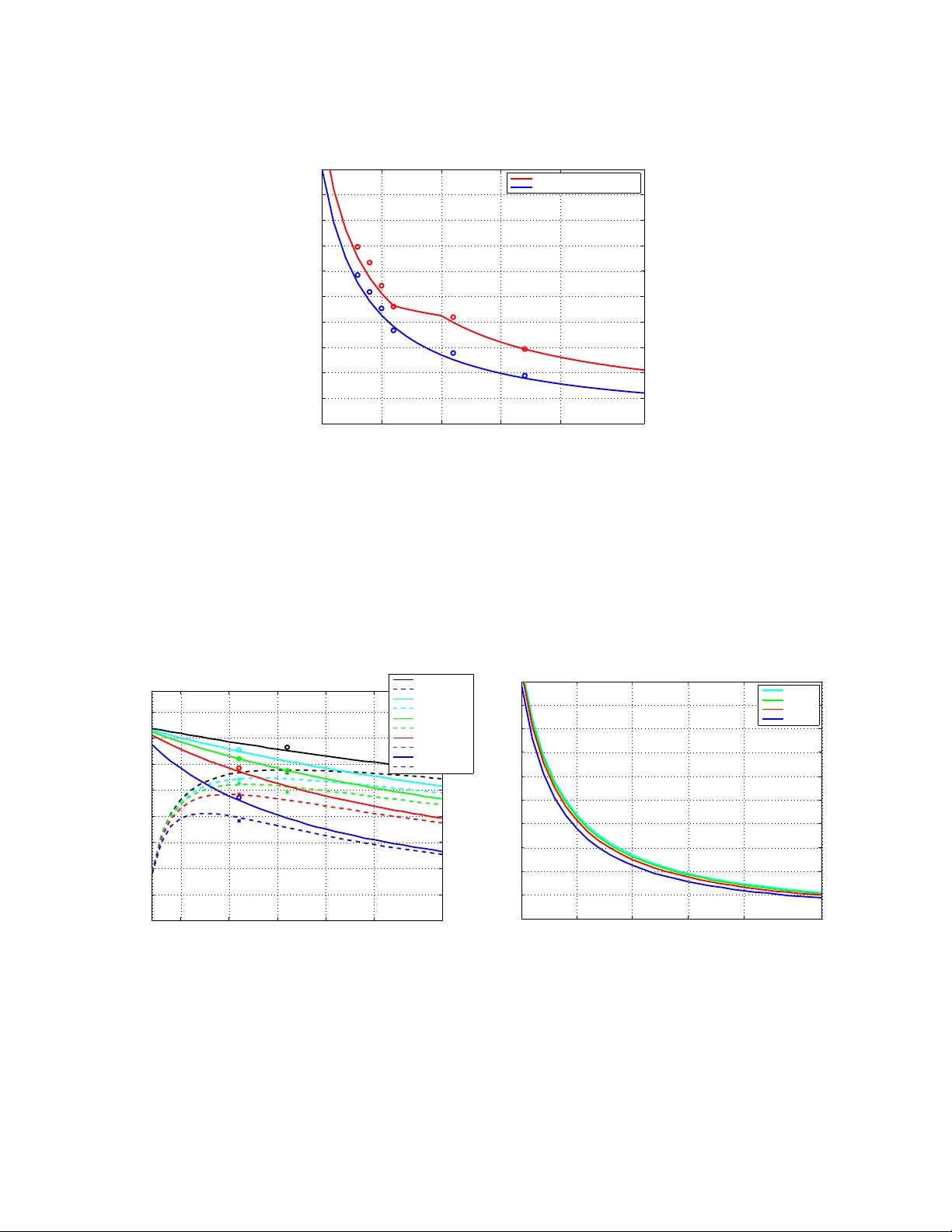

May 23, 2008

George Bosilca, Department of Electrical Engineering and Computer Science, University of Tennessee
Rémi Delmas, Department of Electrical Engineering and Computer Science, University of Tennessee
Jack Dongarra, Department of Electrical Engineering and Computer Science, University of Tennessee
Julien Langou, Department of Mathematical and Statistical Sciences, University of Colorado Denver

Abstract: We present a new approach to fault tolerance for High Performance Computing systems. Our approach is based on a careful adaptation of the Algorithmic Based Fault Tolerance technique (Huang and Abraham, 1984) to the needs of parallel distributed computation. We obtain a strongly scalable mechanism for fault tolerance. We can also detect and correct errors (bit flips) on the fly during a computation. To assess the viability of our approach, we have developed a fault-tolerant matrix-matrix multiplication subroutine, and we propose some models to predict its running time. Our parallel fault-tolerant matrix-matrix multiplication scores 1.4 TFLOPS on 484 processors (cluster jacquard.nersc.gov) and returns a correct result while one process failure has happened. This represents 65% of the machine peak efficiency and less than 12% overhead with respect to the fastest failure-free implementation. We predict (and have observed) that, as we increase the processor count, the overhead of the fault tolerance drops significantly.

1 Introduction

Much research has been conducted into the checkpointing of message passing applications [6]. The earliest project proposing checkpoint/restart facilities for a parallel application based on MPI was CoCheck [30], and some of the most current and widely used systems are LAM/MPI [7], MPICH-V/V2/V3 [6], CHARM++ [1] and Open MPI [5].
The foundation for all of these projects is that the system does not force MPI application developers to handle failures themselves; that is, the underlying system, be it the operating system or the MPI library itself, is responsible for failure detection and application recovery. The user of the system is not directly burdened with this at the MPI API layer.

System-level checkpointing in parallel and distributed computing settings has been studied extensively. The issue of coordinating checkpoints to define a consistent recovery line has received the attention of scores of papers, ably summarized in the survey paper of Elnozahy et al. [12]. Nearly all implementations of checkpointing (e.g., [2, 8, 11, 13, 19, 20, 23, 25, 27, 29, 30]) are based on globally coordinated checkpoints, stored on shared stable storage. The reason is that system-level checkpointers are complex pieces of code, and therefore real implementations typically keep the synchronization among checkpoints and processors simple [17]. While there are various techniques to improve the performance of checkpointing (again, see [12] for a summary), it is widely agreed that the overhead of writing data to stable storage is the dominant cost.

Diskless checkpointing has been studied in various guises by a few researchers. As early as 1994, Silva et al. explored the performance gains of storing complete checkpoints in the memories of remote processors [28]. Kim et al. [24] presented a similar idea of diskless checkpointing in 1994 as well. This technique was subsequently revised for SIMD machines by Chiueh [10]. More recently, diskless checkpointing of FFTs has been studied in [15]. Evaluations of diskless and hybrid diskless/disk-based systems have been performed by Vaidya [31]. Lu presents a comparison between diskless checkpointing and disk-based checkpointing [21].
While these techniques can be effective in some specific cases, overall, automatic application-oblivious checkpointing of message passing applications does suffer from scaling issues, and in some cases it can incur considerable message passing performance penalties. A relevant experimental setting assumes a constant failure rate per processor. Therefore, as the number of processors increases, the overall reliability of the system decreases accordingly. Elnozahy and Plank [14] proved that, under these conditions, checkpoint-restart is not a viable solution. Since the failure rate of the system increases with the number of processors, a scalable application requires its recovery time to decrease as the number of processors increases. The checkpoint-restart mechanism does not enjoy this property (at best, the cost of recovery is constant).

Our contribution with respect to existing HPC fault-tolerance research is to present a methodology to enrich existing linear algebra kernels with fault-tolerance capability at a low computational cost. We believe this is the first technique that enables a fault-tolerant application to reduce its fault-tolerance overhead as the number of processors increases while the problem size is kept constant. Moreover, not only can our method recover from process failures, it can also detect, locate and correct "bit-flip" errors in the output data. The cause of these errors (communication error, software error, etc.) is not relevant to the overall detection/location/correction process.

Algorithm-based fault tolerance (ABFT), originally developed by Huang and Abraham [16], is a low-cost fault-tolerance scheme to detect and correct permanent and transient errors in certain matrix operations on systolic arrays. The key idea of the ABFT technique is to encode the data at a higher level using checksum schemes and to redesign algorithms to operate on the encoded data.
These techniques and the flurry of papers that augmented them [3, 4, 22, 26] opened the door to jettisoning system-level techniques and focusing instead on high-performance fault tolerance of matrix operations. The present manuscript focuses on exposing a new technique specific to linear algebra, based on the ABFT approach of Huang and Abraham [16].

Our contribution with respect to the original work of Huang and Abraham is to extend ABFT to the parallel distributed context. Huang and Abraham were concerned with error detection, location and recovery in linear algebra operations: once a matrix-matrix multiplication (for example) has been performed, ABFT makes it possible to recover from errors. This scenario is not ideal for HPC, where we want to recover an error-free environment immediately after a failure. The contribution is therefore to create algorithms for which ABFT can be used on the fly.

This manuscript focuses on obtaining an efficient fault-tolerant matrix-matrix multiplication subroutine (PDGEMM). This application does not respond well to memory exclusion techniques [18]; therefore, standard checkpointing techniques perform poorly. Matrix-matrix multiplication is a kernel of fundamental importance for obtaining efficient linear algebra subroutines. Our claim is that we can encapsulate all the fault tolerance needed by the linear algebra subroutines in ScaLAPACK in a fault-tolerant Basic Linear Algebra Subroutines (BLAS) library. The third contribution of this manuscript is the presentation of a model to predict the running time of our routines. The fourth contribution of this manuscript is software based on FT-MPI, together with experimental results. Our parallel fault-tolerant matrix-matrix multiplication scores 1.4 TFLOPS on 484 processors (cluster jacquard.nersc.gov) and returns a correct result while one process failure has happened.
This represents 65% of the machine peak efficiency and less than 12% overhead with respect to the fastest failure-free implementation.

2 A new approach for HPC fault tolerance

2.1 Additional processors to store redundant information

During a computation, our data is spread across different processes. If one of these processes fails, we need an efficient way to recover the lost part of the data. To that end, we use additional processes to store redundant information in checksum form. Checksums are an efficient and memory-effective way of supporting multiple failures. If a vector of data $x$ is spread across $p$ processes, where $x_i$ is held by process $i$, then an additional process is added to store $y$ such that

$$y = a_1 x_1 + \dots + a_p x_p.$$

(For the sake of simplicity, we assume that the size of $x_i$ is the same on all the processes.) In case of a single failure of process $i$, the data on the failed process can trivially be restored from the additional checksum process and the non-failed processes, provided $a_i \neq 0$. This fault-tolerance mechanism is classically known as diskless checkpointing and was first introduced by Plank et al. [24]. The name diskless comes from the fact that checksums are stored on additional processes as opposed to being stored on disks. In order to support $f$ failures, $f$ additional processes are added and $f$ checksums are computed with the following linear relations:

$$\begin{aligned} y_1 &= a_{11} x_1 + \dots + a_{1p} x_p, \\ &\;\;\vdots \\ y_f &= a_{f1} x_1 + \dots + a_{fp} x_p. \end{aligned}$$

A sufficient condition to recover from any set of $f$ failures is that every $f$-by-$f$ submatrix of the $f$-by-$p$ matrix $A = (a_{ij})$ is nonsingular. Checksums are traditionally computed in Galois Field arithmetic. Another natural choice for encoding floating-point numbers is floating-point arithmetic. Galois Field arithmetic always guarantees bit-by-bit accuracy, whereas floating-point arithmetic suffers from numerical errors during the encoding and the recovery.
However, cancellation errors in the checksum are typically of the same order as those arising in the numerical methods themselves, and are therefore of no concern for the quality of the final solution. Additionally, the $f$-by-$f$ recovery submatrix is statistically guaranteed to be well-conditioned if we fill the checkpoint matrix $A$ with random values [9]. The ABFT technique presented in this manuscript is based on a floating-point checksum.

2.2 ABFT approaches to fault tolerance in FT-LA

In 1984, Huang and Abraham [16] proposed ABFT (Algorithm Based Fault Tolerance) to handle errors in numerical computation. This section relies heavily on their idea. To illustrate the ABFT technique, we consider two vectors $x$ and $y$, spread among $p$ processes, where process $i$ holds $x_i$ and $y_i$. An additional checksum process has been added to store the checksums $x_c = x_1 + \dots + x_p$ and $y_c = y_1 + \dots + y_p$. The checksums are computed in floating-point arithmetic. Assume now that one wants to compute $z = x + y$. This involves the local computation $z_i = x_i + y_i$ on process $i$, for $i = 1, \dots, p$, and the update of the checksum $z_c$. Instead of computing $z_c$ as $z_1 + \dots + z_p$, as proposed in Section 2.1, ABFT simply computes $z_c = x_c + y_c$. The traditional checkpoint method would require a global communication among the $p$ processes and an additional computational step. ABFT instead performs a local operation (no communication involved) on the additional process while the active processes perform the same kind of operation. Therefore, to keep the checksum $z_c$ consistent with the vector $z$, the only penalty with respect to the non-fault-tolerant case is one additional process. The same idea applies to other linear algebra operations, for example matrix-matrix multiplication, LU factorization, Cholesky factorization or QR factorization (see [3, 4, 16, 22, 26]).
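The following is a minimal sketch of the floating-point checksum of Section 2.1 and the ABFT vector update of Section 2.2. It uses Python with only the standard library and simulates each "process" as one entry of a list. The helper names `encode` and `recover`, the random weights $a_i$ and the block sizes are illustrative assumptions, not part of the ABFT BLAS library.

```python
import random

def encode(blocks, weights):
    """Checksum y = a_1 x_1 + ... + a_p x_p, computed entrywise in
    floating point; blocks[i] is the local vector of process i."""
    m = len(blocks[0])
    return [sum(a * blk[j] for a, blk in zip(weights, blocks)) for j in range(m)]

def recover(blocks, weights, checksum, lost):
    """Rebuild the block of the failed process `lost` from the checksum
    and the surviving blocks. Requires weights[lost] != 0."""
    out = []
    for j in range(len(checksum)):
        survivors = sum(a * blk[j] for i, (a, blk) in enumerate(zip(weights, blocks))
                        if i != lost)
        out.append((checksum[j] - survivors) / weights[lost])
    return out

rng = random.Random(42)
p, m = 4, 3                                          # 4 data processes, 3 entries each
weights = [rng.uniform(0.5, 1.5) for _ in range(p)]  # nonzero coefficients a_i
x = [[rng.uniform(-1, 1) for _ in range(m)] for _ in range(p)]
y = [[rng.uniform(-1, 1) for _ in range(m)] for _ in range(p)]
x_c, y_c = encode(x, weights), encode(y, weights)    # held by the checksum process

# ABFT update of z = x + y: every data process adds its local blocks and the
# checksum process adds the two checksums; no communication is needed.
z = [[u + v for u, v in zip(xb, yb)] for xb, yb in zip(x, y)]
z_c = [u + v for u, v in zip(x_c, y_c)]

# Process 2 fails: its block is lost and rebuilt from z_c and the survivors.
lost = 2
after_failure = [blk if i != lost else None for i, blk in enumerate(z)]
rebuilt = recover(after_failure, weights, z_c, lost)

drift = max(abs(u - v) for u, v in zip(z_c, encode(z, weights)))
error = max(abs(u - v) for u, v in zip(rebuilt, z[lost]))
print(f"checksum drift {drift:.1e}, recovery error {error:.1e}")
```

The checksum is linear, so $z_c = x_c + y_c$ holds for any fixed weights. With unit weights it reduces to the plain sums used in Section 2.2. Both the drift and the recovery error are rounding-level quantities, in line with the discussion of floating-point checksums in Section 2.1.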
As an example, we have studied ABFT in the context of matrix-matrix multiplication. Assume that $A$ and $B$ have been checkpointed such that

$$A^F = \begin{pmatrix} A & A C_R \\ C_C^T A & C_C^T A C_R \end{pmatrix} \quad\text{and}\quad B^F = \begin{pmatrix} B & B C_R \\ C_C^T B & C_C^T B C_R \end{pmatrix},$$

where $C_C$ and $C_R$ are the checksum matrices. Performing the matrix-matrix multiplication

$$\begin{pmatrix} A \\ C_C^T A \end{pmatrix} \begin{pmatrix} B & B C_R \end{pmatrix} = \begin{pmatrix} AB & AB\,C_R \\ C_C^T AB & C_C^T AB\,C_R \end{pmatrix} = (AB)^F, \tag{1}$$

we obtain the result $(AB)^F$, which is consistent; that is to say, it satisfies the same checkpoint relation as $A^F$ or $B^F$. This consistency enables us to detect, locate and correct errors.

In the context of erasures, one has to be more careful, since we want not only the input and output matrices to be consistent but also every intermediate quantity. Using the outer-product version of the matrix-matrix product (for $k = 1, \dots, n$: $C \leftarrow C + A_{:,k} B_{k,:}$), the intermediate matrices remain consistent as $C$ is updated along the loop in $k$. Therefore, a failure at any time during the algorithm can be recovered (see Figure 1).

Figure 1: Algorithm-Based Fault-Tolerant DGEMM

If a $p$-by-$p$ grid of $p^2$ processes performs the matrix-matrix multiplication, keeping the checksums consistent requires $(2p - 1)$ of these processes (one process row and one process column) to hold the checksums. The extra cost with respect to a non-fault-tolerant application occurs when the information of the matrix $A$ is broadcast along the process rows: one extra processor needs to receive the data. The same holds for the matrix $B$: when its information is broadcast along the process columns, one extra processor needs to receive the data. These are the only extra costs of being fault tolerant. From this analysis, we deduce that the main cost of enabling ABFT in a matrix-matrix multiplication is to dedicate $(2p - 1)$ processes out of $p^2$ to fault tolerance. Therefore, the more processors, the more advantageous the ABFT scheme is!
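Below is a minimal element-level sketch of Equation (1) and of the outer-product argument. It assumes that $C_C$ and $C_R$ are all-ones vectors, so that the checksums are plain column and row sums, and it lets each matrix entry stand in for one process's block. The helpers `matmul_outer`, `bad_rows` and `bad_cols` are hypothetical names, not the ABFT BLAS API. The sketch checks consistency after every rank-one update, then injects a "bit flip" into one entry of $AB$ and uses the row and column checksums to locate and correct it.

```python
import random

def matmul_outer(Ac, Br, on_step=None):
    """Compute Ac @ Br by rank-one (outer-product) updates, calling
    on_step(C) after each update so intermediate states can be inspected."""
    rows, inner, cols = len(Ac), len(Br), len(Br[0])
    C = [[0.0] * cols for _ in range(rows)]
    for k in range(inner):
        for i in range(rows):
            for j in range(cols):
                C[i][j] += Ac[i][k] * Br[k][j]
        if on_step is not None:
            on_step(C)
    return C

def bad_rows(CF, tol):
    """Rows whose data entries no longer sum to their row checksum (last column)."""
    n = len(CF[0]) - 1
    return [i for i in range(len(CF)) if abs(sum(CF[i][:n]) - CF[i][n]) > tol]

def bad_cols(CF, tol):
    """Columns whose data entries no longer sum to their column checksum (last row)."""
    n = len(CF) - 1
    return [j for j in range(len(CF[0]))
            if abs(sum(CF[i][j] for i in range(n)) - CF[n][j]) > tol]

rng = random.Random(1)
n, tol = 4, 1e-9
A = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(n)]
B = [[rng.uniform(-1, 1) for _ in range(n)] for _ in range(n)]

# [A; C_C^T A] and [B, B C_R], with C_C and C_R taken as all-ones vectors.
Ac = A + [[sum(A[i][k] for i in range(n)) for k in range(n)]]
Br = [row + [sum(row)] for row in B]

steps_ok = []

def record(C):
    steps_ok.append(not bad_rows(C, tol) and not bad_cols(C, tol))

CF = matmul_outer(Ac, Br, on_step=record)   # (AB)^F, as in Equation (1)
print("consistent after every update:", all(steps_ok))

# Inject a "bit flip" into one entry of AB, then locate and correct it.
CF[1][2] += 0.5
i, j = bad_rows(CF, tol)[0], bad_cols(CF, tol)[0]
CF[i][j] = CF[i][n] - sum(CF[i][c] for c in range(n) if c != j)
print("error located at", (i, j), "and corrected:",
      not bad_rows(CF, tol) and not bad_cols(CF, tol))
```

A single corrupted entry breaks exactly one row relation and one column relation, and their intersection locates it. An erased entry can be rebuilt the same way from its row or column checksum. Because the relations hold after every rank-one update, this works at any step of the loop.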
3 Model

3.1 PBLAS PDGEMM

It is beyond the scope of this manuscript to describe every aspect of parallel matrix-matrix multiplication; we refer the reader to [32] for more information. During a matrix-matrix multiplication, each block of $A$ in the current column (of size $m_{loc} \times nb$) is broadcast along the process row to all other processes. The same happens to $B$: each block in the current row (of size $nb \times n_{loc}$) is broadcast along the process column. After these two broadcasts, each process on the grid computes a local DGEMM. This step is repeated until the whole $A$ and $B$ matrices have been broadcast to every process.

For the sake of simplicity, we consider that the matrices are square, of size $n$-by-$n$, and distributed over a square $\sqrt{p}$-by-$\sqrt{p}$ grid of $p$ processes. In SUMMA, we implement the broadcast as a message passed around the logical ring formed by the row or column. In that case, the time complexity becomes

$$
\begin{aligned}
&(\sqrt{p} - 1)\left(\alpha + \tfrac{n}{\sqrt{p}}\beta\right) + (\sqrt{p} - 1)\left(\alpha + \tfrac{n}{\sqrt{p}}\beta\right) && (2)\\
&+ k\left(\tfrac{2n^2}{p}\gamma + \alpha + \tfrac{n}{\sqrt{p}}\beta + \alpha + \tfrac{n}{\sqrt{p}}\beta\right) && (3)\\
&+ (\sqrt{p} - 2)\left(\alpha + \tfrac{n}{\sqrt{p}}\beta\right) + (\sqrt{p} - 2)\left(\alpha + \tfrac{n}{\sqrt{p}}\beta\right) && (4)\\
&+ \tfrac{2n^2}{p}\gamma && (5)\\
&= \tfrac{2n^2(n + 1)}{p}\gamma + 2\left(n + 2\sqrt{p} - 3\right)\left(\alpha + \tfrac{n}{\sqrt{p}}\beta\right), && (6)
\end{aligned}
$$

where $k = n$ is the number of rank-one update steps, $\alpha$ is the latency, $\beta$ is the inverse of the bandwidth (time per word) and $\gamma$ is the inverse of the flop rate. Term (2) is the time required to fill the pipe, that is, the time for the messages originating from $A$ and $B$ to reach the last process in the row and in the column. The next term (3) is the time to perform the sequential matrix-matrix multiplication and to pass the messages. Then, contribution (4) is the time for the final messages to reach the end of the pipe. The last term (5) is the time for the final update at the node at the end of the pipe.
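The term-by-term sum of (2) to (5) can be checked numerically against (6). The sketch below assumes $k = n$ rank-one steps, a final update of $2n^2/p$ flops, $\alpha$ as the per-message latency and $\beta$ as the time per 8-byte word. It plugs in the machine parameters of Section 4.2: 3.75 GFLOPS/sec, 52.5 MBytes/sec and 4.5 µsec latency. Reading 1 MByte as $10^6$ bytes and using 8-byte words are our own assumptions. The sketch then evaluates the predicted per-process rate under weak scaling with $n/\sqrt{p} = 3000$. It illustrates the model and is not the authors' fitting code.

```python
import math

def summa_terms(n, p, alpha, beta, gamma):
    """Sum of terms (2)-(5): ring-pipelined SUMMA on a sqrt(p)-by-sqrt(p)
    grid with k = n rank-one steps."""
    s = math.sqrt(p)
    msg = alpha + (n / s) * beta                   # one message of n/sqrt(p) words
    fill = 2 * (s - 1) * msg                       # (2) fill the pipes for A and B
    steady = n * (2 * n**2 / p * gamma + 2 * msg)  # (3) local updates plus messages
    drain = 2 * (s - 2) * msg                      # (4) empty the pipes
    last = 2 * n**2 / p * gamma                    # (5) final local update
    return fill + steady + drain + last

def summa_closed_form(n, p, alpha, beta, gamma):
    """Right-hand side of Equation (6)."""
    s = math.sqrt(p)
    return (2 * n**2 * (n + 1) / p * gamma
            + 2 * (n + 2 * s - 3) * (alpha + (n / s) * beta))

gamma = 1 / 3.75e9    # seconds per flop
beta = 8 / 52.5e6     # seconds per 8-byte word (assumed word size)
alpha = 4.5e-6        # seconds of latency per message

rates = {}
for g in (8, 11, 16, 22):              # square grids, p = g * g processes
    p, n = g * g, 3000 * g             # weak scaling: n / sqrt(p) = 3000
    t = summa_closed_form(n, p, alpha, beta, gamma)
    assert math.isclose(t, summa_terms(n, p, alpha, beta, gamma), rel_tol=1e-12)
    rates[p] = 2 * n**3 / (p * t)      # useful flops per second per process
    print(f"p = {p:3d}: {rates[p] / 1e9:.2f} GFLOPS/sec/proc")
```

The predicted rate is nearly the same at every grid size, which is the weak scalability property that Equation (8) expresses.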
The complexity (6) is then approximately

$$\frac{2n^3}{p}\gamma + 2\left(n + 2\sqrt{p} - 3\right)\left(\alpha + \frac{n}{\sqrt{p}}\beta\right), \tag{7}$$

and the estimated efficiency is

$$E(n, p) = \frac{1}{1 + O\!\left(\frac{p}{n^2}\right) + O\!\left(\frac{\sqrt{p}}{n}\right)}. \tag{8}$$

We can see that the method is weakly scalable: if we increase the number of processors while keeping the memory use per node constant (thus keeping $p/n^2$ constant), this algorithm keeps its efficiency constant. In the remainder, we neglect the latency term ($\alpha$) in the communication cost.

3.2 ABFT PDGEMM (0 failure)

In this section, we derive a model for ABFT PDGEMM. If we perform a traditional matrix-matrix multiplication on checkpointed operands, the result will be a checkpointed matrix (see Equation (1)). In the outer-product variant of the matrix-matrix multiplication algorithm, rank-$nb$ updates are applied to the global matrix $C$. Therefore, the checksum stays consistent throughout the execution of the algorithm, provided that all the processes proceed at the same pace. The last row of $A$ (checksum) and the last column of $B$ (checksum) are sent exactly in the same way as the rest of the data (see Figure 1). When no failure occurs, the overheads of the fault tolerance are:

• the initial checksum. However, if we call ABFT BLAS functions one after the other, we do not have to recompute the checksum between calls. As a consequence, we do not consider the cost of the initial checksum.
• the computation of an $(n + n_{loc})$-by-$n$-by-$(n + n_{loc})$ matrix-matrix multiplication, instead of $n$-by-$n$-by-$n$ for a non-fault-tolerant code.
• the broadcasts, which need to be performed on $\sqrt{p} + 1$ processes along each process row and each process column, instead of $\sqrt{p}$ for a non-fault-tolerant matrix-matrix multiplication.

Figure 2: Overhead between no fault and one fault in ABFT SUMMA
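The sketch below puts numbers on the overheads listed above. It takes $n_{loc} = n/\sqrt{p}$, in which case the flop count grows by $((n + n_{loc})/n)^2 = (1 + 1/\sqrt{p})^2$ and each broadcast block grows by $1 + 1/\sqrt{p}$. It also computes the share of processes that hold checksums in a $g$-by-$g$ grid with one checksum row and one checksum column, which is $(2g - 1)/g^2$ as in the convention of Section 4.2. The function names are illustrative only.

```python
import math

def flop_factor(p):
    """(n + nloc)-by-n-by-(n + nloc) versus n-by-n-by-n flops with
    nloc = n / sqrt(p): ((n + nloc) / n)**2 = (1 + 1/sqrt(p))**2."""
    return (1 + 1 / math.sqrt(p)) ** 2

def message_factor(p):
    """Broadcast blocks grow from n/sqrt(p) to (n + nloc)/sqrt(p) words."""
    return 1 + 1 / math.sqrt(p)

def checksum_fraction(g):
    """Share of a g-by-g process grid (one checksum row plus one checksum
    column) that stores checksums instead of data: (2g - 1) / g**2."""
    return (2 * g - 1) / g ** 2

for g in (8, 11, 16, 22, 32):
    p = g * g
    print(f"p = {p:4d}: flops x{flop_factor(p):.3f}, "
          f"messages x{message_factor(p):.3f}, "
          f"checksum processes {100 * checksum_fraction(g):.1f}%")
```

The two growth factors tend to 1 and the checksum share tends to 0 as $p$ grows. This is the quantitative content of the remark that the ABFT scheme becomes more advantageous with more processors.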
Using Equation (6), we can then write the complexity of ABFT PDGEMM with no fault:

$$\frac{2(n + n_{loc})^2 n}{p}\gamma + 2\left(n + 2\sqrt{p} - 3\right)\frac{n + n_{loc}}{\sqrt{p}}\beta. \tag{9}$$

The modification in the first term of the sum comes from the fact that the matrix-matrix multiplication now involves $(n + n_{loc})$-by-$n$ and $n$-by-$(n + n_{loc})$ matrices on the same number of processors. The modification in the second term shows that the pipeline now carries larger messages than in regular SUMMA.

3.3 ABFT PDGEMM (1 failure)

When one fault happens, the timeline is as illustrated in Figure 2 and explained below.

• $T_{detection}$: All non-failed processes must be notified when a failure happens. Since processes are only notified when attempting an MPI communication, odds are that at least one process will be performing a full local DGEMM before being notified. This overhead is therefore roughly equal to the time of one local DGEMM.
• $T_{restart}$: FT-MPI must spawn a new process to replace the failed one. This is a blocking operation, and the time for this step depends solely on the total number of processes involved in the computation.
• $T_{pushdata}$: The non-failed processes must reach the same consistent state. The time overhead consists of filling and emptying the pipe once.
• $T_{checksum}$: The last step in the recovery is to reconstruct the lost data of the failed process. This cost is the cost of an MPI_Reduce.

Figure 3: Data distribution in the ABFT BLAS framework for vectors and matrices over a 4-by-6 process grid

4 Experimental results

4.1 ABFT BLAS framework

A prototype framework, ABFT BLAS, has been designed and implemented. The ABFT BLAS application relies on the FT-MPI library for handling process failures at the middleware level. The responsibility of ABFT BLAS is to recover (efficiently) the data lost during a crash. Vectors over processes are created and then destroyed. Several operations over the vectors are possible: scalar product, norm, vector addition, etc.
Vectors are registered with the fault-tolerant context. When a failure occurs, all data registered in the fault-tolerant context is recovered and the application continues. The default encoding mode is diskless checkpointing with floating-point arithmetic, but Galois Field encoding is available as an option (although it rules out ABFT). The same functionality has been implemented for dense matrices. Dense matrices are spread over the processors in the 2D block-cyclic format, and checksums can be computed by rows, by columns or both (see Figure 3). The available matrix and vector operations include matrix-vector products and several implementations of matrix-matrix multiplication. One of the main features of the ABFT BLAS library is that the user can stack fault-tolerant parallel routines one on top of another. The code looks like sequential code, but the resulting application is parallel and fault tolerant.

4.2 Experimental setup

All runs were done on the jacquard.nersc.gov cluster at the National Energy Research Scientific Computing Center (NERSC). This cluster is a 512-CPU Opteron cluster running a Linux operating system. Each processor runs at a clock speed of 2.2 GHz and has a theoretical peak performance of 4.4 GFLOPS/sec. The measured peak (on a 3000-by-3000-by-3000 DGEMM run) is 4.03 GFLOPS/sec. The nodes are interconnected with a high-speed InfiniBand network. The latency is 4.5 µsec, while the bandwidth is 620 MB/sec. Below are more details on the experimental setup:

• we have systematically used square processor grids.
• the block size for the algorithm is always 64.
• process failures were triggered manually. We introduced an EXIT statement in the application for one arbitrary non-checksum process.
Although our application supports failures at any point in the execution (see Section 4.3), we found that a constant failure point was the most practical and reproducible approach for performance measurements.
• when we present results of ABFT PDGEMM on a $p$-by-$p$ grid, the total number of processors used is $p^2$: $(p - 1)^2$ processors are used to process the data, while $(2p - 1)$ are used to checkpoint the data.
• the performance model parameters are a flop rate of 3.75 GFLOPS/sec and a bandwidth of 52.5 MBytes/sec.

These two machine-dependent parameters and our models (see Section 3) are enough to predict the running time of PBLAS PDGEMM and ABFT BLAS PDGEMM (0 failure). To model ABFT BLAS PDGEMM (1 failure), we also need the time for a checkpoint (MPI_Reduce) and the time for the FT-MPI library to recover from a failure (see Section 3.3).

4.3 Stress test

In order to assess the robustness of our library, we have designed the following stress test. We set up an infinite loop in which, at each iteration, we initialize the data ($A$ and $B$), checkpoint the data, perform a matrix-matrix multiplication $C \leftarrow AB$, and check the result with residual checking. Residual checking consists of taking a random vector $x$ and checking that $\|Cx - A(Bx)\| / (n \varepsilon \|C\| \|x\|)$ is reasonably small. If this test passes, then we have high confidence in the correctness of our matrix-matrix multiplication routine. During the execution, a process killer is activated. This process killer kills any process of the application, at random times. Our application has successfully returned from tens of such failures. This testing stresses not only the matrix-matrix multiplication subroutine but also the whole ABFT BLAS library, since failures can occur at any time.

4.4 Performance results

In Figure 4, we present a weak scalability experiment.
While the number of processors increases from 64 (8-by-8) to 484 (22-by-22), we keep the local matrix size constant ($n_{loc}$ = 1000, ..., 4000) and observe the performance of PBLAS PDGEMM and ABFT BLAS PDGEMM with no failure.

Figure 4: Performance (GFLOPS/sec/proc) of PBLAS PDGEMM (left) and ABFT BLAS PDGEMM with 0 failure (right). The solid lines represent the model, while the circles represent experimental points. [Both panels: GFLOPS/sec/proc versus # procs (64, 81, 100, 121, 256, 484), one curve per $n_{loc}$ = 1000, 2000, 3000, 4000.]

The first observation is that our model performs very well. With only two parameters ($\beta$ and $\gamma$), we are able to predict the 48 experimental values within a few percent. We observe that PBLAS PDGEMM is weakly scalable: its performance per processor stays constant as we increase the number of processors. We also observe that the performance of ABFT PDGEMM increases as the processor count increases.

In Figure 5, Table 1, Figure 6 and Table 2, we present the weak scalability of our application when $n_{loc}$ is kept constant at $n_{loc} = 3000$. We present performance results for PBLAS PDGEMM, ABFT BLAS PDGEMM with 0 failure and ABFT BLAS PDGEMM with 1 failure. We see that as the number of processors increases, the cost of the fault tolerance converges to 0. Because matrix-matrix multiplication performs $O(n^3)$ operations, many $O(n^2)$ operations (for example, checkpointing) can be hidden in the background. This makes weak scalability easy to achieve.

In Figure 7, we present a strong scalability experiment. The right graph supports our claim: for a fixed problem size, when we increase the number of processors, the overhead of the fault tolerance decreases to 0. We also observe that the problem size is not relevant in terms of overhead; the overhead is governed only by the number of processors.
We do not know of any other fault-tolerant scheme that possesses these two qualities.

Figure 5: Performance (GFLOPS/sec/proc) of PBLAS PDGEMM, ABFT BLAS PDGEMM (0 failure) and ABFT BLAS PDGEMM (1 failure). The solid lines represent the model, while the circles represent experimental points. This is a weak scalability experiment with $n_{loc} = 3000$. (See also Table 1.) [Plot: GFLOPS/proc versus # procs, from 4 to 1024.]

Table 1: Performance per processor (GFLOPS/sec/proc) and cumulative performance (GFLOPS/sec) of PBLAS PDGEMM, ABFT BLAS PDGEMM (0 failure) and ABFT BLAS PDGEMM (1 failure). The number without parentheses is the experimental result; the number in parentheses is the model value. This is a weak scalability experiment with $n_{loc} = 3000$. (See also Figure 5.)

Performance per processor (GFLOPS/sec/proc):

| # procs | 64 | 81 | 100 | 121 | 256 | 484 |
|---|---|---|---|---|---|---|
| PBLAS PDGEMM | 3.14 (3.09) | 3.16 (3.09) | 3.14 (3.10) | 3.10 (3.10) | 3.12 (3.12) | 3.13 (3.13) |
| ABFTBLAS PDGEMM (0 failure) | 2.43 (2.49) | 2.51 (2.55) | 2.56 (2.60) | 2.62 (2.65) | 2.74 (2.79) | 2.86 (2.88) |
| ABFTBLAS PDGEMM (1 failure) | 2.33 (2.40) | 2.40 (2.46) | 2.47 (2.52) | 2.52 (2.53) | 2.58 (2.63) | 2.73 (2.74) |

Cumulative performance (GFLOPS/sec):

| # procs | 64 | 81 | 100 | 121 | 256 | 484 |
|---|---|---|---|---|---|---|
| PBLAS PDGEMM | 201 (198) | 256 (251) | 314 (310) | 375 (376) | 799 (798) | 1515 (1513) |
| ABFTBLAS PDGEMM (0 failure) | 156 (159) | 203 (207) | 256 (260) | 317 (320) | 701 (714) | 1384 (1395) |
| ABFTBLAS PDGEMM (1 failure) | 149 (154) | 194 (200) | 247 (252) | 305 (306) | 660 (672) | 1321 (1327) |

Table 2: Overhead of the fault tolerance with respect to the non-failure-resilient application PBLAS PDGEMM (execution time as a percentage of the PBLAS PDGEMM time). This is a weak scalability experiment with $n_{loc} = 3000$. (See also Figure 6.)

| # procs | 64 | 81 | 100 | 121 | 256 | 484 |
|---|---|---|---|---|---|---|
| PBLAS PDGEMM | 100.0 | 100.0 | 100.0 | 100.0 | 100.0 | 100.0 |
| ABFTBLAS PDGEMM (0 failure) | 129.2 | 125.9 | 122.7 | 118.3 | 113.9 | 109.4 |
| ABFTBLAS PDGEMM (1 failure) | 134.8 | 131.7 | 127.1 | 123.0 | 120.9 | 114.7 |

Figure 6: Overhead of the fault tolerance with respect to the non-failure-resilient application PBLAS PDGEMM. The plain curves correspond to the model, while the circles correspond to experimental data. This is a weak scalability experiment with $n_{loc} = 3000$. (See also Table 2.) [Plot: % time overhead compared to PBLAS PDGEMM versus # procs, from 25 to 1024, for ABFTBLAS PDGEMM with 0 and 1 failure.]

Figure 7: Strong scalability of PBLAS PDGEMM and ABFT PDGEMM with 0 failure. (a) Performance (GFLOPS/sec/proc) versus # procs for N = 10000, 20000, 30000, 40000 and 60000: PBLAS PDGEMM (plain) and ABFT PDGEMM (dashed). (b) Overhead (% time) of ABFT PDGEMM with respect to PBLAS PDGEMM versus # procs for N = 10000, 20000, 30000 and 40000.

References

[1] Charm++ web site. http://charm.cs.uiuc.edu/u/ft/.
[2] A. Agbaria and R. Friedman. Starfish: Fault-tolerant dynamic MPI programs on clusters of workstations. In 8th IEEE International Symposium on High Performance Distributed Computing, 1999.
[3] P. Banerjee and J. A. Abraham. Bounds on algorithm-based fault tolerance in multiple processor systems. IEEE Transactions on Computers, 35(4):296–306, April 1986.
[4] P. Banerjee, J. T. Rahmeh, C. Stunkel, V. S. Nair, K. Roy, V. Balasubramanian, and J. A. Abraham. Algorithm-based fault tolerance on a hypercube multiprocessor. IEEE Transactions on Computers, 35(9):1132–1145, September 1990.
[5] A. Bouteiller, G. Bosilca, and J. Dongarra. Redesigning the message logging model for high performance. In ISC 2008, International Supercomputing Conference, Dresden, Germany, June 17-20, 2008.
[6] A. Bouteiller, P. Lemarinier, G. Krawezik, and F. Cappello. Coordinated checkpoint versus message log for fault tolerant MPI. In Proceedings of Cluster 2003, Hong Kong, December 2003.
[7] G. Burns, R. Daoud, and J. Vaigl. LAM: An open cluster environment for MPI. In Proceedings of Supercomputing Symposium, pages 379–386, 1994.
[8] J. Casas et al. MIST: PVM with transparent migration and checkpointing. In 3rd Annual PVM Users' Group Meeting, Pittsburgh, PA, 1995.
[9] Z. Chen and J. Dongarra. Condition numbers of Gaussian random matrices. SIAM Journal on Matrix Analysis and Applications, 27(3):603–620, 2005.
[10] T. Chiueh and P. Deng. Efficient checkpoint mechanisms for massively parallel machines. In 26th International Symposium on Fault-Tolerant Computing, Sendai, June 1996.
[11] A. Clematis and V. Gianuzzi. CPVM - extending PVM for consistent checkpointing. In 4th Euromicro Workshop on Parallel and Distributed Processing, Braga, January 1996.
[12] E. N. Elnozahy, L. Alvisi, Y.-M. Wang, and D. B. Johnson. A survey of rollback-recovery protocols in message-passing systems. ACM Computing Surveys, 34(3):375–408, September 2002.
[13] E. N. Elnozahy, D. B. Johnson, and W. Zwaenepoel. The performance of consistent checkpointing. In 11th Symposium on Reliable Distributed Systems, October 1992.
[14] E. N. Elnozahy and J. S. Plank. Checkpointing for peta-scale systems: A look into the future of practical rollback-recovery. IEEE Transactions on Dependable and Secure Computing, 1(2):97–108, 2004.
[15] C. Engelmann and G. A. Geist. A diskless checkpointing algorithm for super-scale architectures applied to the fast Fourier transform. In Challenges of Large Applications in Distributed Environments, 2003.
[16] K. Huang and J. Abraham. Algorithm-based fault tolerance for matrix operations. IEEE Trans. on Comp. (Spec. Issue Reliable & Fault-Tolerant Comp.), 33:518–528, 1984.
[17] Y. Huang and Y.-M. Wang. Why optimistic message logging has not been used in telecommunication systems. 1995.
[18] Y. Kim, J. S. Plank, and J. Dongarra. Fault tolerant matrix operations for networks of workstations using multiple checkpointing. In High Performance Computing on the Information Superhighway, HPC Asia '97, Seoul, Korea, 1997.
[19] J. Leon, A. L. Fisher, and P. Steenkiste. Fail-safe PVM: A portable package for distributed programming with transparent recovery. Technical Report CMU-CS-93-124, Carnegie Mellon University, February 1993.
[20] W.-J. Li and J.-J. Tsay. Checkpointing message-passing interface (MPI) parallel programs. In Pacific Rim International Symposium on Fault-Tolerant Systems, December 1997.
[21] C. D. Lu. Scalable Diskless Checkpointing for Large Parallel Systems. Ph.D. dissertation, University of Illinois at Urbana-Champaign, 2005.
[22] F. T. Luk and H. Park. An analysis of algorithm-based fault tolerance techniques. Journal of Parallel and Distributed Computing, 5:172–184, 1988.
[23] V. K. Naik, S. P. Midkiff, and J. E. Moreira. A checkpointing strategy for scalable recovery on distributed parallel systems. In SC97: High Performance Networking and Computing, San Jose, CA, 1997.
[24] J. S. Plank, Y. Kim, and J. Dongarra. Algorithm-based diskless checkpointing for fault tolerant matrix operations. In 25th International Symposium on Fault-Tolerant Computing, Pasadena, CA, June 1995.
[25] J. Pruyne and M. Livny. Managing checkpoints for parallel programs. In Workshop on Job Scheduling Strategies for Parallel Processing (IPPS '96), 1996.
[26] A. Roy-Chowdhury and P. Banerjee. Algorithm-based fault location and recovery for matrix computations. In 24th International Symposium on Fault-Tolerant Computing, Austin, TX, 1994.
[27] L. M. Silva and J. G. Silva. Global checkpoints for distributed programs. In 11th Symposium on Reliable Distributed Systems, Houston, TX, 1992.
[28] L. M. Silva, B. Veer, and J. G. Silva. Checkpointing SPMD applications on transputer networks. In Scalable High Performance Computing Conference, Knoxville, TN, May 1994.
[29] G. Stellner. Consistent checkpoints of PVM applications. In First European PVM User Group Meeting, Rome, Italy, 1994.
[30] G. Stellner. CoCheck: Checkpointing and process migration for MPI. In Proceedings of the 10th International Parallel Processing Symposium (IPPS '96), Honolulu, Hawaii, April 1996.
[31] N. H. Vaidya. A case for two-level distributed recovery schemes. In ACM SIGMETRICS Conference on Measurement and Modeling of Computer Systems, Ottawa, CA, May 1995.
[32] R. A. van de Geijn and J. Watts. SUMMA: scalable universal matrix multiplication algorithm. Concurrency: Practice and Experience, 9(4):255–274, 1997.
