Linear code-based vector quantization for independent random variables

In this paper we analyze the rate-distortion function R(D) achievable using linear codes over GF(q), where q is a prime number.

Authors: Boris Kudryashov, Kirill Yurkov

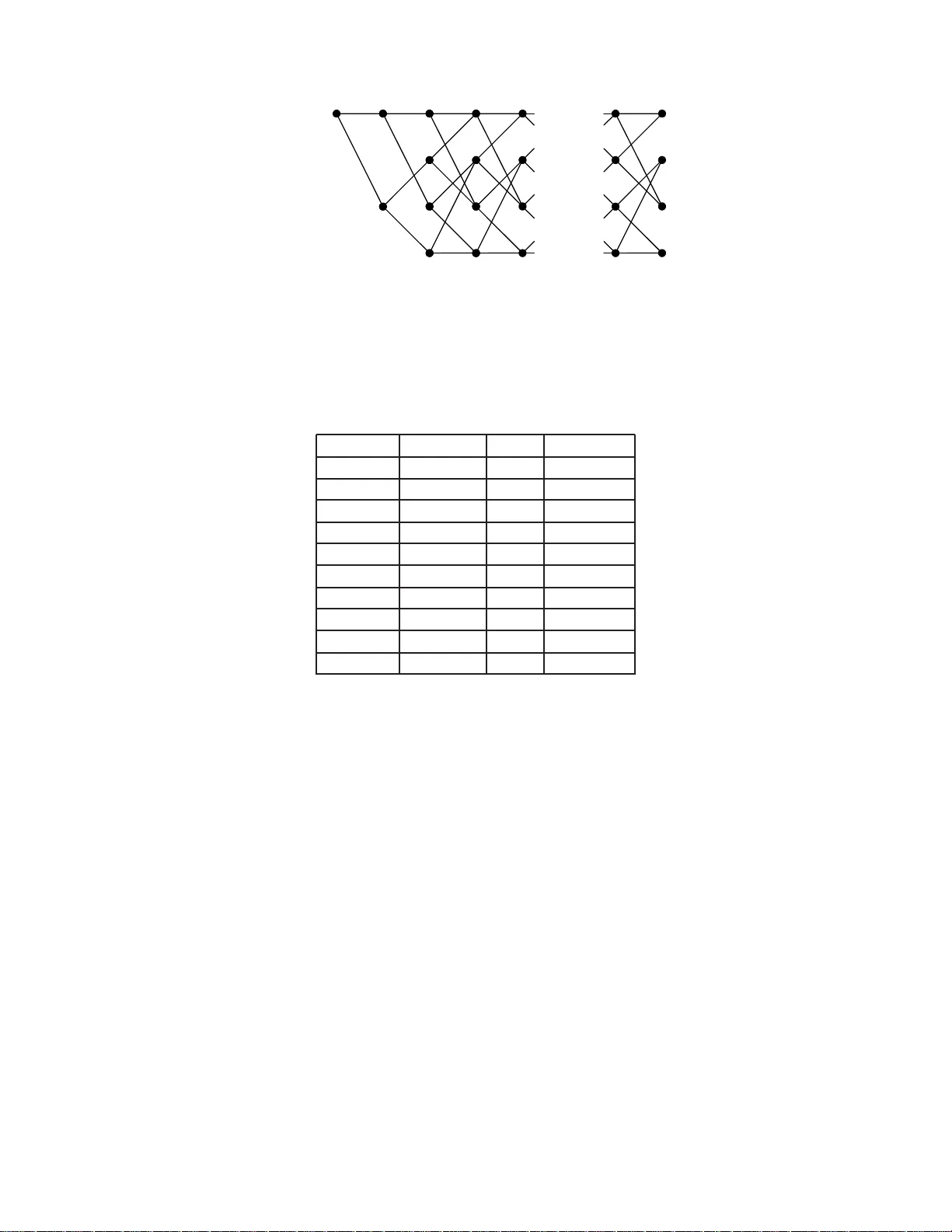

Boris D. Kudryashov, Department of Information Systems, St. Petersburg Univ. of Information Technologies, Mechanics and Optics, St. Petersburg 197101, Russia. Email: boris@eit.lth.se

Kirill V. Yurkov, Department of Information Systems, St. Petersburg University on Aerospace Instrumentation, St. Petersburg, 190000, Russia. Email: yourkovkirill@mail.ru

Abstract

Computationally efficient vector quantization for a discrete time source can be performed using lattices over block linear codes or convolutional codes. For high rates (low distortion) and uniform distribution the performance of a multidimensional lattice depends mainly on the normalized second moment (NSM) of the lattice. For relatively low rates (high distortions) and non-uniform distributions the lattice-based quantization can be non-optimal in terms of achieving the Shannon rate-distortion function $H(D)$. In this paper we analyze the rate-distortion function $R(D)$ achievable using linear codes over $GF(q)$, where $q$ is a prime number. We show that even for $q = 2$ the NSM of code-based quantizers is close to the minimum achievable limit. If $q \to \infty$, then NSM $\to 1/(2\pi e)$, which is the NSM of the infinite-dimensional sphere. By exhaustive search over $q$-ary time-invariant convolutional codes with memory $\nu \le 8$, the NSM-optimum codes for $q = 2, 3, 5$ were found. For low rates (high distortions) we use a non-lattice quantizer obtained from a lattice quantizer by extending the "zero zone". Furthermore, we modify the encoding by a properly assigned metric to approximation values. Due to such modifications, for a wide class of probability distributions and a wide range of bit rates we obtained up to 1 dB signal-to-noise ratio improvement compared to currently known results.
Index terms: Arithmetic coding, convolutional codes, lattice, lattice quantization, normalized second moment, random coding, rate-distortion function, scalar quantization, vector quantization

I. INTRODUCTION

For an arbitrary discrete time memoryless continuous stationary source $X = \{x\}$, approximation alphabet $Y = \{y\}$, and a given distortion measure $d(x, y)$, the theoretical limit of the code rate $R$ under the condition that the average distortion does not exceed $D$ is determined by the Shannon rate-distortion function $H(D)$ [1],

$$H(D) = \min_{\{f(y|x)\}:\ \mathrm{E}[d(x,y)] \le D} I(X;Y)$$

where $I(X;Y)$ denotes the mutual information between $X$ and $Y$. An encoder of a discrete time continuous source is often referred to as a vector quantizer, and the code as a codebook [2]. Coding theorems about achievability of $H(D)$ are proved by random coding techniques without imposing any restrictions on the codebook structure. The encoding complexity for such a codebook is in general proportional to the codebook size; i.e., the encoding complexity for sequences of length $n$ with rate $R$ bits per sample is proportional to $2^{Rn}$.

A large step towards reducing the quantization complexity is due to using quantizers based on multidimensional lattices [3]. In particular, Conway and Sloane in [4] and [5] proposed a simple encoding algorithm which, in essence, requires a number of scalar quantization operations proportional to the dimension $n$ of the codebook, followed by an exhaustive search over the lattice points in the vicinity of the source sequence. The complexity of this quantization still grows exponentially in the dimension $n$ of the vectors, but much slower than $2^{Rn}$.
Important results related to vector quantization efficiency were obtained by Zador [6], who showed that, in the case of small quantization errors, the characteristics of the quantizer depend on a parameter $G_n$ which is determined by the shape of the Voronoi polyhedrons of the codebook entries. For lattice quantizers, i.e., when all Voronoi regions are congruent to each other, $G_n$ is equal to the so-called normalized second moment (NSM) of the lattice (see (6) in Section II below). Constructions of lattices with good NSM values are presented in [3]. For example, for the Leech lattice $\Lambda_{24}$, NSM = 0.0658. Since for the lattice $\mathbb{Z}$ (the set of integers) NSM = 1/12, the so-called "granular gain" of a lattice quantizer in dB is computed as $10 \log_{10}\bigl(1/(12\,\mathrm{NSM})\bigr)$. Therefore the granular gain of $\Lambda_{24}$ with respect to $\mathbb{Z}$ is equal to 1.029 dB, which is 0.513 dB away from the theoretical limit $10 \log_{10}(2\pi e/12) \approx 1.54$ dB corresponding to covering the Euclidean space by $n$-dimensional spheres when $n \to \infty$.

Granular gains achieved by different code constructions were reported in [7]. In particular, the Marcellin-Fischer [8] trellis-based quantizers are presented in [7] as the NSM record-holder. For example, with a trellis having 256 states at each level a granular gain of 1.36 dB is achieved (only ≈ 0.17 dB from the sphere-covering bound). The Marcellin-Fischer [8] quantizer is still the best known in the sense of granular gain, which means that these quantizers are good for uniformly distributed random variables. Meanwhile, the results reported in [8] for the Laplacian and Gaussian distributions were later improved in [9], [10], [11], and [12]. Furthermore, the best currently known results for the generalized Gaussian distribution with parameter $\alpha = 0.5$ are presented in [13].
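The conversion between an NSM value and a granular gain in dB can be sketched in a few lines. This is only an illustration: the function names are hypothetical, and the gain is taken as $10\log_{10}(1/(12\,\mathrm{NSM}))$ so that it is positive for lattices that are better than $\mathbb{Z}$.

```python
import math

def granular_gain_db(nsm: float) -> float:
    """Granular gain in dB relative to the integer lattice Z (NSM = 1/12)."""
    return 10.0 * math.log10(1.0 / (12.0 * nsm))

def nsm_from_gain_db(gain_db: float) -> float:
    """Inverse mapping: the NSM that corresponds to a given granular gain."""
    return 10.0 ** (-gain_db / 10.0) / 12.0

# NSM of the Leech lattice as quoted in the text
leech_gain = granular_gain_db(0.0658)
# NSM of the infinite-dimensional sphere, 1 / (2 * pi * e)
sphere_gain = granular_gain_db(1.0 / (2.0 * math.pi * math.e))
# NSM needed to reach the 1.36 dB gain quoted for the 256-state trellis
nsm_for_136 = nsm_from_gain_db(1.36)

print(f"Leech: {leech_gain:.2f} dB, sphere limit: {sphere_gain:.2f} dB, "
      f"NSM for 1.36 dB: {nsm_for_136:.4f}")
```

The same two helpers can be used to cross-check the gain columns of the code tables given later in the paper against their NSM columns.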
Despite numerous attempts to construct good quantization schemes, the gap between asymptotically achievable results and the best existing quantizers is still large. For example, for 32-state trellises and a bit rate of 3 bits/sample, this gap is equal to 1.10, 1.53, and 1.78 dB for the Gaussian distribution, the Laplacian distribution, and the generalized Gaussian distribution with $\alpha = 0.5$, respectively [13]. Since the best codes and coding algorithms for non-uniform distributions are not the same as for the uniform distribution, the following question arises: could it be that codes which are deliberately not good in the sense of granular gain are very good for a non-uniform distribution? The positive answer to this question is one of the results of this paper. Below we present a coding scheme which, with a 32-state trellis at 3 bits/sample, is not more than 0.32, 0.35, and 0.44 dB away from the Shannon limit for the three distributions mentioned above. Therefore, the achieved gain over existing trellis quantizers exceeds 1 dB at high rates for a wide class of probability distributions.

We have searched for good quantizers among lattice quantizers over linear codes. The motivation is that the constructions of some good lattices are closely related to the constructions of good block error-correcting codes (e.g., the Hamming code and Golay codes). One more argument in favor of such trellises appears in [14], [15], and [16], where it was shown that there exist asymptotically optimal lattices over $q$-ary linear codes as $q$ tends to infinity.

Although the NSM decreases (the granular gain increases) with the alphabet size $q$, it was found by simulations that the quantization performance behavior for non-uniform quantization is different: binary codes appear to be better than codes over larger fields.
Replacing the quaternary codes typically considered in the trellis-based quantization literature by binary codes is the first step towards improving the quantization performance. Another step is based on the important observation (see, e.g., [17], [18]) that entropy-coded uniform scalar quantization with properly chosen reconstruction values provides nearly the same coding efficiency as the optimum entropy-constrained scalar quantization (ECSQ). Moreover, by choosing the zero quantization cell larger than the others, we obtain a simple scalar quantizer with performance extremely close to the ECSQ for all rates. In [18] this quantizer is called the extended zero zone (EZZ) quantizer. Notice that EZZ quantization does not follow the minimum Euclidean distance criterion. Therefore, it would be reasonable not to use the Euclidean distance for vector quantization (or trellis quantization as a special case) either.

We begin in Section II by reviewing the random coding bounds [14], [15] on the NSM value for linear code based lattices. The asymptotic behavior of the NSM as a function of the code alphabet size is studied, and we show that code-based lattices are asymptotically optimal when the alphabet size tends to infinity. In Section III the code search results are presented. In Section IV we present entropy-constrained code-based quantization. By generalizing the EZZ approach to lattice quantization, we obtained near-optimum SNR values for all code rates for the classes of non-uniform probability distributions mentioned above. Concluding remarks are given in Section V.

II. CODE-BASED QUANTIZATION

An $n$-dimensional lattice can be defined by its generator matrix $G \in \mathbb{R}^{n \times n}$ as [3]

$$\Lambda(G) = \{\lambda \in \mathbb{R}^n \mid \exists\, \mathbf{z} \in \mathbb{Z}^n : \lambda = G\mathbf{z}\}. \tag{1}$$

Let $q$ be a prime number and let $C$ denote a $q$-ary linear $(n, k)$ code. Then a lattice over the code $C$ is defined as

$$\Lambda(C) = \{\lambda \in \mathbb{Z}^n \mid \exists\, \mathbf{c} \in C : \lambda \equiv \mathbf{c} \pmod q\}. \tag{2}$$

In other words, the lattice $\Lambda(C)$ consists of integer-valued sequences which are equal to codewords from $C$ modulo $q$. In the case of binary codes the least significant bits of the sequences $\lambda \in \Lambda(C)$ are codewords of $C$. The lattice defined by (2) is known as Construction A [3].

When an $n$-dimensional lattice is used as a codebook, the source sequence $\mathbf{x} \in \mathbb{R}^n$ is approximated by some lattice point $\lambda \in \Lambda(C)$. The whole set $\Lambda(C)$ is assumed to be ordered, and a number representing the optimal approximation point $\lambda$ is transmitted instead of $\mathbf{x}$. Therefore, we need some rule which assigns a number to each point of $\Lambda(C)$. To suggest such a rule we consider the following representation of $\Lambda(C)$:

$$\Lambda(C) = \bigcup_{m=1}^{q^k} \{q\mathbb{Z}^n + \mathbf{c}_m\}. \tag{3}$$

In other words, $\Lambda(C)$ is a union of $q^k$ cosets whose leaders are codewords of $C$. This representation allows a one-to-one mapping of all $\lambda \in \Lambda(C)$ onto the set of integer vectors $(m, \mathbf{b})$, where $m$ is a codeword number and $\mathbf{b} = (b_1, \ldots, b_n) \in \mathbb{Z}^n$ defines the point number in $q\mathbb{Z}^n$. The lattice point $\lambda$ can be reconstructed from $(m, \mathbf{b})$ by the formula

$$\lambda = \mathbf{c}_m + q\mathbf{b}. \tag{4}$$

The lattice $\Lambda(C)$ or its scaled version $a\Lambda(C)$, $a > 0$, can be used as a codebook for vector quantization. Below we always assume $a = 1$, since instead of quantizing the random vector $\mathbf{x}$ with $a\Lambda(C)$ we can quantize $\mathbf{x}/a$ with $\Lambda(C)$. We also assume that

• The stationary memoryless source sequences are drawn from $X^n = \{\mathbf{x} \mid \mathbf{x} = (x_1, \ldots, x_n)\} \subseteq \mathbb{R}^n$ with a known probability density function (pdf) $f(\mathbf{x}) = \prod_{i=1}^{n} f(x_i)$.
• The squared Euclidean distance

$$d(\mathbf{x}, \mathbf{y}) = \frac{1}{n}\|\mathbf{x} - \mathbf{y}\|^2 = \frac{1}{n}\sum_{i=1}^{n}(x_i - y_i)^2$$

is used as a distortion measure.

The quantization procedure can be described as a mapping $\mathbf{y} = Q(\mathbf{x})$, where $\mathbf{x} \in X^n$, $\mathbf{y} \in \Lambda(C)$.
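The coset representation (3) and the reconstruction formula (4) can be illustrated with a toy example. The sketch below is not taken from the paper: the helper names and the binary (3, 2) single-parity-check code are hypothetical choices, and the codeword number `m` is 0-based here, whereas (3) numbers the codewords from 1.

```python
from itertools import product

def codewords(generator_rows, q):
    """Enumerate all codewords of the q-ary linear code spanned by generator_rows."""
    k = len(generator_rows)
    n = len(generator_rows[0])
    words = []
    for msg in product(range(q), repeat=k):
        word = tuple(
            sum(u * row[j] for u, row in zip(msg, generator_rows)) % q
            for j in range(n)
        )
        words.append(word)
    return words

def split_lattice_point(lam, q, code):
    """Return (m, b) with lam = c_m + q*b, or None if lam is not in the lattice."""
    c = tuple(x % q for x in lam)           # residue of each coordinate modulo q
    if c not in code:
        return None                         # residues do not form a codeword
    b = tuple((x - r) // q for x, r in zip(lam, c))
    return code.index(c), b

def rebuild_lattice_point(m, b, q, code):
    """Formula (4): lambda = c_m + q*b."""
    return tuple(c + q * bi for c, bi in zip(code[m], b))

# Hypothetical toy code: binary (3, 2) single-parity-check code
Q = 2
CODE = codewords([(1, 0, 1), (0, 1, 1)], Q)

point = (3, -2, 5)                          # residues mod 2 are (1, 0, 1)
m, b = split_lattice_point(point, Q, CODE)
restored = rebuild_lattice_point(m, b, Q, CODE)
print(m, b, restored)
```

A point such as (1, 0, 0), whose residues do not form a codeword, is rejected by `split_lattice_point`, which is exactly the membership condition in (2).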
The mean square error (MSE) of the quantizer

$$D = \frac{1}{n}\mathrm{E}\,\|\mathbf{x} - Q(\mathbf{x})\|^2 = \frac{1}{n}\sum_i \int_{\mathcal{R}_i} \|\mathbf{x} - \lambda_i\|^2 f(\mathbf{x})\, d\mathbf{x} \tag{5}$$

is minimized by the mapping $Q(\mathbf{x}) = \lambda_i$ if $\mathbf{x} \in \mathcal{R}_i$, where $\mathcal{R}_i$ are the Voronoi regions [3] of the lattice points, i.e.,

$$\mathcal{R}_i \triangleq \mathcal{R}(\lambda_i) = \{\mathbf{x} \in \mathbb{R}^n : \|\mathbf{x} - \lambda_i\|^2 \le \|\mathbf{x} - \lambda_j\|^2 \ \ \forall j \ne i\}.$$

All Voronoi regions of lattice points are congruent to each other. We denote the Voronoi region which contains the origin by $\mathcal{R}$. We use the conventional notations

$$V(\mathcal{R}) = \int_{\mathcal{R}} d\mathbf{x}, \qquad G_n(\mathcal{R}) = \frac{\frac{1}{n}\int_{\mathcal{R}} \|\mathbf{x}\|^2\, d\mathbf{x}}{V(\mathcal{R})^{1+2/n}} \tag{6}$$

for the volume and the normalized second moment (NSM) of $\mathcal{R}$, respectively.

By entropy coding, the rate $R$ of the lattice quantizer can be made arbitrarily close to the entropy of the lattice points,

$$R = -\frac{1}{n}\sum_i p_i \log p_i,$$

where the probabilities $p_i$ of the lattice points are equal to

$$p_i \triangleq p(\lambda_i) = \int_{\mathcal{R}_i} f(\mathbf{x})\, d\mathbf{x}, \quad i = 1, 2, \ldots.$$

The rate-distortion function $R(D)$ of the lattice quantizer can be easily estimated under the assumption that the quantization errors are so small that the pdf $f(\mathbf{x})$ can be approximated by a constant value inside each region $\mathcal{R}(\lambda_i)$. In this case, the rate can be expressed via the differential entropy of the source

$$h(X) = -\int f(x) \log f(x)\, dx$$

and the volume $V(\mathcal{R})$ of the Voronoi region $\mathcal{R}$,

$$R(D) \le h(X) - \frac{1}{n}\log V(\mathcal{R}). \tag{7}$$

For the average distortion value $D$, from (5) and (6) we have

$$D = G_n(\mathcal{R})\, V(\mathcal{R})^{2/n}. \tag{8}$$

From the last two equations and the Shannon lower bound,

$$H(D) \ge h(X) - \frac{1}{2}\log(2\pi e D),$$

we obtain the inequalities

$$H(D) \le R(D) \le H(D) + \frac{1}{2}\log\bigl(2\pi e\, G_n(\mathcal{R})\bigr). \tag{9}$$

Therefore, to prove that optimal quantization is asymptotically achievable by lattice quantization (i.e., $R(D) \to H(D)$ if $n \to \infty$) we need to prove that $G_n(\mathcal{R}) \to 1/(2\pi e)$ when $n \to \infty$.
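The step from (7) and (8) to the upper bound in (9) can be written out explicitly. The derivation below is a sketch that uses only the quantities already defined, with $\mathcal{R}$ denoting the Voronoi region of the origin; the lower bound $H(D) \le R(D)$ holds by the definition of $H(D)$.

```latex
\begin{align*}
V(\mathcal{R})^{2/n} &= \frac{D}{G_n(\mathcal{R})}
  && \text{from (8)} \\
\frac{1}{n}\log V(\mathcal{R}) &= \frac{1}{2}\log\frac{D}{G_n(\mathcal{R})}
  && \text{taking } \tfrac{1}{2}\log \text{ of both sides} \\
R(D) &\le h(X) - \frac{1}{2}\log\frac{D}{G_n(\mathcal{R})}
  && \text{substituting into (7)} \\
 &= \Bigl[h(X) - \tfrac{1}{2}\log(2\pi e D)\Bigr]
    + \tfrac{1}{2}\log\bigl(2\pi e\, G_n(\mathcal{R})\bigr)
  && \text{adding and subtracting } \tfrac{1}{2}\log(2\pi e) \\
 &\le H(D) + \tfrac{1}{2}\log\bigl(2\pi e\, G_n(\mathcal{R})\bigr)
  && \text{Shannon lower bound}
\end{align*}
```

The excess rate $\tfrac{1}{2}\log(2\pi e\, G_n(\mathcal{R}))$ vanishes exactly when $G_n(\mathcal{R}) = 1/(2\pi e)$, which is why the NSM is the figure of merit in this regime.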
TABLE I. Random coding upper bounds on NSM for optimum code rate and for code rate $R_C = 1/2$

| $q$ | $R_0$ | $G_\infty(q)$, $R_C = R_0$ | $G_\infty(q)$, $R_C = 1/2$ |
|---|---|---|---|
| 2 | 0.4144 | 0.0598 | 0.0631 |
| 3 | 0.4633 | 0.0587 | 0.0592 |
| 5 | 0.5000 | 0.0586 | 0.0586 |
| 7 | 0.5000 | 0.0585 | 0.0585 |
| ∞ | 0.5000 | 0.0585 | 0.0585 |

Consider a lattice $\Lambda(C)$ over a $q$-ary linear $(n, k)$ code, and denote the code rate by $R_C = k/n$. It can be shown (see [14]) that the overall volume of the $q^k$ Voronoi regions of the codewords of $C$ coincides with the volume of the $n$-dimensional cube with edge length equal to $q$. It means that $V(\mathcal{R}) = q^n/q^k = q^{n-k}$, and from (8) it follows that

$$D = G_n(\mathcal{R})\, q^{2(1 - R_C)}. \tag{10}$$

Formally we cannot use equation (10) for finding the NSM of a lattice over a code by estimating the quantization error for a random variable uniformly distributed over the cube. The reason is that the $n$-dimensional cube with edge length $q$ does not contain precisely $q^k$ complete Voronoi regions. Some regions near the boundaries of the cube protrude from the cube. To solve this problem the "cyclic" metric

$$\rho(x, y) = \min\{(x - y)^2, (|x - y| - q)^2\} \tag{11}$$

was introduced in [14]. It was shown that the NSM of the lattice satisfies (10) if the lattice points of the cube are used as codebook entries and the distortion $D$ is computed with respect to the metric $\rho(\cdot, \cdot)$. Below we will use (10) to estimate the NSM of convolutional codes. In [14] this equation was used to obtain the random coding bound on the achievable NSM value. The reformulated result of [14] (see comments in the Appendix) is presented here without a proof.

Theorem 1: For any prime number $q$ and any $\varepsilon > 0$, there exist a sequence $C_1, C_2, C_3, \ldots$ of $q$-ary linear codes, where $C_n$ is a code of length $n$, and a number $N$ such that for all $n \ge N$

$$\bigl| G_n(q) - d_0\, q^{2(R_0(q) - 1)} \bigr| < \varepsilon \tag{12}$$

where $G_n(q)$ is the normalized second moment of the lattice based on the code $C_n$, $d_0$ is a solution of the equation

$$d = 2\int_0^{1/2} \left.\frac{\frac{\partial}{\partial s} g(s, x)}{g(s, x)}\right|_{s = -\frac{1}{2d}} dx \tag{13}$$

and $R_0(q)$ is defined as

$$R_0(q) = \frac{1}{\ln q}\left(-\frac{1}{2} - 2\int_0^{1/2} \ln g\bigl(-1/(2d_0), x\bigr)\, dx\right) \tag{14}$$

where

$$g(s, x) = \frac{1}{q}\sum_{k=0}^{q-1} e^{s\rho(x, k)} \tag{15}$$

and $\rho(\cdot, \cdot)$ is defined by (11).

The values $G_\infty(q) = \lim_{n \to \infty} G_n(q)$ for $q = 2, 3, 5, 7$ are given in Table I. The table also shows the code rate value $R_C = R_0(q)$ which minimizes the random coding estimate of the NSM value $G_\infty(q)$, and the NSM value achievable when we consider codes with the nonoptimal rate $R_C = 1/2$. The presented estimates give the achievable NSM values if long enough codes are used for quantization. In particular, the search results presented in Section III show that the lattices obtained from convolutional codes with a number of encoder states above 512 are roughly 0.2 dB away from the asymptotically achievable NSM value for a given alphabet size $q$. On the other hand, rate $R_C = 1/2$ binary codes with 64 states have an even better NSM than the NSM computed by averaging over randomly chosen infinitely long rate $R_C = 1/2$ codes.

An analysis of the asymptotic behavior of $G_\infty(q)$ and $R_0(q)$ as $q \to \infty$ leads to the following result:

Corollary 1:

$$\lim_{q \to \infty} G_\infty(q) = \frac{1}{2\pi e} \tag{16}$$

$$\lim_{q \to \infty} R_0(q) = \frac{1}{2}. \tag{17}$$

The proof is given in the Appendix.

III. NSM-OPTIMUM CONVOLUTIONAL CODES

It follows from Corollary 1 that codes with rate $R_C = 1/2$ provide an asymptotically optimum NSM value for large code alphabet size $q$. It follows from the data in Table I that codes with rate $R_C = 1/2$ give near-optimum NSM except for $q = 2$.
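The quantities in Theorem 1 can be evaluated numerically for small $q$. The following is a rough sketch rather than the authors' procedure: it assumes that $s = -1/(2d_0)$ is used in both (13) and (14), integrates with a simple midpoint rule, and finds $d_0$ by bisection on an assumed bracket; all function names are hypothetical.

```python
import math

def rho(x, k, q):
    """Cyclic metric (11) between a real x and an integer symbol k."""
    d = abs(x - k)
    return min(d * d, (d - q) ** 2)

def rhs_13(d, q, steps=2000):
    """Right-hand side of (13): 2 * int_0^{1/2} (dg/ds)/g dx at s = -1/(2d)."""
    s = -1.0 / (2.0 * d)
    h = 0.5 / steps
    total = 0.0
    for i in range(steps):
        x = (i + 0.5) * h                       # midpoint rule on [0, 1/2]
        r = [rho(x, k, q) for k in range(q)]
        w = [math.exp(s * rk) for rk in r]
        total += sum(rk * wk for rk, wk in zip(r, w)) / sum(w)
    return 2.0 * h * total

def solve_d0(q, iters=60):
    """Bisection for a fixed point d = rhs_13(d); the bracket is an assumption."""
    lo, hi = 1e-2, float(q * q)
    for _ in range(iters):
        mid = 0.5 * (lo + hi)
        if rhs_13(mid, q) > mid:
            lo = mid
        else:
            hi = mid
    return 0.5 * (lo + hi)

def rate_r0(d0, q, steps=2000):
    """R_0(q) from (14), with g(s, x) from (15) evaluated at s = -1/(2 d0)."""
    s = -1.0 / (2.0 * d0)
    h = 0.5 / steps
    total = 0.0
    for i in range(steps):
        x = (i + 0.5) * h
        g = sum(math.exp(s * rho(x, k, q)) for k in range(q)) / q
        total += math.log(g)
    return (-0.5 - 2.0 * h * total) / math.log(q)

q = 2
d0 = solve_d0(q)
r0 = rate_r0(d0, q)
g_inf = d0 * q ** (2.0 * (r0 - 1.0))            # limiting value in (12)
print(f"q={q}: d0={d0:.4f}, R0={r0:.4f}, G_inf={g_inf:.4f}")
```

For $q = 2$ the result can be compared with the first row of the table of random coding bounds. For large $q$ the fixed-point equation becomes nearly degenerate, so a plain bisection like this one should not be trusted there without further care.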
Below we consider only rate $R_C = 1/2$ codes since they are most convenient for practical applications. Moreover, we will search for good linear codes for quantization among truncated convolutional codes, since for such codes the quantization (search for a codeword closest to the source sequence) can be easily performed using the Viterbi algorithm.

A $q$-ary, rate $R_C = 1/2$ convolutional encoder over $GF(q)$ with memory $m$ can be described by the polynomial encoding matrix

$$G(D) = (g_1(D)\ \ g_2(D))$$

where $g_i(D) = g_{i0} + g_{i1}D + \cdots + g_{im}D^m$, $i = 1, 2$, are $q$-ary polynomials such that $\max_i\{\deg g_i(D)\} = m$. In polynomial notation the infinite length input information sequence $u(D) = u_0 + u_1 D + \cdots + u_i D^i + \cdots$ and the corresponding infinite length codeword $v(D) = v_0 + v_1 D + \cdots + v_i D^i + \cdots$, where $v_i = (v_{1i}, v_{2i})$, satisfy the matrix equation

$$v(D) = u(D)\, G(D). \tag{18}$$

The transformation of $u(D)$ to $v(D)$ is invertible if at least one of the two polynomials is delay-free ($g_{10} = 1$ or $g_{20} = 1$). The convolutional encoder (18) is non-catastrophic if $g_1(D)$ and $g_2(D)$ are relatively prime [19]. Since for any convolutional code both catastrophic and non-catastrophic encoders exist, we can consider only non-catastrophic encoders without loss of optimality.

For any polynomial $a(D) = a_0 + a_1 D + \cdots + a_i D^i + \cdots$ let $\lceil a(D) \rceil_n = a_0 + a_1 D + \cdots + a_n D^n$ denote the finite degree polynomial obtained from $a(D)$ by truncating it to degree $n$. Furthermore, we consider $q$-ary linear $(n, k = n/2)$ codes whose codewords are the coefficients of the truncated vector polynomials $\lceil v(D) \rceil_{n/2}$ obtained from the degree $k = n/2$ information polynomial $u(D)$ by encoding according to (18). The trellis representation of the $(n, n/2)$ binary linear code obtained from the $(1 + D + D^2, 1 + D^2)$ convolutional encoder is shown in Fig. 1.
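The encoding rule (18) amounts to two polynomial multiplications over $GF(q)$ followed by truncation. The sketch below illustrates this for the binary $(1 + D + D^2, 1 + D^2)$ encoder mentioned above; the function names are hypothetical, and keeping exactly as many output pairs as there are input symbols is an assumed truncation convention.

```python
def poly_mul_mod(a, b, q):
    """Multiply two polynomials over GF(q), q prime.

    Polynomials are coefficient lists with the lowest degree first.
    """
    out = [0] * (len(a) + len(b) - 1)
    for i, ai in enumerate(a):
        for j, bj in enumerate(b):
            out[i + j] = (out[i + j] + ai * bj) % q
    return out

def encode_truncated(u, g1, g2, q):
    """Pairs (v1_t, v2_t) of v(D) = u(D) G(D), truncated to len(u) pairs."""
    v1 = poly_mul_mod(u, g1, q)[:len(u)]
    v2 = poly_mul_mod(u, g2, q)[:len(u)]
    return list(zip(v1, v2))

G1 = [1, 1, 1]          # g1(D) = 1 + D + D^2
G2 = [1, 0, 1]          # g2(D) = 1 + D^2
u = [1, 0, 1, 1]        # u(D) = 1 + D^2 + D^3, an arbitrary example input

pairs = encode_truncated(u, G1, G2, 2)
print(pairs)
```

Each output pair corresponds to the label of one trellis branch, so the list `pairs` traces a single path through the trellis of the truncated code.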
For $q$-ary codes, $q > 2$, similar trellises can be constructed but with $q$ branches leaving each node and merging in each node of the trellis diagram.

Fig. 1. Trellis representation of the $(n, n/2)$ binary linear code obtained from the $(1 + D^2, 1 + D + D^2)$ convolutional encoder.

TABLE II. Optimum binary convolutional codes and their NSMs. The generators are given in octal notation.

| Number of states | Generators | $G_n$ | En. gain, dB |
|---|---|---|---|
| 2 | [3;1] | 0.0733 | 0.5571 |
| 4 | [7;5] | 0.0665 | 0.9800 |
| 8 | [17;13] | 0.0652 | 1.0657 |
| 16 | [31;23] | 0.0643 | 1.1261 |
| 32 | [61;57] | 0.0634 | 1.1873 |
| 64 | [165;127] | 0.0628 | 1.2286 |
| 128 | [357;251] | 0.0623 | 1.2633 |
| 256 | [625;467] | 0.0620 | 1.2843 |
| 512 | [1207;1171] | 0.0618 | 1.2983 |
| ∞ | - | 0.0598 | 1.4389 |

To find the NSM for a $(g_1(D), g_2(D))$ convolutional encoder we first estimate the quantization error for source sequences uniformly distributed in the hypercube $[0, q]^n$, when the "cyclic metric" $\rho(\cdot, \cdot)$ of (11) is used as the distortion measure (see [14]). Then this error is recalculated into the NSM value using (10).

To speed up the exhaustive search for the optimum encoders over the set of all memory $m$ encoders with generators $(g_1(D), g_2(D))$ we used the following rules to reduce the search space without loss of optimality:

• Only delay-free polynomials are considered, $g_{10} = g_{20} = 1$.
• Since the codes with the generators $(g_1(D), g_2(D))$ and $(g_2(D), g_1(D))$ are equivalent, we consider only encoders with $\deg(g_1(D)) \ge \deg(g_2(D))$. If $\deg(g_1(D)) = \deg(g_2(D))$ then we require $|g_1(D)| \ge |g_2(D)|$, where we denote $|a(D)| = \sum_{i=0}^{\deg(a(D))} a_i q^i$. In other words, we interpret the polynomial coefficients as digits of a number written in $q$-ary form.
  Thereby we assign an integer number (value) to each polynomial, and only pairs of generators sorted in descending order of the corresponding values are used as candidates.
• Only non-catastrophic encoders are considered, i.e., $\gcd(g_1(D), g_2(D)) = 1$.

Our search results are presented in Tables II, III, and IV for $q = 2$, 3, and 5, respectively. It is easy to see that the NSM values of the optimum codes decrease when $q$ grows, but the improvement provided by ternary or quinary codes over binary codes is small and is not worth the complication of the encoding. Notice also that the determined NSM values correspond to less shaping gain than the 1.36 dB reported in [8] for the 256-state quaternary codes. To achieve the same shaping gain we need NSM = 0.0609, whereas the best found 625-state 5-ary code provides NSM = 0.0613, which is approximately 0.02 dB worse than the value for the Marcellin-Fischer code. Notice, however, that the Marcellin-Fischer quantizer [8] is not a lattice quantizer. Therefore, the NSM values reported here are the best among known multidimensional lattices.

TABLE III. Optimum ternary convolutional codes and their NSMs.

| Number of states | Generators | $G_n$ | En. gain, dB |
|---|---|---|---|
| 3 | [12;11] | 0.0720 | 0.6349 |
| 9 | [121;111] | 0.0663 | 0.9931 |
| 27 | [1211;1112] | 0.0641 | 1.1396 |
| 81 | [11222;10121] | 0.0626 | 1.2424 |
| 243 | [110221;101211] | 0.0617 | 1.3053 |
| 729 | [1000112;112122] | 0.0614 | 1.3265 |
| ∞ | - | 0.0586 | 1.5231 |

TABLE IV. Optimum quinary convolutional codes and their NSMs.

| Number of states | Generators | $G_n$ | En. gain, dB |
|---|---|---|---|
| 5 | [14;13] | 0.0716 | 0.6591 |
| 25 | [131;102] | 0.0642 | 1.1328 |
| 125 | [1323;1031] | 0.0622 | 1.2703 |
| 625 | [10314;10133] | 0.0613 | 1.3336 |
| ∞ | - | 0.0585 | 1.5229 |

IV. ARITHMETIC CODED LATTICE QUANTIZATION

Let us consider the lattice over a linear $(n, k)$ code $C$ (not necessarily a truncated convolutional code).
The entropy-coded quantization of the input sequence $\mathbf{x}$ can be performed in two steps:

• Approximation, i.e., finding the vector of indices $\mathbf{b}$ and the codeword number $m \in \{0, \ldots, q^k - 1\}$ that minimize the distortion value $d(\mathbf{x}, \lambda) = n^{-1}\|\mathbf{x} - \lambda\|^2 = n^{-1}\|\mathbf{x} - (\mathbf{c}_m + q\mathbf{b})\|^2$ (see (4)).
• Lossless entropy coding of the pair $(m, \mathbf{b})$.

First, we consider the approximation step. From (4) the following reformulation of the encoding problem follows:

$$\begin{aligned}
\min_{\lambda}\|\mathbf{x} - \lambda\|^2 &= \min_m \min_{\mathbf{b}} \|\mathbf{x} - (\mathbf{c}_m + q\mathbf{b})\|^2 \\
&= \min_m \min_{\mathbf{b}} \left\{\sum_{t=1}^{n}(x_t - c_{mt} - q b_t)^2\right\} \\
&= \min_m \left\{\sum_{t=1}^{n}\min_{b_t}(x_t - c_{mt} - q b_t)^2\right\} \\
&= \min_m \left\{\sum_{t=1}^{n}\mu(x_t, c_{mt})\right\} = \min_m\{\mu(\mathbf{x}, \mathbf{c}_m)\}.
\end{aligned} \tag{19}$$

Here we introduced the additive metric

$$\mu(\mathbf{x}, \mathbf{c}) = \sum_{t=1}^{n}\mu(x_t, c_t), \qquad \mu(x, c) = \min_b (x - c - qb)^2 = (x - c - q b_0)^2 \tag{20}$$

where the optimum index value $b_0$ can be determined by "scalar quantization" with step $q$ applied to $(x - c)$,

$$b_0 = \left\langle \frac{x - c}{q} \right\rangle. \tag{21}$$

By $\langle \cdot \rangle$ we denote rounding to the nearest integer.

It follows from (19), (20), and (21) that lattice quantization can be split into two steps. First, for all $t = 1, \ldots, n$ and $c = 0, \ldots, q - 1$, the values $x_t - c$ should be scalar quantized, and the $n \times q$ indices $b_{tc}$ and the corresponding metrics $\mu_{tc} = (x_t - c - q b_{tc})^2$ are to be computed. In the second step, the codeword $\mathbf{c}_m$ yielding the minimum of the metrics $\mu(\mathbf{x}, \mathbf{c}_m)$ among all codewords $\mathbf{c}_m \in C$, $m \in \{0, \ldots, q^k - 1\}$, should be found. The codeword number $m$ and the vector of indices $\mathbf{b} = (b_{1 c_{m1}}, \ldots, b_{n c_{mn}})$ describe the lattice point closest to $\mathbf{x}$. The formal description of the algorithm is presented in Fig. 2.

The problem of searching for the optimum entry over the infinite cardinality multidimensional lattice codebook is now divided into two steps: $qn$ scalar quantization operations followed by searching for the best codeword among the $q^k$ codewords of the $q$-ary linear $(n, k)$ code.
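The two-step approximation described by (19) to (21) can be sketched as follows. This is an illustration under simplifying assumptions: the codeword search is a brute-force scan over a small, explicitly listed code instead of a Viterbi search, ties in the rounding are resolved upwards, and the function names and the toy code are hypothetical.

```python
import math

def nearest_int(v):
    """Round to the nearest integer; exact ties are rounded up."""
    return math.floor(v + 0.5)

def approximate(x, code, q):
    """Return (m, b, lam): codeword number, index vector and lattice point."""
    n = len(x)
    # Step 1: n*q scalar quantizations with step q, as in (21),
    # and the per-symbol metrics from (20).
    idx = [[nearest_int((x[t] - c) / q) for c in range(q)] for t in range(n)]
    met = [[(x[t] - c - q * idx[t][c]) ** 2 for c in range(q)] for t in range(n)]
    # Step 2: exhaustive search for the codeword with the smallest additive metric.
    best_m = min(
        range(len(code)),
        key=lambda m: sum(met[t][code[m][t]] for t in range(n)),
    )
    cw = code[best_m]
    b = [idx[t][cw[t]] for t in range(n)]
    lam = [cw[t] + q * b[t] for t in range(n)]      # formula (4)
    return best_m, b, lam

# Hypothetical toy code: binary (3, 2) single-parity-check code
CODE = [(0, 0, 0), (0, 1, 1), (1, 0, 1), (1, 1, 0)]
x = (0.9, 2.2, -1.1)

m, b, lam = approximate(x, CODE, 2)
print(m, b, lam)
```

For truncated convolutional codes the brute-force scan in step 2 would be replaced by a Viterbi search over the trellis, using the same table of per-symbol metrics as branch metrics.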
The codeword search step is similar to soft decision maximum-likelihood decoding of error correcting linear block codes, and many such decoding algorithms are known; in particular, for truncated convolutional codes the Viterbi algorithm is a reasonable choice.

Fig. 2. Approximation step of lattice quantization.

Input: Source sequence $\mathbf{x} = (x_1, x_2, \ldots, x_n)$.
Output: Number $m$ of the codeword $\mathbf{c}_m \in C$, index sequence $\mathbf{b} = (b_1, b_2, \ldots, b_n)$.
Metrics and indices computation:
    for $t = 1$ to $n$ do
        for $c = 0$ to $q - 1$ do
            $b_t(c) = \langle (x_t - c)/q \rangle$
            $\mu_t(c) = (x_t - c - q\, b_t(c))^2$
        end
    end
Codeword search: Find the codeword $\mathbf{c}_m = (c_{m1}, c_{m2}, \ldots, c_{mn})$ that minimizes the metric $\mu(\mathbf{x}, \mathbf{c}_m) = \sum_{t=1}^{n} \mu_t(c_{mt})$.
Result: The algorithm outputs the codeword number $m$ and the index vector $\mathbf{b} = (b_1(c_{m1}), b_2(c_{m2}), \ldots, b_n(c_{mn}))$.

Now let us consider an algorithm for variable-length lossless coding of the pair $(m, \mathbf{b})$ obtained in the approximation step. For a rate $R_C = 1/2$ truncated convolutional $(n, k = n/2)$ code, the codeword is determined by the length $k$ information sequence $\mathbf{u} = (u_1, \ldots, u_k)$, and the codeword can be represented as a sequence of $k$ tuples of length 2, i.e., $\mathbf{c} = (\mathbf{c}_1, \ldots, \mathbf{c}_k)$, $\mathbf{c}_t = (c_t^1, c_t^2)$. We write the corresponding vector of indices in tuples of length 2 too, i.e., $\mathbf{b} = (\mathbf{b}_1, \ldots, \mathbf{b}_k)$, $\mathbf{b}_t = (b_t^1, b_t^2)$. Denote by $\sigma_t = (u_{t-1}, \ldots, u_{t-m})$ the encoder state at time $t$, where we assume $u_t = 0$ for $t \le 0$.

To describe arithmetic coding (for details see [20]) it is enough to define a probability distribution on the pairs $(\mathbf{u}, \mathbf{b})$. Thus we introduce the following two probability distributions,

$$\{\vartheta(u_t \mid \sigma_t) = \vartheta(u \mid \sigma),\ \sigma \in GF(q)^m\}$$

$$\{\varphi(b_t^i \mid c_t^i) = \varphi(b \mid c),\ b \in \mathbb{Z},\ c \in GF(q),\ i = 1, 2\}$$

which we estimate for a given source pdf by training using a long simulated data sequence.
The required probability distribution $p(\mathbf{u}, \mathbf{b})$ is defined as

$$p(\mathbf{u}, \mathbf{b}) = \prod_{t=1}^{k} \vartheta(u_t \mid \sigma_t)\, \varphi(b_t^1 \mid c_t^1)\, \varphi(b_t^2 \mid c_t^2)$$

where the code symbols $c_t^i$, $i = 1, 2$, are uniquely defined by $u_t$ and $\sigma_t$.

Notice that similar entropy coding for trellis quantization was described in [21], [8], and [13]. The difference is that in these papers the approximation alphabet is finite, whereas in our algorithm the approximation alphabet is not necessarily finite, since we allow any lattice point to be an approximation vector.

Simulation results of the described arithmetic-coded quantization will be presented in the next section. Below we present a modification which is efficient for low quantization rates for non-uniform probability distributions.

As mentioned in the Introduction, the codes which are good in terms of granular gain (NSM value) are not necessarily optimal for non-uniform distributions. The reason is that spheres are not necessarily good Voronoi regions in this case. Moreover, Voronoi regions are not optimal quantization cells in this case. To understand this better, we consider the properties of optimal entropy-constrained scalar quantization.

First of all, notice that for a wide class of probability distributions the simulation results for optimal non-uniform scalar quantization are presented in [17]. It is easy to verify that, for example, for GGD random variables with parameter $\alpha \le 0.5$, for all bit rates the best trellis quantizers lose with respect to optimum scalar quantizers [13]. The analysis of optimum and near-optimum scalar quantizers from [17], [18] shows that:

• For GGD random variables the optimum ECSQ quantizer has larger quantization cells near zero and smaller cells for large values.
• The quantization thresholds are not equal to half of the sum of the neighboring approximation values.
  Therefore, the minimum MSE (or minimum Euclidean distance) quantizers are not optimum ECSQ quantizers.
• The quantizer which has all quantization cells of equal size except the one symmetrically located around zero (the so-called "extended zero zone" (EZZ) quantizer) has performance very close to that of the optimum ECSQ quantizer.

It follows from these observations that good trellis quantizers should be obtained as a generalization of the EZZ quantizer to the multidimensional case. (Another method of extending the zero zone for trellis quantization, the so-called New Trellis Source Code (NTSC), has been presented in [13].)

To describe the multidimensional EZZ quantizer we need to generalize (20) and (19) to the case when the input data from some interval $[-\Delta, \Delta]$, $\Delta > 0$, are artificially interpreted as zeroes. Therefore we replace each input r.v. $x$ by another r.v. defined as

$$\xi = \begin{cases} x + \Delta, & x < -\Delta \\ 0, & -\Delta \le x \le \Delta \\ x - \Delta, & x > \Delta. \end{cases} \tag{22}$$

Then we apply (20) and (19) to the r.v. $\xi$. The generalized version of the algorithm describing the approximation step of lattice quantization is shown in Fig. 3.

Fig. 3. Approximation with an extended zero zone.

Input: Source sequence $\mathbf{x} = (x_1, x_2, \ldots, x_n)$.
Output: Number $m$ of the codeword $\mathbf{c}_m \in C$, index sequence $\mathbf{b} = (b_1, b_2, \ldots, b_n)$.
Metrics and indices computation:
    for $t = 1$ to $n$ do
        $\xi = 0$;
        if $x_t < -\Delta$ then $\xi = x_t + \Delta$ end
        if $x_t > \Delta$ then $\xi = x_t - \Delta$ end
        for $c = 0$ to $q - 1$ do
            $b_t(c) = \langle (\xi - c)/q \rangle$
            $\mu_t(c) = (\xi - c - q\, b_t(c))^2$
        end
    end
Codeword search: Find the codeword $\mathbf{c}_m = (c_{m1}, c_{m2}, \ldots, c_{mn})$ that minimizes the metric $\mu(\mathbf{x}, \mathbf{c}_m) = \sum_{t=1}^{n} \mu_t(c_{mt})$.
Result: The algorithm outputs the codeword number $m$ and the index vector $\mathbf{b} = (b_1(c_{m1}), b_2(c_{m2}), \ldots, b_n(c_{mn}))$.

The parameter $\Delta$ is different for different pdfs and quantization rates.
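The zero-zone mapping (22) and the metric computation of Fig. 3 can be sketched as below. The function names are hypothetical and the rounding convention (ties rounded up) is an assumption; the codeword search that follows the metric computation is the same as in the lattice case and is omitted here.

```python
import math

def extend_zero_zone(x, delta):
    """Mapping (22): inputs in [-delta, delta] are treated as zero,
    all other inputs are shifted towards zero by delta."""
    if x < -delta:
        return x + delta
    if x > delta:
        return x - delta
    return 0.0

def ezz_metrics(x, q, delta):
    """Indices b_t(c) and metrics mu_t(c) of Fig. 3 for every t and c."""
    indices, metrics = [], []
    for xt in x:
        xi = extend_zero_zone(xt, delta)
        row_b = [math.floor((xi - c) / q + 0.5) for c in range(q)]   # nearest integer
        row_mu = [(xi - c - q * bc) ** 2 for c, bc in zip(range(q), row_b)]
        indices.append(row_b)
        metrics.append(row_mu)
    return indices, metrics

# Example with a hypothetical zero-zone half-width delta = 0.25
idx, met = ezz_metrics([0.1, 1.0, -1.0], q=2, delta=0.25)
print(idx)
print(met)
```

With `delta = 0` the function reduces to the metric computation of the plain lattice quantizer, which makes it easy to compare the two variants on the same data.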
For each particular pdf and rate we minimize the average distortion value by selecting the best value of $\Delta$ from the set $\{2^{-s},\ s = 0, 1, 2, 3\}$. Simulation results will be presented in the next section.

Now we consider the reconstruction of the approximation vector $\mathbf{y} = (y_1, \ldots, y_n)$ by the decoder from the received pair $(m, \mathbf{b} = (b_1, \ldots, b_n))$. First, we introduce an additional integer parameter $L$ which defines the number of approximation values which are estimated using a long training sequence (in our simulations we used $L = 10$). For the training source sequence $\mathbf{x} = (x_1, \ldots, x_N)$ we performed the lattice quantization described above and found the optimum codeword $\mathbf{c} = (c_1, \ldots, c_N)$ and the corresponding sequence of indices $\mathbf{b} = (b_1, \ldots, b_N)$. Then for all $b \in \{-L, \ldots, -1, 1, \ldots, L\}$ and $c \in \{0, \ldots, q - 1\}$ we computed "refined" approximation values

$$\beta_{cb} = \begin{cases} 0, & b = 0,\ c = 0 \\[4pt] \dfrac{\sum_{i \in J_{cb}} x_i}{|J_{cb}|} + \Delta, & b \in \{1, \ldots, L\},\ c \in \{0, \ldots, q - 1\}\ \text{or}\ b = 0,\ c \in \{1, \ldots, q - 1\} \\[8pt] \dfrac{\sum_{i \in J_{cb}} x_i}{|J_{cb}|} - \Delta, & b \in \{-L, \ldots, -1\},\ c \in \{0, \ldots, q - 1\} \end{cases}$$

where $J_{cb} = \{t \mid c_t = c,\ b_t = b\}$. The set of values $\beta_{cb}$ is assumed to be known to the decoder.

When the pair $(m, \mathbf{b} = (b_1, \ldots, b_n))$ is received, the decoder first finds the codeword $\mathbf{c}_m = (c_{m1}, \ldots, c_{mn})$ and then reconstructs the approximation vector $\mathbf{y} = (y_1, \ldots, y_n)$ as

$$y_t = \begin{cases} \beta_{c_{mt} b_t}, & b_t \in \{-L, \ldots, -1, 1, \ldots, L\} \\ c_{mt} + q b_t, & b_t \notin \{-L, \ldots, -1, 1, \ldots, L\}. \end{cases}$$

V. SIMULATION RESULTS

This section contains the results of the arithmetic coded lattice quantization for different types of sources. We consider the parametric class of generalized Gaussian distributions.
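For experiments with this source family it helps to be able to evaluate and sample the generalized Gaussian density $p(x) = \frac{\alpha\eta}{2\Gamma(1/\alpha)}\exp\{-(\eta|x|)^\alpha\}$ with $\eta = \sigma^{-1}[\Gamma(3/\alpha)/\Gamma(1/\alpha)]^{1/2}$. The sketch below is one possible way to do this and is not taken from the paper: the function names are hypothetical, and the sampler relies on the fact that $(\eta|x|)^\alpha$ follows a Gamma distribution with shape $1/\alpha$ and unit scale.

```python
import math
import random

def ggd_eta(alpha, sigma=1.0):
    """Scale factor eta(alpha, sigma) of the generalized Gaussian density."""
    return math.sqrt(math.gamma(3.0 / alpha) / math.gamma(1.0 / alpha)) / sigma

def ggd_pdf(x, alpha, sigma=1.0):
    """Generalized Gaussian density with shape alpha and standard deviation sigma."""
    eta = ggd_eta(alpha, sigma)
    return alpha * eta / (2.0 * math.gamma(1.0 / alpha)) * math.exp(-(eta * abs(x)) ** alpha)

def ggd_sample(alpha, sigma, rng):
    """Draw one sample: (eta*|x|)^alpha ~ Gamma(1/alpha, 1), with a random sign."""
    w = rng.gammavariate(1.0 / alpha, 1.0)
    magnitude = w ** (1.0 / alpha) / ggd_eta(alpha, sigma)
    return magnitude if rng.random() < 0.5 else -magnitude

rng = random.Random(0)
samples = [ggd_sample(2.0, 1.0, rng) for _ in range(20000)]    # alpha = 2: Gaussian
variance = sum(s * s for s in samples) / len(samples)
print(f"pdf(0) for alpha=2: {ggd_pdf(0.0, 2.0):.4f}, sample variance: {variance:.3f}")
```

Setting `alpha` to 1.0 or 0.5 in the same helpers gives the Laplacian source and the heavier-tailed source considered in the simulations.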
The probability density function is parametrized by the parameter $\alpha$ and expressed as

$$p(x) = \frac{\alpha\, \eta(\alpha, \sigma)}{2\, \Gamma(1/\alpha)} \exp\bigl\{ -[\eta(\alpha, \sigma)\, |x|]^{\alpha} \bigr\},$$

where

$$\eta(\alpha, \sigma) = \sigma^{-1} \left[ \frac{\Gamma(3/\alpha)}{\Gamma(1/\alpha)} \right]^{1/2}, \quad \alpha > 0, \qquad \Gamma(z) = \int_0^{\infty} t^{z-1} e^{-t}\, dt,$$

and $\sigma$ denotes the standard deviation. The Laplacian and Gaussian distributions are members of the family of generalized Gaussian distributions (GGD) with parameters $\alpha = 1.0$ and $\alpha = 2.0$, respectively.

Simulation results for the Gaussian distribution are presented in Table V. The SNR achieved by the “New Trellis Source Code” (NTSC) [13] and by the “Entropy Constrained Scalar Quantization” (ECSQ) [17] are also shown in the table. We can see that the estimated SNR value for the encoder with 512 states is only about 0.2 dB below the Shannon limit. For encoders with 4 and 32 states we obtain significant improvements over the NTSC-based quantization.

Simulation results for the Laplacian distribution and for the GGD with $\alpha = 0.5$ are presented in Tables VI and VII, respectively. We show the quantization efficiency both without EZZ ($\Delta = 0$) and with EZZ ($\Delta > 0$). Data from [13] are also given, in parentheses. It follows from the simulation results that the EZZ is efficient for low quantization rates (below 2 bit/sample) and provides an SNR gain of about 0.1 dB for the Laplacian source and about 0.4 dB for the GGD with $\alpha = 0.5$. The gap between the achieved SNR and the theoretical limit $H(D)$ for these two probability distributions is nearly the same: 0.2 – 0.3 dB. Notice also that for the GGD with $\alpha = 0.5$, low-complexity (fewer than 32 encoder states) low-rate quantizers do not perform better than the ECSQ. Therefore, constructing efficient quantization algorithms for low-rate coding for the GGD with small $\alpha$ remains an open problem.

TABLE V. GAUSSIAN SOURCE. DATA FOR NTSC [13] IN PARENTHESES.
| Rate | States: 2 | 4 | 8 | 16 | 32 | 64 | 128 | 256 | 512 | H(D) | ECSQ | NTSC [13] |
|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0.5 | 2.29 | 2.50 | 2.55 | 2.61 | 2.64 | 2.69 | 2.69 | 2.76 | 2.76 | 3.01 | 2.10 | |
| 1 | 5.06 | 5.53 | 5.61 | 5.66 | 5.71 | 5.74 | 5.74 | 5.85 | 5.85 | 6.02 | 4.64 | (5.33) (5.64) |
| 2 | 11.08 | 11.50 | 11.63 | 11.69 | 11.72 | 11.77 | 11.77 | 11.82 | 11.84 | 12.04 | 10.55 | (11.15) (11.37) |
| 3 | 17.11 | 17.55 | 17.64 | 17.70 | 17.74 | 17.80 | 17.80 | 17.85 | 17.87 | 18.06 | 16.56 | (16.71) (16.96) |

TABLE VI. LAPLACIAN SOURCE. DATA FOR NTSC [13] IN PARENTHESES.

| Rate | Δ | States: 2 | 4 | 8 | 16 | 32 | 64 | 128 | 256 | 512 | H(D) | ECSQ | NTSC [13] |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| 0.5 | 0 | 2.92 | 3.03 | 3.06 | 3.09 | 3.11 | 3.14 | 3.17 | 3.18 | 3.21 | 3.54 | 3.11 | |
| 0.5 | 0.25 | 3.06 | 3.15 | 3.17 | 3.18 | 3.20 | 3.22 | 3.23 | | | | | |
| 1 | 0 | 5.69 | 6.05 | 6.14 | 6.18 | 6.23 | 6.29 | 6.30 | 6.33 | 6.33 | 6.62 | 5.76 | (5.93) (6.07) |
| 1 | 0.25 | 5.87 | 6.14 | 6.20 | 6.27 | 6.33 | 6.40 | 6.42 | | | | | |
| 2 | 0 | 11.68 | 12.15 | 12.22 | 12.29 | 12.32 | 12.38 | 12.41 | 12.43 | 12.44 | 12.66 | 11.31 | (11.49) (11.72) |
| 2 | 0.125 | 11.74 | 12.15 | 12.24 | | | | | | | | | |
| 3 | 0 | 17.74 | 18.15 | 18.23 | 18.29 | 18.33 | 18.39 | 18.41 | 18.44 | 18.44 | 18.68 | 17.20 | (17.00) (17.15) |

TABLE VII. GENERALIZED GAUSSIAN SOURCE WITH α = 0.5. DATA FOR NTSC [13] IN PARENTHESES.

| Rate | Δ | States: 2 | 4 | 8 | 16 | 32 | H(D) | ECSQ | NTSC [13] |
|---|---|---|---|---|---|---|---|---|---|
| 0.5 | 0 | 4.74 | 4.81 | 4.83 | 4.83 | 4.84 | 5.62 | 5.37 | |
| 0.5 | 0.5 | 5.19 | 5.22 | 5.23 | 5.23 | 5.24 | | | |
| 1 | 0 | 8.00 | 8.13 | 8.18 | 8.22 | 8.22 | 9.21 | 8.61 | (8.11) (8.29) |
| 1 | 0.5 | 8.53 | 8.53 | 8.62 | 8.64 | 8.64 | | | |
| 2 | 0 | 14.31 | 14.71 | 14.80 | 14.90 | 14.94 | 15.60 | 14.58 | (14.13) (14.47) |
| 2 | 0.25 | 14.56 | 14.90 | 15.03 | 15.10 | 15.14 | | | |
| 3 | 0 | 20.54 | 20.96 | 21.04 | 21.10 | 21.16 | 21.70 | 20.49 | (19.61) (19.92) |
| 3 | 0.25 | 20.62 | 21.06 | 21.14 | 21.20 | 21.26 | | | |

APPENDIX

Comments to formulation of Theorem 1. In [14] expressions (13) and (14) were given in the following form:

$$d = \frac{1}{q} \int_0^q \left. \frac{\frac{\partial}{\partial s} g(s, x)}{g(s, x)}\, dx \,\right|_{s = -\frac{1}{2d}} \tag{23}$$

$$R_0(q) = \frac{1}{\ln q} \left( -\frac{1}{2} - \frac{1}{q} \int_0^q \ln g\bigl(-1/(2 d_0), x\bigr)\, dx \right). \tag{24}$$

The simplification is due to the observation that $g(s, x)$ is a periodic function of $x$ with period 1, and inside the interval $[0, 1]$ the function $g(s, x)$ is symmetric with respect to the middle point $x = 1/2$, i.e., $g(s, x) = g(s, 1 - x)$.

Proof of Corollary 1. Here we analyze the asymptotic behavior of the estimate of $G_n(q)$ given by Theorem 1 when $q \to \infty$. From definitions (11) and (15), for $x \in [0, 0.5]$ we obtain

$$\rho(x, k) = \begin{cases} (x - k)^2, & k = 0, \dots, \frac{q-1}{2}, \\ (k - x - q)^2, & k = \frac{q+1}{2}, \dots, q - 1, \end{cases} \tag{25}$$

$$g(s, x) = \frac{1}{q} \sum_{k=0}^{\frac{q-1}{2}} e^{s (x - k)^2} + \frac{1}{q} \sum_{k=\frac{q+1}{2}}^{q-1} e^{s (k - x - q)^2} \tag{26}$$

$$= \frac{1}{q} \sum_{k=-\frac{q-1}{2}}^{\frac{q-1}{2}} e^{s (x - k)^2}, \quad x \in [0, 0.5]. \tag{27}$$

To prove the Corollary, we choose

$$s = -\frac{\pi e}{q}. \tag{28}$$

The first step is to verify that for large $q$ this choice makes (13) valid, i.e.,

$$d_0 = -\frac{1}{2s} = \frac{q}{2 \pi e}. \tag{29}$$

The second step is to substitute this expression into (14) and show that for large $q$ we have $R_0(q) \to 1/2$. After these two steps we immediately obtain the main result,

$$\lim_{q \to \infty} G_\infty(q) = \lim_{q \to \infty} d_0\, q^{2 (R_0(q) - 1)} = \frac{1}{2 \pi e}.$$

We start the first step of the derivation by demonstrating that asymptotically for large $q$ the generating function $g(s, x)$ does not depend on $x$. By straightforward computations it is easy to verify that

$$\max_x \{ g(s, x) \} = g(s, 0) = \frac{1}{q} \sum_{k=-\frac{q-1}{2}}^{\frac{q-1}{2}} e^{s k^2}, \tag{30}$$

$$\min_x \{ g(s, x) \} = g(s, 1/2) = \frac{1}{q} \sum_{k=-\frac{q-1}{2}}^{\frac{q-1}{2}} e^{s (2k - 1)^2 / 4}. \tag{31}$$

In order to estimate these sums we will use [22], formula 552.6, that is,

$$e^{-x^2} + e^{-2^2 x^2} + e^{-3^2 x^2} + \dots = -\frac{1}{2} + \frac{\sqrt{\pi}}{x} \left( \frac{1}{2} + e^{-\pi^2 / x^2} + e^{-2^2 \pi^2 / x^2} + e^{-3^2 \pi^2 / x^2} + \dots \right). \tag{32}$$

For large $q$ we obtain from (30) that

$$g(s, 0) \le \frac{1}{q} + \frac{2}{q} \sum_{k=1}^{\infty} e^{s k^2} = \frac{1}{q} + \frac{2}{q} \left[ -\frac{1}{2} + \sqrt{-\frac{\pi}{s}} \left( \frac{1}{2} + e^{\pi^2 / s} + e^{2^2 \pi^2 / s} + e^{3^2 \pi^2 / s} + \dots \right) \right].$$
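The identity (32) and the claim that $g(s, x)$ becomes nearly independent of $x$ for the choice (28) can be checked numerically. The sketch below is an illustration only: the function names are hypothetical, the infinite series are truncated at an assumed 200 terms, and $g$ is evaluated from the symmetric form (27) for odd $q$.

```python
import math

def theta_lhs(x, terms=200):
    """Left-hand side of (32): sum over k >= 1 of exp(-(k x)^2), truncated."""
    return sum(math.exp(-(k * x) ** 2) for k in range(1, terms + 1))

def theta_rhs(x, terms=200):
    """Right-hand side of (32), truncated at the same number of terms."""
    tail = sum(math.exp(-(k * math.pi / x) ** 2) for k in range(1, terms + 1))
    return -0.5 + (math.sqrt(math.pi) / x) * (0.5 + tail)

def g(s, x, q):
    """Generating function in the symmetric form (27); q is assumed odd."""
    half = (q - 1) // 2
    return sum(math.exp(s * (x - k) ** 2) for k in range(-half, half + 1)) / q

def g_extremes(q):
    """Return (g(s, 0), g(s, 1/2), 1/sqrt(q e)) for s = -pi e / q as in (28)."""
    s = -math.pi * math.e / q
    return g(s, 0.0, q), g(s, 0.5, q), 1.0 / math.sqrt(q * math.e)

if __name__ == "__main__":
    for x in (0.3, 1.0, 2.0):
        print(x, theta_lhs(x), theta_rhs(x))
    for q in (3, 5, 11, 101):
        print(q, g_extremes(q))
```

Under these assumptions both sides of (32) agree to floating-point accuracy, and for growing $q$ the values $g(s, 0)$ and $g(s, 1/2)$ both approach $1/\sqrt{qe}$, which is the leading term that the bounds on $g(s, x)$ in this proof are built around.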
Substituting (28) and upper-bounding the sum by the geometric progression for $q \ge 2$, we obtain the upper bound

$$g(s, x) \le \max_x g(s, x) \le \frac{1}{\sqrt{q e}} + e^{-q}. \tag{33}$$

To obtain a lower bound on the sum in (31) we rewrite it in the form

$$g(s, 1/2) = \frac{2}{q} \sum_{k=1}^{\frac{q-1}{2}} e^{s (2k - 1)^2 / 4} + \frac{1}{q} e^{s q^2 / 4}.$$

Notice that the integer $(2k - 1)$ in the exponent of the summands runs over odd values. If we add terms corresponding to the intermediate even values, the sum increases less than twice. Therefore,

$$g(s, 1/2) \ge \frac{1}{q} \sum_{k=1}^{q} e^{s k^2 / 4} = \frac{1}{q} \sum_{k=1}^{\infty} e^{s k^2 / 4} - \frac{1}{q} \sum_{k=q+1}^{\infty} e^{s k^2 / 4}.$$

Next we estimate the second sum by the geometric progression and estimate the first sum using (32). After straightforward computations we obtain

$$g(s, x) \ge \min_x g(s, x) \ge \frac{1}{\sqrt{q e}} - \frac{1}{q}. \tag{34}$$

We can substitute (33) and (34) into (13) to obtain lower and upper bounds on $d_0(q)$. In both cases we have to estimate the following integral:

$$2 \int_0^{1/2} \frac{\partial}{\partial s} g(s, x)\, dx = \int_{-1/2}^{1/2} \frac{1}{q} \sum_{k=-\frac{q-1}{2}}^{\frac{q-1}{2}} (x - k)^2 e^{s (x - k)^2}\, dx = \frac{1}{q} \sum_{k=-\frac{q-1}{2}}^{\frac{q-1}{2}} \int_{-k - 1/2}^{-k + 1/2} x^2 e^{s x^2}\, dx$$

$$= \frac{1}{q} \int_{-q/2}^{q/2} x^2 e^{s x^2}\, dx = \frac{\sqrt{q}}{2 \pi e^{3/2}}\, \mathrm{erf}\!\left( \frac{\sqrt{\pi e q}}{2} \right) - \frac{q^2}{4 \pi e}\, e^{-\frac{\pi e q}{4}}.$$

From the inequalities ([22], formula 592)

$$1 - \frac{e^{-x^2}}{x \sqrt{\pi}} \le \mathrm{erf}(x) \le 1$$

follow the bounds

$$\frac{\sqrt{q}}{2 \pi e^{3/2}} - \frac{q^2}{2 \pi e}\, e^{-\frac{\pi e q}{4}} \le 2 \int_0^{1/2} \frac{\partial}{\partial s} g(s, x)\, dx \le \frac{\sqrt{q}}{2 \pi e^{3/2}}.$$

Substituting them together with (33) and (34) into (13) we obtain

$$\frac{q}{2 \pi e} \left( 1 - O\!\left( q^2 e^{-q} \right) \right) \le d_0 \le \frac{q}{2 \pi e} \left( 1 + O\!\left( \frac{1}{\sqrt{q}} \right) \right).$$

Therefore (29) is proven and the first step is completed. To fulfil the second step and complete the proof of the Corollary, we have to substitute the bounds (33) and (34) into expression (14). After simple derivations we immediately obtain (17).

REFERENCES

[1] T. M. Cover and J. A. Thomas, Elements of Information Theory. New York: John Wiley & Sons, 1991.

[2] R. M. Gray and D. L. Neuhoff, “Quantization,” IEEE Trans. Inform. Theory, vol. 44, pp. 2325–2383, Oct. 1998.

[3] J. H. Conway and N. J. A. Sloane, Sphere Packings, Lattices, and Groups. New York: Springer-Verlag, 2nd ed., 1993.

[4] J. H. Conway and N. J. A. Sloane, “Fast quantizing and decoding algorithms for lattice quantizers and codes,” IEEE Trans. Inform. Theory, vol. 28, pp. 227–232, Mar. 1982.

[5] J. H. Conway and N. J. A. Sloane, “A fast encoding method for lattice codes and quantizers,” IEEE Trans. Inform. Theory, vol. 29, pp. 820–824, Nov. 1982.

[6] P. L. Zador, Development and Evaluation of Procedures for Quantizing Multivariate Distributions. PhD thesis, Stanford Univ., 1963.

[7] M. V. Eyuboglu and G. D. Forney, “Lattice and trellis quantization with lattice- and trellis-bounded codebooks – high-rate theory for memoryless sources,” IEEE Trans. Inform. Theory, vol. 39, pp. 46–59, Jan. 1993.

[8] M. Marcellin and T. Fischer, “Trellis coded quantization of memoryless and Gauss-Markov sources,” IEEE Trans. Commun., vol. COM-38, pp. 82–93, Jan. 1990.

[9] T. R. Fischer and M. Wang, “Entropy-constrained trellis-coded quantization,” IEEE Trans. Inform. Theory, vol. IT-38, pp. 415–426, Jan. 1992.

[10] R. Laroia and N. Farvardin, “Trellis-based scalar-vector quantizer for memoryless sources,” IEEE Trans. Inform. Theory, vol. IT-40, pp. 860–870, May 1994.

[11] M. W. Marcellin, “On entropy-constrained trellis coded quantization,” IEEE Trans. Commun., vol. COM-42, pp. 14–16, Jun. 1994.

[12] R. J. van der Vleuten and J. H. Weber, “Construction and evaluation of trellis-coded quantizers for memoryless sources,” IEEE Trans. Inform. Theory, vol. IT-41, pp. 853–858, May 1995.

[13] L. Yang and T. R. Fischer, “A new trellis source code for memoryless sources,” IEEE Trans. Inform. Theory, vol. 44, pp. 3056–3063, Nov. 1998.

[14] B. D. Kudryashov and K. V. Yurkov, “Random coding bound for the second moment of multidimensional lattices,” Problems of Information Transmission, vol. 43, pp. 57–68, Mar. 2007.

[15] K. V. Yurkov and B. D. Kudryashov, “Random quantization bounds for lattices over q-ary linear codes,” in ISIT 2007, pp. 236–240, June 2007.

[16] U. Erez, S. Litsyn, and R. Zamir, “Lattices which are good for (almost) everything,” IEEE Trans. Inform. Theory, vol. 51, pp. 3401–3416, Oct. 2005.

[17] N. Farvardin and J. W. Modestino, “Optimum quantizer performance for a class of non-Gaussian memoryless sources,” IEEE Trans. Inform. Theory, vol. IT-30, pp. 485–497, May 1984.

[18] B. D. Kudryashov, E. Oh, and A. V. Porov, “Scalar quantization for audio data coding,” IEEE Trans. Audio, Speech, and Language Processing, 2007. Submitted for publication.

[19] R. Johannesson and K. S. Zigangirov, Fundamentals of Convolutional Coding. New York: IEEE Press, Piscataway, 1999.

[20] I. H. Witten, R. M. Neal, and J. G. Cleary, “Arithmetic coding for data compression,” Commun. ACM, vol. 30, pp. 520–540, June 1987.

[21] R. L. Joshi, V. J. Cramp, and T. R. Fischer, “Image subband coding using arithmetic coded trellis coded quantization,” IEEE Trans. on Circuits and Systems for Video Technology, vol. 5, pp. 515–523, Dec. 1995.

[22] H. B. Dwight, Tables of Integrals and Other Mathematical Data, 4th ed. New York: Macmillan, 1961.
