Maximum likelihood estimation of a multidimensional log-concave density

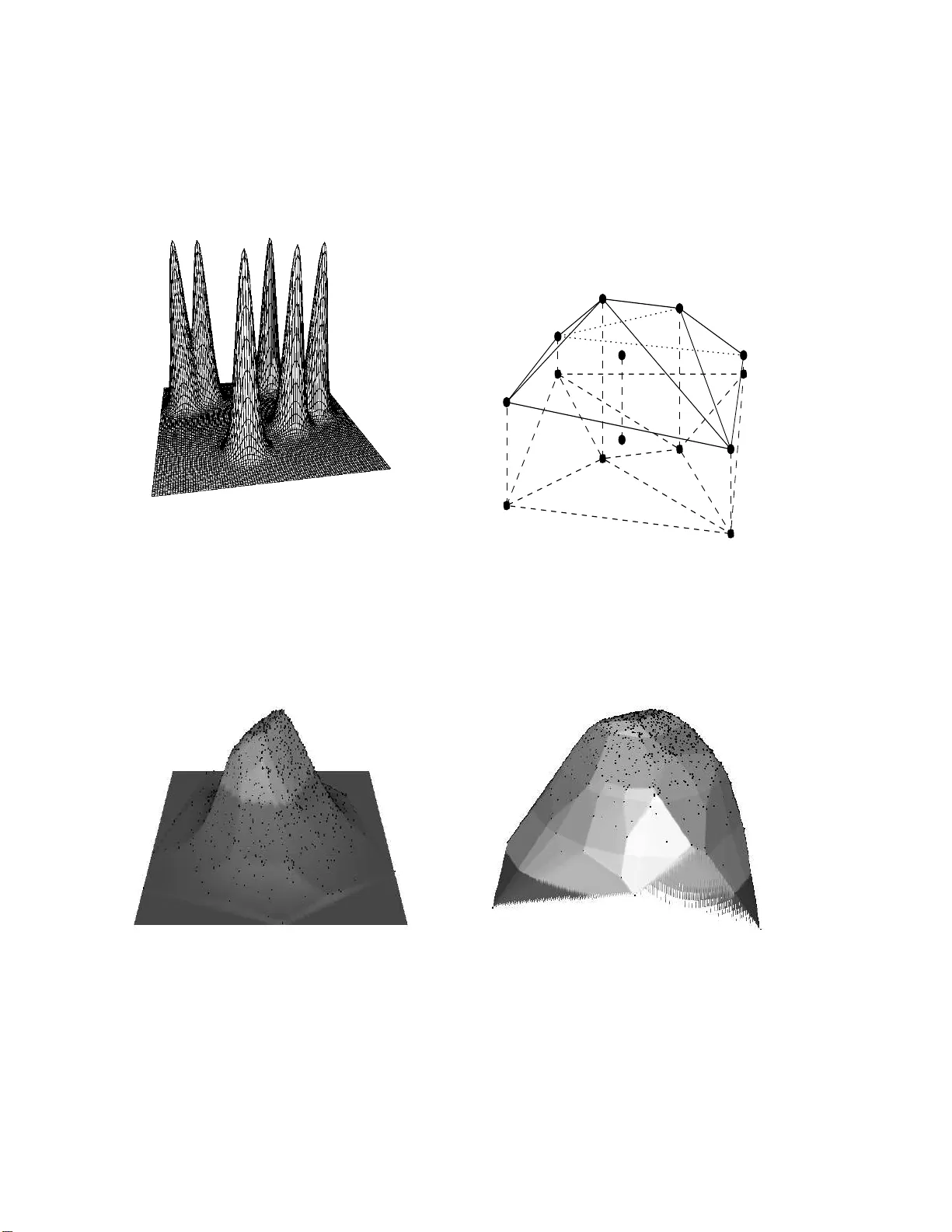

Let X_1, ..., X_n be independent and identically distributed random vectors with a log-concave (Lebesgue) density f. We first prove that, with probability one, there exists a unique maximum likelihood estimator of f. The use of this estimator is attr…
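For reference, the estimator described above can be written as a constrained optimization problem. This is a sketch of the standard formulation; the symbols $\hat{f}_n$, $\mathcal{F}_d$, $\phi$ and the dimension $d$ are notation assumed here for illustration and are not taken from the text above.

$$
\hat{f}_n \in \operatorname*{arg\,max}_{f \in \mathcal{F}_d} \; \sum_{i=1}^{n} \log f(X_i),
\qquad
\mathcal{F}_d = \Bigl\{ f = e^{\phi} \;:\; \phi : \mathbb{R}^d \to [-\infty, \infty) \text{ concave},\ \int_{\mathbb{R}^d} e^{\phi(x)} \, dx = 1 \Bigr\}.
$$

Here the only constraint on $f$ is the shape restriction that $\log f$ is concave; the existence and uniqueness claim concerns the maximizer $\hat{f}_n$ of this problem.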

Authors: Madeleine Cule, Richard Samworth, Michael Stewart