In this paper, we develop an approach for optimizing the explicit binomial confidence interval recently derived by Chen et al. The optimization reduces conservativeness while guaranteeing prescribed coverage probability.

Optimal Explicit Binomial Confidence Interval with Guaranteed Coverage Probability

1 Explicit Formula of Chen et al.

Let $X$ be a Bernoulli random variable defined on a probability space $(\Omega, \mathcal{F}, \Pr)$ with distribution $\Pr\{X = 1\} = 1 - \Pr\{X = 0\} = p \in (0, 1)$. It is a frequent problem to construct a confidence interval for $p$ based on $n$ i.i.d. random samples $X_1, \cdots, X_n$ of $X$.

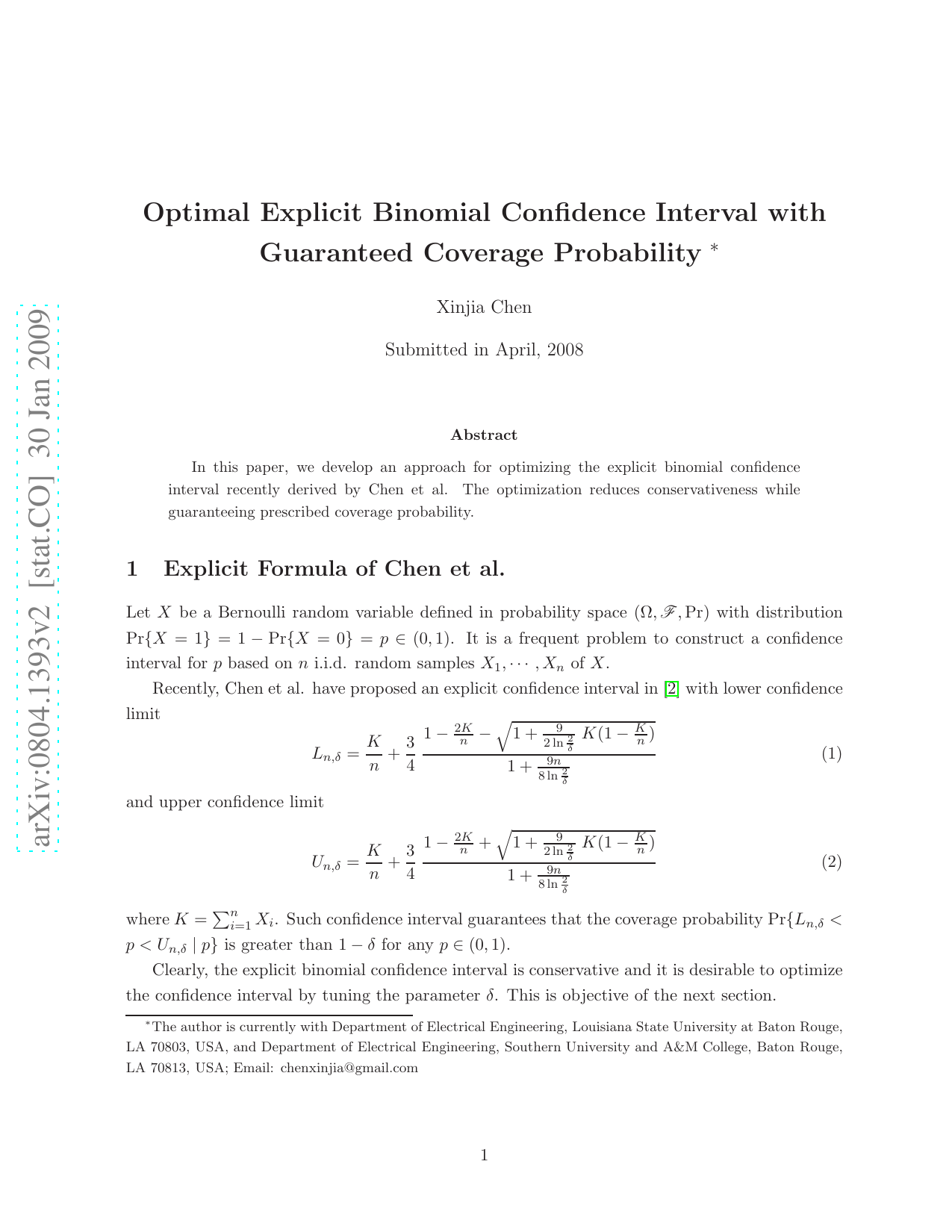

Recently, Chen et al. have proposed an explicit confidence interval in [2] with lower confidence limit $L_{n,\delta}$ and upper confidence limit $U_{n,\delta}$, both functions of $K = \sum_{i=1}^{n} X_i$. Such a confidence interval guarantees that the coverage probability $\Pr\{L_{n,\delta} < p < U_{n,\delta} \mid p\}$ is greater than $1 - \delta$ for any $p \in (0, 1)$.
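To illustrate how such a coverage probability can be evaluated exactly, the following sketch sums the binomial probability mass function over all outcomes $k$ whose interval contains $p$. It does not implement the limits of [2]. As a stand-in it uses a Hoeffding-type interval $k/n \pm \sqrt{\ln(2/\delta)/(2n)}$, which also has coverage of at least $1 - \delta$. The names `hoeffding_interval` and `coverage` are assumptions made for this example only.

```python
import math


def hoeffding_interval(k, n, delta):
    """Stand-in interval (NOT the explicit formula of Chen et al.).

    Returns k/n -/+ sqrt(ln(2/delta) / (2n)), which covers p with
    probability at least 1 - delta by Hoeffding's inequality.
    """
    half_width = math.sqrt(math.log(2.0 / delta) / (2.0 * n))
    center = k / n
    return center - half_width, center + half_width


def coverage(p, n, delta, interval=hoeffding_interval):
    """Exact Pr{L < p < U | p} for a given interval rule.

    Sums the Binomial(n, p) pmf over every k whose interval
    strictly contains p.
    """
    total = 0.0
    for k in range(n + 1):
        lower, upper = interval(k, n, delta)
        if lower < p < upper:
            total += math.comb(n, k) * p**k * (1.0 - p) ** (n - k)
    return total
```

For this stand-in, `coverage(0.3, 50, 0.05)` is at least 0.95. The amount by which it exceeds 0.95 is the kind of conservativeness that tuning $\delta$ aims to reduce.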

Clearly, the explicit binomial confidence interval is conservative, and it is desirable to optimize the confidence interval by tuning the parameter $\delta$. This is the objective of the next section.

As will be seen in Section 3, the following result can be shown.

Theorem 1 For any fixed $n$ and $p \in (0, 1)$, the coverage probability of the confidence interval $[L_{n,\delta}, U_{n,\delta}]$ decreases as $\delta$ increases.

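Hence, it is possible to find $\delta > \alpha$ such that

$$\Pr\{L_{n,\delta} < p < U_{n,\delta} \mid p\} \geq 1 - \alpha \quad \text{for any } p \in (0, 1)$$

for $\alpha \in (0, 1)$. To reduce the conservatism of the confidence interval, we consider the following optimization problem: for a given $\alpha \in (0, 1)$, maximize $\delta$ subject to the constraint that

$$\inf_{p \in (0,1)} \Pr\{L_{n,\delta} \leq p \leq U_{n,\delta} \mid p\} \geq 1 - \alpha.$$

A similar problem is to maximize $\delta$ subject to the constraint that

$$\inf_{p \in (0,1)} \Pr\{L_{n,\delta} < p < U_{n,\delta} \mid p\} \geq 1 - \alpha.$$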

As a result of Theorem 1, the maximum $\delta$ can be obtained from $(\alpha, 1)$ by a bisection search. In this regard, it is essential to efficiently evaluate $\inf_{p \in (0,1)} \Pr\{L_{n,\delta} \leq p \leq U_{n,\delta} \mid p\}$ and $\inf_{p \in (0,1)} \Pr\{L_{n,\delta} < p < U_{n,\delta} \mid p\}$. This is accomplished by the following theorem, derived from the theory of random intervals established in [3].

for p ∈ (0, 1) and

Then, the following statements hold true: (I): $\inf_{p \in (0,1)} \Pr\{L_{n,\delta} \leq p \leq U_{n,\delta} \mid p\}$ equals the minimum of

(II): $\inf_{p \in (0,1)} \Pr\{L_{n,\delta} < p < U_{n,\delta} \mid p\}$ equals the minimum of

The proof of Theorem 2 is provided in Section 4.
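The bisection search over $(\alpha, 1)$ described above can be sketched as follows. This is a minimal illustration under stated assumptions, not the procedure of the paper. The interval is a Hoeffding-type stand-in rather than the limits of [2], and the infimum over $p$ is approximated on a finite grid instead of being computed exactly through Theorem 2. Since coverage is discontinuous in $p$, the grid value may overestimate the true infimum. All function names are hypothetical.

```python
import math


def stand_in_interval(k, n, delta):
    # Hoeffding-type stand-in, NOT the explicit limits of Chen et al.
    half_width = math.sqrt(math.log(2.0 / delta) / (2.0 * n))
    return k / n - half_width, k / n + half_width


def coverage_closed(p, n, delta):
    # Exact Pr{L <= p <= U | p} for the stand-in interval.
    total = 0.0
    for k in range(n + 1):
        lower, upper = stand_in_interval(k, n, delta)
        if lower <= p <= upper:
            total += math.comb(n, k) * p**k * (1.0 - p) ** (n - k)
    return total


def min_coverage_on_grid(n, delta, grid_size=999):
    # Assumption: a uniform grid over (0, 1) approximates the infimum.
    # The exact finite evaluation of Theorem 2 is not implemented here.
    return min(
        coverage_closed(i / (grid_size + 1), n, delta)
        for i in range(1, grid_size + 1)
    )


def max_delta(alpha, n, min_coverage=min_coverage_on_grid, tol=1e-4):
    # Bisection relies on coverage being non-increasing in delta.
    # Invariant: `lo` always satisfies the coverage constraint,
    # starting from delta = alpha where coverage >= 1 - alpha holds.
    lo, hi = alpha, 1.0
    while hi - lo > tol:
        mid = 0.5 * (lo + hi)
        if min_coverage(n, mid) >= 1.0 - alpha:
            lo = mid  # constraint holds, try a larger delta
        else:
            hi = mid  # constraint violated, shrink delta
    return lo
```

Calling `max_delta(0.05, 20)` returns a value of $\delta$ no smaller than $\alpha = 0.05$ for which the grid-approximated minimum coverage is still at least 0.95. Passing a different `min_coverage` callable lets the same search run against an exact evaluation of the infimum.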

For simplicity of notation, define $\lambda = \frac{9n}{8 \ln \frac{2}{\delta}}$ and $z = \frac{k}{n}$. Then, for $K = k$, the upper and lower confidence limits are $U_{n,\delta} = U(z)$ and $L_{n,\delta} = L(z)$ respectively, where

Clearly, to show $\frac{\partial y}{\partial \lambda} < 0$, it suffices to show that the right-hand side of the above equation is negative for any $z \in [0, 1]$ and $\lambda > 0$. That is, to show $2z(1 - z)$
