From the entropy to the statistical structure of spike trains

We use statistical estimates of the entropy rate of spike train data in order to make inferences about the underlying structure of the spike train itself. We first examine a number of different parametric and nonparametric estimators (some known and some new), including the "plug-in" method, several versions of Lempel-Ziv-based compression algorithms, a maximum likelihood estimator tailored to renewal processes, and the natural estimator derived from the Context-Tree Weighting (CTW) method. The theoretical properties of these estimators are examined, several new theoretical results are developed, and all estimators are systematically applied to various types of synthetic data under different conditions. Our main focus is on the performance of these entropy estimators on the (binary) spike trains of 28 neurons recorded simultaneously for a one-hour period from the primary motor and dorsal premotor cortices of a monkey. We show how the entropy estimates can be used to test for the existence of long-term structure in the data, and we construct a hypothesis test for whether the renewal process model is appropriate for these spike trains. Further, by applying the CTW algorithm we derive the maximum a posteriori (MAP) tree model of our empirical data and comment on the underlying structure it reveals.
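The plug-in method fits in a few lines if we assume its common block-entropy form. Count every overlapping length-k word in the binary train, take the Shannon entropy of the empirical word distribution, and divide by k. The function name `plugin_entropy_rate` and the overlapping-window choice are illustrative assumptions, not the authors' code.

```python
import math
from collections import Counter


def plugin_entropy_rate(x, k):
    """Plug-in estimate of the entropy rate (bits per symbol) from length-k blocks.

    x is a sequence of 0/1 spike indicators. All overlapping length-k words are
    counted, and the entropy of their empirical distribution is divided by k.
    """
    n = len(x)
    if not 1 <= k <= n:
        raise ValueError("block length k must satisfy 1 <= k <= len(x)")
    total = n - k + 1
    words = Counter(tuple(x[i:i + k]) for i in range(total))
    block_entropy = -sum((c / total) * math.log2(c / total) for c in words.values())
    return block_entropy / k


# Toy alternating train: single-symbol statistics look maximally random,
# while two-symbol blocks reveal that each symbol determines the next one.
alternating = [0, 1] * 50
print(plugin_entropy_rate(alternating, 1))
print(plugin_entropy_rate(alternating, 2))
```

Up to 2^k distinct words must be estimated from only n - k + 1 windows. The estimate is therefore biased downward once 2^k is no longer small compared with n.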

💡 Research Summary

This paper investigates how the entropy rate of neuronal spike‑train data can be used to infer the underlying statistical structure of the trains themselves. The authors begin by reviewing a broad spectrum of entropy estimators: the classic plug‑in estimator, several non‑parametric, compression‑based methods derived from Lempel‑Ziv (LZ) algorithms, a parametric maximum‑likelihood estimator designed specifically for renewal processes, and the Context‑Tree Weighting (CTW) estimator. For each method, they examine theoretical properties (bias, variance, convergence speed, and consistency) and derive new analytical results. These results are particularly strong for CTW, where they prove finite‑sample bounds on bias and demonstrate asymptotic optimality under mild conditions.
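Several LZ-based estimators exist, and the summary does not specify which variants the paper uses. One hedged example is the increasing-window match-length estimator. For each position i, find the length Λ_i of the shortest string starting at i that never appears in the past x[0:i]. The entropy rate is then estimated as the reciprocal of the average of Λ_i / log₂ i. The function name is hypothetical. Because the substring search is naive, this sketch runs in roughly quadratic time in the sequence length, so it suits toy inputs rather than hour-long recordings.

```python
import math
import random


def lz_match_length_entropy(x):
    """Increasing-window match-length (LZ-type) entropy-rate estimate, bits/symbol.

    For each position i, Lambda_i is the length of the shortest string starting
    at i that does not occur anywhere inside the past x[0:i]. The estimate is the
    reciprocal of the average of Lambda_i / log2(i).
    """
    s = "".join("1" if b else "0" for b in x)
    n = len(s)
    ratio_sum, used = 0.0, 0
    for i in range(2, n):  # start at 2 so that log2(i) > 0
        past = s[:i]
        length = 1
        # Grow the candidate string while it still occurs in the past window.
        while i + length <= n and s[i:i + length] in past:
            length += 1
        if i + length > n:
            break  # the match ran into the end of the data; stop averaging
        ratio_sum += length / math.log2(i)
        used += 1
    if used == 0:
        raise ValueError("sequence too short for a match-length estimate")
    return used / ratio_sum


# Usage: a fair-coin train versus a constant train, whose past predicts everything.
rng = random.Random(0)
fair_coin = [rng.randint(0, 1) for _ in range(2000)]
print(lz_match_length_entropy(fair_coin))
print(lz_match_length_entropy([1] * 200))
```

The early positions are matched against only a short past. As a result, convergence toward the true rate is slow.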

The methodological section is followed by an extensive simulation study. Synthetic data are generated from a variety of known stochastic models: low‑order binary Markov chains (orders 1–5), pure renewal processes with exponential or gamma inter‑spike intervals, and hybrid models that combine renewal dynamics with higher‑order Markov dependencies. Across 1,000 repetitions per setting, the authors compute the mean‑squared error (MSE), bias, and variance of each estimator. The results show that CTW consistently outperforms the other techniques, especially when dependencies reach several bins into the past (Markov order ≥ 3). LZ‑based compressors perform reasonably well for moderate dependencies but suffer from an initial “dictionary warm‑up” bias. The plug‑in estimator exhibits large bias in sparse regimes, while the renewal‑process MLE is competitive only when the data truly follow a renewal model.
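A miniature version of this kind of simulation can be sketched for the simplest listed setting, an order-1 binary Markov chain. Its exact entropy rate is the stationary average of the binary entropies of its two transition rows, so bias, variance, and MSE can be measured against a known truth. The estimator here is a conditional plug-in with a one-bin context. The transition probabilities, sequence length, and 100 repetitions are illustrative assumptions rather than the paper's settings, and all function names are hypothetical.

```python
import math
import random


def h2(p):
    """Binary entropy in bits."""
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


def simulate_markov(n, a, b, rng):
    """Order-1 binary chain with P(1 | 0) = a and P(0 | 1) = b, started in stationarity."""
    x = [1 if rng.random() < a / (a + b) else 0]
    for _ in range(n - 1):
        p_one = a if x[-1] == 0 else 1 - b
        x.append(1 if rng.random() < p_one else 0)
    return x


def true_entropy_rate(a, b):
    """Exact entropy rate: stationary average of the per-state binary entropies."""
    pi1 = a / (a + b)
    return (1 - pi1) * h2(a) + pi1 * h2(b)


def conditional_plugin(x):
    """Plug-in estimate of H(X_t | X_{t-1}) from transition counts, bits/symbol."""
    counts = {(u, v): 0 for u in (0, 1) for v in (0, 1)}
    for u, v in zip(x, x[1:]):
        counts[(u, v)] += 1
    total = len(x) - 1
    h = 0.0
    for u in (0, 1):
        n_u = counts[(u, 0)] + counts[(u, 1)]
        if n_u:
            h += (n_u / total) * h2(counts[(u, 1)] / n_u)
    return h


def bias_variance_mse(estimates, truth):
    """Monte Carlo bias, variance and MSE (MSE = bias**2 + variance)."""
    mean = sum(estimates) / len(estimates)
    var = sum((e - mean) ** 2 for e in estimates) / len(estimates)
    bias = mean - truth
    return bias, var, bias ** 2 + var


# Usage: 100 repetitions of a sparse chain (illustrative parameters only).
rng = random.Random(0)
truth = true_entropy_rate(0.05, 0.6)
estimates = [conditional_plugin(simulate_markov(5000, 0.05, 0.6, rng)) for _ in range(100)]
print(truth, bias_variance_mse(estimates, truth))
```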

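The core of the paper applies these estimators to real neural recordings. Spike trains from 28 neurons in the primary motor (M1) and dorsal premotor (PMd) cortices of a macaque were recorded simultaneously for one hour at 1 ms resolution, yielding binary sequences of about 3.6 × 10⁶ bins per neuron. The authors estimate the entropy rate of each neuron using all five methods. CTW yields rates between 0.45 and 0.78 bits/ms, below the 1 bit/ms ceiling for any binary sequence at this resolution. That ceiling is not the Poisson benchmark, however. A memoryless (discretized homogeneous Poisson) train with firing probability p per bin has entropy rate h(p) = −p log₂ p − (1 − p) log₂(1 − p) bits/ms, which reaches 1 bit/ms only when p = 1/2. No stationary train with that firing probability can exceed h(p). Evidence of temporal structure therefore rests on how far each estimate falls below h(p) at the neuron's own firing rate.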
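For reference, the entropy rate of a memoryless spike train can be computed directly. This sketch assumes the usual Bernoulli approximation of a homogeneous Poisson process, in which each bin of width Δ independently contains a spike with probability p ≈ rate × Δ. The function name and the example rates are hypothetical. At 1 ms bins, p = 1/2 (and hence 1 bit/ms) corresponds to 500 Hz, while rates up to 100 Hz give less than 0.5 bits/ms.

```python
import math


def memoryless_entropy_rate(rate_hz, bin_s=0.001):
    """Entropy rate (bits per bin) of a Bernoulli spike train with firing probability
    p = rate_hz * bin_s per bin (Bernoulli approximation of a homogeneous Poisson train)."""
    p = rate_hz * bin_s
    if not 0.0 <= p <= 1.0:
        raise ValueError("rate_hz * bin_s must lie in [0, 1]")
    if p in (0.0, 1.0):
        return 0.0
    return -p * math.log2(p) - (1 - p) * math.log2(1 - p)


# Illustrative firing rates (hypothetical, not taken from the recordings).
for rate in (5, 20, 50, 500):
    print(rate, "Hz ->", round(memoryless_entropy_rate(rate), 4), "bits/ms")
```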

To test for long‑term dependencies, the authors employ a block‑bootstrap procedure. Resampling contiguous blocks preserves dependence within each block, so short‑range autocorrelation survives, but it breaks dependence across block boundaries. For the majority of neurons, the entropy estimate from the original data lies significantly below the bootstrap distribution. This provides statistical evidence for structure extending beyond a few milliseconds.
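The block-bootstrap idea can be sketched as follows. The summary does not state the resampling scheme, block length, number of surrogates, or which estimator is recomputed on each surrogate. The moving-block bootstrap, the 6-bin plug-in estimator, and all names here are therefore assumptions. The one-sided p-value uses the (1 + count) / (1 + B) Monte Carlo convention, where count is the number of the B surrogates whose estimate is at or below the observed one.

```python
import math
import random
from collections import Counter


def plugin_entropy_rate(x, k=6):
    """Block plug-in entropy-rate estimate in bits/symbol (overlapping length-k words)."""
    total = len(x) - k + 1
    words = Counter(tuple(x[i:i + k]) for i in range(total))
    return -sum((c / total) * math.log2(c / total) for c in words.values()) / k


def moving_block_bootstrap(x, block_len, rng):
    """Surrogate of the same length as x, built from randomly chosen contiguous blocks.

    Dependence inside each block is kept; dependence across block boundaries is broken.
    """
    if not 1 <= block_len <= len(x):
        raise ValueError("block_len must satisfy 1 <= block_len <= len(x)")
    starts = range(len(x) - block_len + 1)
    out = []
    while len(out) < len(x):
        s = rng.choice(starts)
        out.extend(x[s:s + block_len])
    return out[:len(x)]


def block_bootstrap_test(x, estimator, block_len, n_surrogates=99, seed=0):
    """One-sided test of whether the observed entropy estimate is unusually low.

    Returns (observed estimate, Monte Carlo p-value).
    """
    rng = random.Random(seed)
    observed = estimator(x)
    null = [estimator(moving_block_bootstrap(x, block_len, rng))
            for _ in range(n_surrogates)]
    n_low = sum(1 for h in null if h <= observed)
    return observed, (1 + n_low) / (1 + n_surrogates)


# Toy train with structure spanning 8 bins, tested with 3-bin blocks.
train = [0, 0, 0, 1, 0, 1, 1, 1] * 100
print(block_bootstrap_test(train, plugin_entropy_rate, block_len=3, n_surrogates=49))
```

The toy train repeats every 8 bins, so 3-bin blocks destroy structure that the original has. The surrogates' entropy estimates therefore come out higher than the observed one.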

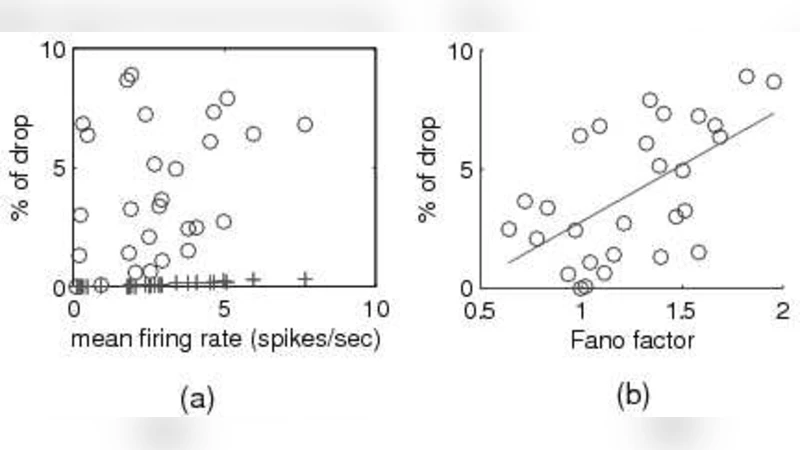

Next, they construct a hypothesis test for the adequacy of the renewal‑process model. They compare the maximized log‑likelihood under the renewal model with the log‑likelihood obtained from CTW, which averages over all context trees up to a maximum depth, weighted by a prior. From this comparison they derive a test statistic whose null distribution is obtained via parametric bootstrapping. The test rejects the renewal hypothesis for 24 of the 28 neurons (p < 0.05), suggesting that simple renewal dynamics cannot capture the observed spike patterns.
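The renewal side of this comparison can be sketched under two stated assumptions. First, the maximum-likelihood fit is the empirical inter-spike-interval (ISI) distribution. Second, the entropy rate of the fitted renewal model is H(ISI)/E[ISI] bits per bin, the standard identity for a stationary discrete-time renewal process. The names are hypothetical. The parametric-bootstrap step, which would simulate surrogate trains from the fitted ISI distribution and recompute the test statistic, is not shown.

```python
import math
import random
from collections import Counter


def interspike_intervals(x):
    """Gaps (in bins) between consecutive spikes of a 0/1 train."""
    spikes = [t for t, b in enumerate(x) if b]
    return [t2 - t1 for t1, t2 in zip(spikes, spikes[1:])]


def renewal_entropy_rate(x):
    """Entropy rate (bits/bin) of the renewal model fitted by maximum likelihood.

    The ML estimate of the ISI distribution is its empirical frequency table, and a
    renewal process has entropy rate H(ISI) / E[ISI]. Partial intervals before the
    first spike and after the last spike are ignored.
    """
    isis = interspike_intervals(x)
    if not isis:
        raise ValueError("need at least two spikes")
    m = len(isis)
    h_isi = -sum((c / m) * math.log2(c / m) for c in Counter(isis).values())
    return h_isi / (sum(isis) / m)


# Usage: a Bernoulli(p) train is itself a renewal process (geometric ISIs),
# so the estimate should approach the binary entropy h(p).
rng = random.Random(0)
p = 0.1
bern = [1 if rng.random() < p else 0 for _ in range(200_000)]
h_p = -p * math.log2(p) - (1 - p) * math.log2(1 - p)
print(renewal_entropy_rate(bern), h_p)
```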

Finally, the CTW algorithm is used to extract the maximum‑a‑posteriori (MAP) context tree for each neuron. These trees typically have depths of three to four, meaning they condition on at most the last three to four 1 ms bins. Their key splits correspond to specific short‑term histories (e.g., whether a spike occurred within the last few milliseconds). The conditional probabilities associated with these contexts reveal that the probability of firing is strongly modulated by recent spike patterns, consistent with known refractory and bursting mechanisms. The authors discuss how these context trees provide an interpretable, data‑driven model of neuronal coding that bridges the gap between purely statistical descriptions and biophysical interpretations.
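The summary does not spell out how CTW produces either the entropy estimate or the MAP tree. Below is a minimal sketch assuming the standard binary CTW setup: a Krichevsky-Trofimov (KT) estimator at each node, and a prior that makes each node shallower than the maximum depth D a leaf with probability β = 1/2. The entropy estimate is −log₂ of the CTW mixture probability per coded bit. Replacing the weighted sum at each node with a maximum yields the MAP tree under the same prior. Function names and the toy trains are hypothetical. The recursion visits all 2^(D+1) − 1 nodes, so it is only suitable for small D.

```python
import math
import random
from collections import defaultdict


def kt_log_prob(zeros, ones):
    """Natural log of the Krichevsky-Trofimov (KT) probability for these counts."""
    if zeros == 0 and ones == 0:
        return 0.0  # an unvisited context contributes probability 1
    return (math.lgamma(zeros + 0.5) + math.lgamma(ones + 0.5)
            - math.lgamma(zeros + ones + 1.0) - math.log(math.pi))


def context_counts(x, depth):
    """Map each context (tuple of past bits, most recent first, length 0..depth)
    to [zeros, ones] counts of the bit that followed it. The first `depth` bits
    serve only as initial context and are not coded."""
    counts = defaultdict(lambda: [0, 0])
    for t in range(depth, len(x)):
        past = tuple(x[t - d] for d in range(1, depth + 1))
        for d in range(depth + 1):
            counts[past[:d]][x[t]] += 1
    return counts


def ctw_entropy_rate(x, depth, beta=0.5):
    """CTW estimate: -log2 of the mixture probability, per coded bit."""
    counts = context_counts(x, depth)

    def weighted(s):
        leaf = kt_log_prob(*counts.get(s, (0, 0)))
        if len(s) == depth:
            return leaf
        a = math.log(beta) + leaf
        b = math.log(1 - beta) + weighted(s + (0,)) + weighted(s + (1,))
        top = max(a, b)
        return top + math.log(math.exp(a - top) + math.exp(b - top))  # log-sum-exp

    return -weighted(()) / ((len(x) - depth) * math.log(2))


def map_context_tree(x, depth, beta=0.5):
    """Leaf contexts of the MAP tree: the same recursion with max in place of sum."""
    counts = context_counts(x, depth)

    def best(s):
        leaf = kt_log_prob(*counts.get(s, (0, 0)))
        if len(s) == depth:
            return leaf, [s]
        score0, leaves0 = best(s + (0,))
        score1, leaves1 = best(s + (1,))
        keep = math.log(beta) + leaf
        split = math.log(1 - beta) + score0 + score1
        # Ties are resolved in favor of the smaller tree.
        return (keep, [s]) if keep >= split else (split, leaves0 + leaves1)

    return best(())[1]


# Toy train that spikes every third bin: after a spike one bit of history
# suffices, while after a silent bin two bits are needed.
train = [1, 0, 0] * 200
print(map_context_tree(train, depth=3))
print(ctw_entropy_rate(train, depth=3))

# An i.i.d. fair-coin train for comparison.
rng = random.Random(1)
coin = [rng.randint(0, 1) for _ in range(5000)]
print(ctw_entropy_rate(coin, depth=3))
```

For the periodic train, the MAP leaves are the contexts (0, 0), (0, 1) and (1,), and the CTW rate is close to zero. For the fair-coin train, the CTW rate stays near 1 bit per bin.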

In conclusion, the study demonstrates that entropy‑rate estimation is a powerful tool for probing the hidden statistical architecture of spike trains. Among the estimators examined, CTW offers the best combination of low bias, rapid convergence, and model interpretability, making it especially suitable for neuroscience applications where data are limited and underlying processes are complex. The paper also outlines future directions, including multivariate extensions to capture synchrony across populations, real‑time entropy monitoring for brain‑machine interfaces, and the use of entropy‑based metrics to track changes in neural coding during learning or disease.
