Website Optimization through Mining User Navigational Pattern

As the World Wide Web becomes ubiquitous and online businesses develop rapidly, websites grow increasingly complex. Analyzing web users’ navigational patterns within a website can provide useful information for server performance enhancement, website restructuring, and direct marketing in e-commerce. In this paper, an algorithm is proposed for mining such navigation patterns. The key insight is that users follow a certain path through a website to reach information of interest. If they do not find it, they backtrack and choose among alternate paths until they reach their destination. The point at which they backtrack is the Intermediate Reference Location. Identifying such intermediate locations and destinations from the navigation patterns is the main endeavor of the rest of this paper.

💡 Research Summary

The paper addresses the problem of sub‑optimal website navigation caused by a mismatch between designers’ expectations and users’ actual browsing behavior. It proposes a server‑log‑based mining approach that identifies two key concepts: Intermediate Reference Locations (IRLs) – points where users backtrack because the current page does not contain the information they seek – and Destination Locations (DLs) – the pages that finally satisfy the user’s intent.

The methodology proceeds as follows. First, raw web access logs are filtered to retain only HTML page requests, and sessions are reconstructed using IP address and timestamp ordering. Each session becomes an ordered array of pages (page_array). For each page, the algorithm checks whether the next page in the sequence is either the same page (indicating a backtrack) or a directly linked page (using the site’s hyperlink graph). If either condition holds, the page is a candidate for IRL or DL.
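A minimal Python sketch of the preprocessing and candidate check described above, offered as an illustration rather than the authors' code. It assumes log lines have already been parsed into hypothetical `(ip, timestamp, path)` tuples, treats a request as an HTML page when its path ends in `.html`/`.htm` or has no file extension, and represents the site's hyperlink graph as a dict from each page to the set of pages it links to. No session timeout is applied, since the summary mentions only IP and timestamp ordering; all function names are hypothetical.

```python
from collections import defaultdict

# Hypothetical pre-parsed log record: (ip, timestamp_in_seconds, requested_path).
LogRecord = tuple[str, int, str]


def is_html_request(path: str) -> bool:
    """Keep page requests only (assumed: .html/.htm or no extension); drop images, scripts, etc."""
    last = path.rsplit("/", 1)[-1]
    return last.endswith((".html", ".htm")) or "." not in last


def build_sessions(records: list[LogRecord]) -> dict[str, list[str]]:
    """Group HTML requests by IP and order them by timestamp, giving one page_array per IP."""
    by_ip: dict[str, list[tuple[int, str]]] = defaultdict(list)
    for ip, ts, path in records:
        if is_html_request(path):
            by_ip[ip].append((ts, path))
    return {ip: [path for _, path in sorted(hits)] for ip, hits in by_ip.items()}


def candidate_pages(page_array: list[str], links: dict[str, set[str]]) -> list[str]:
    """Return pages whose successor is the same page or a page they link to directly."""
    candidates = []
    for current, nxt in zip(page_array, page_array[1:]):
        if nxt == current or nxt in links.get(current, set()):
            candidates.append(current)
    return candidates
```

For example, the session `["/index.html", "/home.html", "/home.html", "/cart.html"]` with `links = {"/index.html": {"/home.html"}}` yields the candidates `/index.html` (its successor is a linked page) and `/home.html` (its successor is the same page).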

To distinguish IRL from DL, the authors introduce a time‑based threshold. For a given page P, they collect the dwell times t₁…tₙ of n users and the total time each user spent on the site before reaching P (T₁…Tₙ). A damping factor δ (0.15–0.85) reflecting page popularity is applied, and a weighted average

TP = δ·(t₁·T₁ + t₂·T₂ + … + tₙ·Tₙ) / (T₁ + T₂ + … + Tₙ)

is computed. If TP exceeds a pre‑defined threshold, the page is marked as a Destination Location; otherwise it is marked as an Intermediate Reference Location.
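The time-based test can be sketched in Python as follows. The function names are hypothetical, the δ range check simply mirrors the 0.15–0.85 range quoted above, and the threshold is left as a caller-supplied parameter because its value is not given here.

```python
def destination_score(dwell: list[float], time_before: list[float], delta: float) -> float:
    """Compute TP = delta * (sum of t_i * T_i) / (sum of T_i) for one page P.

    dwell[i]       -- t_i, time user i spent on P
    time_before[i] -- T_i, time user i spent on the site before reaching P
    delta          -- damping factor reflecting page popularity
    """
    if not dwell or len(dwell) != len(time_before):
        raise ValueError("need one (t_i, T_i) pair per user")
    if not 0.15 <= delta <= 0.85:
        raise ValueError("delta is expected to lie in [0.15, 0.85]")
    total_before = sum(time_before)
    if total_before <= 0:
        raise ValueError("the T_i must sum to a positive value")
    weighted = sum(t * T for t, T in zip(dwell, time_before))
    return delta * weighted / total_before


def classify_page(dwell: list[float], time_before: list[float], delta: float, threshold: float) -> str:
    """Label P a Destination Location if TP exceeds the threshold, else an IRL."""
    tp = destination_score(dwell, time_before, delta)
    return "DL" if tp > threshold else "IRL"
```

With two users whose dwell times are 30 s and 90 s and whose prior site times are 60 s and 120 s, δ = 0.5 gives TP = 0.5 · (30·60 + 90·120) / 180 = 35 s, so the page is a DL under a 20 s threshold and an IRL under a 40 s one. Weighting by Tᵢ lets users who searched longer before arriving count more heavily.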

Next, the algorithm builds a table in which each row contains a DL, its preceding Actual Location (AL), and the ordered list of IRLs that led to it (Ĩ₁, Ĩ₂, …). To prioritize IRLs, the authors assign decreasing probability weights Ω to successive IRL positions (Ω₁ = 1, Ω₂ = 0.75, Ω₃ = 0.5, Ω₄ = 0.25, etc.). For each IRL k they compute a “finding probability” βₖ by summing, over all records, the weight Ω of the position that k occupies in each record. The average Sₚ = (Σ βₖ) / (number of IRLs) serves as a benchmark. IRLs with βₖ ≥ Sₚ are deemed “recommended” and are added as navigation shortcuts to the corresponding DL; subsequent IRLs in the same record are then pruned.
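The weighting and pruning step might look like this in Python. Two details are assumptions rather than facts taken from the paper: weights beyond Ω₄ are taken to continue the 0.25 decrement and floor at 0, and "pruning subsequent IRLs" is read as keeping only the first recommended IRL in each row. The AL column is carried along but unused. Names and page paths are hypothetical.

```python
from collections import defaultdict

# One table row: (actual_location, destination_location, ordered IRLs).
Record = tuple[str, str, list[str]]


def omega(position: int) -> float:
    """Weight of the IRL at 1-based position: 1, 0.75, 0.5, 0.25, then 0 (assumed)."""
    return max(0.0, 1.0 - 0.25 * (position - 1))


def finding_probabilities(records: list[Record]) -> dict[str, float]:
    """beta_k: sum of the positional weights that IRL k receives across all rows."""
    beta: dict[str, float] = defaultdict(float)
    for _al, _dl, irls in records:
        for pos, irl in enumerate(irls, start=1):
            beta[irl] += omega(pos)
    return dict(beta)


def recommend_shortcuts(records: list[Record]) -> tuple[float, list[tuple[str, str]]]:
    """Return S_p and the (IRL, DL) shortcuts; IRLs after the first recommended one are pruned."""
    beta = finding_probabilities(records)
    if not beta:
        return 0.0, []
    s_p = sum(beta.values()) / len(beta)  # average beta over all IRLs
    shortcuts: list[tuple[str, str]] = []
    for _al, dl, irls in records:
        for irl in irls:
            if beta[irl] >= s_p:
                shortcuts.append((irl, dl))
                break  # prune the remaining IRLs of this row
    return s_p, shortcuts
```

For instance, rows with IRL lists `[/residential, /internet, /business]` and `[/internet, /residential]` (both ending at `/plans`) plus `[/faq, /internet]` (ending at `/support`) give β values of 1.75, 2.5, 0.5 and 1.0, so Sₚ = 1.4375; only `/residential` and `/internet` qualify, and the `/support` row skips past `/faq` to use `/internet` as its shortcut.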

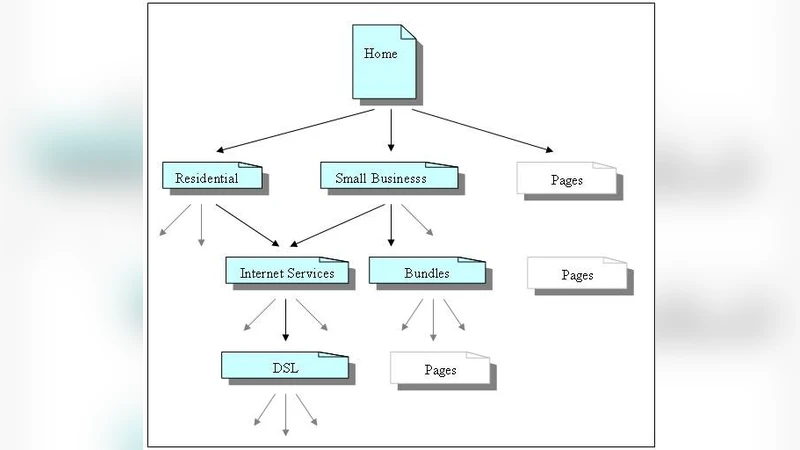

The authors evaluate the approach on a real‑world online ordering system of a major U.S. telecom provider, using one year of log data. After restructuring the site according to the mined IRLs (e.g., moving “Internet Services” under “Residential” and “Small Business” sections), they compare monthly hit counts to the target DL before and after optimization. The new navigation path consistently receives higher traffic, and overall authenticated user visits increase in the post‑optimization period. The paper reports that roughly 20 % of destination pages originally suffered from IRL/DL mismatches, and that correcting these mismatches yields measurable gains.

In the discussion, the authors note that their technique relies solely on server‑side logs, avoiding client‑side instrumentation and thus reducing deployment overhead. However, they acknowledge limitations: the method cannot differentiate a user’s explicit “Back” button press from a hyperlink click, the damping factor and time thresholds are set heuristically, and the experimental validation is confined to a single domain.

Future work is suggested in three directions: (1) incorporating page‑content similarity analysis to refine IRL/DL identification, (2) applying predictive analytics or machine‑learning models to forecast user actions from navigation patterns, and (3) extending the approach to multi‑device and multi‑domain environments.

Overall, the paper contributes a practical, log‑driven framework for detecting navigation bottlenecks and restructuring website topology, demonstrating tangible performance improvements in a production setting while highlighting avenues for further methodological enhancements.
