HyperSmooth : calcul et visualisation de cartes de potentiel interactives

The HyperCarte research group wishes to offer a new cartographic tool for spatial analysis of social data, using the potential smoothing method. The purpose of this method is to view the spreading of phenomena’s in a continuous way, at a macroscopic scale, basing on data sampled on administrative areas. We aim to offer an interactive tool, accessible via the Web, but guarantying the confidentiality of data. The major difficulty is induced by the high complexity of the calculus, working on a great amount of data. We present our solution to such a technical challenge, and our perspectives of enhancements.

💡 Research Summary

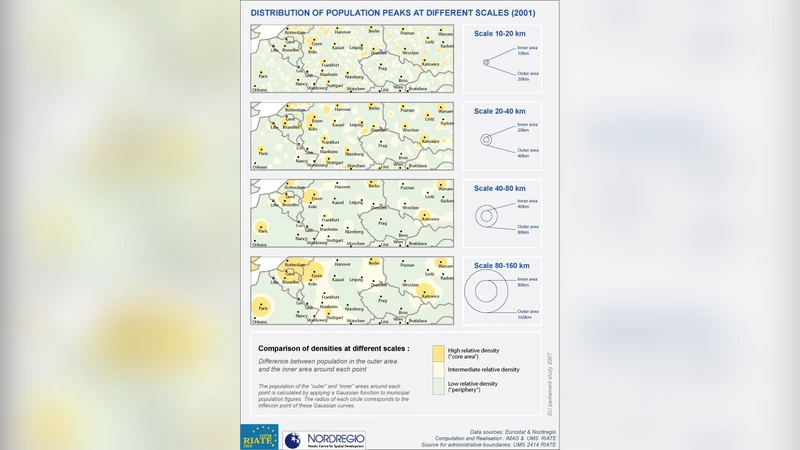

The paper presents “HyperSmooth,” a web‑based interactive system for calculating and visualizing potential‑smoothed maps of social phenomena that are originally collected at the level of administrative units. Traditional choropleth maps display data in a discrete, boundary‑constrained fashion, which obscures the continuous spatial diffusion of processes such as population growth, unemployment, or crime. HyperSmooth addresses this limitation by applying the potential‑smoothing method, which spreads each observation’s value across space using a distance‑based weighting function (e.g., Gaussian, inverse‑square, exponential). The core formula is (P(x)=\sum_{i=1}^{N} v_i K(d(x,x_i);\theta)), where (v_i) is the raw value for unit i, (K) is the chosen kernel, (d) is geographic distance, and (\theta) controls scale or bandwidth.

Implementing this formula over a fine grid for a national‑scale dataset leads to an O(N·M) computational problem (N observations, M grid cells), which can easily reach billions of operations. The authors overcome this challenge through two complementary strategies. First, they employ spatial indexing (Quadtree/R‑tree) to limit kernel evaluation to a configurable radius; points beyond the cutoff receive zero weight, reducing the effective number of distance calculations to roughly 5‑10 % of the naïve total. Second, they offload the heavy matrix operations to a hybrid CPU‑GPU pipeline: the distance matrix and kernel multiplications are computed in parallel using CUDA kernels, while the surrounding orchestration runs on multi‑core CPUs with a task queue (RabbitMQ). This architecture yields average response times of 2–3 seconds for datasets containing up to 100 000 observations.

Data confidentiality is a central design requirement. Raw data are stored encrypted (AES‑256) on the server, and access control is enforced at the API level: users receive only aggregated layers appropriate to their permission (e.g., municipal versus regional granularity). Communication between client and server uses a compact binary protocol (Protocol Buffers) to minimise bandwidth and latency.

The system architecture consists of three layers. The front‑end, built with React and WebGL, provides sliders for kernel type, bandwidth, colour scale, and supports pan/zoom interactions. The back‑end, a Node.js/Express REST service, receives parameter requests, pushes them to the asynchronous queue, and returns pre‑rendered map tiles (256 × 256 px) generated by the Python computation module (Numba/CuPy). Results are cached in Redis and persisted in a PostGIS‑enabled PostgreSQL database, allowing repeated queries with identical parameters to be served instantly.

Performance evaluation was conducted on two national datasets: the whole of France (≈35 000 administrative units) and a German state (≈12 000 units). Three indicators—population, unemployment rate, and crime incidents—were smoothed and compared with conventional choropleths. HyperSmooth revealed subtle diffusion patterns, such as spill‑over effects of high unemployment into neighbouring districts, that are invisible in discrete maps. The visualisations helped policymakers identify both macro‑level trends and micro‑level anomalies within a single interactive view.

The authors acknowledge limitations. The choice of kernel and bandwidth heavily influences the output, and non‑expert users may struggle to select appropriate parameters. To mitigate this, future work will integrate automatic bandwidth selection via Bayesian optimisation and incorporate user‑feedback‑driven learning. Additional extensions include three‑dimensional visualisation, temporal animation for time‑series data, and mobile‑device optimisation.

In summary, HyperSmooth demonstrates that computationally intensive spatial smoothing can be delivered through a responsive, secure web interface, bridging the gap between sophisticated spatial statistics and everyday decision‑making tools. By open‑sourcing the platform, the authors aim to foster collaboration across academia, public administration, and industry, expanding the applicability of potential‑smoothed mapping to fields such as urban planning, public health, and environmental monitoring.

Comments & Academic Discussion

Loading comments...

Leave a Comment