Higher Accuracy for Bayesian and Frequentist Inference: Large Sample Theory for Small Sample Likelihood

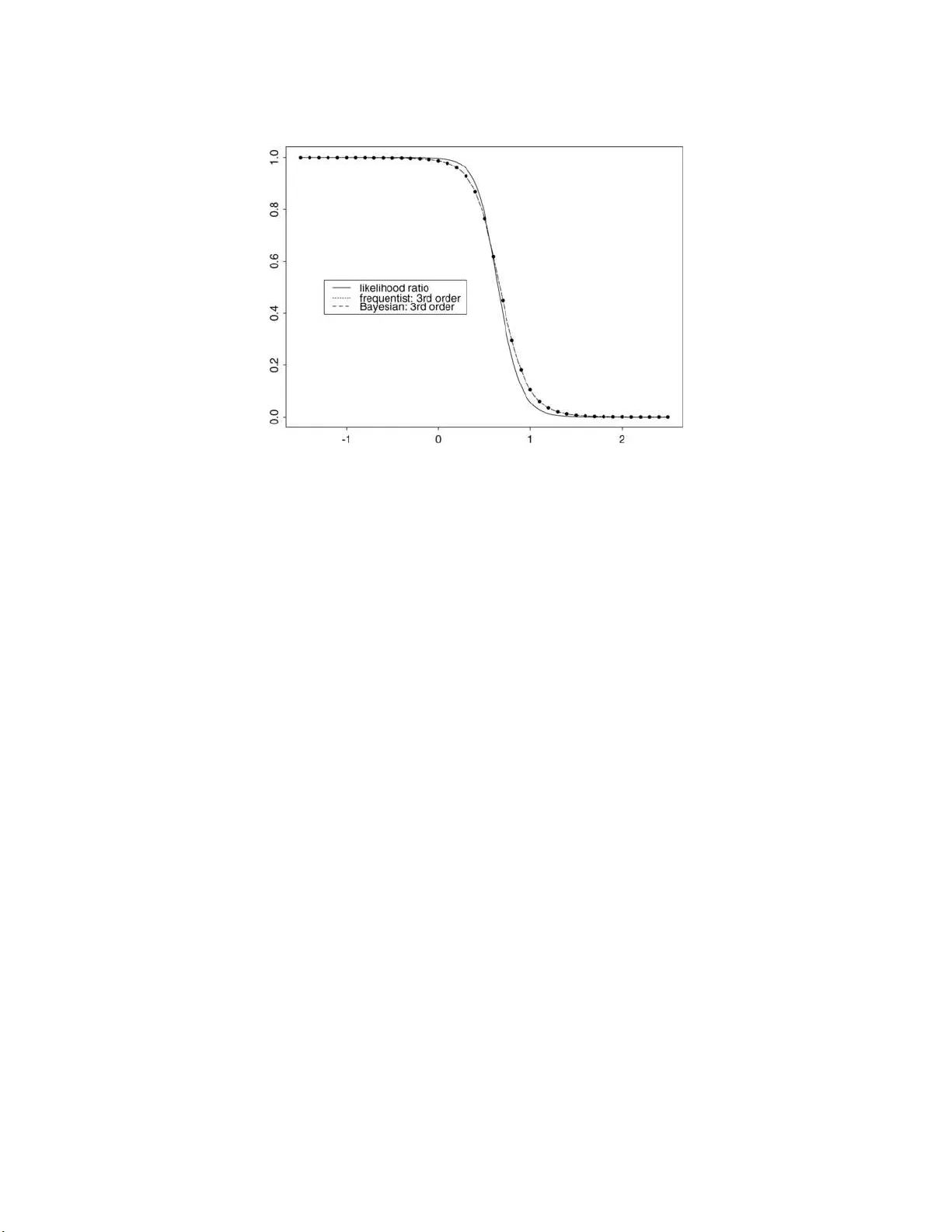

Recent likelihood theory produces $p$-values that have remarkable accuracy and wide applicability. The calculations use familiar tools such as maximum likelihood values (MLEs), observed information and parameter rescaling. The usual evaluation of suc…

Authors: M. Bedard, D. A. S. Fraser, A. Wong