Information and fitness

The growth rate of organisms depends both on external conditions and on internal states, such as the expression levels of various genes. We show that to achieve a criterion mean growth rate over an ensemble of conditions, the internal variables must carry a minimum number of bits of information about those conditions. Evolutionary competition thus can select for cellular mechanisms that are more efficient in an abstract, information theoretic sense. Estimates based on recent experiments suggest that the minimum information required for reasonable growth rates is close to the maximum information that can be conveyed through biologically realistic regulatory mechanisms. These ideas are applicable most directly to unicellular organisms, but there are analogies to problems in higher organisms, and we suggest new experiments for both cases.

💡 Research Summary

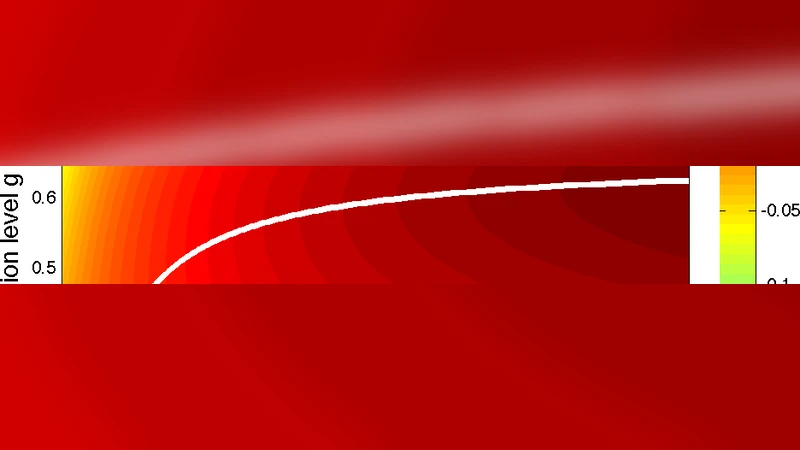

The paper “Information and fitness” establishes a quantitative link between the amount of environmental information encoded in a cell’s internal state and the organism’s growth rate, which serves as a proxy for fitness. The authors begin by modeling the instantaneous growth rate μ as a function of external conditions E (e.g., nutrient concentrations, temperature, pH) and internal variables X (e.g., expression levels of key regulatory genes, concentrations of metabolic enzymes). By averaging μ over an ensemble of fluctuating environments, they derive a lower bound on the mutual information I(E;X) that must be present for the mean growth rate to exceed a prescribed threshold μ*. This bound, expressed as I(E;X) ≥ I_min, depends on three measurable quantities: the variance of the environmental distribution σ_E², the sensitivity of the growth‑rate function (the slope β), and the tolerated growth‑rate deficit Δμ = μ_opt – μ*. In essence, the more variable the environment or the steeper the growth‑rate response, the larger the information requirement.
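The qualitative dependence described above can be illustrated with a toy calculation. This is not the paper's formula, which the summary does not reproduce. The sketch assumes a Gaussian environment with variance `sigma_e2` and a growth rate that falls off quadratically with the mismatch between internal state and environment, μ = μ_opt − (κ/2)(x − e)². The curvature `kappa` is a hypothetical stand-in for the sensitivity parameter. Under those assumptions the tolerated deficit Δμ caps the mean squared mismatch at 2Δμ/κ, and the standard Gaussian rate-distortion function gives the minimum number of bits.

```python
import math


def min_bits_gaussian_toy(sigma_e2: float, kappa: float, delta_mu: float) -> float:
    """Toy lower bound (bits) on I(E;X) for a Gaussian environment.

    Assumptions (illustrative, not taken from the paper):
      - E is Gaussian with variance sigma_e2
      - growth rate is mu_opt - (kappa / 2) * (x - e)**2
      - the mean growth-rate deficit may not exceed delta_mu
    """
    if sigma_e2 <= 0 or kappa <= 0 or delta_mu <= 0:
        raise ValueError("all arguments must be positive")
    # Largest mean squared mismatch compatible with the tolerated deficit.
    allowed_mse = 2.0 * delta_mu / kappa
    # Gaussian rate-distortion function: R(D) = max(0, 0.5 * log2(sigma^2 / D)).
    return max(0.0, 0.5 * math.log2(sigma_e2 / allowed_mse))


if __name__ == "__main__":
    # Tightening the tolerated deficit raises the information requirement.
    for delta_mu in (0.5, 0.1, 0.02):
        bits = min_bits_gaussian_toy(sigma_e2=1.0, kappa=1.0, delta_mu=delta_mu)
        print(f"delta_mu={delta_mu}: at least {bits:.3f} bits")
```

In this toy model the requirement grows with the environmental variance and with the curvature, and shrinks as a larger deficit is tolerated, which matches the trend stated in the summary.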

To test the theoretical prediction, the authors re-analyze published single-cell expression datasets from Escherichia coli and Saccharomyces cerevisiae grown under a range of controlled conditions. Using Bayesian inference, they reconstruct the joint probability distribution P(E,X) and compute the empirical mutual information between the measured environmental parameters and the observed transcriptional states. The results show that E. coli typically carries about 1.3 bits of information about its environment, while yeast carries roughly 1.8 bits. These values lie close to the theoretical minima (≈1–2 bits) calculated for the respective experimental setups, suggesting that natural selection has pushed the regulatory architecture of these unicellular organisms close to the information-theoretic optimum.
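Once a joint distribution P(E,X) over discretized environments and internal states is available, the mutual information follows directly from its definition. The sketch below is a plain plug-in calculation, not the Bayesian reconstruction mentioned in the summary. The function name `mutual_information_bits` and the example tables are hypothetical. Plug-in estimates from finite samples are biased upward, so real data would need a bias correction.

```python
import math


def mutual_information_bits(joint):
    """Mutual information I(E;X) in bits from a joint table.

    joint[i][j] is the probability (or raw count) of environment state i
    occurring together with internal state j; the table is normalized here.
    """
    total = sum(sum(row) for row in joint)
    if total <= 0:
        raise ValueError("joint table must have positive total mass")
    p = [[value / total for value in row] for row in joint]
    p_e = [sum(row) for row in p]        # marginal over environments
    p_x = [sum(col) for col in zip(*p)]  # marginal over internal states
    info = 0.0
    for i, row in enumerate(p):
        for j, p_ij in enumerate(row):
            if p_ij > 0:  # terms with zero probability contribute nothing
                info += p_ij * math.log2(p_ij / (p_e[i] * p_x[j]))
    return info


if __name__ == "__main__":
    # Hypothetical two-environment, two-state example with imperfect tracking.
    noisy = [[40, 10],
             [10, 40]]
    print(f"I(E;X) = {mutual_information_bits(noisy):.3f} bits")
```

A table in which the internal state tracks the environment perfectly over two equally likely conditions yields exactly 1 bit, and a table with independent rows and columns yields 0 bits.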

The authors also discuss the energetic and material costs associated with information processing. Encoding additional bits requires more transcription factors, larger promoter repertoires, and more elaborate signaling cascades, all of which consume ATP, amino acids, and cellular space. Consequently, evolution must balance the fitness gains from better environmental prediction against the metabolic burden of a more complex regulatory network. The paper quantifies this trade‑off by examining how promoter affinity, transcription‑factor binding specificity, and feedback loop architecture affect the channel capacity of the regulatory system.
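The summary does not give the capacity calculation itself. As a generic illustration of what "channel capacity" means here, the sketch below computes the capacity of a discrete memoryless channel with the Blahut-Arimoto algorithm. The channel matrix is a hypothetical stand-in for a regulatory element that maps an input signal level to a noisy output expression level; none of the numbers come from the paper.

```python
import math


def channel_capacity_bits(channel, max_iters=1000, tol=1e-12):
    """Capacity (bits) of a discrete memoryless channel via Blahut-Arimoto.

    channel[i][j] = P(output j | input i); each row must sum to 1.
    Returns the lower bound on capacity reached when the iteration stops.
    """
    n_in = len(channel)
    n_out = len(channel[0])
    p = [1.0 / n_in] * n_in  # start from a uniform input distribution
    lower = 0.0
    for _ in range(max_iters):
        # Output distribution induced by the current input distribution.
        q = [sum(p[i] * channel[i][j] for i in range(n_in)) for j in range(n_out)]
        # c[i] = exp( KL divergence between row i and q ), in nats.
        c = []
        for i in range(n_in):
            kl = sum(w * math.log(w / q[j]) for j, w in enumerate(channel[i]) if w > 0)
            c.append(math.exp(kl))
        z = sum(p[i] * c[i] for i in range(n_in))
        lower = math.log(z)       # lower bound on capacity (nats)
        upper = math.log(max(c))  # upper bound on capacity (nats)
        p = [p[i] * c[i] / z for i in range(n_in)]  # reweight the inputs
        if upper - lower < tol:
            break
    return lower / math.log(2)


if __name__ == "__main__":
    # Hypothetical two-state element that misreports its input 10% of the time.
    flip = 0.1
    noisy_switch = [[1 - flip, flip],
                    [flip, 1 - flip]]
    print(f"capacity = {channel_capacity_bits(noisy_switch):.4f} bits")
```

For this symmetric example the result agrees with the closed form 1 − H(0.1), where H is the binary entropy; a noisier element (larger `flip`) transmits fewer bits.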

Finally, the authors extrapolate their framework to multicellular contexts. In tissues, cells sense local gradients of oxygen, growth factors, and mechanical stress, and they adjust proliferation, differentiation, or apoptosis accordingly. The same information-fitness bound should apply, albeit with additional layers of intercellular communication and spatial averaging. The authors propose concrete experimental strategies, such as combining spatially resolved single-cell RNA-seq with live-cell imaging of signaling dynamics in organoids or tumor models, to measure I(E;X) in situ and to test whether higher organisms also operate near the theoretical information limit.

In summary, the study provides a theoretical foundation and empirical estimates suggesting that the minimal amount of environmental information required for a given growth performance is close to the maximal information that real biological regulatory networks can transmit. This convergence implies that evolutionary pressures may have shaped cellular information processing to be nearly optimal in an abstract, Shannon-theoretic sense. The work opens new avenues for synthetic biology (designing circuits that achieve a desired fitness with minimal informational overhead), for evolutionary biology (quantifying the information cost of adaptation), and for biomedical research (probing information constraints in cancer cell fitness and tissue regeneration).
