Experiments with a Convex Polyhedral Analysis Tool for Logic Programs

Convex polyhedral abstractions of logic programs have been found very useful in deriving numeric relationships between program arguments in order to prove program properties, and in other areas such as termination and complexity analysis. We present a tool for constructing polyhedral analyses of (constraint) logic programs. The aim of the tool is to make available, with a convenient interface, state-of-the-art techniques for polyhedral analysis such as delayed widening, narrowing, “widening up-to”, and enhanced automatic selection of widening points. The tool is accessible on the web, permits user programs to be uploaded and analysed, and is integrated with related program transformations such as size abstractions and query-answer transformation. We then report some experiments using the tool, showing how it can be conveniently used to analyse transition systems arising from models of embedded systems, as well as an emulator for a PIC microcontroller, which is used, for example, in wearable computing systems. We discuss issues including scalability, tradeoffs between precision and computation time, and other program transformations that can enhance the results of analysis.

💡 Research Summary

The paper presents a web‑accessible tool that performs convex polyhedral abstract interpretation for (constraint) logic programs, integrating a suite of state‑of‑the‑art techniques to improve both precision and scalability. The authors begin by reviewing the role of convex polyhedral abstractions in deriving linear relationships among program arguments, which are essential for proving safety properties, termination, and complexity bounds. Traditional polyhedral analyses rely on a widening operator to guarantee convergence of the fix‑point computation, but naïve widening often leads to excessive over‑approximation, while manual selection of widening points is error‑prone and limits usability.

To address these shortcomings, the tool implements several advanced strategies. “Delayed widening” postpones the application of widening for a configurable number of iterations, allowing the analysis to accumulate more precise constraints before any generalisation occurs. “Widening up‑to” supplies the widening operator with a set of candidate bounding constraints: after the standard widening is applied, every constraint in that set that is satisfied by both the previous and the current abstract state is added back to the result, so the analysis does not discard a bound it has not yet exceeded. Together, these mechanisms reduce the loss of precision without sacrificing termination guarantees. After widening, an automatic narrowing phase refines the over‑approximated polyhedra, iteratively tightening the abstract state until a stable fix‑point is reached or a resource limit is hit.
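The interplay of widening, delay, up‑to constraints, and narrowing can be illustrated on a much simpler domain than polyhedra. The following is a minimal sketch, not the tool's implementation: it uses one‑variable intervals as a stand‑in for polyhedra, analyses the hypothetical loop `x = 0; while x < 100: x = x + 1`, and follows the common formulation of widening up‑to in which constraints from a given set are added back after widening when both arguments satisfy them. All function and parameter names (`analyse`, `delay`, `up_to`, `narrowing_passes`) are assumptions made for illustration.

```python
import math

# An interval (lo, hi) stands in for a polyhedron over one variable.
# None represents the empty (bottom) element.

def join(a, b):
    if a is None:
        return b
    if b is None:
        return a
    return (min(a[0], b[0]), max(a[1], b[1]))

def meet(a, b):
    if a is None or b is None:
        return None
    lo, hi = max(a[0], b[0]), min(a[1], b[1])
    return (lo, hi) if lo <= hi else None

def widen(old, new, up_to=()):
    """Standard interval widening, then re-add upper bounds from `up_to`
    that both arguments satisfy (interval analogue of widening up-to)."""
    if old is None:
        return new
    lo = old[0] if new[0] >= old[0] else -math.inf
    hi = old[1] if new[1] <= old[1] else math.inf
    result = (lo, hi)
    for t in up_to:  # each t encodes the constraint x <= t
        if old[1] <= t and new[1] <= t:
            result = meet(result, (-math.inf, t))
    return result

def step(x):
    """Abstract semantics at the head of: x = 0; while x < 100: x = x + 1."""
    body = meet(x, (-math.inf, 99))  # guard x < 100 over the integers
    after = None if body is None else (body[0] + 1, body[1] + 1)
    return join((0, 0), after)

def analyse(delay=0, up_to=(), narrowing_passes=0):
    x, i = None, 0
    while True:
        new = join(x, step(x))
        if new == x:  # post-fixpoint reached
            break
        # Delayed widening: plain join for the first `delay` iterations.
        x = new if i < delay else widen(x, new, up_to)
        i += 1
    for _ in range(narrowing_passes):
        x = meet(x, step(x))  # descending iteration from a post-fixpoint
    return x

plain = analyse()                        # widening only
narrowed = analyse(narrowing_passes=1)   # widening, then one narrowing pass
bounded = analyse(up_to=(100,))          # widening up to x <= 100
```

In this sketch, plain widening loses the upper bound entirely, while either a single narrowing pass or the up‑to constraint `x <= 100` recovers it. Delaying the widening alone does not help on this particular loop, because the bound is reached only after 100 iterations; delay tends to pay off when a relationship stabilises after a few steps.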

A key contribution is the automatic selection of widening points. The tool analyses the program’s control‑flow graph (CFG), identifies strongly connected components (SCCs) that correspond to loops, and evaluates the growth patterns of numeric variables within each SCC. Based on this analysis it marks a minimal set of program points where widening should be introduced, thereby limiting the number of costly widening operations while preserving convergence.
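One simple way to obtain a set of widening points from the SCC structure is sketched below. It is an illustrative assumption rather than the tool's actual heuristic: it computes SCCs with Tarjan's algorithm and then marks the targets of depth‑first back edges inside each component. Every cycle contains at least one such target, so widening there is enough for convergence, although the set is not guaranteed to be minimal and the growth‑pattern analysis mentioned above is not modelled. The graph `example_graph` and its node names are hypothetical.

```python
def sccs(graph):
    """Tarjan's algorithm; `graph` maps each node to a list of successors."""
    index, low, on_stack, stack, out = {}, {}, set(), [], []

    def visit(v):
        index[v] = low[v] = len(index)
        stack.append(v)
        on_stack.add(v)
        for w in graph.get(v, ()):
            if w not in index:
                visit(w)
                low[v] = min(low[v], low[w])
            elif w in on_stack:
                low[v] = min(low[v], index[w])
        if low[v] == index[v]:  # v is the root of a component
            comp = set()
            while True:
                w = stack.pop()
                on_stack.discard(w)
                comp.add(w)
                if w == v:
                    break
            out.append(comp)

    for v in graph:
        if v not in index:
            visit(v)
    return out

def widening_points(graph):
    """Targets of DFS back edges within each SCC; these cut every cycle."""
    points = set()
    for comp in sccs(graph):
        state = {}  # node -> "active" (on the DFS path) or "done"

        def dfs(v):
            state[v] = "active"
            for w in graph.get(v, ()):
                if w not in comp:
                    continue
                if state.get(w) == "active":
                    points.add(w)  # back edge v -> w closes a cycle at w
                elif w not in state:
                    dfs(w)
            state[v] = "done"

        for v in graph:  # start from the earliest-listed node of the SCC
            if v in comp and v not in state:
                dfs(v)
    return points

# Hypothetical dependency graph: an outer loop containing a self-recursive node.
example_graph = {
    "main": ["loop"],
    "loop": ["body", "exit"],
    "body": ["inner"],
    "inner": ["inner", "loop"],
    "exit": [],
}
points = widening_points(example_graph)
```

For this graph the sketch selects the head of the outer loop and the self‑recursive node, and leaves `main`, `body`, and `exit` without widening.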

The system is tightly coupled with two program transformations that further enhance analysis results. Size abstraction maps the size of data structures (e.g., list length, tree depth) to integer variables, enabling the polyhedral engine to reason about resource consumption and termination. The query‑answer transformation performs a goal‑directed backward propagation, focusing the analysis on the parts of the program that are relevant to a particular query; this reduces the abstract state space and improves both speed and precision.
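As a small illustration of size abstraction, consider the textbook `append/3` predicate under the list‑length norm. The sketch below is an assumption‑laden toy, not the tool's transformation: terms are encoded as nested Python tuples, the abstracted clauses are written out by hand in the comments, and a bounded bottom‑up enumeration stands in for the polyhedral fix‑point computation. The helper names (`list_length`, `from_list`, `append_abstract`) are hypothetical.

```python
def from_list(xs):
    """Encode a Python list as a Prolog-style term built from '.'/2 and '[]'."""
    term = "[]"
    for x in reversed(xs):
        term = (".", x, term)
    return term

def list_length(term):
    """List-length norm: number of cons cells along the spine of the term."""
    n = 0
    while isinstance(term, tuple) and term[0] == ".":
        n += 1
        term = term[2]
    return n

# Concrete program:
#   append([], Ys, Ys).
#   append([X|Xs], Ys, [X|Zs]) :- append(Xs, Ys, Zs).
#
# Size abstraction under the list-length norm (arguments are now integers):
#   append(0, Y, Y) :- Y >= 0.
#   append(X1, Y, Z1) :- X1 = X + 1, Z1 = Z + 1, append(X, Y, Z).

def append_abstract(max_depth, y_sizes):
    """Enumerate abstract facts append(X, Y, Z) derivable in max_depth steps."""
    facts = {(0, y, y) for y in y_sizes}                     # base clause
    for _ in range(max_depth):
        facts |= {(x + 1, y, z + 1) for (x, y, z) in facts}  # recursive clause
    return facts

facts = append_abstract(max_depth=5, y_sizes=range(4))
invariant_holds = all(z == x + y for (x, y, z) in facts)
```

Every enumerated fact satisfies `Z = X + Y`, which is the kind of linear relationship a polyhedral analysis of the abstracted program is expected to derive for all sizes rather than for a bounded sample.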

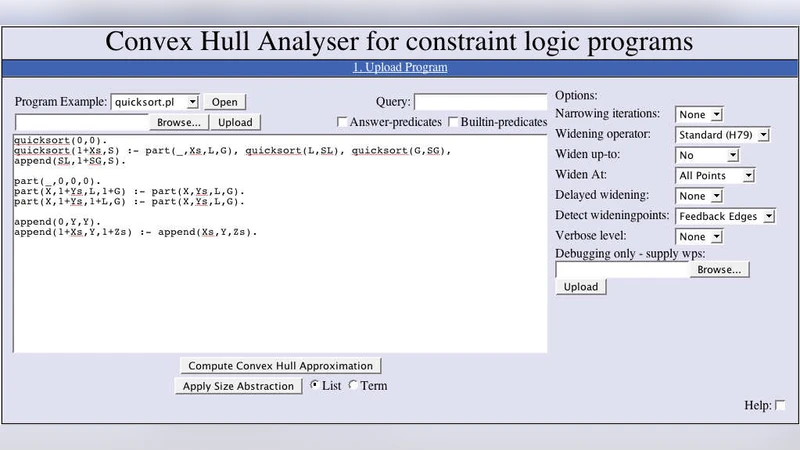

Implementation-wise, the tool is built on a web front‑end that accepts user‑uploaded logic programs and lets the analyst configure options such as the number of delayed‑widening iterations, the up‑to bound, and the maximum number of narrowing passes. The back‑end uses a CLP parser to translate the source into an intermediate representation, constructs the CFG, and then invokes the Parma Polyhedra Library for all polyhedral operations. Results are presented as a set of linear inequalities, variable ranges, and visualisations of the CFG annotated with abstract states.

The authors evaluate the tool on two representative case studies. The first involves transition‑system models commonly used in embedded‑system verification. By encoding states and transition guards as linear constraints, the analysis automatically proves safety properties such as “an error state is unreachable”. The second case study analyses an emulator for a PIC microcontroller, a platform frequently employed in wearable computing. Registers, memory addresses, and instruction effects are modelled as integer variables; the polyhedral analysis detects potential overflow and illegal address accesses. In both experiments the tool incurred a modest precision loss (approximately 5–10% compared with a baseline that uses unrestricted widening) in exchange for a 30–40% reduction in analysis time. Moreover, scalability tests showed that memory consumption grows roughly linearly with the number of variables, even when programs contain several hundred numeric variables.

The paper also discusses trade‑offs in depth. When many widening points are selected, the cost of each widening operation can dominate the total runtime, leading to a sharp increase in execution time. To mitigate this, the tool offers a “widening‑point limit” option and a dynamic cost model that predicts the most beneficial moments to apply widening. Users can also tune the up‑to bound to balance precision against performance according to the needs of their verification task.

In conclusion, the presented tool makes sophisticated polyhedral analysis techniques readily available through an intuitive web interface, automatically handles the delicate choice of widening points, and integrates complementary program transformations to improve both precision and efficiency. The authors suggest future work on extending the framework to handle non‑linear constraints via polyhedral over‑approximations, exploring distributed or cloud‑based execution for massive code bases, and employing machine‑learning methods to predict optimal widening strategies.
