The Earth System Grid: Supporting the Next Generation of Climate Modeling Research

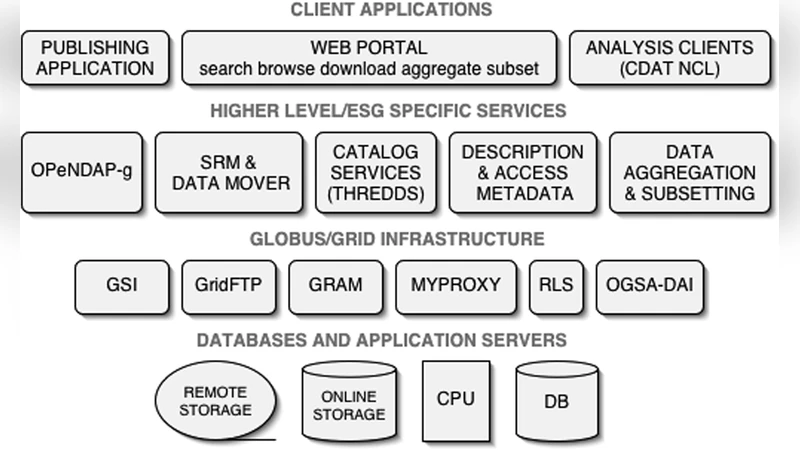

Understanding the earth’s climate system and how it might be changing is a preeminent scientific challenge. Global climate models are used to simulate past, present, and future climates, and experiments are executed continuously on an array of distributed supercomputers. The resulting data archive, spread over several sites, currently contains upwards of 100 TB of simulation data and is growing rapidly. Looking toward mid-decade and beyond, we must anticipate and prepare for distributed climate research data holdings of many petabytes. The Earth System Grid (ESG) is a collaborative interdisciplinary project aimed at addressing the challenge of enabling management, discovery, access, and analysis of these critically important datasets in a distributed and heterogeneous computational environment. The problem is fundamentally a Grid problem. Building upon the Globus toolkit and a variety of other technologies, ESG is developing an environment that addresses authentication, authorization for data access, large-scale data transport and management, services and abstractions for high-performance remote data access, mechanisms for scalable data replication, cataloging with rich semantic and syntactic information, data discovery, distributed monitoring, and Web-based portals for using the system.

💡 Research Summary

The Earth System Grid (ESG) paper presents a comprehensive solution to the growing challenge of managing, discovering, accessing, and analyzing the massive climate-model simulation datasets produced by distributed supercomputing facilities. The current archive holds more than 100 TB of simulation data in NetCDF files stored across multiple institutions, and sharing it is cumbersome because of fragmented authentication, heterogeneous storage systems, and limited remote-access capabilities. ESG treats this as a classic Grid problem and builds a layered, service-oriented architecture on top of the Globus Toolkit, complemented by a suite of open-source components.

At the security layer, ESG adopts the Globus Grid Security Infrastructure (GSI) for X.509-based mutual authentication and integrates the Virtual Organization Membership Service (VOMS) to enforce fine-grained, organization-specific authorization policies. This enables collaborative data use while preserving each site’s control over its assets. For high-performance data movement, ESG combines GridFTP with the Globus Reliable File Transfer (RFT) service, providing parallel multi-stream transfers, automatic retry, and optional compression or encryption. The transport subsystem is designed to sustain terabyte-scale transfers across wide-area networks while making efficient use of the available bandwidth.
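Parallel streams and automatic retry are implemented inside GridFTP and RFT themselves; the sketch below only illustrates the general idea, under stated assumptions. It splits a file into contiguous byte ranges (one per stream) and retries each range independently with exponential backoff. The helper names `split_ranges`, `transfer_with_retry`, and the simulated `flaky_send` are hypothetical and are not part of the Globus Toolkit API.

```python
import time
from typing import Callable, List, Tuple

ByteRange = Tuple[int, int]  # (offset, length) in bytes


def split_ranges(file_size: int, streams: int) -> List[ByteRange]:
    """Divide a file into contiguous byte ranges, one per parallel stream."""
    if file_size < 0 or streams < 1:
        raise ValueError("file_size must be >= 0 and streams >= 1")
    base, extra = divmod(file_size, streams)
    ranges: List[ByteRange] = []
    offset = 0
    for i in range(streams):
        # The first `extra` streams carry one additional byte.
        length = base + (1 if i < extra else 0)
        if length:  # skip empty ranges when streams > file_size
            ranges.append((offset, length))
        offset += length
    return ranges


def transfer_with_retry(send: Callable[[ByteRange], None], rng: ByteRange,
                        max_attempts: int = 3, backoff_s: float = 0.0) -> int:
    """Call send(rng) until it succeeds and return the number of attempts used.

    Re-raises the last OSError once max_attempts is exhausted.
    """
    if max_attempts < 1:
        raise ValueError("max_attempts must be >= 1")
    for attempt in range(1, max_attempts + 1):
        try:
            send(rng)
            return attempt
        except OSError:
            if attempt == max_attempts:
                raise
            time.sleep(backoff_s * 2 ** (attempt - 1))  # exponential backoff
    raise RuntimeError("unreachable")


if __name__ == "__main__":
    # Hypothetical failure pattern: the first range drops its connection once.
    remaining_failures = {(0, 4): 1}

    def flaky_send(rng: ByteRange) -> None:
        if remaining_failures.get(rng, 0) > 0:
            remaining_failures[rng] -= 1
            raise OSError("simulated dropped connection")

    for rng in split_ranges(10, 3):
        print(rng, "attempts:", transfer_with_retry(flaky_send, rng))
```

Running the demo prints `(0, 4) attempts: 2`, then `(4, 3) attempts: 1` and `(7, 3) attempts: 1`: only the failed range is resent, which is the practical benefit of retrying per stream rather than restarting the whole file.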

Remote data access is realized through OPeNDAP and NetCDF subsetting services exposed as web APIs. Users can request only the variables, time steps, or spatial regions they need, which avoids downloading entire multi-gigabyte files and substantially reduces I/O bottlenecks in downstream analysis pipelines. ESG’s replication framework, the Replica Management Service (RMS), automatically creates and distributes data replicas based on policies that consider storage capacity, network latency, and access frequency. Local cache nodes hold frequently accessed (“hot”) replicas to further lower latency, and the system continuously rebalances replicas in response to real-time monitoring data.
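To make the subsetting idea concrete, the sketch below converts coordinate bounds (a time window, a latitude band, a longitude band) into index ranges and formats them in DAP2 hyperslab notation, `var[start:stride:stop]` with an inclusive stop. The variable name `tas`, the grid spacing, and the (time, lat, lon) dimension order are assumptions chosen for illustration; `index_range` and `dap2_constraint` are hypothetical helpers, not ESG functions.

```python
from bisect import bisect_left, bisect_right
from typing import Sequence, Tuple

IndexRange = Tuple[int, int]  # inclusive (start, stop) indices


def index_range(coords: Sequence[float], lo: float, hi: float) -> IndexRange:
    """Return the inclusive index range of ascending `coords` lying within [lo, hi]."""
    start = bisect_left(coords, lo)       # first index with coords[i] >= lo
    stop = bisect_right(coords, hi) - 1   # last index with coords[i] <= hi
    if start > stop:
        raise ValueError(f"no coordinate values fall within [{lo}, {hi}]")
    return start, stop


def dap2_constraint(var: str, *ranges: IndexRange, stride: int = 1) -> str:
    """Build a DAP2 hyperslab constraint, one [start:stride:stop] per dimension."""
    return var + "".join(f"[{start}:{stride}:{stop}]" for start, stop in ranges)


if __name__ == "__main__":
    # Hypothetical axes: 120 monthly time steps and a 2.5-degree lat/lon grid.
    months = list(range(120))
    lats = [-90.0 + 2.5 * i for i in range(73)]
    lons = [2.5 * i for i in range(144)]
    request = dap2_constraint(
        "tas",                               # hypothetical variable name
        index_range(months, 0, 11),          # first year only
        index_range(lats, 30.0, 60.0),
        index_range(lons, 230.0, 300.0),
    )
    print(request)  # tas[0:1:11][48:1:60][92:1:120]
```

The server then returns only the selected hyperslab, so the bytes moved scale with the requested region and period rather than with the size of the whole file.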

Metadata management is a cornerstone of ESG. A rich schema captures both technical attributes (format, size, location) and scientific descriptors (model version, experiment design, variable definitions, simulation parameters). Using the OAI-PMH protocol, a central metadata registry harvests the records that each participating site exposes, enabling searches by keyword, spatiotemporal extent, and simulation configuration. The search engine feeds results into a web portal that visualizes dataset footprints on a map and also exposes RESTful endpoints for programmatic access.
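A search over such a schema combines simple predicates: keyword match, exact match on experiment, bounding-box overlap, and time-interval overlap. The sketch below assumes a hypothetical `DatasetRecord` layout; ESG’s actual schema and query engine are not reproduced here, and the records in the demo are made up.

```python
from dataclasses import dataclass
from datetime import date
from typing import Iterable, List, Optional, Tuple

BBox = Tuple[float, float, float, float]  # (west, south, east, north) in degrees


@dataclass(frozen=True)
class DatasetRecord:
    """Hypothetical record combining technical and scientific metadata."""
    dataset_id: str
    model: str
    experiment: str
    variables: Tuple[str, ...]
    bbox: BBox
    start: date
    end: date
    size_bytes: int


def bbox_overlaps(a: BBox, b: BBox) -> bool:
    # Axis-aligned overlap test; boxes crossing the antimeridian are not handled.
    return a[0] <= b[2] and b[0] <= a[2] and a[1] <= b[3] and b[1] <= a[3]


def search(records: Iterable[DatasetRecord],
           keyword: Optional[str] = None,
           experiment: Optional[str] = None,
           bbox: Optional[BBox] = None,
           period: Optional[Tuple[date, date]] = None) -> List[str]:
    """Return ids of records that satisfy every criterion that was supplied."""
    hits = []
    for r in records:
        # Case-insensitive whole-token keyword match over model, experiment, variables.
        tokens = " ".join((r.model, r.experiment) + r.variables).lower().split()
        if keyword is not None and keyword.lower() not in tokens:
            continue
        if experiment is not None and r.experiment != experiment:
            continue
        if bbox is not None and not bbox_overlaps(r.bbox, bbox):
            continue
        # Two closed intervals overlap when each starts before the other ends.
        if period is not None and not (r.start <= period[1] and period[0] <= r.end):
            continue
        hits.append(r.dataset_id)
    return hits


if __name__ == "__main__":
    catalog = [  # made-up records for illustration only
        DatasetRecord("modelA.hist.tas", "modelA", "historical", ("tas", "pr"),
                      (0.0, -90.0, 360.0, 90.0), date(1900, 1, 1), date(1999, 12, 31), 12 * 2**30),
        DatasetRecord("modelB.future.sst", "modelB", "future", ("sst",),
                      (0.0, -90.0, 360.0, 90.0), date(2000, 1, 1), date(2099, 12, 31), 30 * 2**30),
    ]
    print(search(catalog, keyword="tas", period=(date(1950, 1, 1), date(1960, 12, 31))))
```

Each criterion is optional, so the same function serves a free-text query, a map-driven spatial query, or a combination of both.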

Operational health is monitored by a combination of Ganglia and Nagios, which deliver real-time dashboards of CPU, memory, network, and storage utilization across the distributed infrastructure. Alerts trigger automated remediation workflows that help maintain high availability as the system scales.
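Nagios checks are external plugins that report state through their exit status (0 = OK, 1 = WARNING, 2 = CRITICAL, 3 = UNKNOWN) plus one line of output, optionally followed by `|` and performance data. The sketch below is a hypothetical storage-utilization check in that style; the 80 % and 90 % thresholds and the function names are assumptions, not ESG’s actual configuration.

```python
import shutil
from typing import Tuple

# Nagios plugin return codes.
OK, WARNING, CRITICAL, UNKNOWN = 0, 1, 2, 3
LABELS = {OK: "OK", WARNING: "WARNING", CRITICAL: "CRITICAL", UNKNOWN: "UNKNOWN"}


def classify(used_pct: float, warn: float = 80.0, crit: float = 90.0) -> Tuple[int, str]:
    """Map a storage utilization percentage to a Nagios-style (status, output line)."""
    if not 0.0 <= used_pct <= 100.0:
        return UNKNOWN, f"STORAGE UNKNOWN - invalid utilization {used_pct}"
    if used_pct >= crit:
        status = CRITICAL
    elif used_pct >= warn:
        status = WARNING
    else:
        status = OK
    # Text after '|' is performance data: label=value[UOM];warn;crit
    perf = f"used={used_pct:.1f}%;{warn:g};{crit:g}"
    return status, f"STORAGE {LABELS[status]} - {used_pct:.1f}% used | {perf}"


def check_path(path: str, warn: float = 80.0, crit: float = 90.0) -> Tuple[int, str]:
    """Measure utilization of the filesystem holding `path` and classify it."""
    usage = shutil.disk_usage(path)  # named tuple: total, used, free (bytes)
    return classify(100.0 * usage.used / usage.total, warn, crit)


if __name__ == "__main__":
    status, line = check_path("/")
    print(line)
    # A deployed plugin would finish with sys.exit(status) so Nagios records the state.
```

Keeping the threshold logic in a pure `classify` function makes it testable independently of the host, while the performance data after `|` lets a graphing front end plot utilization over time.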

Finally, ESG offers both a user-friendly web portal and a command-line interface (CLI) with Python bindings. Researchers can compose end-to-end workflows that discover datasets, request subsets, initiate transfers, and launch remote analysis jobs, all from a single script. This integration reduces manual effort, minimizes errors, and accelerates scientific discovery.
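The shape of such a script can be sketched with an in-memory catalog. Everything here is hypothetical: `Dataset`, `Replica`, `discover`, `pick_replica`, and `plan_requests` stand in for whatever the real CLI or Python bindings provide, the latency figures and `example.org` hosts are placeholders, and the `url?constraint` request format is an assumption. Launching transfers and remote analysis jobs is not modeled; the sketch stops at planning the subset requests.

```python
from dataclasses import dataclass
from typing import Iterable, List, Tuple


@dataclass(frozen=True)
class Replica:
    site: str
    url: str
    latency_ms: float  # assumed to come from the monitoring system


@dataclass(frozen=True)
class Dataset:
    dataset_id: str
    variable: str
    experiment: str
    replicas: Tuple[Replica, ...]


def discover(catalog: Iterable[Dataset], variable: str, experiment: str) -> List[Dataset]:
    """Step 1: select datasets by variable and experiment."""
    return [d for d in catalog if d.variable == variable and d.experiment == experiment]


def pick_replica(dataset: Dataset) -> Replica:
    """Step 2: choose the replica with the lowest measured latency."""
    if not dataset.replicas:
        raise LookupError(f"{dataset.dataset_id} has no replicas")
    return min(dataset.replicas, key=lambda r: r.latency_ms)


def plan_requests(catalog: Iterable[Dataset], variable: str, experiment: str,
                  constraint: str) -> List[Tuple[str, str]]:
    """Step 3: pair each dataset id with a subset request against its best replica."""
    return [(d.dataset_id, f"{pick_replica(d).url}?{constraint}")
            for d in discover(catalog, variable, experiment)]


if __name__ == "__main__":
    catalog = [  # made-up entries; example.org hosts are placeholders
        Dataset("run1.tas", "tas", "historical", (
            Replica("site-a", "https://a.example.org/run1_tas.nc", 120.0),
            Replica("site-b", "https://b.example.org/run1_tas.nc", 40.0))),
        Dataset("run1.pr", "pr", "historical", (
            Replica("site-a", "https://a.example.org/run1_pr.nc", 120.0),)),
    ]
    for dataset_id, request in plan_requests(catalog, "tas", "historical", "tas[0:1:11]"):
        print(dataset_id, request)
```

Keeping each step a small function lets a script swap the replica policy (for example, preferring a local cache) without touching discovery or request construction.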

The paper demonstrates that ESG already reliably supports the existing 100 TB of data holdings and outlines a clear path to petabyte-scale operations toward mid-decade and beyond. By abstracting the complexities of authentication, transport, replication, and metadata handling, ESG not only serves the climate modeling community but also provides a reusable Grid-based data management paradigm applicable to other data-intensive sciences such as astronomy and geophysics.
