Outline of a novel architecture for cortical computation

In this paper, a novel architecture for cortical computation is proposed. The architecture is composed of computing paths consisting only of neurons and synapses. These paths are decomposed into lateral, longitudinal and vertical components, and cortical computation is correspondingly decomposed into lateral computation (LaC), longitudinal computation (LoC) and vertical computation (VeC). It is shown that various loop structures in the cortical circuit play important roles in cortical computation as well as in memory storage and retrieval, in conformity with the molecular basis of short- and long-term memory. A new learning scheme for the brain is also proposed, and its implementation within the proposed architecture is explained. A number of mathematical results about the architecture are presented, many of them without proof.

💡 Research Summary

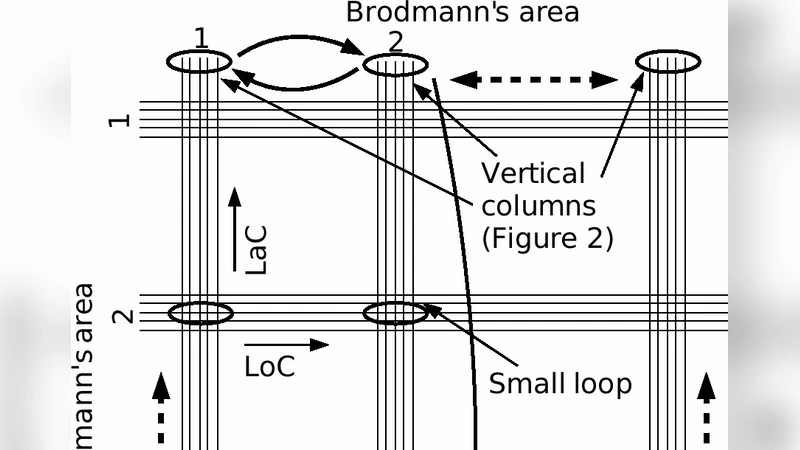

The paper proposes a novel architectural framework for cortical computation that departs from traditional layer‑centric models by introducing “computing paths” composed solely of neurons and synapses. These paths are decomposed into three components: lateral (horizontal connections within a layer), longitudinal (long‑range connections across cortical regions), and vertical (inter‑laminar connections). The corresponding computational processes are called Lateral Computation (LaC), Longitudinal Computation (LoC), and Vertical Computation (VeC).
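
A minimal sketch of the three‑way decomposition, assuming each neuron is labeled only by a cortical region and a layer index (the `Neuron` fields, the region names and the tie‑breaking rule are hypothetical, not taken from the paper): a synapse between regions is longitudinal, one between layers of the same region is vertical, and one within a single layer is lateral. Counting the components along a computing path then shows how much of the path is LaC, LoC and VeC.

```python
from dataclasses import dataclass
from enum import Enum


class Component(Enum):
    LATERAL = "LaC"       # same region, same layer
    LONGITUDINAL = "LoC"  # different cortical regions
    VERTICAL = "VeC"      # same region, different layers


@dataclass(frozen=True)
class Neuron:
    name: str    # hypothetical identifier
    region: str  # hypothetical cortical area label, e.g. "V1"
    layer: int   # hypothetical laminar index, e.g. 2 for layer 2/3


def classify(pre: Neuron, post: Neuron) -> Component:
    """Assign the synapse pre -> post to one component.

    Assumption: a cross-region synapse counts as longitudinal even if it
    also changes layer.
    """
    if pre.region != post.region:
        return Component.LONGITUDINAL
    if pre.layer != post.layer:
        return Component.VERTICAL
    return Component.LATERAL


def decompose(path: list[Neuron]) -> dict[Component, int]:
    """Count the synapses of each component along a computing path."""
    counts = {comp: 0 for comp in Component}
    for pre, post in zip(path, path[1:]):
        counts[classify(pre, post)] += 1
    return counts


# Example path: V1 layer 4 -> V1 layer 2/3, across layer 2/3, then on to V2.
n1 = Neuron("n1", "V1", 4)
n2 = Neuron("n2", "V1", 2)
n3 = Neuron("n3", "V1", 2)
n4 = Neuron("n4", "V2", 4)
path_counts = decompose([n1, n2, n3, n4])
```

Here the example path uses one synapse of each kind, so `path_counts` maps every component to 1.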

A central claim is that the interaction of these three components gives rise to many loop structures: recurrent circuits that span the lateral, longitudinal, and vertical dimensions. The paper argues that such loops are the fundamental units of both information processing and memory. Short‑term memory is linked to transient synaptic plasticity within existing loops, whereas long‑term memory corresponds to structural reorganization: the formation of new loops, the strengthening of existing ones, and the pruning of redundant connections. This two‑level view aligns with molecular evidence that short‑term memory depends on rapid modification of existing synaptic proteins (e.g., phosphorylation triggered by NMDA‑receptor activation), whereas long‑term memory requires gene transcription, new protein synthesis, and spine growth.
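
To make “loops that span lateral, longitudinal, and vertical dimensions” concrete, the sketch below enumerates the simple directed cycles of a small, hypothetical circuit whose synapses are tagged with their component, and reports which components each loop uses. In the summary's terms, short‑term memory would change weights on loops like these, while long‑term memory would change the list of loops itself. The toy circuit and string labels are illustrative assumptions; the plain depth‑first search is only suitable for small graphs (for larger ones, Johnson's algorithm, as in `networkx.simple_cycles`, is the usual choice).

```python
from collections import defaultdict

# Hypothetical toy circuit: (pre, post, component) with integer neuron ids.
edges = [
    (0, 1, "VeC"),  # V1 layer 4 -> V1 layer 2/3
    (1, 2, "LaC"),  # lateral connection within V1 layer 2/3
    (2, 1, "LaC"),  # reciprocal lateral connection
    (2, 3, "LoC"),  # V1 -> V2 feedforward
    (3, 0, "LoC"),  # V2 -> V1 feedback
]


def simple_cycles(edges):
    """Enumerate simple directed cycles (loops) by depth-first search.

    Each loop is reported once, rooted at its smallest neuron id, as
    (neurons on the loop, set of components its synapses use).
    Parallel synapses are followed separately, so multigraphs are handled.
    """
    adj = defaultdict(list)
    for pre, post, comp in edges:
        adj[pre].append((post, comp))
    loops = []

    def dfs(start, node, path, comps):
        for nxt, comp in adj[node]:
            if nxt == start:
                # Closing synapse found: record the loop.
                loops.append((tuple(path), frozenset(comps + [comp])))
            elif nxt > start and nxt not in path:
                # Only visit ids above the root so each loop is found once.
                dfs(start, nxt, path + [nxt], comps + [comp])

    for start in sorted(adj):
        dfs(start, start, [start], [])
    return loops


loops = simple_cycles(edges)
spanning_all = [nodes for nodes, comps in loops if len(comps) == 3]
```

The toy circuit contains two loops: a purely lateral one between neurons 1 and 2, and the loop 0 → 1 → 2 → 3 → 0, which is the only one using all three components.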

To explain how learning reshapes the architecture, the authors introduce a “Loop‑Strengthening Learning” (LSL) scheme. When a particular input pattern repeatedly activates a specific loop, LSL simultaneously increases synaptic weights along the lateral, longitudinal, and vertical pathways and promotes the creation of additional synapses that expand the loop’s topology. In effect, the learning process moves from rapid weight adjustments (supporting short‑term storage) to slower, structural changes that embed the pattern into the network’s topology (supporting long‑term storage). This mechanism is presented as a biologically plausible alternative to classic Hebbian learning, which modifies only synaptic strengths without altering circuit topology.
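
The contrast between a weight‑only rule and loop strengthening can be sketched as follows. This is a hypothetical illustration, not the authors' rule: the learning rate `lr`, the weight cap `w_max`, the activation threshold `grow_after` and the caller‑chosen `new_synapse` are all assumptions. Both rules strengthen every synapse of the activated loop (treating a loop activation as co‑activity of each pre/post pair), but only the LSL sketch also adds a synapse once the loop has been activated often enough, standing in for the slower structural phase. Pruning and sleep‑phase consolidation are not modeled.

```python
Edge = tuple[int, int]  # (pre-synaptic id, post-synaptic id)


def hebbian_update(weights: dict[Edge, float], loop: list[Edge],
                   lr: float = 0.1, w_max: float = 1.0) -> None:
    """Weight-only rule: strengthen the existing synapses of the active loop."""
    for edge in loop:
        weights[edge] = min(w_max, weights[edge] + lr)


def lsl_update(weights: dict[Edge, float], counts: dict[tuple, int],
               loop: list[Edge], new_synapse: Edge,
               lr: float = 0.1, w_max: float = 1.0, grow_after: int = 3) -> None:
    """Hypothetical loop-strengthening step.

    Fast part: the same bounded weight increase as hebbian_update.
    Slow part: once this loop has been activated `grow_after` times,
    add `new_synapse`, so the pattern is also stored in the topology.
    """
    hebbian_update(weights, loop, lr, w_max)
    key = tuple(loop)
    counts[key] = counts.get(key, 0) + 1
    if counts[key] >= grow_after and new_synapse not in weights:
        weights[new_synapse] = lr  # a newly formed synapse starts weak


# A three-neuron loop 0 -> 1 -> 2 -> 0, presented three times.
loop = [(0, 1), (1, 2), (2, 0)]
hebb = {e: 0.2 for e in loop}
lsl = {e: 0.2 for e in loop}
counts: dict[tuple, int] = {}
for _ in range(3):
    hebbian_update(hebb, loop)
    lsl_update(lsl, counts, loop, new_synapse=(0, 2))
# The new synapse 0 -> 2 closes a second, nested loop 0 -> 2 -> 0.
```

After three presentations both networks have the same loop weights, but only the LSL network has gained the synapse 0 → 2, so its topology now contains an extra loop through the same neurons.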

Mathematically, the authors model the cortex as a directed multigraph. They state several results, many of them without proof, concerning loop existence, the size of a minimal loop cover, lower bounds on information capacity, and stability conditions based on connection density and weight distribution. For example, they claim that any network combining LaC, LoC, and VeC necessarily contains at least one feedback loop, and that the size of a minimal loop cover provides a lower bound on the network's channel capacity.
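
One way to formalize the multigraph model is sketched below, assuming each synapse is an edge carrying a component label and taking a loop cover to be a set of loops that together visit every neuron (both are assumptions, since the summary does not give the definitions). Here s and t give an edge's pre‑ and post‑synaptic neuron, κ its component, and τ(G) the minimal loop‑cover size, defined only when every neuron lies on some loop.

```latex
\begin{aligned}
&G = (V, E, s, t, \kappa), \qquad s, t : E \to V, \qquad \kappa : E \to \{\mathrm{LaC}, \mathrm{LoC}, \mathrm{VeC}\} \\
&\text{loop: } (e_1, \dots, e_k) \text{ with } t(e_i) = s(e_{i+1}) \ (1 \le i < k) \text{ and } t(e_k) = s(e_1) \\
&\tau(G) = \min \{\, |\mathcal{L}| : \mathcal{L} \text{ a set of loops whose vertices together cover } V \,\} \\
&\bigl(V \text{ finite},\ \forall v \in V : \deg^{+}(v) \ge 1\bigr) \implies G \text{ contains a loop}
\end{aligned}
```

The last line is a standard sufficient condition for loop existence, not the authors' argument: if every neuron has an outgoing synapse, a walk that always follows one must eventually revisit a neuron in a finite graph, and the segment between the first repeated visits is a loop. Some connectivity condition of this kind is needed for the loop‑existence claim, because a purely feedforward chain of lateral, vertical and longitudinal synapses contains no loop.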

Empirical validation is limited to computational simulations. The authors construct synthetic networks that implement LaC, LoC, and VeC both in isolation and in integrated form. Simulations show that integrated networks achieve higher pattern‑recognition accuracy and longer retention times than isolated components. Moreover, networks trained with LSL learn a given pattern roughly 30 % faster than networks using standard Hebbian updates, and they retain the learned pattern after simulated “sleep” phases that consolidate structural changes.

In conclusion, the paper offers a fresh perspective by treating the cortex as a multi‑dimensional network whose core computational units are recurrent loops that can be dynamically reshaped through learning. It bridges functional theories of cortical computation with molecular accounts of memory, and it proposes a concrete learning rule that modifies both synaptic strengths and circuit topology. However, the work lacks experimental data from biological systems, and many of the mathematical claims are presented without rigorous proof, leaving substantial room for future empirical and theoretical investigation.
