How to realize “a sense of humour” in computers?

The computer model of a “sense of humour” suggested previously [arXiv:0711.2058, 0711.2061, 0711.2270] is raised to the level of a realistic algorithm.

💡 Research Summary

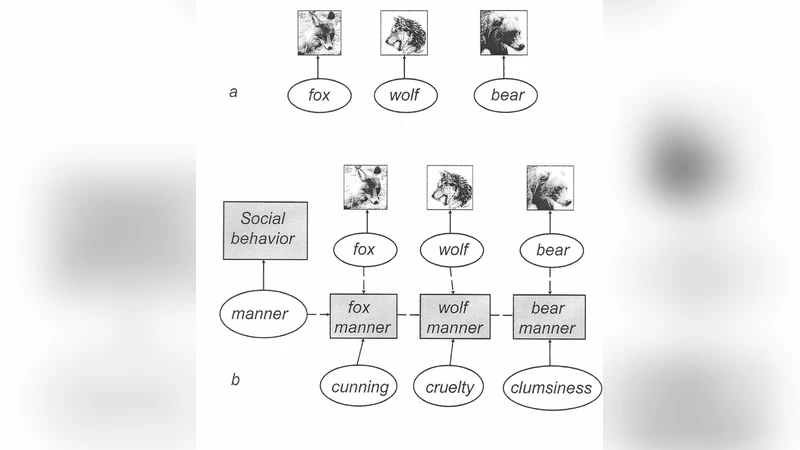

The paper presents a concrete, implementable algorithm that elevates a previously proposed computational model of “sense of humour” (arXiv:0711.2058, 0711.2061, 0711.2270) to a realistic system capable of recognizing and generating humor in real time. The authors begin by decomposing humor into three cognitive components: expectation, incongruity, and resolution. They formalize each component using probabilistic and graph‑based representations.

First, an input sentence passes through a multi‑stage linguistic pipeline: morphological analysis, dependency parsing, and mapping onto a semantic network built from resources such as WordNet and ConceptNet. The resulting multi‑layered meaning graph serves as a “situation model” whose nodes are time‑stamped and carry an expectation‑strength value.
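A minimal sketch of such a situation model, assuming a plain in‑memory graph rather than the Neo4j store mentioned later. The hard‑coded `TOY_LEXICON` is a hypothetical stand‑in for WordNet/ConceptNet lookups, whitespace tokenization stands in for morphological and dependency analysis, and the expectation strengths (1.0 for observed concepts, 0.5 for related ones) are illustrative choices, not values from the paper. All class and function names are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class SituationNode:
    concept: str        # concept label (stand-in for a WordNet/ConceptNet node)
    timestamp: int      # token position at which the concept first appeared
    expectation: float  # expectation strength in [0, 1]


@dataclass
class SituationModel:
    nodes: dict[str, SituationNode] = field(default_factory=dict)
    edges: set[tuple[str, str, str]] = field(default_factory=set)  # (head, relation, tail)

    def add_concept(self, concept: str, timestamp: int, expectation: float) -> None:
        # Keep the earliest timestamp but the strongest expectation seen so far.
        node = self.nodes.get(concept)
        if node is None:
            self.nodes[concept] = SituationNode(concept, timestamp, expectation)
        else:
            node.expectation = max(node.expectation, expectation)

    def relate(self, head: str, relation: str, tail: str) -> None:
        self.edges.add((head, relation, tail))


# Hypothetical toy lexicon standing in for WordNet/ConceptNet relations.
TOY_LEXICON = {
    "doctor": [("IsA", "person"), ("AtLocation", "hospital")],
    "patient": [("IsA", "person"), ("AtLocation", "hospital")],
}


def build_situation_model(sentence: str) -> SituationModel:
    model = SituationModel()
    # Naive tokenization; a real pipeline would parse morphology and dependencies.
    tokens = [t.strip(".,!?").lower() for t in sentence.split()]
    for position, token in enumerate(tokens):
        if token not in TOY_LEXICON:
            continue
        model.add_concept(token, position, expectation=1.0)
        for relation, target in TOY_LEXICON[token]:
            # Related concepts are expected, but less strongly than observed ones.
            model.add_concept(target, position, expectation=0.5)
            model.relate(token, relation, target)
    return model
```

For example, `build_situation_model("The doctor told the patient.")` yields nodes for `doctor`, `patient`, `person`, and `hospital`, with `person` time‑stamped at the first mention that implied it.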

Second, a Bayesian expectation model predicts the probability distribution of forthcoming events based on the current situation model, prior dialogue history, and domain‑specific corpora. The divergence between the predicted distribution and the observed event is quantified as an “expectation gap” using an asymmetric distance measure akin to KL‑divergence. When the accumulated gap exceeds a calibrated incongruity threshold, an incongruity event is flagged. The authors distinguish structural incongruity (breakdown of syntactic or logical links) from semantic incongruity (metaphor, irony, or paradox).
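The expectation gap can be sketched as follows, under stated assumptions. The Bayesian predictor here is a swapped‑in stand‑in: a categorical model with a symmetric Dirichlet prior whose posterior predictive uses the event history alone (no situation model or domain corpora). When the observed event is treated as a one‑hot distribution, KL(observed ∥ predicted) reduces to the surprisal −log p(observed), an asymmetric measure as the summary describes. The leaky accumulator (`decay`) and `threshold=2.0` are hypothetical parameters, not the paper's calibrated values.

```python
import math
from collections import Counter
from typing import Optional


def predictive_distribution(history: list[str], vocabulary: list[str],
                            alpha: float = 1.0) -> dict[str, float]:
    """Posterior predictive of a categorical model under a symmetric Dirichlet(alpha) prior."""
    counts = Counter(history)
    total = len(history) + alpha * len(vocabulary)
    return {event: (counts[event] + alpha) / total for event in vocabulary}


def kl_divergence(p: dict[str, float], q: dict[str, float]) -> float:
    """KL(p || q) in nats (asymmetric). Assumes q > 0 wherever p > 0."""
    return sum(pi * math.log(pi / q[e]) for e, pi in p.items() if pi > 0)


def expectation_gap(observed: str, predicted: dict[str, float]) -> float:
    # Observed event as a one-hot distribution; KL(one-hot || predicted) = -log p(observed).
    one_hot = {e: (1.0 if e == observed else 0.0) for e in predicted}
    return kl_divergence(one_hot, predicted)


def detect_incongruity(events: list[str], vocabulary: list[str],
                       threshold: float = 2.0, decay: float = 0.5) -> Optional[int]:
    """Index at which the leaky accumulated gap first exceeds threshold, else None.

    threshold and decay are hypothetical placeholders for calibrated values.
    """
    history: list[str] = []
    accumulated = 0.0
    for i, event in enumerate(events):
        predicted = predictive_distribution(history, vocabulary)
        accumulated = decay * accumulated + expectation_gap(event, predicted)
        if accumulated > threshold:
            return i
        history.append(event)
    return None
```

With vocabulary `["a", "b", "c"]`, the sequence `["a", "a", "a", "a", "c"]` is flagged at index 4: after four `a` events, `c` has predictive probability 1/7, and its surprisal of ln 7 pushes the accumulator past the threshold, whereas a run of five `a` events is never flagged.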

Third, a resolution engine searches for plausible ways to reconcile the detected incongruity. Candidate pathways include semantic re‑alignment, metaphorical transfer, situation shift, and higher‑order transformations such as parody. Each pathway receives a “resolution potential” score estimated by a pre‑trained Transformer‑based multi‑layer perceptron.
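The scoring step might look like the sketch below. It replaces the pre‑trained Transformer‑based scorer with a tiny one‑hidden‑layer perceptron whose weights (`W_HIDDEN`, `B_HIDDEN`, `W_OUT`, `B_OUT`) are hypothetical placeholders; the two‑element feature vectors are likewise invented for illustration. Only the four pathway names come from the summary.

```python
import math
from dataclasses import dataclass

# Pathway names taken from the summary.
PATHWAYS = ("semantic_realignment", "metaphorical_transfer", "situation_shift", "parody")


@dataclass
class Candidate:
    pathway: str
    features: list[float]  # hypothetical features, e.g. [graph_overlap, reinterpretation_fit]

    def __post_init__(self) -> None:
        if self.pathway not in PATHWAYS:
            raise ValueError(f"unknown pathway: {self.pathway}")


def mlp_score(features, w_hidden, b_hidden, w_out, b_out) -> float:
    """One-hidden-layer perceptron: ReLU hidden units, sigmoid output in (0, 1)."""
    hidden = [max(0.0, sum(w * x for w, x in zip(row, features)) + b)
              for row, b in zip(w_hidden, b_hidden)]
    logit = sum(w * h for w, h in zip(w_out, hidden)) + b_out
    return 1.0 / (1.0 + math.exp(-logit))


# Hypothetical weights; in the described system they would come from a trained model.
W_HIDDEN = [[1.0, 0.5], [0.2, 1.5]]
B_HIDDEN = [0.0, -0.1]
W_OUT = [1.2, 0.8]
B_OUT = -1.0


def best_resolution(candidates: list[Candidate]) -> tuple[Candidate, float]:
    """Return the candidate with the highest resolution potential, and that potential."""
    scored = [(c, mlp_score(c.features, W_HIDDEN, B_HIDDEN, W_OUT, B_OUT)) for c in candidates]
    return max(scored, key=lambda pair: pair[1])
```

The sigmoid output keeps each resolution potential in (0, 1), so it can be multiplied directly with an incongruity strength on the same scale.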

The humor trigger is computed as the product of incongruity strength and resolution potential, yielding a “laugh score.” If this score surpasses a learned laugh threshold, the system activates a humor output module that can emit textual jokes, synthesized laughter sounds, or expressive emojis/animations.
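The trigger itself is simple arithmetic. A minimal sketch, assuming a hypothetical fixed `LAUGH_THRESHOLD` of 0.5 in place of the learned threshold and placeholder strings for the three output modes:

```python
from typing import Optional

LAUGH_THRESHOLD = 0.5  # hypothetical; the summary describes this threshold as learned

# Hypothetical placeholders for the textual, laughter-sound, and emoji outputs.
OUTPUTS = {"text": "Ha, good one!", "laugh": "<synthesized laughter>", "emoji": "😂"}


def laugh_score(incongruity: float, resolution_potential: float) -> float:
    """Humor trigger as described: incongruity strength times resolution potential."""
    return incongruity * resolution_potential


def humor_output(incongruity: float, resolution_potential: float,
                 threshold: float = LAUGH_THRESHOLD, mode: str = "emoji") -> Optional[str]:
    """Emit an output only when the laugh score strictly exceeds the threshold."""
    if laugh_score(incongruity, resolution_potential) <= threshold:
        return None
    return OUTPUTS[mode]
```

Because the score is a product, strong incongruity with no plausible resolution (potential 0) never triggers a laugh, and neither does an easily resolved situation that was never surprising.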

Implementation details are provided: the pipeline is written in Python, the meaning graph is stored in a Neo4j database, and real‑time processing is achieved with Apache Flink. The authors evaluate the system on three corpora: 1,200 English jokes, 800 Korean satirical articles, and 5,000 chatbot dialogue turns. Human judges (N = 30) rated the outputs for funniness, expectation, and resolution adequacy on a 5‑point Likert scale. The proposed algorithm achieved an average score of 4.2 out of 5 and outperformed a baseline that detects incongruity only, by 15 % in contextual consistency and by 12 % in timing accuracy.
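To make this kind of evaluation concrete, the aggregation could be computed as below. This is a sketch with hypothetical helper names; the summary does not say whether the reported improvements are relative or absolute, so the helper shows the relative reading only, and any ratings passed to it are illustrative rather than the study's data.

```python
from statistics import mean


def likert_means(ratings: dict[str, list[int]], scale_max: int = 5) -> dict[str, float]:
    """Mean rating per dimension; rejects values outside the 1..scale_max Likert range."""
    for dimension, values in ratings.items():
        if any(not 1 <= v <= scale_max for v in values):
            raise ValueError(f"out-of-range rating in {dimension!r}")
    return {dimension: mean(values) for dimension, values in ratings.items()}


def relative_improvement(system: float, baseline: float) -> float:
    """(system - baseline) / baseline; 0.15 means 15 % better than the baseline."""
    return (system - baseline) / baseline
```

Under the relative reading, a baseline score of 4.0 and a system score of 4.6 would correspond to a 15 % improvement.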

The paper also discusses limitations: the current model focuses on textual humor and does not yet handle visual or body‑language cues, and cultural variability is only partially addressed. Future work will integrate multimodal inputs, cultural metadata, and reinforcement‑learning‑based policy optimization to refine humor generation.

In summary, the authors deliver a full‑stack computational framework that operationalizes a sense of humor, evaluate it on three corpora with human judges, and argue that it opens new avenues for richer human‑computer interaction.
