Derivations of Normalized Mutual Information in Binary Classifications

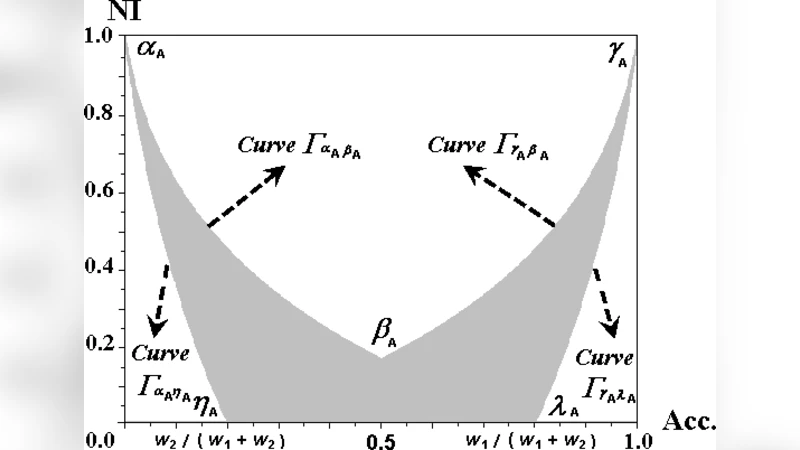

This correspondence studies a basic problem in classification: how to evaluate different classifiers. Although conventional performance indexes, such as accuracy, are commonly used in classifier selection and evaluation, information-based criteria, such as mutual information, are becoming popular in feature and model selection. In this work, we propose to assess classifiers in terms of normalized mutual information (NI), which is novel and well defined in a compact range for classifier evaluation. We derive closed-form relations of normalized mutual information with respect to accuracy, precision, and recall in binary classification. By exploring the relations among them, we reveal that NI is actually a set of nonlinear functions, with a concordant power-exponent form, of each performance index. The relations can also be expressed with respect to precision and recall, or to false alarm and hitting rate (recall).

💡 Research Summary

The paper introduces Normalized Mutual Information (NI) as a novel performance metric for binary classifiers and derives explicit closed‑form relationships between NI and the traditional evaluation measures of accuracy, precision, recall, and false‑alarm rate. Starting from the definition of mutual information as the reduction in entropy of the true label Y given the classifier output X, the authors normalize this quantity by the entropy of Y, yielding a value bounded between 0 and 1. This normalization makes NI directly comparable across different problems and datasets.
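The definition in the preceding paragraph can be sketched numerically. The snippet below is a minimal illustration, not the authors' code: it assumes NI = I(X; Y) / H(Y), with Y the true label and X the classifier output, and estimates the probabilities from confusion-matrix counts. The function names `entropy` and `normalized_mutual_information` are hypothetical, and the logarithm base (2 here) cancels in the ratio.

```python
import math


def entropy(probs):
    """Shannon entropy in bits; zero-probability terms contribute nothing."""
    return -sum(p * math.log2(p) for p in probs if p > 0)


def normalized_mutual_information(tp, fp, fn, tn):
    """Return NI = I(X; Y) / H(Y) from binary confusion-matrix counts.

    Assumption: Y is the true label, X is the classifier output, and
    probabilities are estimated as relative frequencies of the counts.
    """
    n = tp + fp + fn + tn
    if n == 0:
        raise ValueError("confusion matrix is empty")

    # Joint distribution p(y, x): rows index the true label,
    # columns index the predicted label.
    joint = [
        [tp / n, fn / n],  # y = positive: predicted positive, predicted negative
        [fp / n, tn / n],  # y = negative: predicted positive, predicted negative
    ]
    p_y = [sum(row) for row in joint]
    p_x = [joint[0][j] + joint[1][j] for j in range(2)]

    h_y = entropy(p_y)
    if h_y == 0:
        raise ValueError("H(Y) is zero: only one true class is present")

    # Mutual information via I(X; Y) = H(X) + H(Y) - H(X, Y).
    h_xy = entropy([p for row in joint for p in row])
    mutual_information = entropy(p_x) + h_y - h_xy
    return mutual_information / h_y


if __name__ == "__main__":
    print(normalized_mutual_information(tp=50, fp=0, fn=0, tn=50))
    print(normalized_mutual_information(tp=25, fp=25, fn=25, tn=25))
    print(normalized_mutual_information(tp=40, fp=10, fn=5, tn=45))
```

Under these assumptions, a perfect classifier on balanced classes gives NI = 1 and a classifier whose output is independent of the label gives NI = 0. A classifier that inverts every label also gives NI = 1, because mutual information measures statistical dependence rather than agreement. This is one way to see that NI cannot be a simple linear rescaling of accuracy.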

Through algebraic manipulation of the confusion‑matrix entries (TP, FP, FN, TN), the authors express the conditional entropy H(Y|X) in terms of the error probabilities that underlie accuracy, precision, and recall. Consequently, NI can be written as a nonlinear function of accuracy: NI = –
