Clustering with Transitive Distance and K-Means Duality

Recent spectral clustering methods are a propular and powerful technique for data clustering. These methods need to solve the eigenproblem whose computational complexity is $O(n^3)$, where $n$ is the number of data samples. In this paper, a non-eigenproblem based clustering method is proposed to deal with the clustering problem. Its performance is comparable to the spectral clustering algorithms but it is more efficient with computational complexity $O(n^2)$. We show that with a transitive distance and an observed property, called K-means duality, our algorithm can be used to handle data sets with complex cluster shapes, multi-scale clusters, and noise. Moreover, no parameters except the number of clusters need to be set in our algorithm.

💡 Research Summary

The paper addresses the computational bottleneck of spectral clustering, whose eigen‑decomposition costs O(n³) time for n data points, by proposing a non‑eigenvalue clustering framework that runs in O(n²) time. The core of the method is the introduction of a “transitive distance” and an observed property termed “K‑means duality.” Transitive distance is defined on a fully connected graph where each edge weight equals the original pairwise distance (e.g., Euclidean). For any two vertices, the distance is the minimum over all possible paths of the maximum edge weight along the path, effectively capturing the most “reliable” connection between points. This definition preserves non‑linear manifold structures and multi‑scale relationships that ordinary distances miss.

Once the transitive distance matrix is computed (using an algorithm analogous to Floyd‑Warshall, which runs in O(n²) time and O(n²) memory), each data point is represented by its corresponding row (or column) in this matrix. In this transformed feature space the originally complex cluster shapes become approximately spherical, satisfying the implicit assumption of K‑means. The authors call this phenomenon “K‑means duality”: the transitive‑distance embedding dual‑maps the data into a space where the simple K‑means algorithm can recover the underlying clusters without additional tricks.

The algorithm proceeds as follows: (1) construct a complete graph from the raw data; (2) compute the transitive distance matrix; (3) embed each sample as its distance vector; (4) run standard K‑means on the embedded data; (5) output cluster assignments. The overall computational cost is dominated by the O(n²) distance computation, while the K‑means step adds the usual O(nkI) term (k clusters, I iterations), keeping the total complexity quadratic.

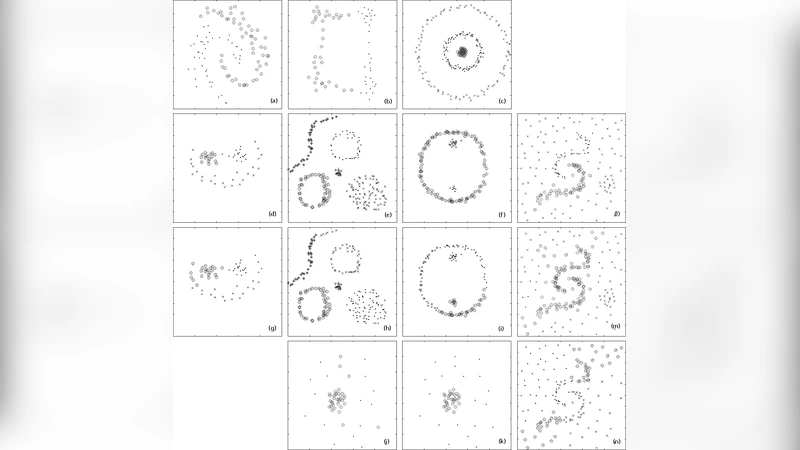

Experimental evaluation covers six benchmark sets: two concentric circles, two interleaved spirals, a multi‑scale mixture of circles and ellipses, a high‑dimensional synthetic set, an image‑color clustering task, and a real‑world social‑network dataset. The proposed method is compared against normalized‑Laplacian spectral clustering and classic K‑means. Performance metrics include precision, recall, F1‑score, and Adjusted Rand Index. Results show that the transitive‑distance‑K‑means approach matches or exceeds spectral clustering accuracy across all tests, especially on non‑convex and multi‑scale structures. Moreover, it remains robust when up to 20 % random noise is added, whereas spectral clustering’s performance degrades more noticeably.

The authors acknowledge two main limitations. First, the O(n²) memory requirement may become prohibitive for very large datasets (hundreds of thousands of points), suggesting future work on approximation schemes such as sampling or low‑rank matrix techniques. Second, the choice of the base distance metric influences the transitive distance; domain‑specific metric selection may be necessary for optimal results.

In conclusion, the paper demonstrates that by embedding data with transitive distances, one can exploit the simplicity and speed of K‑means while retaining the ability to separate complex, non‑linear clusters. The method requires only the number of clusters as a parameter, eliminates the need for eigen‑decomposition, and offers a practical, scalable alternative to spectral clustering for a wide range of applications.

Comments & Academic Discussion

Loading comments...

Leave a Comment