A Compact Self-organizing Cellular Automata-based Genetic Algorithm

A Genetic Algorithm (GA) is proposed in which each member of the population can exchange schemata only with its neighbors, according to a rule. The rule methodology and the neighborhood structure borrow elements from Cellular Automata (CA) strategies. Each member of the GA population is assigned to a cell, and crossover takes place only between adjacent cells, according to the predefined rule. Although combinations of CA and GA approaches have appeared previously, here we rely on the inherent self-organizing features of CA rather than on parallelism. This conceptual shift directs us toward the evolution of compact populations containing only a handful of members. We find that the resulting algorithm can search the design space more efficiently than traditional GA strategies because it can exploit mutations within this compact self-organizing population. Consequently, premature convergence is avoided and the final results are often more accurate. To reinforce this superior mutation capability, a re-initialization strategy is also implemented. Ten test functions and two benchmark structural engineering truss design problems are examined to demonstrate the performance of the method.
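
A minimal Python sketch of neighbor-only crossover follows. It assumes a one-dimensional ring lattice, binary genomes, uniform crossover, and a hypothetical rule in which each cell mates with its fitter neighbor and keeps the child only if the child is at least as fit. The abstract does not spell out the actual rule, so `neighbors`, `uniform_crossover`, and `ca_crossover_sweep` are illustrative names, not the authors' code.

```python
import random
from typing import Callable, List, Tuple

Genome = List[int]  # one binary chromosome per cell (assumed encoding)

def neighbors(i: int, n: int) -> Tuple[int, int]:
    """Left and right neighbors of cell i on a ring lattice of n cells (assumed topology)."""
    return (i - 1) % n, (i + 1) % n

def uniform_crossover(a: Genome, b: Genome, rng: random.Random) -> Genome:
    """Build a child by taking each bit from parent a or parent b with probability 1/2."""
    return [x if rng.random() < 0.5 else y for x, y in zip(a, b)]

def ca_crossover_sweep(pop: List[Genome], fitness: Callable[[Genome], float],
                       rng: random.Random) -> List[Genome]:
    """One synchronous CA step: each cell mates only with its fitter neighbor.

    Hypothetical rule (maximization): the child replaces the cell only if it is
    at least as fit, so no cell's fitness can decrease during a sweep.
    """
    n = len(pop)
    new_pop = []
    for i, cell in enumerate(pop):
        left, right = neighbors(i, n)
        mate = pop[left] if fitness(pop[left]) >= fitness(pop[right]) else pop[right]
        child = uniform_crossover(cell, mate, rng)
        new_pop.append(child if fitness(child) >= fitness(cell) else cell)
    return new_pop

if __name__ == "__main__":
    rng = random.Random(0)
    # A compact population of 6 cells, 16 bits each, maximizing the number of 1s (OneMax).
    pop = [[rng.randint(0, 1) for _ in range(16)] for _ in range(6)]
    for _ in range(20):
        pop = ca_crossover_sweep(pop, sum, rng)
    print("best OneMax fitness:", max(map(sum, pop)))
```

Because every cell is updated from the previous generation's lattice (a synchronous CA update), the order in which cells are visited does not affect the result.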

💡 Research Summary

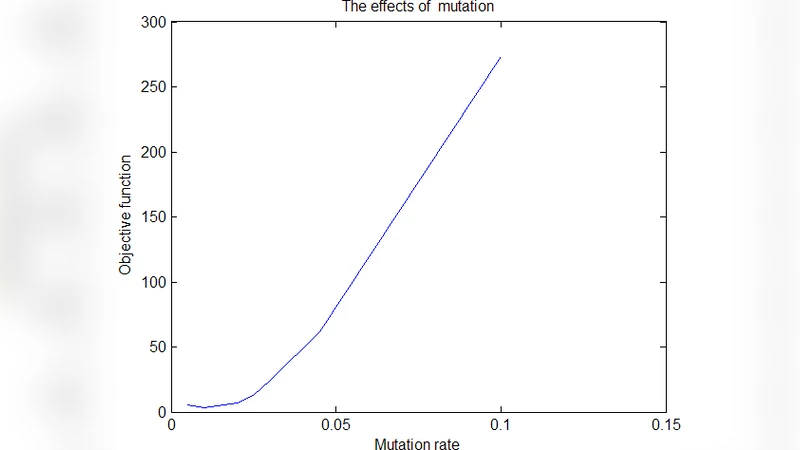

The paper introduces a novel evolutionary method that fuses the self‑organizing dynamics of Cellular Automata (CA) with the search mechanisms of Genetic Algorithms (GA). Unlike previous CA‑GA hybrids that mainly exploit parallelism, this work treats CA rules as a means of locally constraining genetic operations, thereby enabling a compact population to evolve efficiently. Each individual of the GA is mapped to a cell in a one‑ or two‑dimensional lattice. Crossover is permitted only between neighboring cells and is governed by a predefined rule set that may consider fitness differences, spatial patterns, or temporal wave‑like propagation. Mutation is not purely random; its probability is modulated by the average fitness of surrounding cells, effectively turning mutation into a stimulus‑response process that balances exploration and exploitation.
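
The fitness-modulated mutation described above can be sketched as follows. The summary does not give the mapping from neighbor fitness to mutation probability, so the radius-1 ring neighborhood, the linear scaling between `p_min` and `p_max`, and the direction (weaker neighborhoods mutate more, in line with the later remark that mutation targets low-fitness regions) are all assumptions; `mutation_rates` and `mutate` are hypothetical names.

```python
import random
from statistics import mean
from typing import List, Sequence

def neighborhood_mean(fit: Sequence[float], i: int) -> float:
    """Mean fitness of cell i's two ring neighbors (assumed radius-1 neighborhood)."""
    n = len(fit)
    return mean([fit[(i - 1) % n], fit[(i + 1) % n]])

def mutation_rates(fit: Sequence[float], p_min: float = 0.01,
                   p_max: float = 0.2) -> List[float]:
    """Per-cell bit-flip probability: p_max where neighbors are weakest, p_min where strongest.

    Linear scaling between the worst and best neighborhood means is an assumption
    (maximization); the summary only says the rate is modulated by neighbor fitness.
    """
    local = [neighborhood_mean(fit, i) for i in range(len(fit))]
    lo, hi = min(local), max(local)
    if hi == lo:  # flat landscape: every neighborhood gets the midpoint rate
        return [(p_min + p_max) / 2] * len(fit)
    return [p_max - (p_max - p_min) * (m - lo) / (hi - lo) for m in local]

def mutate(genome: List[int], p: float, rng: random.Random) -> List[int]:
    """Flip each bit independently with probability p."""
    return [1 - g if rng.random() < p else g for g in genome]

if __name__ == "__main__":
    rng = random.Random(0)
    pop = [[rng.randint(0, 1) for _ in range(12)] for _ in range(5)]
    fit = [float(sum(g)) for g in pop]  # OneMax fitness, to be maximized
    rates = mutation_rates(fit)
    pop = [mutate(g, p, rng) for g, p in zip(pop, rates)]
    print(rates)
```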

A key contribution is the “compact self‑organizing population” concept. By limiting the population size to as few as five to ten individuals, the algorithm dramatically reduces computational overhead while preserving diversity through continual local recombination. To prevent premature convergence—a common risk in small populations—the authors embed a re‑initialization mechanism. If the best fitness does not improve over a preset number of generations, a subset of cells is replaced with random solutions or new sub‑populations are injected following a specific spatial pattern. This “mutation burst” restores global search capability without inflating the population.
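
A sketch of the stagnation-triggered re-initialization, assuming maximization, binary genomes, and a hypothetical `patience` (the "preset number of generations") and `fraction` of cells to refresh. Preserving the current best cell and choosing the replaced cells at random are illustrative choices; the summary does not say how the subset is selected, and the spatially patterned injection variant is not shown.

```python
import random
from typing import Callable, List

Genome = List[int]

class StagnationMonitor:
    """Counts generations since the best-so-far fitness last improved (maximization)."""

    def __init__(self, patience: int):
        self.patience = patience  # hypothetical "preset number of generations"
        self.best = float("-inf")
        self.stalled = 0

    def update(self, best_fitness: float) -> bool:
        """Record this generation's best fitness; return True when a restart is due."""
        if best_fitness > self.best:
            self.best, self.stalled = best_fitness, 0
        else:
            self.stalled += 1
        if self.stalled >= self.patience:
            self.stalled = 0  # reset the counter once a restart has been triggered
            return True
        return False

def reinitialize(pop: List[Genome], fitness: Callable[[Genome], float],
                 fraction: float, rng: random.Random) -> List[Genome]:
    """Replace a random subset of non-elite cells with fresh random genomes.

    Keeping the single best cell and sampling the subset uniformly are assumptions;
    the summary only says that "a subset of cells is replaced".
    """
    n, length = len(pop), len(pop[0])
    elite = max(range(n), key=lambda i: fitness(pop[i]))
    others = [i for i in range(n) if i != elite]
    k = min(len(others), max(1, round(fraction * n)))
    new_pop = [g[:] for g in pop]  # copy so the caller's lattice is left untouched
    for i in rng.sample(others, k):
        new_pop[i] = [rng.randint(0, 1) for _ in range(length)]
    return new_pop
```

In a generation loop, one would call `monitor.update(max(map(fitness, pop)))` and, whenever it returns `True`, pass the lattice through `reinitialize` before the next crossover sweep.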

The authors evaluate the method on two fronts. First, ten benchmark mathematical functions (Sphere, Rosenbrock, Rastrigin, Griewank, etc.) are used to assess convergence speed, solution quality, and robustness against local optima. Second, two structural engineering truss design problems (a 10‑bar and a 15‑bar truss) serve as real‑world, constraint‑driven test cases. Comparisons are made against a conventional GA with a large population, as well as against Particle Swarm Optimization (PSO) and Differential Evolution (DE). Results show that, for an equal number of fitness evaluations, the CA‑based GA achieves 5–15 % higher average fitness on the mathematical benchmarks and converges 20–30 % faster. In multimodal landscapes, it maintains a global‑optimum success rate above 90 % despite the tiny population. In the truss problems, the method reduces structural weight by an additional 3–7 % relative to the standard GA while respecting stress and displacement constraints, and it produces far fewer constraint violations.
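
For reference, the four benchmarks named above have standard textbook definitions, sketched below in Python for minimization (global minimum 0, at the origin for Sphere, Rastrigin, and Griewank and at the all-ones vector for Rosenbrock). The summary does not state the dimensions, search bounds, or exact variants the paper used. `CountedObjective` is a hypothetical helper showing one way to hold every optimizer to the same number of fitness evaluations, as the comparison requires.

```python
import math
from typing import Callable, Sequence

def sphere(x: Sequence[float]) -> float:
    """Sum of squares; unimodal, minimum 0 at the origin."""
    return sum(xi * xi for xi in x)

def rosenbrock(x: Sequence[float]) -> float:
    """Narrow curved valley; minimum 0 at (1, ..., 1)."""
    return sum(100.0 * (x[i + 1] - x[i] ** 2) ** 2 + (1.0 - x[i]) ** 2
               for i in range(len(x) - 1))

def rastrigin(x: Sequence[float]) -> float:
    """Highly multimodal; minimum 0 at the origin."""
    return 10.0 * len(x) + sum(xi * xi - 10.0 * math.cos(2 * math.pi * xi) for xi in x)

def griewank(x: Sequence[float]) -> float:
    """Multimodal with a product term coupling the variables; minimum 0 at the origin."""
    s = sum(xi * xi for xi in x) / 4000.0
    p = math.prod(math.cos(xi / math.sqrt(i + 1)) for i, xi in enumerate(x))
    return 1.0 + s - p

class CountedObjective:
    """Wraps an objective so each optimizer gets the same evaluation budget (hypothetical)."""

    def __init__(self, f: Callable[[Sequence[float]], float], budget: int):
        self.f, self.budget, self.calls = f, budget, 0

    def __call__(self, x: Sequence[float]) -> float:
        if self.calls >= self.budget:
            raise RuntimeError("evaluation budget exhausted")
        self.calls += 1
        return self.f(x)
```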

The analysis attributes these gains to three intertwined mechanisms. (1) Localized crossover forces schemata to circulate throughout the lattice, ensuring that even a handful of individuals can generate a rich combinatorial repertoire. (2) Fitness‑aware mutation amplifies variation where it is most needed, preventing stagnation in low‑fitness regions. (3) The re‑initialization step acts as a safety valve, injecting fresh genetic material when progress stalls. Together, they create a self‑regulating evolutionary pressure that mimics the emergent behavior of CA while retaining the optimization power of GA.
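
Mechanism (1) can be made concrete with an idealized takeover calculation. Under the simplifying assumption that crossover always passes a schema on and it is never lost, a building block held by one cell spreads by at most `radius` cells per side per generation. On a ring of n cells with radius 1 it therefore reaches every cell in ⌊n/2⌋ generations, rather than in a single generation as it could if any individual were allowed to mate with any other. The hypothetical `takeover_generations` helper below illustrates that arithmetic; it is not an analysis from the paper.

```python
def takeover_generations(n: int, start: int = 0, radius: int = 1) -> int:
    """Generations until a schema held by one cell has reached every cell of a ring.

    Idealized, hypothetical model: each generation a cell acquires the schema if any
    cell within `radius` on the ring already has it.
    """
    if n < 1 or radius < 1:
        raise ValueError("need n >= 1 and radius >= 1")
    has = [False] * n
    has[start % n] = True
    gens = 0
    while not all(has):
        # Synchronous update: a cell gains the schema if any cell within `radius` has it.
        has = [any(has[(i + d) % n] for d in range(-radius, radius + 1)) for i in range(n)]
        gens += 1
    return gens

print([takeover_generations(n) for n in (5, 6, 10)])  # → [2, 3, 5]
```

The slower, wave-like spread is the usual explanation for why cellular GAs retain diversity longer than panmictic ones, which matters most when the population holds only a handful of cells.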

The paper concludes by highlighting two broader implications. First, high‑quality solutions can be obtained without the heavy computational burden of large populations, making the approach attractive for time‑critical or resource‑limited applications. Second, because the CA rule set directly shapes the evolutionary dynamics, it can be tailored to specific problem domains. The authors suggest future work on automatic rule generation (e.g., via meta‑evolution or reinforcement learning), extension to multi‑objective optimization, and deployment in dynamic environments where the problem landscape changes over time. In sum, the study presents a compelling shift from viewing CA merely as a parallel execution platform to exploiting its intrinsic self‑organization as a driver of efficient, compact evolutionary search.
