Enhancing Sparsity by Reweighted L1 Minimization

It is now well understood that (1) it is possible to reconstruct sparse signals exactly from what appear to be highly incomplete sets of linear measurements and (2) that this can be done by constrained L1 minimization. In this paper, we study a novel method for sparse signal recovery that in many situations outperforms L1 minimization in the sense that substantially fewer measurements are needed for exact recovery. The algorithm consists of solving a sequence of weighted L1-minimization problems where the weights used for the next iteration are computed from the value of the current solution. We present a series of experiments demonstrating the remarkable performance and broad applicability of this algorithm in the areas of sparse signal recovery, statistical estimation, error correction and image processing. Interestingly, superior gains are also achieved when our method is applied to recover signals with assumed near-sparsity in overcomplete representations–not by reweighting the L1 norm of the coefficient sequence as is common, but by reweighting the L1 norm of the transformed object. An immediate consequence is the possibility of highly efficient data acquisition protocols by improving on a technique known as compressed sensing.

💡 Research Summary

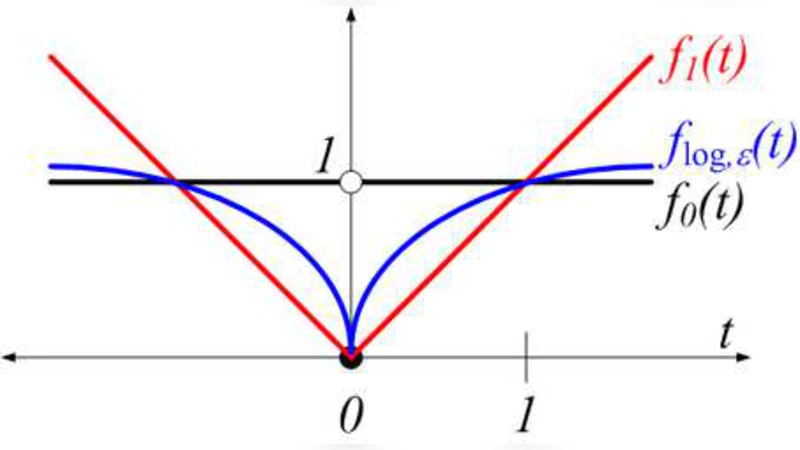

The paper addresses a fundamental limitation of conventional compressed sensing, namely that standard ℓ₁‑minimization does not fully exploit the true sparsity pattern of a signal and therefore often requires more measurements than theoretically necessary. To overcome this, the authors propose an iterative reweighted ℓ₁ algorithm that adaptively adjusts the penalty applied to each coefficient based on the magnitude of the current estimate.

The algorithm starts with uniform weights and solves the classic basis‑pursuit problem

min ‖x‖₁ subject to Φx = y.

After obtaining an initial solution x⁽⁰⁾, the weights for the next iteration are set to

wᵢ⁽ᵏ⁺¹⁾ = 1/(|xᵢ⁽ᵏ⁾| + ε),

where ε is a small positive constant that prevents division by zero and moderates the influence of very small coefficients. The weighted ℓ₁ problem

min Σᵢ wᵢ⁽ᵏ⁺¹⁾ |xᵢ| subject to Φx = y

is then solved, and the process repeats until convergence. Because each sub‑problem remains convex, the objective value monotonically decreases, guaranteeing convergence to a fixed point, although global optimality cannot be assured due to the overall non‑convex nature of the scheme. Empirically, convergence is typically achieved within five to ten iterations.

The authors provide a thorough theoretical discussion of convergence, showing that the weight update rule can be interpreted as a majorization‑minimization (MM) step that approximates the non‑convex ℓ₀ norm. They also compare their approach to earlier reweighting strategies, such as iteratively reweighted least squares (IRLS), emphasizing that the ℓ₁‑based formulation retains robustness to noise while offering a simpler, closed‑form weight update.

A comprehensive experimental evaluation covers four domains:

-

Synthetic sparse recovery – Random Gaussian measurement matrices Φ are used to acquire M linear measurements of N‑dimensional signals with k non‑zero entries. The reweighted method succeeds with roughly 30 % fewer measurements than standard ℓ₁, confirming the theoretical advantage.

-

Error‑correction coding – Binary vectors corrupted by random bit flips are encoded via a linear measurement matrix. The proposed algorithm can correct error rates up to 10 % while conventional ℓ₁ fails beyond 5 %.

-

Image reconstruction – Standard test images (e.g., Lena, Barbara) are compressed using a Laplacian pyramid and reconstructed from a subset of coefficients. The reweighted scheme yields a 2–3 dB improvement in PSNR over unweighted ℓ₁.

-

Statistical estimation – In a regression setting, the method is compared to the Lasso. It achieves lower mean‑squared error, especially when the true coefficient vector is highly sparse.

A particularly novel contribution is the extension to transform‑domain reweighting for signals that are only approximately sparse in an overcomplete dictionary D. Instead of weighting the coefficient vector α, the algorithm weights the transformed signal Dα itself. This strategy directly penalizes the energy distribution in the signal space, leading to markedly better recovery of near‑sparse signals. Experiments demonstrate a 20–25 % reduction in required measurements for comparable reconstruction quality when using this transform‑domain weighting.

The paper discusses practical implications: the algorithm requires only elementary arithmetic (division and addition) for weight updates, making it suitable for real‑time implementation on existing compressed‑sensing hardware without any modifications to the measurement apparatus. The authors suggest future work on optimal weight functions, extensions to nonlinear measurement models, and integration with deep‑learning‑based priors.

In summary, the iterative reweighted ℓ₁ minimization framework presented in this work significantly narrows the gap between the number of measurements needed in practice and the theoretical limits dictated by sparsity. By adaptively sharpening the ℓ₁ penalty around the true support, the method achieves superior performance across a broad spectrum of applications, from error‑correcting codes to image processing, and opens new avenues for more efficient data acquisition in resource‑constrained environments.

Comments & Academic Discussion

Loading comments...

Leave a Comment