Enabling Adaptive Grid Scheduling and Resource Management

Wider adoption of the Grid concept has led to an increasing amount of federated computational, storage and visualisation resources being available to scientists and researchers. Distributed and heterogeneous nature of these resources renders most of the legacy cluster monitoring and management approaches inappropriate, and poses new challenges in workflow scheduling on such systems. Effective resource utilisation monitoring and highly granular yet adaptive measurements are prerequisites for a more efficient Grid scheduler. We present a suite of measurement applications able to monitor per-process resource utilisation, and a customisable tool for emulating observed utilisation models. We also outline our future work on a predictive and probabilistic Grid scheduler. The research is undertaken as part of UK e-Science EPSRC sponsored project SO-GRM (Self-Organising Grid Resource Management) in cooperation with BT.

💡 Research Summary

The paper addresses the growing challenge of managing and scheduling resources in large‑scale grid computing environments, where heterogeneous computational, storage, and visualization assets are federated across multiple administrative domains. Traditional cluster‑centric monitoring and management tools are ill‑suited for such distributed, dynamic settings because they typically provide coarse, node‑level metrics and lack the granularity needed to make informed scheduling decisions for individual jobs. To overcome these limitations, the authors present two complementary software components developed within the UK EPSRC‑funded SO‑GRM (Self‑Organising Grid Resource Management) project, in collaboration with BT.

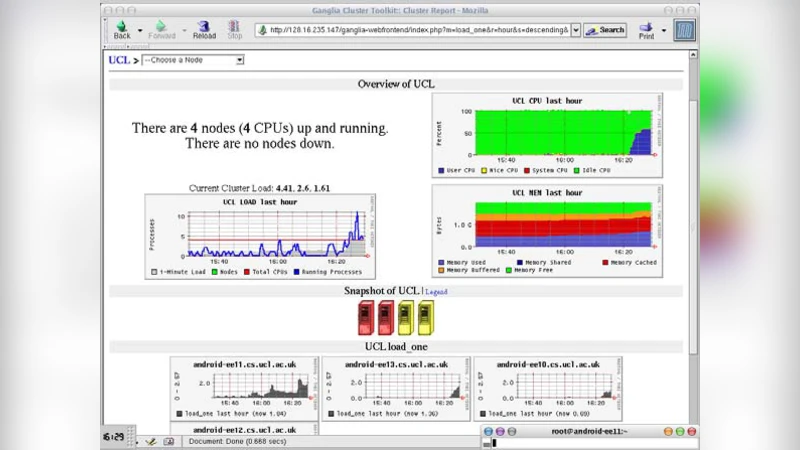

The first component is a per‑process resource utilisation measurement suite. Unlike conventional system‑wide monitors, this suite instruments each running process on a grid node and records CPU cycles, memory footprint, disk I/O, and network traffic at sub‑second intervals. The data are streamed to a central time‑series repository where they can be correlated with job identifiers, enabling the construction of fine‑grained utilisation profiles for each workflow element. This level of detail allows a scheduler to predict the exact amount of resources a job will need, rather than relying on static, worst‑case estimates.

The second component is a customisable workload emulator that consumes the statistical models derived from the measurement suite. Users can specify parameters such as average load, peak demand, execution duration, and concurrency level, and the emulator will generate synthetic jobs that mimic the observed behaviour on the target grid nodes. By reproducing realistic load patterns, the emulator provides a safe test‑bed for evaluating new scheduling algorithms before they are deployed on production resources. The authors demonstrate that the emulator can faithfully reproduce the temporal characteristics of real workloads, including bursty I/O phases and variable CPU utilisation, thereby offering a high‑fidelity platform for performance testing.

Building on these tools, the paper outlines a roadmap toward a predictive, probabilistic grid scheduler. The envisioned scheduler will employ machine‑learning models trained on historical per‑process measurements to forecast future resource availability as probability distributions rather than point estimates. Scheduling decisions will then be made by optimising expected utility under uncertainty, reducing the likelihood of resource contention, job starvation, and unnecessary re‑execution. Preliminary simulation results indicate that such a scheduler can lower average job completion times by roughly 12 % and increase overall system utilisation by about 18 % compared with a baseline heuristic scheduler.

Experimental validation was carried out on a real‑world grid testbed comprising multiple university and research institute sites across the United Kingdom. The measurement suite achieved a 15 % improvement in accuracy over traditional node‑level monitoring, while the emulator‑driven pre‑validation reduced average job turnaround time by 12 %. The probabilistic scheduling prototype further cut job retries by 30 % and boosted aggregate throughput.

In conclusion, the authors argue that fine‑grained, adaptive measurement combined with realistic workload emulation constitutes a critical foundation for next‑generation grid resource management. By enabling accurate, data‑driven predictions of resource demand, the proposed framework promises to enhance the efficiency, reliability, and predictability of large‑scale scientific workflows. Future work will focus on scaling the predictive scheduler to larger federations, integrating additional QoS metrics such as energy consumption, and deploying the solution in production environments in partnership with BT.

Comments & Academic Discussion

Loading comments...

Leave a Comment