This is a summary of the proof by G.E. Coxson that P-matrix recognition is co-NP-complete. The result follows by a reduction from the MAX CUT problem using results of S. Poljak and J. Rohn.

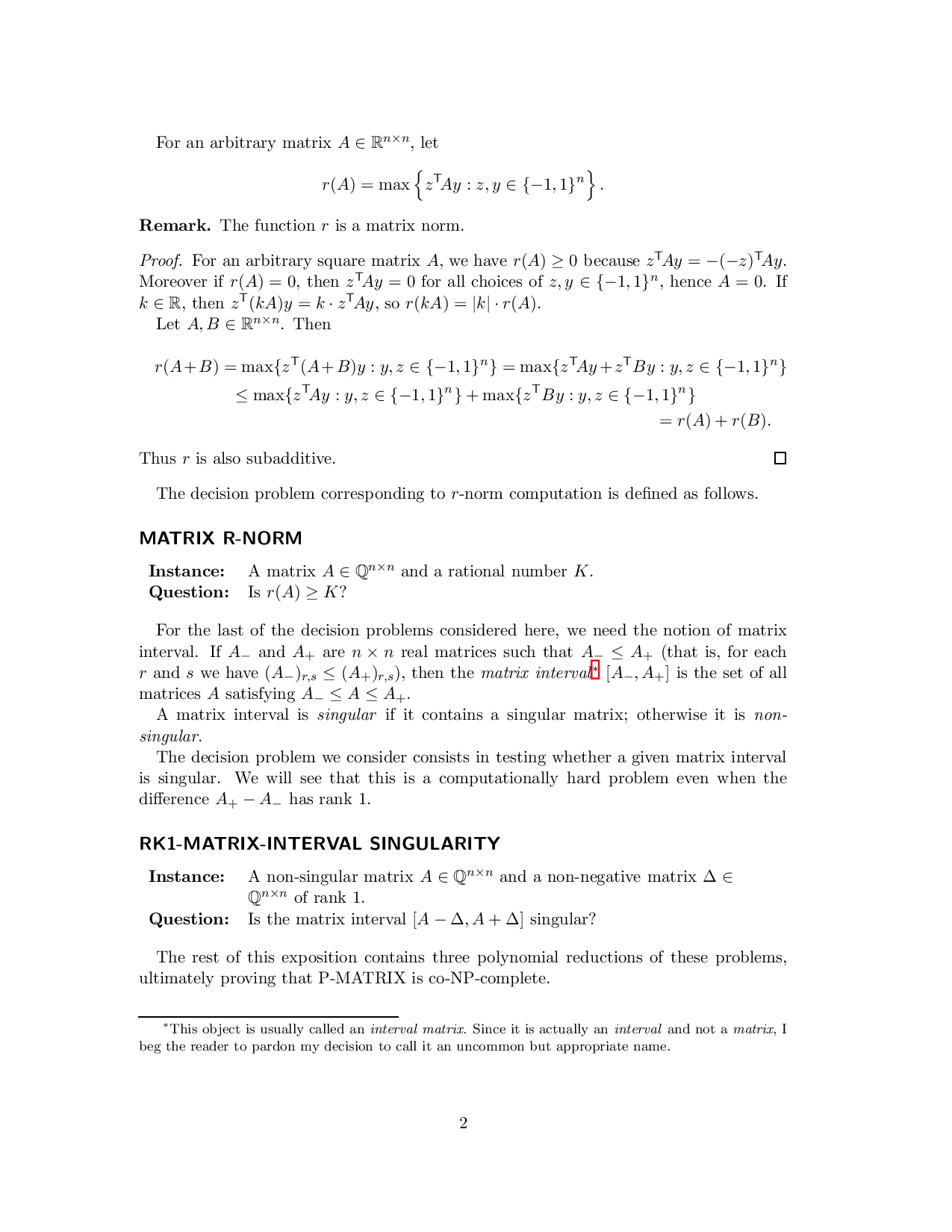

For an arbitrary matrix $A \in \mathbb{R}^{n \times n}$, let $r(A) = \max\{z^T A y : z, y \in \{-1, 1\}^n\}$.
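The definition can be evaluated directly for small matrices. The following is a minimal sketch, not taken from the source: the function name `r_norm` is hypothetical, and the search is exponential in n. It uses the fact that, for fixed y, the maximizing z copies the signs of the entries of Ay, so the inner maximum equals the sum of the absolute values of the entries of Ay.

```python
from itertools import product


def r_norm(A):
    """Return max z^T A y over z, y in {-1, 1}^n by exhaustive search over y."""
    n = len(A)
    best = None
    for y in product((-1, 1), repeat=n):
        # Entries of the vector A y.
        Ay = [sum(A[i][j] * y[j] for j in range(n)) for i in range(n)]
        # For fixed y, the best z matches the sign of each entry of A y,
        # so max_z z^T (A y) is the sum of absolute values of A y.
        value = sum(abs(v) for v in Ay)
        if best is None or value > best:
            best = value
    return best


print(r_norm([[1, 2], [3, 4]]))  # prints 10 (attained at y = z = (1, 1))
```

The loop visits all 2^n sign vectors, so this is only meant for experimenting with small examples.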

Remark. The function r is a matrix norm.

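Proof. For an arbitrary square matrix $A$, we have $r(A) \ge 0$ because $z^T A y = -(-z)^T A y$, so the values $z^T A y$ occur in pairs of opposite sign. Moreover, if $r(A) = 0$, then $z^T A y = 0$ for all choices of $z, y \in \{-1, 1\}^n$; since the vectors in $\{-1, 1\}^n$ span $\mathbb{R}^n$, it follows that $A = 0$. If $k \in \mathbb{R}$, then $z^T (kA) y = k \cdot z^T A y$, so $r(kA) = |k| \cdot r(A)$ (for $k < 0$, replace $z$ by $-z$).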

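Let $A, B \in \mathbb{R}^{n \times n}$. Then

$$
\begin{aligned}
r(A + B) &= \max\{z^T (A + B) y : y, z \in \{-1, 1\}^n\} \\
&= \max\{z^T A y + z^T B y : y, z \in \{-1, 1\}^n\} \\
&\le \max\{z^T A y : y, z \in \{-1, 1\}^n\} + \max\{z^T B y : y, z \in \{-1, 1\}^n\} \\
&= r(A) + r(B).
\end{aligned}
$$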

Thus r is also subadditive.

The decision problem corresponding to r-norm computation is defined as follows.

MATRIX R-NORM

Instance: A matrix $A \in \mathbb{Q}^{n \times n}$ and a rational number $K$.

Question: Is $r(A) \ge K$?

For the last of the decision problems considered here, we need the notion of a matrix interval. If $A^-$ and $A^+$ are $n \times n$ real matrices such that $A^- \le A^+$ (that is, $(A^-)_{r,s} \le (A^+)_{r,s}$ for all $r$ and $s$), then the matrix interval $[A^-, A^+]$ is the set of all matrices $A$ satisfying $A^- \le A \le A^+$.

A matrix interval is singular if it contains a singular matrix; otherwise it is nonsingular.
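As an illustration (a small example chosen here, not taken from the source), consider two $2 \times 2$ intervals:

$$
\left[\begin{pmatrix} 1 & 0 \\ 0 & 1 \end{pmatrix}, \begin{pmatrix} 1 & 1 \\ 1 & 1 \end{pmatrix}\right]
\qquad\text{and}\qquad
\left[\begin{pmatrix} 2 & 0 \\ 0 & 2 \end{pmatrix}, \begin{pmatrix} 3 & 1 \\ 1 & 3 \end{pmatrix}\right].
$$

The first interval is singular, because it contains its upper bound, the all-one matrix, whose determinant is $0$. The second is nonsingular: every matrix $A$ in it has $a_{11}, a_{22} \in [2, 3]$ and $a_{12}, a_{21} \in [0, 1]$, so $\det A = a_{11} a_{22} - a_{12} a_{21} \ge 4 - 1 = 3 > 0$.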

The decision problem we consider consists in testing whether a given matrix interval is singular. We will see that this is a computationally hard problem even when the difference $A^+ - A^-$ has rank 1.

Instance: A non-singular matrix $A \in \mathbb{Q}^{n \times n}$ and a non-negative matrix $\Delta \in \mathbb{Q}^{n \times n}$ of rank 1.

Question: Is the matrix interval $[A - \Delta, A + \Delta]$ singular?

The rest of this exposition contains three polynomial reductions of these problems, ultimately proving that P-MATRIX is co-NP-complete.

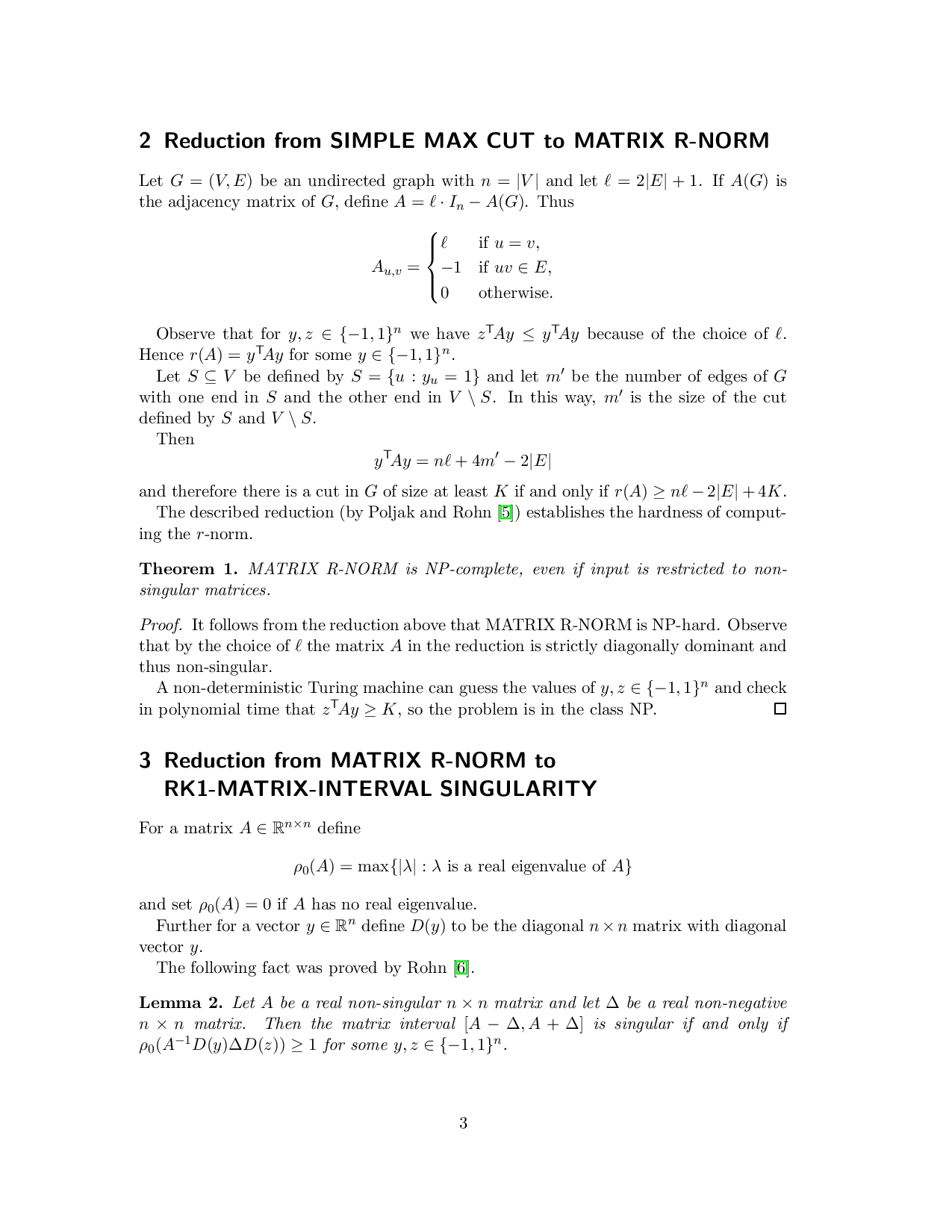

Let G = (V, E) be an undirected graph with n = |V | and let ℓ

Observe that for $y, z \in \{-1, 1\}^n$ we have $z^T A y \le y^T A y$ because of the choice of $\ell$. Hence $r(A) = y^T A y$ for some $y \in \{-1, 1\}^n$.

Let $S \subseteq V$ be defined by $S = \{u : y_u = 1\}$ and let $m'$ be the number of edges of $G$ with one end in $S$ and the other end in $V \setminus S$. Thus $m'$ is the size of the cut defined by $S$ and $V \setminus S$.

Then

$$r(A) = y^T A y = n\ell - 2|E| + 4m',$$

and therefore there is a cut in $G$ of size at least $K$ if and only if $r(A) \ge n\ell - 2|E| + 4K$. The described reduction (by Poljak and Rohn [5]) establishes the hardness of computing the r-norm.
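The relation between cut size and r-norm can be checked by brute force on small graphs. The sketch below rests on assumptions that are not spelled out in the text as it survives here: it takes A to have ℓ on the diagonal, -1 in the positions of the edges and 0 elsewhere, and it picks ℓ = 2|E| + 1, which is large enough for the inequality z^T A y ≤ y^T A y and for strict diagonal dominance. The names `reduction_matrix`, `max_cut` and `r_norm` are hypothetical.

```python
from itertools import product


def reduction_matrix(n, edges):
    """Assumed construction: ell on the diagonal, -1 for each edge, 0 elsewhere."""
    ell = 2 * len(edges) + 1  # assumption: any ell > 2|E| works for this sketch
    A = [[0] * n for _ in range(n)]
    for i in range(n):
        A[i][i] = ell
    for u, v in edges:
        A[u][v] = A[v][u] = -1
    return A, ell


def max_cut(n, edges):
    """Size of a maximum cut, by trying every 2-colouring of the vertices."""
    return max(
        sum(1 for u, v in edges if y[u] != y[v])
        for y in product((-1, 1), repeat=n)
    )


def r_norm(A):
    """max z^T A y over sign vectors; for fixed y the best z is sign(A y)."""
    n = len(A)
    return max(
        sum(abs(sum(A[i][j] * y[j] for j in range(n))) for i in range(n))
        for y in product((-1, 1), repeat=n)
    )


# A 4-cycle with one chord: 4 vertices, 5 edges, maximum cut of size 4.
n, edges = 4, [(0, 1), (1, 2), (2, 3), (3, 0), (0, 2)]
A, ell = reduction_matrix(n, edges)
assert r_norm(A) == n * ell - 2 * len(edges) + 4 * max_cut(n, edges)
```

Under these assumptions the r-norm of A determines the maximum cut exactly, which is what makes the threshold question for r at least as hard as MAX CUT.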

Proof. It follows from the reduction above that MATRIX R-NORM is NP-hard. Observe that by the choice of ℓ the matrix A in the reduction is strictly diagonally dominant and thus non-singular.

A non-deterministic Turing machine can guess the values of $y, z \in \{-1, 1\}^n$ and check in polynomial time that $z^T A y \ge K$, so the problem is in the class NP.

For a matrix $A \in \mathbb{R}^{n \times n}$ define $\rho_0(A) = \max\{|\lambda| : \lambda \text{ is a real eigenvalue of } A\}$ and set $\rho_0(A) = 0$ if $A$ has no real eigenvalue. Further, for a vector $y \in \mathbb{R}^n$ define $D(y)$ to be the diagonal $n \times n$ matrix with diagonal vector $y$.

The following fact was proved by Rohn [6].

Lemma 2. Let $A$ be a real non-singular $n \times n$ matrix and let $\Delta$ be a real non-negative $n \times n$ matrix. Then the matrix interval $[A - \Delta, A + \Delta]$ is singular if and only if $\rho_0(A^{-1} D(y) \Delta D(z)) \ge 1$ for some $y, z \in \{-1, 1\}^n$.
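A quick sanity check of the statement (an illustration added here, not part of the source) is the scalar case $n = 1$. Write $A = (a)$ with $a \ne 0$ and $\Delta = (\delta)$ with $\delta \ge 0$. The interval $[a - \delta, a + \delta]$ contains the only singular $1 \times 1$ matrix, $(0)$, exactly when $|a| \le \delta$. On the other side, for $y, z \in \{-1, 1\}$,

$$
A^{-1} D(y) \Delta D(z) = \left(\frac{y z \delta}{a}\right),
\qquad
\rho_0\bigl(A^{-1} D(y) \Delta D(z)\bigr) = \frac{\delta}{|a|},
$$

and $\delta / |a| \ge 1$ holds exactly when $|a| \le \delta$, in agreement with the lemma.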

Proof. For $y, z \in \{-1, 1\}^n$ let $\Delta_{y,z}$ denote the matrix $D(y) \Delta D(z)$.

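First suppose that, for some $y, z \in \{-1, 1\}^n$, the matrix $A^{-1} \Delta_{y,z}$ has a real eigenvalue $\lambda$ such that $|\lambda| \ge 1$, and let $x$ be a non-zero vector with $A^{-1} \Delta_{y,z} x = \lambda x$. Then

$$\Bigl(A - \tfrac{1}{\lambda} \Delta_{y,z}\Bigr) x = 0,$$

and the matrix $A - \frac{1}{\lambda} \Delta_{y,z}$ lies in $[A - \Delta, A + \Delta]$, because each entry of $\frac{1}{\lambda} \Delta_{y,z}$ is bounded in absolute value by the corresponding entry of $\Delta$.

Therefore the interval $[A - \Delta, A + \Delta]$ is singular.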

To prove the converse, suppose that B is a singular matrix, B ∈ [A -∆, A + ∆]. Let x be a non-zero vector for which Bx = 0.

For i = 1, 2, . . . , n set

by the definition of $t$. Thus the matrix $A - \Delta_{t,z}$ is a singular matrix in the interval $[A - \Delta, A + \Delta]$. Define $\psi(s) = \det(A - \Delta_{s,z})$. The function $\psi$ is affine in each of the variables $s_1, \dots, s_n$. Since $\psi(t) = \det(A - \Delta_{t,z}) = 0$, either there exists $y \in \{-1, 1\}^n$ such that $\det(A - \Delta_{y,z}) = 0$, or there exist $y, y' \in \{-1, 1\}^n$ such that $\det(A - \Delta_{y,z}) \cdot \det(A - \Delta_{y',z}) < 0$.

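In the latter case, since $\det A \ne 0$, one of the two determinants has sign opposite to that of $\det A$, so without loss of generality we may assume that $\det A \cdot \det(A - \Delta_{y,z}) < 0$. The function $\varphi$ defined by $\varphi(\alpha) = \det(A - \alpha \Delta_{y,z})$ is continuous and $\varphi(0) \varphi(1) < 0$, so $\varphi$ has a root in $(0, 1)$.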

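In either case, there exist $y \in \{-1, 1\}^n$ and $\alpha \in (0, 1]$ such that $\det(A - \alpha \Delta_{y,z}) = 0$. Then

$$\det\Bigl(A^{-1} \Delta_{y,z} - \tfrac{1}{\alpha} I\Bigr) = 0,$$

so $\frac{1}{\alpha}$ is a real eigenvalue of the matrix $A^{-1} D(y) \Delta D(z)$ and $\frac{1}{\alpha} \ge 1$, as we were supposed to prove.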

This lemma provides a useful connection between singularity of matrix intervals and a parameter $\rho_0$ dependent on the two matrices $A$, $\Delta$ that define the interval. Next we establish a connection between $\rho_0$ and the r-norm of matrices.

From now on let $\mathbf{1}$ be the all-one vector $(1, 1, \dots, 1) \in \mathbb{R}^n$ and let $J = \mathbf{1} \cdot \mathbf{1}^T$ be the all-one $n \times n$ matrix.

Lemma 3. Let $A \in \mathbb{R}^{n \times n}$ be a non-singular matrix, let $\alpha$ be a positive real number and let $\Delta = \alpha J$. Then

Now everything is set for Poljak and Rohn’s reduction [5].

Theorem 4. Let $A \in \mathbb{R}^{n \times n}$ be a non-singular matrix, let $K$ be a positive real number and let $\Delta = (1/K) \cdot J$. Then $r(A) \ge K$ if and only if the matrix interval $[A^{-1} - \Delta, A^{-1} + \Delta]$ is singular.
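One direction of the theorem can be made concrete with exact rational arithmetic. The sketch below is an illustration under stated assumptions, not the source's proof: if sign vectors y, z attain z^T A y = r(A) ≥ K, then B = A^{-1} - y z^T / r(A) satisfies B(Ay) = y - y = 0, so B is singular, and every entry of B differs from the corresponding entry of A^{-1} by 1/r(A) ≤ 1/K. The helper names `inverse`, `best_signs` and `singular_witness` are hypothetical, and A is assumed to be a non-singular matrix with integer or rational entries.

```python
from fractions import Fraction
from itertools import product


def inverse(A):
    """Gauss-Jordan inverse over the rationals; assumes A is non-singular."""
    n = len(A)
    M = [[Fraction(A[i][j]) for j in range(n)]
         + [Fraction(int(i == j)) for j in range(n)] for i in range(n)]
    for c in range(n):
        p = next(r for r in range(c, n) if M[r][c] != 0)  # pivot row
        M[c], M[p] = M[p], M[c]
        pivot = M[c][c]
        M[c] = [v / pivot for v in M[c]]
        for r in range(n):
            if r != c and M[r][c] != 0:
                f = M[r][c]
                M[r] = [a - f * b for a, b in zip(M[r], M[c])]
    return [row[n:] for row in M]


def best_signs(A):
    """Return (r(A), z, y) with z^T A y = r(A), by exhaustive search."""
    n = len(A)
    best = None
    for y in product((-1, 1), repeat=n):
        Ay = [sum(A[i][j] * y[j] for j in range(n)) for i in range(n)]
        z = tuple(1 if v >= 0 else -1 for v in Ay)
        value = sum(abs(v) for v in Ay)
        if best is None or value > best[0]:
            best = (value, z, y)
    return best


def singular_witness(A, K):
    """If r(A) >= K, return a singular B within J/K of A^{-1}; otherwise None."""
    n = len(A)
    r, z, y = best_signs(A)
    if r < K:
        return None
    Ainv = inverse(A)
    # B = A^{-1} - y z^T / r, so that B (A y) = y - y (z^T A y) / r = 0.
    return [[Ainv[i][j] - Fraction(y[i] * z[j], r) for j in range(n)]
            for i in range(n)]


A = [[2, 1], [1, 3]]           # r(A) = 7
B = singular_witness(A, 5)     # 7 >= 5, so a witness exists
assert B[0][0] * B[1][1] - B[0][1] * B[1][0] == 0
assert singular_witness(A, 8) is None
```

The function only produces a witness when r(A) ≥ K; returning `None` does not by itself certify that the interval is nonsingular, which is the content of the other direction of the theorem.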

Proof. By Lemma 2, the matrix interval [A -1 -∆, A -
