Learning for Dynamic Bidding in Cognitive Radio Resources

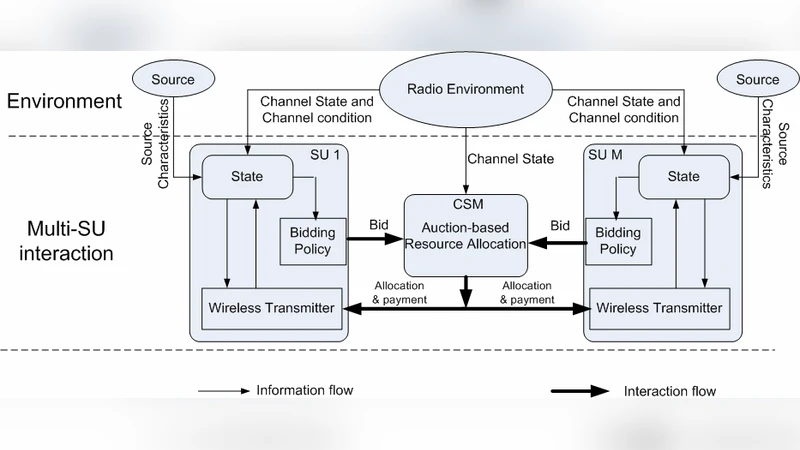

In this paper, we model the various wireless users in a cognitive radio network as a collection of selfish, autonomous agents that strategically interact in order to acquire the dynamically available spectrum opportunities. Our main focus is on developing solutions for wireless users to successfully compete with each other for the limited and time-varying spectrum opportunities, given the experienced dynamics in the wireless network. We categorize these dynamics into two types: one is the disturbance due to the environment (e.g. wireless channel conditions, source traffic characteristics, etc.) and the other is the impact caused by competing users. To analyze the interactions among users given the environment disturbance, we propose a general stochastic framework for modeling how the competition among users for spectrum opportunities evolves over time. At each stage of the dynamic resource allocation, a central spectrum moderator auctions the available resources and the users strategically bid for the required resources. The joint bid actions affect the resource allocation and hence, the rewards and future strategies of all users. Based on the observed resource allocation and corresponding rewards from previous allocations, we propose a best response learning algorithm that can be deployed by wireless users to improve their bidding policy at each stage. The simulation results show that by deploying the proposed best response learning algorithm, the wireless users can significantly improve their own performance in terms of both the packet loss rate and the incurred cost for the used resources.

💡 Research Summary

The paper addresses the problem of allocating time‑varying spectrum opportunities in a cognitive radio network where multiple wireless users act as selfish, autonomous agents. Each user wishes to acquire enough channels to satisfy its traffic while minimizing the monetary cost of the spectrum it purchases. To capture the interaction among users and the stochastic nature of the wireless environment, the authors model the system as a discrete‑time Markov game. The state of the game includes channel quality (e.g., fading), traffic load, and the recent bidding behavior of all users. At every time slot a central spectrum moderator conducts an auction for the currently available channels. Users submit bids based on their current state; the auction (implemented as a generalized Vickrey‑Clarke‑Groves mechanism) determines the allocation and the payment each winner must make. The instantaneous reward for user i is defined as a weighted combination of the successfully transmitted data (which reduces packet loss) and the payment incurred, i.e., r_i = α·throughput − β·cost.
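As a minimal sketch of one auction round and the reward r_i = α·throughput − β·cost, the snippet below assumes a single channel, for which a Vickrey‑Clarke‑Groves auction reduces to a second-price auction. The function names `second_price_auction` and `reward`, the user labels, and the numeric values are hypothetical and chosen for illustration; the paper's generalized multi-channel mechanism is not reproduced here.

```python
def second_price_auction(bids):
    """Single-channel Vickrey auction (illustrative simplification).

    bids: dict mapping a user id to its non-negative bid.
    Returns (winner, payment): the highest bidder wins and pays the
    second-highest bid (0.0 if there is only one bidder).
    """
    if not bids:
        raise ValueError("at least one bid is required")
    # Rank users by bid, highest first; ties keep insertion order.
    ranked = sorted(bids.items(), key=lambda item: item[1], reverse=True)
    winner = ranked[0][0]
    payment = ranked[1][1] if len(ranked) > 1 else 0.0
    return winner, payment


def reward(throughput, cost, alpha=1.0, beta=1.0):
    """Instantaneous reward r_i = alpha * throughput - beta * cost."""
    return alpha * throughput - beta * cost


# Hypothetical bids from three users for one available channel.
winner, payment = second_price_auction({"u1": 3.0, "u2": 5.0, "u3": 4.0})
# Reward of the winner, assuming it transmits 10 units of data.
winner_reward = reward(throughput=10.0, cost=payment, alpha=1.0, beta=0.5)
```

A losing user pays nothing in this simplified setting, so its reward depends only on whatever throughput it achieves without the channel.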

Because the joint bidding actions affect both the immediate reward and the future state, the authors propose a best‑response learning algorithm that each user can run locally. The algorithm maintains an estimate Q_i(s,b) of the expected discounted return when bidding b in state s. After each auction, the user observes the realized reward and the next state, and updates its Q‑value using a temporal‑difference rule.
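The exact update used in the paper is not given in this summary. The sketch below assumes the standard tabular Q-learning form, Q(s,b) ← Q(s,b) + η·[r + γ·max_b' Q(s',b') − Q(s,b)], which may differ from the authors' rule. The class name `BidLearner`, the step size η, the discount γ, and the discrete bid levels are all hypothetical.

```python
from collections import defaultdict


class BidLearner:
    """Tabular Q-learning over (state, bid) pairs (illustrative assumption)."""

    def __init__(self, bid_levels, step_size=0.1, discount=0.9):
        self.bid_levels = list(bid_levels)  # discrete bids the user may submit
        self.step_size = step_size          # learning rate (eta)
        self.discount = discount            # discount factor (gamma)
        self.q = defaultdict(float)         # Q-values keyed by (state, bid), default 0

    def best_bid(self, state):
        """Greedy bid: the level with the highest current Q-value in this state."""
        return max(self.bid_levels, key=lambda b: self.q[(state, b)])

    def update(self, state, bid, reward, next_state):
        """Apply one temporal-difference update and return the TD error."""
        best_next = max(self.q[(next_state, b)] for b in self.bid_levels)
        td_error = reward + self.discount * best_next - self.q[(state, bid)]
        self.q[(state, bid)] += self.step_size * td_error
        return td_error


# One update after a hypothetical auction round.
learner = BidLearner(bid_levels=[0, 1, 2], step_size=0.5, discount=0.9)
td = learner.update(state="s0", bid=1, reward=2.0, next_state="s1")
```

In practice a user would also need some exploration (for example, occasionally submitting a non-greedy bid) so that every bid level is tried; that is omitted here for brevity.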
