Bayesian segmentation of hyperspectral images

In this paper we consider the problem of joint segmentation of hyperspectral images in the Bayesian framework. The proposed approach is based on hidden Markov modeling (HMM) of the images with a common segmentation, or equivalently with common hidden classification label variables, which are modeled by a Potts Markov random field. We introduce an appropriate Markov chain Monte Carlo (MCMC) algorithm to implement the method and show some simulation results.

💡 Research Summary

The paper addresses the challenging problem of jointly segmenting hyperspectral images (HSI) within a rigorous Bayesian framework. Traditional HSI segmentation methods often treat each spectral band independently, thereby neglecting the inherent spectral continuity across bands. To overcome this limitation, the authors model the entire hyperspectral cube as a hidden Markov model (HMM) in which a single set of hidden class labels is shared by all bands. Each pixel $i$ is associated with a latent variable $z_i \in \{1,\dots,K\}$ indicating its class. Conditional on $z_i$, the observed spectral vector $\mathbf{x}_i = (x_i^1,\dots,x_i^B)$ is assumed to follow a multivariate Gaussian distribution with class‑specific mean $\boldsymbol\mu_{z_i}$ and covariance $\Sigma_{z_i}$. This formulation captures the spectral signature of each material while keeping the label space compact.
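The class-conditional Gaussian likelihood described above can be sketched in a few lines. This is only an illustration under a simplifying assumption: each class covariance is taken to be diagonal (one variance per band), whereas the summary describes a full covariance $\Sigma_k$. The function names `log_gauss_diag` and `class_log_likelihoods` are hypothetical and do not come from the paper.

```python
import math

def log_gauss_diag(x, mean, var):
    """Log-density of a Gaussian with diagonal covariance at spectrum x.

    Assumption: bands are independent within a class, so the covariance
    is described by one variance per band.
    """
    return sum(
        -0.5 * (math.log(2.0 * math.pi * v) + (xb - m) ** 2 / v)
        for xb, m, v in zip(x, mean, var)
    )

def class_log_likelihoods(x, means, variances):
    """Log-likelihood of one pixel spectrum under each of the K classes."""
    return [log_gauss_diag(x, m, v) for m, v in zip(means, variances)]

# Toy usage: B = 3 bands, K = 2 classes (all values are made up).
means = [[1.0, 2.0, 3.0], [4.0, 5.0, 6.0]]
variances = [[1.0, 1.0, 1.0], [1.0, 1.0, 1.0]]
pixel = [0.9, 2.1, 3.0]

scores = class_log_likelihoods(pixel, means, variances)
best_class = max(range(len(scores)), key=scores.__getitem__)
```

Picking the class with the largest log-likelihood, as in `best_class`, ignores spatial context entirely; the Potts prior in the model is what adds it.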

Spatial coherence is enforced by placing a Potts Markov Random Field (MRF) prior on the label field $\mathbf{Z} = \{z_i\}$. The Potts model introduces a smoothness parameter $\beta$ that penalizes label differences between neighboring pixels; larger $\beta$ yields smoother segmentations. The full Bayesian model therefore comprises the likelihood (Gaussian for each class), the Potts prior on labels, and priors on the class parameters $\Theta = \{\boldsymbol\mu_k,\Sigma_k\}$ and on $\beta$. The resulting posterior distribution $p(\mathbf{Z},\Theta,\beta \mid \mathbf{X})$, where $\mathbf{X}$ denotes the observed spectra of all pixels, is analytically intractable because of the high dimensionality and the coupling introduced by the MRF.
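To make the role of $\beta$ concrete, the sketch below evaluates the Potts prior up to its normalizing constant, using the common form $\log p(\mathbf{Z} \mid \beta) = \beta \sum_{i \sim j} \delta(z_i, z_j) + \text{const}$. The 4-neighbour system and the function names are assumptions made for illustration; the summary does not state which neighbourhood the authors use.

```python
def potts_agreements(labels):
    """Count neighbouring pixel pairs that carry the same label.

    Assumption: a 4-neighbour system on a 2-D grid; each pair is counted
    once by looking only down and to the right.
    """
    rows, cols = len(labels), len(labels[0])
    count = 0
    for r in range(rows):
        for c in range(cols):
            if r + 1 < rows and labels[r][c] == labels[r + 1][c]:
                count += 1
            if c + 1 < cols and labels[r][c] == labels[r][c + 1]:
                count += 1
    return count

def potts_log_prior_unnormalized(labels, beta):
    """Potts log-prior without the (intractable) normalizing constant."""
    return beta * potts_agreements(labels)

# Toy usage: a uniform field scores higher than a checkerboard.
smooth = [[0, 0], [0, 0]]
checker = [[0, 1], [1, 0]]
gap = potts_log_prior_unnormalized(smooth, 1.5) - potts_log_prior_unnormalized(checker, 1.5)
```

The gap between the two fields grows linearly with `beta`, which is why larger values favour smoother label maps.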

To approximate the posterior, the authors develop a tailored Markov Chain Monte Carlo (MCMC) algorithm that combines Gibbs sampling with Metropolis–Hastings updates. The sampling scheme proceeds iteratively: (1) given the current label field $\mathbf{Z}$, draw each class mean and covariance from their conjugate conditional posteriors (Normal–Inverse‑Wishart); (2) update each pixel label $z_i$ by sampling from its conditional distribution, which depends on the Gaussian likelihood of the pixel’s spectrum and the Potts energy contributed by neighboring labels; (3) propose a new value for $\beta$ and accept or reject it using a Metropolis step based on the change in the Potts prior probability. After a burn‑in period, the chain provides samples from the joint posterior, from which a final segmentation can be obtained via the maximum a posteriori (MAP) estimate or by majority voting across samples.
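Step (2) and the final majority vote can be sketched as follows. This is a simplified illustration, not the authors' implementation: class parameters and $\beta$ are held fixed (steps 1 and 3 are omitted), the covariance is assumed diagonal, and a 4-neighbour system is assumed. All function names and the toy data are hypothetical.

```python
import math
import random
from collections import Counter

NEIGHBOURS = ((-1, 0), (1, 0), (0, -1), (0, 1))  # assumed 4-neighbour system

def log_gauss_diag(x, mean, var):
    """Log-density of a diagonal-covariance Gaussian (a simplification)."""
    return sum(
        -0.5 * (math.log(2.0 * math.pi * v) + (xb - m) ** 2 / v)
        for xb, m, v in zip(x, mean, var)
    )

def gibbs_sweep(image, labels, means, variances, beta, rng):
    """Resample every label in place from its full conditional."""
    rows, cols = len(labels), len(labels[0])
    classes = range(len(means))
    for r in range(rows):
        for c in range(cols):
            neighbours = [
                labels[r + dr][c + dc]
                for dr, dc in NEIGHBOURS
                if 0 <= r + dr < rows and 0 <= c + dc < cols
            ]
            # Log-weight of class k: Gaussian log-likelihood of the spectrum
            # plus beta times the number of neighbours labelled k.
            log_w = [
                log_gauss_diag(image[r][c], means[k], variances[k])
                + beta * neighbours.count(k)
                for k in classes
            ]
            top = max(log_w)  # subtract the max before exponentiating
            weights = [math.exp(v - top) for v in log_w]
            labels[r][c] = rng.choices(classes, weights=weights)[0]
    return labels

def majority_vote(samples):
    """Per-pixel most frequent label across a list of sampled label grids."""
    rows, cols = len(samples[0]), len(samples[0][0])
    return [
        [Counter(s[r][c] for s in samples).most_common(1)[0][0] for c in range(cols)]
        for r in range(rows)
    ]

# Toy usage: a noise-free 4x4 image, B = 2 bands, two well-separated classes.
truth = [[0, 0, 1, 1] for _ in range(4)]
means = [[0.0, 0.0], [10.0, 10.0]]
variances = [[1.0, 1.0], [1.0, 1.0]]
image = [[list(means[k]) for k in row] for row in truth]

rng = random.Random(0)
labels = [[rng.randrange(2) for _ in range(4)] for _ in range(4)]
samples = []
for sweep in range(20):
    gibbs_sweep(image, labels, means, variances, beta=1.0, rng=rng)
    if sweep >= 10:  # discard the first 10 sweeps as burn-in
        samples.append([row[:] for row in labels])
segmentation = majority_vote(samples)
```

A full sampler would alternate this sweep with draws of the class parameters and an update of $\beta$; those steps are left out here to keep the sketch short.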

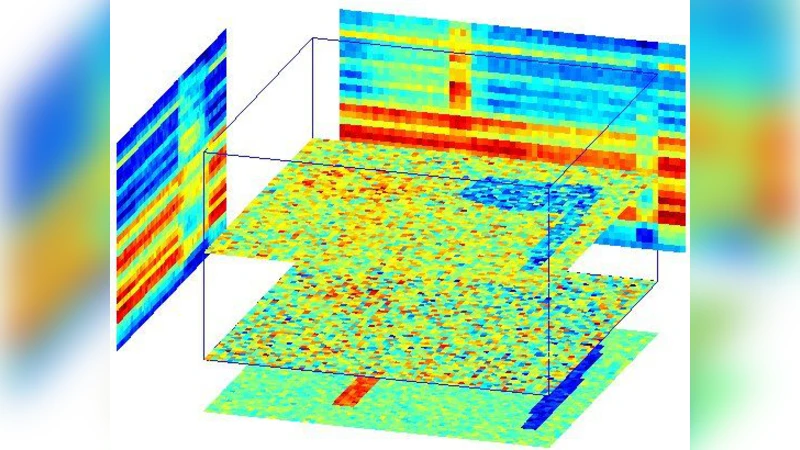

The methodology is evaluated on both synthetic hyperspectral data (where ground truth is known) and real airborne/spaceborne datasets (e.g., AVIRIS, ROSIS). Competing methods include band‑wise K‑means, Gaussian mixture models (GMM) without spatial regularization, and HMMs that ignore the Potts prior. Quantitative metrics such as Overall Accuracy and the Kappa coefficient demonstrate that the proposed Bayesian HMM‑Potts approach consistently outperforms the baselines, especially in noisy conditions and at object boundaries where spatial smoothness is critical. Qualitative visualizations confirm that the segmentation maps are both spectrally accurate and spatially coherent. An additional advantage highlighted by the experiments is the automatic estimation of the smoothness parameter $\beta$, which removes the need for manual tuning.
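For reference, the two metrics named above can be computed as in this minimal sketch. It assumes the predicted labels have already been matched to the ground-truth classes, since an unsupervised segmentation is only defined up to a permutation of labels. The function names and the toy labels are illustrative, not taken from the paper.

```python
from collections import Counter

def overall_accuracy(truth, pred):
    """Fraction of pixels whose predicted label equals the true label."""
    return sum(t == p for t, p in zip(truth, pred)) / len(truth)

def cohen_kappa(truth, pred):
    """Cohen's kappa: agreement corrected for chance agreement."""
    n = len(truth)
    p_observed = overall_accuracy(truth, pred)
    truth_counts, pred_counts = Counter(truth), Counter(pred)
    # Chance agreement from the product of the two marginal label frequencies.
    p_chance = sum(truth_counts[k] * pred_counts[k] for k in truth_counts) / (n * n)
    if p_chance == 1.0:  # both label sets contain a single identical class
        return 1.0
    return (p_observed - p_chance) / (1.0 - p_chance)

# Toy usage on flattened label maps (made-up values).
truth_labels = [0, 0, 1, 1]
pred_labels = [0, 0, 1, 0]
oa = overall_accuracy(truth_labels, pred_labels)
kappa = cohen_kappa(truth_labels, pred_labels)
```

Kappa is lower than overall accuracy whenever some agreement is expected by chance, which makes it the more conservative of the two when class sizes are unbalanced.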

Key contributions of the paper are: (i) a unified probabilistic model that jointly exploits spectral signatures and spatial context through a shared hidden label field; (ii) a practical MCMC inference scheme that efficiently explores the complex posterior and learns all hyper‑parameters, including the Potts smoothness weight; (iii) extensive empirical validation showing superior segmentation quality over traditional methods. The authors suggest several avenues for future work, such as extending the observation model to non‑Gaussian distributions, incorporating multi‑scale MRFs, and developing variational inference alternatives for faster, possibly real‑time, processing. The presented framework has potential applications beyond remote sensing, including medical imaging, material science, and any domain where multi‑channel high‑dimensional data require coherent segmentation.
