We consider the generic regularized optimization problem $\hat{\mathsf{\beta}}(\lambda)=\arg \min_{\beta}L({\sf{y}},X{\sf{\beta}})+\lambda J({\sf{\beta}})$. Efron, Hastie, Johnstone and Tibshirani [Ann. Statist. 32 (2004) 407--499] have shown that for the LASSO--that is, if $L$ is squared error loss and $J(\beta)=\|\beta\|_1$ is the $\ell_1$ norm of $\beta$--the optimal coefficient path is piecewise linear, that is, $\partial \hat{\beta}(\lambda)/\partial \lambda$ is piecewise constant. We derive a general characterization of the properties of (loss $L$, penalty $J$) pairs which give piecewise linear coefficient paths. Such pairs allow for efficient generation of the full regularized coefficient paths. We investigate the nature of efficient path following algorithms which arise. We use our results to suggest robust versions of the LASSO for regression and classification, and to develop new, efficient algorithms for existing problems in the literature, including Mammen and van de Geer's locally adaptive regression splines.

Deep Dive into Piecewise linear regularized solution paths.


Regularization is an essential component in modern data analysis, in particular when the number of predictors is large, possibly larger than the number of observations, and nonregularized fitting is likely to give badly over-fitted and useless models.

In this paper we consider the generic regularized optimization problem. The inputs we have are:

• A training data sample $X = (x_1, \ldots, x_n)^\top$, $y = (y_1, \ldots, y_n)^\top$, where $x_i \in \mathbb{R}^p$ and $y_i \in \mathbb{R}$ for regression, $y_i \in \{\pm 1\}$ for two-class classification.

• A convex nonnegative loss functional $L : \mathbb{R}^n \times \mathbb{R}^n \to \mathbb{R}$.

• A convex nonnegative penalty functional $J : \mathbb{R}^p \to \mathbb{R}$, with $J(0) = 0$. We will almost exclusively use $J(\beta) = \|\beta\|_q^q$ in this paper, that is, penalization of the $\ell_q$ norm of the coefficient vector.

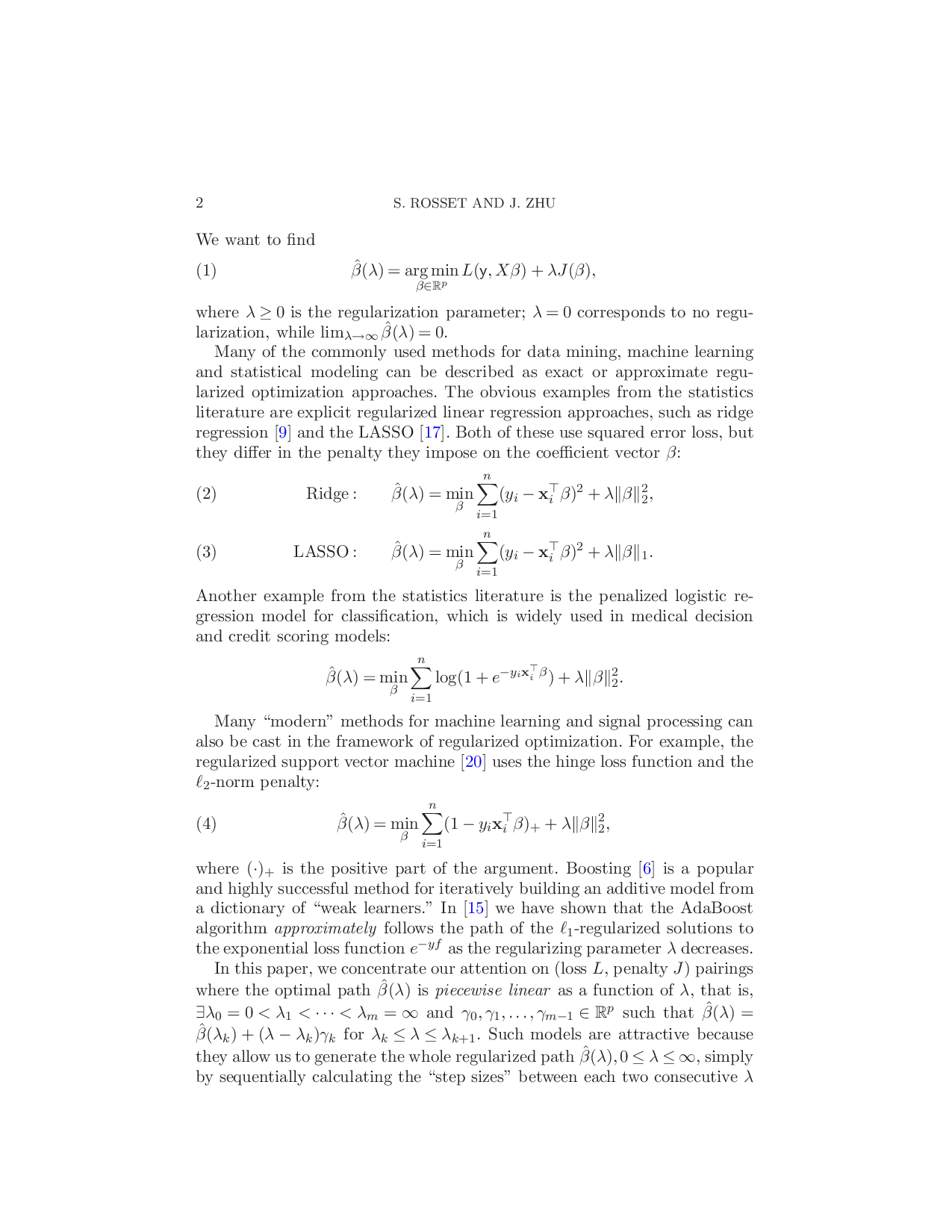

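We want to find

$$\hat{\beta}(\lambda) = \arg\min_{\beta \in \mathbb{R}^p} L(y, X\beta) + \lambda J(\beta), \qquad (1)$$

where $\lambda \ge 0$ is the regularization parameter; $\lambda = 0$ corresponds to no regularization, while $\lim_{\lambda \to \infty} \hat{\beta}(\lambda) = 0$.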

Many of the commonly used methods for data mining, machine learning and statistical modeling can be described as exact or approximate regularized optimization approaches. The obvious examples from the statistics literature are explicit regularized linear regression approaches, such as ridge regression [9] and the LASSO [17]. Both of these use squared error loss, but they differ in the penalty they impose on the coefficient vector β:

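$$\text{Ridge:}\quad \hat{\beta}(\lambda) = \arg\min_{\beta} \|y - X\beta\|_2^2 + \lambda \|\beta\|_2^2, \qquad (2)$$

$$\text{LASSO:}\quad \hat{\beta}(\lambda) = \arg\min_{\beta} \|y - X\beta\|_2^2 + \lambda \|\beta\|_1. \qquad (3)$$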
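As a minimal sketch of how the two penalties differ, the snippet below evaluates both objectives on toy data, assuming squared error loss with a squared $\ell_2$ penalty for ridge and an $\ell_1$ penalty for the LASSO. The function names and the data are illustrative assumptions, not taken from the paper.

```python
def squared_error(X, y, beta):
    """Sum of squared residuals ||y - X beta||_2^2 for list-of-rows X."""
    total = 0.0
    for row, target in zip(X, y):
        fitted = sum(x_j * b_j for x_j, b_j in zip(row, beta))
        total += (target - fitted) ** 2
    return total


def ridge_objective(X, y, beta, lam):
    """Squared error plus lam times the squared l2 norm of beta."""
    return squared_error(X, y, beta) + lam * sum(b * b for b in beta)


def lasso_objective(X, y, beta, lam):
    """Squared error plus lam times the l1 norm of beta."""
    return squared_error(X, y, beta) + lam * sum(abs(b) for b in beta)


# Toy data (hypothetical): two observations, two predictors.
X = [[1.0, 0.0], [0.0, 1.0]]
y = [1.0, 2.0]
beta = [0.5, -2.0]
lam = 0.5

ridge_value = ridge_objective(X, y, beta, lam)
lasso_value = lasso_objective(X, y, beta, lam)
print(ridge_value, lasso_value)
```

Both objectives share the loss term; only the penalty term changes, so the large coefficient is penalized more heavily under the squared $\ell_2$ norm than under the $\ell_1$ norm.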

Another example from the statistics literature is the penalized logistic regression model for classification, which is widely used in medical decision and credit scoring models.

Many “modern” methods for machine learning and signal processing can also be cast in the framework of regularized optimization. For example, the regularized support vector machine [20] uses the hinge loss function and the $\ell_2$-norm penalty:

$$\hat{\beta}(\lambda) = \arg\min_{\beta} \sum_{i=1}^{n} (1 - y_i x_i^\top \beta)_+ + \lambda \|\beta\|_2^2,$$

where $(\cdot)_+$ is the positive part of the argument. Boosting [6] is a popular and highly successful method for iteratively building an additive model from a dictionary of “weak learners.” In [15] we have shown that the AdaBoost algorithm approximately follows the path of the $\ell_1$-regularized solutions to the exponential loss function $e^{-yf}$ as the regularizing parameter $\lambda$ decreases.
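The classification losses mentioned in this passage can all be written as functions of the margin $yf$. The sketch below compares them at a few margins; the function names are illustrative assumptions, and the logistic loss is taken in its usual form $\log(1 + e^{-yf})$.

```python
import math


def hinge_loss(margin):
    """Positive part of (1 - margin): zero once the margin reaches 1."""
    return max(0.0, 1.0 - margin)


def exponential_loss(margin):
    """Exponential loss e^{-yf}, the loss associated with AdaBoost in the text."""
    return math.exp(-margin)


def logistic_loss(margin):
    """Logistic (binomial deviance) loss log(1 + e^{-yf})."""
    return math.log1p(math.exp(-margin))


for margin in (-1.0, 0.0, 1.0, 2.0):
    print(margin, hinge_loss(margin), exponential_loss(margin), logistic_loss(margin))
```

The hinge loss is piecewise linear in the margin, with a single kink at margin 1, whereas the exponential and logistic losses are smooth and strictly positive everywhere.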

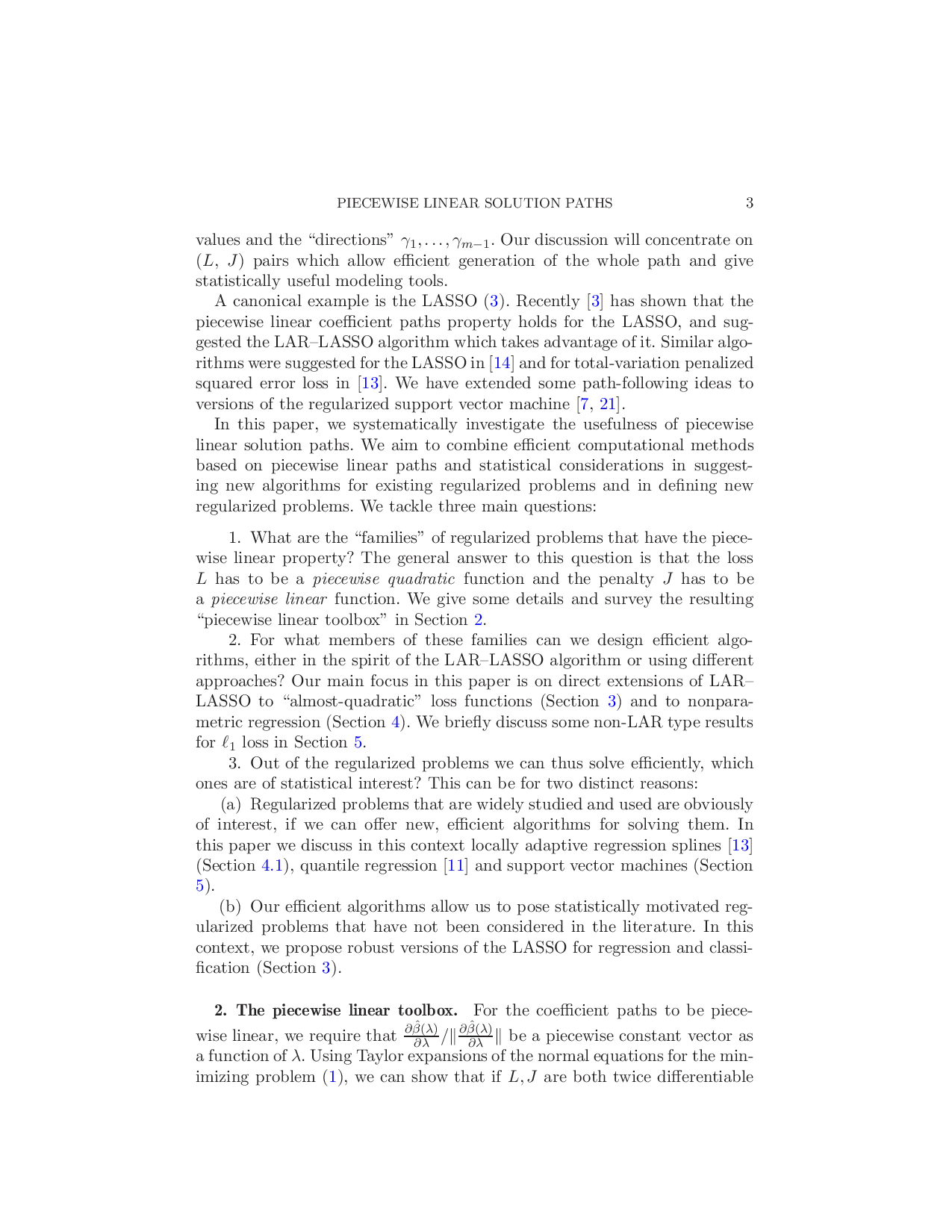

In this paper, we concentrate our attention on (loss $L$, penalty $J$) pairings where the optimal path $\hat{\beta}(\lambda)$ is piecewise linear as a function of $\lambda$, that is, $\exists\, \lambda_0 = 0 < \lambda_1 < \cdots < \lambda_m = \infty$ and $\gamma_0, \gamma_1, \ldots, \gamma_{m-1} \in \mathbb{R}^p$ such that $\hat{\beta}(\lambda) = \hat{\beta}(\lambda_k) + (\lambda - \lambda_k)\gamma_k$ for $\lambda_k \le \lambda \le \lambda_{k+1}$. Such models are attractive because they allow us to generate the whole regularized path $\hat{\beta}(\lambda)$, $0 \le \lambda \le \infty$, simply by sequentially calculating the “step sizes” between each two consecutive $\lambda$ values and the “directions” $\gamma_1, \ldots, \gamma_{m-1}$. Our discussion will concentrate on (L, J) pairs which allow efficient generation of the whole path and give statistically useful modeling tools.
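The definition above means that the whole path is determined by its finite breakpoints, the solution at $\lambda = 0$, and one direction per segment. The following is a minimal sketch of that bookkeeping, not the paper's algorithm: the names `build_knot_values` and `path_value` and the toy numbers are assumptions, and the final breakpoint $\lambda_m = \infty$ is left implicit.

```python
from bisect import bisect_right


def build_knot_values(beta_start, knots, directions):
    """Accumulate beta(lam_k) by stepping along each direction in turn.

    knots: finite breakpoints lam_0 = 0 < lam_1 < ... (lam_m = infinity is implicit).
    directions: directions[k] is gamma_k, valid on [knots[k], knots[k + 1]].
    """
    values = [list(beta_start)]
    for k in range(1, len(knots)):
        step = knots[k] - knots[k - 1]
        previous = values[-1]
        values.append([b + step * g for b, g in zip(previous, directions[k - 1])])
    return values


def path_value(lam, knots, knot_values, directions):
    """Evaluate beta(lam) = beta(lam_k) + (lam - lam_k) * gamma_k."""
    if lam < knots[0]:
        raise ValueError("lam must be at least the first knot")
    # Index of the last knot that is <= lam.
    k = bisect_right(knots, lam) - 1
    return [b + (lam - knots[k]) * g for b, g in zip(knot_values[k], directions[k])]


# Toy one-coefficient path: starts at 1.0, shrinks linearly, stays at 0 after lam = 2.
knots = [0.0, 2.0]
directions = [[-0.5], [0.0]]
knot_values = build_knot_values([1.0], knots, directions)
print(path_value(1.0, knots, knot_values, directions))
```

Storing only the knots and directions keeps the cost of evaluating the path at any $\lambda$ to a binary search plus one vector update.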

A canonical example is the LASSO (3). Recently [3] has shown that the piecewise linear coefficient paths property holds for the LASSO, and suggested the LAR-LASSO algorithm which takes advantage of it. Similar algorithms were suggested for the LASSO in [14] and for total-variation penalized squared error loss in [13]. We have extended some path-following ideas to versions of the regularized support vector machine [7,21].
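The piecewise linear property is easy to see in the simplest case. The sketch below assumes a single predictor and the LASSO objective $\|y - x\beta\|_2^2 + \lambda|\beta|$, for which the minimizer has the closed form $\mathrm{sign}(x^\top y)\,(|x^\top y| - \lambda/2)_+ / x^\top x$. This is a textbook special case used for illustration, not the LAR-LASSO algorithm, and the function names and data are assumptions.

```python
def lasso_1d(x, y, lam):
    """Minimizer of sum((y_i - x_i * b)^2) + lam * |b| over a scalar b."""
    xy = sum(a * b for a, b in zip(x, y))
    xx = sum(a * a for a in x)
    shrunk = max(abs(xy) - lam / 2.0, 0.0)  # soft-thresholding at lam / 2
    sign = 1.0 if xy >= 0 else -1.0
    return sign * shrunk / xx


def lasso_1d_objective(x, y, b, lam):
    """Objective value, used only to sanity-check the closed form."""
    return sum((t - a * b) ** 2 for a, t in zip(x, y)) + lam * abs(b)


# Toy data (hypothetical): x'y = 18 and x'x = 9, so the single knot is at lam = 36.
x = [1.0, 2.0, 2.0]
y = [2.0, 3.0, 5.0]

for lam in (0.0, 9.0, 18.0, 36.0, 40.0):
    print(lam, lasso_1d(x, y, lam))
```

On this data the coefficient decreases linearly in $\lambda$ with constant slope until it reaches zero at $\lambda = 2|x^\top y|$, and stays at zero afterwards, which gives a path with two linear pieces.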

In this paper, we systematically investigate the usefulness of piecewise linear solution paths. We aim to combine efficient computational methods based on piecewise linear paths and statistical considerations in suggesting new algorithms for existing regularized problems and in defining new regularized problems. We tackle three main questions:

1. What are the “families” of regularized problems that have the piecewise linear property? The general answer to this question is that the loss $L$ has to be a piecewise quadratic function and the penalty $J$ has to be a piecewise linear function. We give some details and survey the resulting “piecewise linear toolbox” in Section 2.

2. For what members of these families can we design efficient algorithms, either in the spirit of the LAR-LASSO algorithm or using different approaches? Our main focus in this paper is on direct extensions of LAR-LASSO to “almost-quadratic” loss functions (Section 3) and to nonparametric regression (Section 4). We briefly discuss some non-LAR type results for $\ell_1$ loss in Section 5.

3. Out of the regularized problems we can thus solve efficiently, which ones are of statistical interest? This can be for two distinct reasons:

(a) Regularized problems that are widely studied and used are obviously of interest, if we can offer new, efficient algorithms for solving them. In this paper we discuss in this context locally adaptive regression splines [13] (Section 4.1), quantile regression [11] and support vector machines (Section 5).

(b) Our efficient algorithms allow us to pose statistically motivated regularized problems that have not been considered in the literature. In this context, we propose robust versions of the LA

…(Full text truncated)…
