Updating Probabilities with Data and Moments

We use the method of Maximum (relative) Entropy to process information in the form of observed data and moment constraints. The generic “canonical” form of the posterior distribution for the problem of simultaneous updating with data and moments is obtained. We discuss the general problem of non-commuting constraints, when they should be processed sequentially and when simultaneously. As an illustration, the multinomial example of die tosses is solved in detail for two superficially similar but actually very different problems.

💡 Research Summary

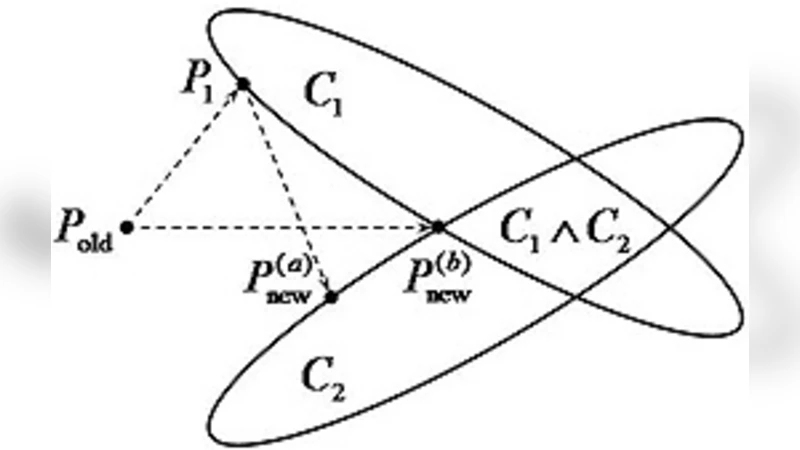

The paper develops a unified framework for updating probability distributions when the new information consists of both observed data and moment constraints. Traditional Bayesian updating treats only the likelihood derived from data, while many practical problems also impose expectations on functions of the parameters (e.g., means, variances, higher‑order moments). To accommodate both types of information, the authors invoke the principle of maximum (relative) entropy, which seeks the distribution that is closest to the prior in the Kullback‑Leibler sense while satisfying all imposed constraints.
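As a rough numerical illustration of the idea, the sketch below updates a discrete prior using both a likelihood and a single moment constraint. It assumes the updated distribution takes the exponentially tilted form prior × likelihood × exp(λ f(θ)), which is the standard solution of a KL minimization under an expectation constraint. The function name `me_update`, the coin-bias grid, the data (7 heads in 10 tosses), and the target expectation 0.5 are all hypothetical choices made for illustration; none of them come from the paper.

```python
import math

def me_update(prior, likelihood, f, target, lo=-50.0, hi=50.0, tol=1e-12):
    """Toy discrete update: weights proportional to prior * likelihood * exp(lam * f).

    lam is found by bisection so that the expectation of f equals `target`.
    Assumes `target` is reachable for some lam in [lo, hi].
    """
    base = [p * l for p, l in zip(prior, likelihood)]

    def tilted(lam):
        # Subtract the largest exponent before exponentiating, for stability.
        m = max(lam * fi for fi in f)
        w = [b * math.exp(lam * fi - m) for b, fi in zip(base, f)]
        z = sum(w)
        return [wi / z for wi in w]

    def expectation(lam):
        return sum(p * fi for p, fi in zip(tilted(lam), f))

    # The expectation is nondecreasing in lam (its derivative is a variance),
    # so a simple bisection is enough.
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if expectation(mid) < target:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    lam = 0.5 * (lo + hi)
    return lam, tilted(lam)

# Hypothetical setup: theta is a coin bias on a midpoint grid with a uniform prior.
n_grid = 200
theta = [(i + 0.5) / n_grid for i in range(n_grid)]
prior = [1.0 / n_grid] * n_grid

# Hypothetical data: 7 heads in 10 tosses (binomial likelihood up to a constant).
likelihood = [t**7 * (1.0 - t)**3 for t in theta]

# Hypothetical moment constraint: the expected value of theta must equal 0.5.
lam, posterior = me_update(prior, likelihood, theta, target=0.5)
posterior_mean = sum(p * t for p, t in zip(posterior, theta))
```

Setting every likelihood value to 1 leaves only the moment constraint, and choosing a target equal to the unconstrained posterior mean gives λ ≈ 0, so the update reduces to ordinary Bayesian conditioning on the data.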

Formally, let $P_{\text{old}}(\theta)$ be the prior, $L(\mathbf{x}\mid\theta)$ the likelihood of the observed data $\mathbf{x}$, and $f_i(\theta)$ a set of functions whose expectations are prescribed as $F_i$. The updating problem becomes a constrained minimization of the KL‑divergence.
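One common way to write this constrained problem is sketched below. The notation goes beyond the text above and should be read as an assumption: $\mathbf{x}'$ denotes a generic data variable (with $\mathbf{x}$ the value actually observed), the joint prior is taken to be $P_{\text{old}}(\mathbf{x}',\theta) = P_{\text{old}}(\theta)\,L(\mathbf{x}'\mid\theta)$, and $\lambda_i$ and $Z$ are Lagrange multipliers and a normalization constant introduced for illustration.

$$
\min_{P}\; \int d\mathbf{x}'\,d\theta\; P(\mathbf{x}',\theta)\,\log\frac{P(\mathbf{x}',\theta)}{P_{\text{old}}(\mathbf{x}',\theta)}
\quad\text{subject to}\quad
\int d\theta\, P(\mathbf{x}',\theta) = \delta(\mathbf{x}'-\mathbf{x}),
\qquad
\int d\mathbf{x}'\,d\theta\; P(\mathbf{x}',\theta)\, f_i(\theta) = F_i .
$$

Under these assumptions the marginal over $\theta$ takes an exponentially tilted, "canonical" form:

$$
P_{\text{new}}(\theta) = \frac{1}{Z}\, P_{\text{old}}(\theta)\, L(\mathbf{x}\mid\theta)\, \exp\!\Big(\sum_i \lambda_i f_i(\theta)\Big),
\qquad
Z = \int d\theta\; P_{\text{old}}(\theta)\, L(\mathbf{x}\mid\theta)\, \exp\!\Big(\sum_i \lambda_i f_i(\theta)\Big),
$$

with the multipliers fixed by $\partial \log Z / \partial \lambda_i = F_i$. When no moment constraints are imposed, all $\lambda_i$ vanish and the expression reduces to Bayes' rule.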
